Abstract

The populations of many endangered animal species are steadily declining, and without consistent monitoring or tracking, their chances of survival continue to decrease. Advances in artificial intelligence now make it feasible to monitor these animals at far greater scale and precision. Such tracking yields more knowledge about the movements and living patterns of these animals and lays the foundation for more tailored and efficient conservation. In our study, we explored deep learning for image classification, applying ResNet-18, ResNet-50, and VGG-19 to identify endangered forest species from visual data. These models process large volumes of images to identify which species is present in each image and, in principle, to infer likely habitats or locations from recognizable patterns. This type of analysis can help conservationists understand how these animals move through their environments so that they can respond to threats faster. The ResNet models trained faster, but VGG-19 achieved the best accuracy: 80% training accuracy (76% on the held-out test set), compared to 71% (65%) for ResNet-50 and 46% (38%) for ResNet-18. These results illustrate the growing applicability of AI to wildlife conservation.

Introduction

Populations of endangered species are declining rapidly in the modern era. The problem grows more pressing by the day, and conservation efforts cannot be implemented unless it is brought to attention. The Recovering America's Wildlife Act (RAWA), a bill championed by the National Wildlife Federation, was introduced in an effort to save the habitats and ecosystems of thousands of at-risk animals. Under RAWA, states and territories would receive up to $1.4 billion annually to support endangered species through a variety of means, including habitat restoration, eradication of invasive species, reestablishing migration paths, and addressing emerging diseases. Even with all of these factors taken into account, habitat loss is by far the most severe cause of endangerment for forest animal species.

Although endangered forest species clearly need stronger conservation efforts, very little past research has analyzed how to protect endangered forest mammals from a technical perspective. Given that forests cover roughly 31% of the planet's land area, safeguarding the species that live there must be a top priority.

The topic of endangered forest animals has been the subject of a few notable works in the past; however, there has been little research on tracking, identifying, or conserving these animals using recent technological advancements. Previously published articles can loosely be divided into two categories: experiments driven by machine learning (ML) and AI, and experiments driven by qualitative, non-ML data. Our experiments are ML-driven and compare multiple models, each suited to different situations, to find the most accurate one.

Several AI/ML techniques are used in this article, chiefly convolutional neural networks (CNNs) such as ResNet and VGG applied to image classification. To build the most precise and accurate model possible, our dataset includes approximately 3,000 images covering five distinct endangered forest species. In summary, the resulting model may be used to classify endangered species and, with further development, to monitor their movements within their forest habitats and to inform conservation efforts.

Literature Review

Overview of Previous Works

Research on endangered species broadly follows one of two approaches: extensive field research conducted without machine learning, or studies that incorporate artificial intelligence and machine learning. Both approaches have merit. However, ML/AI typically enables more automated research that reduces the need for a person to go into the field and gather data manually. The large body of work published using each approach attests to the usefulness of both. For instance, a comprehensive literature review synthesizes current computer vision methods for wildlife monitoring, highlighting their increasing prevalence in conservation contexts1. Similarly, sustainability-centered studies emphasize that the integration of AI with biodiversity management systems creates new opportunities for real-time conservation strategies2,3.

Machine Learning and Technical Papers

First, we discuss existing research on animal tracking and conservation that has incorporated machine learning (ML) and artificial intelligence (AI). Previous studies have focused on applying ML techniques to specific biomes, particularly using image classification methods to detect endangered species. In addition, several papers have explored how different algorithms and techniques have been used to identify endangered animals, reporting that these approaches are more efficient and accurate than manual analysis and data extraction. Specifically, one study shows how machine learning classifiers can automatically detect animal behaviors and body postures through the use of depth-based tracking4. Similarly, researchers at Linköping University describe how camera trap data can be used with ML-based object detection to capture animal images in the wild5.

Other technical contributions include a novel ML-integrated tracking algorithm for underwater vehicle control that builds on tracking-by-detection6. Another study developed an outdoor animal tracking method by combining time-lapse cameras with neural networks7. In addition, an ML model capable of detecting predator animals based on distinct features such as ears and eyes achieved an accuracy of 82%8.

More recent studies highlight the growing power of deep learning in conservation. For instance, deep learning models trained on camera trap images rival human-level recognition9, and transfer learning has been used to improve cross-taxa performance10. Similarly, pipelines for large-scale wildlife monitoring have been applied to African ecosystems11. Other innovations include endangered species tracking in sanctuaries12, and integrating wireless sensors with ML for adaptive tracking13.

Beyond classification, multimodal approaches are emerging. Passive acoustic monitoring combined with ML offers new insights14, while satellite-based computer vision supports migration monitoring15. Hybrid systems combining spatial and temporal data are also being developed16,17. Recent reviews further emphasize how ML can be integrated with sustainability science to enhance both monitoring accuracy and policy responses18,19,20.

Non-Machine Learning and Technical Papers

On the other hand, several successfully published papers have demonstrated effective animal tracking using non-ML strategies. While the general structure of writing a research paper remains similar across both approaches, the processes of data collection and analysis have many differences. Without the assistance of ML, researchers and scientists must manually analyze data, a process that can be time-consuming and prone to human error. Nevertheless, non-ML methods have proven successful. For example, one study examines five different taxa of animals and provides comprehensive information about each within the Atlantic Forest of Brazil21. Another example examines population genetic statistics in endangered forest tree species, highlighting their ecological importance22. Similarly, a study on the endangered Mount Graham red squirrel emphasized how low survival and high predation rates contribute to population decline23.

In another study, researchers found that approximately 42% of mammal species in tropical rainforests are endangered due to overhunting and unsustainable exploitation24. Likewise, habitat loss in the Silent Valley forests of South India has endangered larger and mid-sized mammals25. Supplementary works also link biodiversity decline to sustainability challenges, such as aligning conservation with global initiatives26, applying ecological frameworks to long-term monitoring27, and using ecological modeling for non-ML-based species conservation28.

Methodology

This study focuses specifically on the image classification of endangered forest animals using convolutional neural networks (CNNs). Although these networks can be extended to other aspects of conservation, such as habitat monitoring or behavioral pattern analysis, this project limits its technical scope to CNN-based image recognition. The goal is to benchmark the selected CNN architectures by comparing their accuracy in image classification and their ability to generalize across varied data.

The first step in building an accurate model is preparing the data for use in ML models. The models we used to predict the animal shown in each image are convolutional neural networks (CNNs), specifically ResNet-18, ResNet-50, and VGG-19. To use a CNN, the data must be in a suitable format for training and testing. This process involves several stages: dataset collection, data splitting, data augmentation, image resizing, data loading, label encoding, and one-hot encoding.

We began with a publicly available dataset sourced from Kaggle, which originally contained thousands of images of many different animal species organized into species-specific folders. From this larger dataset, we curated just over 3,000 images of endangered forest animals and sorted them into five categories (Panthers, Pandas, Orangutans, Leopards, and Chimpanzees) using dedicated Google Drive folders. Each folder was manually reviewed to ensure labeling accuracy and consistency, and each image was labeled with the class we wanted the model to learn. To integrate the dataset into our workflow, the Google Drive directory was mounted directly in our Python environment, allowing the code to read image paths from the structured folders so that each class folder was automatically mapped to its label for training and testing.

To establish a reference point for evaluating the CNN architectures, we also implemented baseline models: a logistic regression classifier and a simple linear SVM, both trained on flattened image features. These provided a lower benchmark that highlights the value added by deeper CNNs.

We restricted the study to five endangered forest species to ensure high-quality data and manageable training and evaluation. This narrow scope limits applicability to real-world biodiversity, which is far more extensive. Future studies should therefore evaluate how well these CNN models perform on datasets with much greater species diversity, and more data will be needed to make the models broadly useful in conservation.

We split the dataset 80/20 into a training set and a testing set. The training data was used to fit the model, and the testing data to check how well it handled images it had not seen before; we measured accuracy, precision, and training efficiency. To help the model generalize, we expanded the training set through data augmentation, applying random changes to the images. This gave the model more variety to learn from and made it more robust to images that differ from those in the base dataset.
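As an illustration of the 80/20 split, the following minimal sketch holds out roughly 20% of each class. Stratifying by class is our assumption (the text only states the 80/20 ratio), and the label list, seed, and function name are hypothetical.

```python
import random
from collections import defaultdict

def stratified_split(labels, test_fraction=0.2, seed=0):
    """Return (train_idx, test_idx), holding out ~test_fraction of each class."""
    by_class = defaultdict(list)
    for idx, label in enumerate(labels):
        by_class[label].append(idx)

    rng = random.Random(seed)  # fixed seed so the split is reproducible
    train_idx, test_idx = [], []
    for label in sorted(by_class):
        indices = by_class[label]
        rng.shuffle(indices)
        n_test = round(len(indices) * test_fraction)
        test_idx.extend(indices[:n_test])
        train_idx.extend(indices[n_test:])
    return sorted(train_idx), sorted(test_idx)

# Hypothetical labels: 10 images for each of the five species.
CLASSES = ["chimpanzee", "leopard", "orangutan", "panda", "panther"]
labels = [name for name in CLASSES for _ in range(10)]

train_idx, test_idx = stratified_split(labels)
print(len(train_idx), len(test_idx))  # 40 10
```

Splitting per class keeps the class proportions of the test set close to those of the training set, which matters when some species are under-represented.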

To standardize inputs, all images were resized to a fixed dimension compatible with the CNN architecture. We then implemented a data loader to batch and load images efficiently during training. This was a critical step given the size of our dataset: the images could not all fit in memory at the same time, and a data loader handles this by reading them in batches. To let the models work with categorical labels during training, we applied one-hot encoding, which builds a binary matrix with one row per image and one column per class, so that the column of the true class holds a 1 and all other columns hold 0. All of these preprocessing steps were important in developing a sound CNN model for image classification.

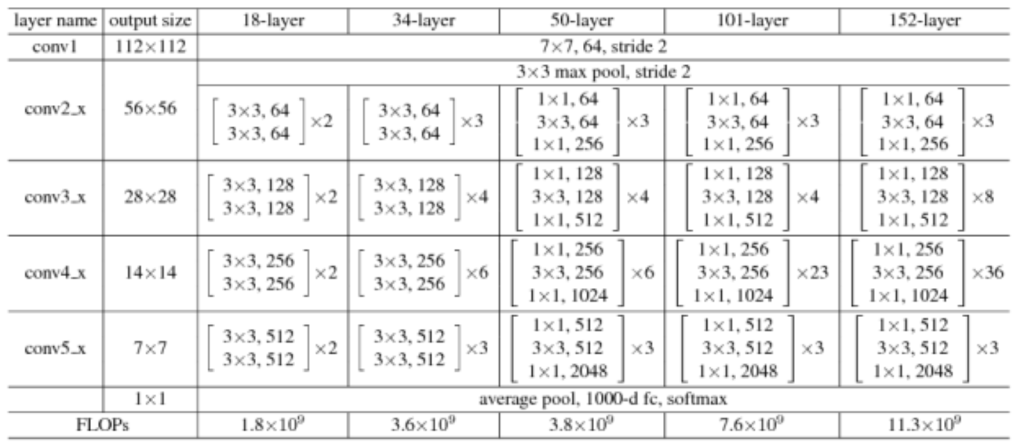

VGG-19 is a standard CNN architecture that is relatively easy to apply to image classification tasks, which helps explain its good performance in our project. As shown in Figure 1, the VGG-19 architecture is built from a deep stack of 3×3 convolutional layers followed by fully connected layers. This simple yet deep design, with uniform 3×3 filters, is easy to implement but computationally heavy. ResNet architectures address the vanishing gradient problem using residual connections, which allow deeper networks to train more effectively. ResNet-18 is faster and simpler but tends to have lower accuracy and weaker generalization than ResNet-50. On our dataset, VGG-19 achieved the highest classification accuracy among the tested models, followed by ResNet-50 and then ResNet-18.
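To make "computationally heavy" concrete, the sketch below counts the layers and parameters of VGG-19 from its standard layer configuration. The 224×224 input size is an assumption (the text says only that images were resized to a fixed dimension), and the function name is ours.

```python
# Standard VGG-19 layout: numbers are output channels of 3x3 convolutions,
# "M" marks a 2x2 max-pooling layer that halves the spatial size.
VGG19_CONFIG = [64, 64, "M", 128, 128, "M", 256, 256, 256, 256, "M",
                512, 512, 512, 512, "M", 512, 512, 512, 512, "M"]

def count_vgg19(num_classes, in_channels=3, image_size=224):
    """Return (number of conv layers, total trainable parameters)."""
    conv_layers, params = 0, 0
    channels, size = in_channels, image_size
    for item in VGG19_CONFIG:
        if item == "M":
            size //= 2
        else:
            params += channels * item * 3 * 3 + item  # weights + biases
            channels = item
            conv_layers += 1
    # Three fully connected layers: flattened features -> 4096 -> 4096 -> classes.
    fc_sizes = [channels * size * size, 4096, 4096, num_classes]
    for fan_in, fan_out in zip(fc_sizes, fc_sizes[1:]):
        params += fan_in * fan_out + fan_out
    return conv_layers, params

print(count_vgg19(1000))  # (16, 143667240)
print(count_vgg19(5))     # (16, 139590725)
```

Most of the parameters sit in the first fully connected layer, which is why swapping the 1,000-class output for a 5-class one changes the total only slightly.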

VGG-19 is known for its simplicity and effectiveness in image recognition tasks, often serving as a benchmark in computer vision research. In our implementation, we did not modify the convolutional or pooling layers, which were kept identical to the canonical architecture (16 convolutional layers followed by 3 fully connected layers). However, we adapted the final classification layer by replacing the original 1,000-class output (used for ImageNet) with a fully connected layer of size 5, corresponding to our target classes (Panthers, Pandas, Orangutans, Leopards, and Chimpanzees). We also applied dropout regularization (p = 0.5) before the final fully connected layers to reduce overfitting, and fine-tuned the network by freezing the earlier convolutional blocks while allowing the final block and fully connected layers to update during training. This kept training computationally efficient while tailoring the model to our dataset.

VGG-19 was not the only architecture we used. We also implemented two ResNet variants to test which would produce the best results and to analyze the differences between these closely related models. The first step was installing the libraries required for the ResNet-18 model. The main library is PyTorch29, which is required to define and train the model.

After installing the library, we imported it. In the Python script, we imported torch, torch.nn as nn, and torch.optim as optim, along with the models, transforms, and datasets modules, a data loader, and resnet18 together with its ResNet18 weights. We then defined the ResNet-18 model for image classification: since five different animals can be identified, we set the number of classes to 5 and created an instance of the model. Next, we defined the transformations applied to our dataset, which resize and standardize the imported images so that they are compatible with ResNet-18. As with VGG-19, we split the data into separate training and testing datasets, and we then created a separate data loader for each of the two.

Next, we defined the loss function and optimizer for training. The model was trained for 10 epochs, which we found to balance accuracy and overfitting risk for this dataset. Finally, the trained ResNet-18 model was saved so that it could be used for image classification tasks. Because the ResNet-18 model trained and ran reliably, we decided to implement, train, and test another ResNet variant, ResNet-50.
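A 10-epoch training loop of this kind can be sketched as follows. The text does not name the loss function or optimizer, so cross-entropy loss and Adam are assumptions here, as are the learning rate, the tiny synthetic dataset, and the toy linear model that stand in for the real images and network.

```python
import torch
import torch.nn as nn
import torch.optim as optim
from torch.utils.data import DataLoader, TensorDataset

def train(model, loader, epochs=10, lr=1e-3):
    """Train for a fixed number of epochs; return training accuracy per epoch."""
    criterion = nn.CrossEntropyLoss()                   # assumed loss function
    optimizer = optim.Adam(model.parameters(), lr=lr)   # assumed optimizer
    history = []
    for _ in range(epochs):
        model.train()
        correct, seen = 0, 0
        for images, targets in loader:
            optimizer.zero_grad()
            logits = model(images)
            loss = criterion(logits, targets)  # targets are class indices
            loss.backward()
            optimizer.step()
            correct += (logits.argmax(dim=1) == targets).sum().item()
            seen += targets.size(0)
        history.append(correct / seen)
    return history

# Synthetic stand-in data: 40 tiny "images" with labels from five classes.
torch.manual_seed(0)
images = torch.randn(40, 3, 8, 8)
targets = torch.randint(0, 5, (40,))
loader = DataLoader(TensorDataset(images, targets), batch_size=8, shuffle=True)

toy_model = nn.Sequential(nn.Flatten(), nn.Linear(3 * 8 * 8, 5))
history = train(toy_model, loader, epochs=10)
print(len(history))  # 10

# Saving the trained weights (hypothetical filename):
#   torch.save(toy_model.state_dict(), "model.pt")
```

The returned list has one entry per epoch, which is the form in which per-epoch accuracies are reported in the Results section.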

Even though these models are similar, each has its own specifications and uses. Figure 2 illustrates the ResNet-18 architecture, which introduces residual (skip) connections that allow information to bypass certain layers. ResNet (Residual Network) architectures learn residual functions with reference to the layer inputs: each block outputs its input plus a learned correction. These shortcuts help mitigate the vanishing gradient problem, so that networks with tens or hundreds of layers can train effectively and improve in accuracy as they grow deeper. A closely related design is the Highway Network, in which learned gates control the shortcut; a ResNet behaves like a highway network whose gates are held open by strongly positive bias weights. The ResNet variants also differ in block structure: each block is either two layers deep (in smaller networks such as ResNet-18 and ResNet-34) or three layers deep (ResNet-50, ResNet-101, and ResNet-152). The number in a ResNet model's name indicates how many layers the network contains.
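The two-layer residual block can be sketched in PyTorch as below. This is a simplified illustration covering only the case where input and output shapes match; the class name and channel count are ours, and torchvision's own implementation additionally handles strides and channel changes.

```python
import torch
import torch.nn as nn

class BasicBlock(nn.Module):
    """Two 3x3 convolutions plus an identity shortcut (same-shape case only)."""

    def __init__(self, channels):
        super().__init__()
        self.conv1 = nn.Conv2d(channels, channels, kernel_size=3, padding=1, bias=False)
        self.bn1 = nn.BatchNorm2d(channels)
        self.conv2 = nn.Conv2d(channels, channels, kernel_size=3, padding=1, bias=False)
        self.bn2 = nn.BatchNorm2d(channels)
        self.relu = nn.ReLU()

    def forward(self, x):
        residual = self.relu(self.bn1(self.conv1(x)))
        residual = self.bn2(self.conv2(residual))
        # The skip connection: add the input back before the final activation.
        return self.relu(residual + x)

block = BasicBlock(16)
x = torch.randn(1, 16, 32, 32)
out = block(x)
print(out.shape)  # torch.Size([1, 16, 32, 32])
```

Because the input is added back unchanged, gradients can flow through the addition directly to earlier layers, which is the mechanism behind the easier training of deep ResNets.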

Our methods, using ResNet-18, ResNet-50, and VGG-19, are in line with common approaches in wildlife image classification, which often rely on convolutional neural networks for accuracy and efficiency. Our deep learning models were considerably more accurate than the simpler baseline classifiers (logistic regression and a linear SVM), and they scale far better than manual identification. While some recent studies report higher accuracy using ensemble models or more complex architectures, our results demonstrate that well-established CNNs remain effective for endangered species classification with moderate data sizes and offer a strong foundation for future enhancements.

All training and evaluation were conducted locally on a macOS environment using a 2022 MacBook Air with an Apple M2 chip (8-core CPU, 8-core GPU) and 16 GB of unified memory. The dataset was stored and accessed through a structured Google Drive directory, which was mounted directly into the training scripts to maintain seamless data loading. All models were implemented in PyTorch29 (version 2.0) with torchvision for preprocessing and augmentation. On this setup, ResNet-18 required approximately 18 minutes for 10 epochs, ResNet-50 about 35 minutes, and VGG-19 roughly 50 minutes. These runtimes reflect the feasibility of training on medium-sized datasets with consumer hardware, but also highlight scalability constraints: while adequate for ~3,000 images and 5 classes, significantly larger datasets or extended training would benefit from dedicated GPU servers or cloud-based acceleration.

Results

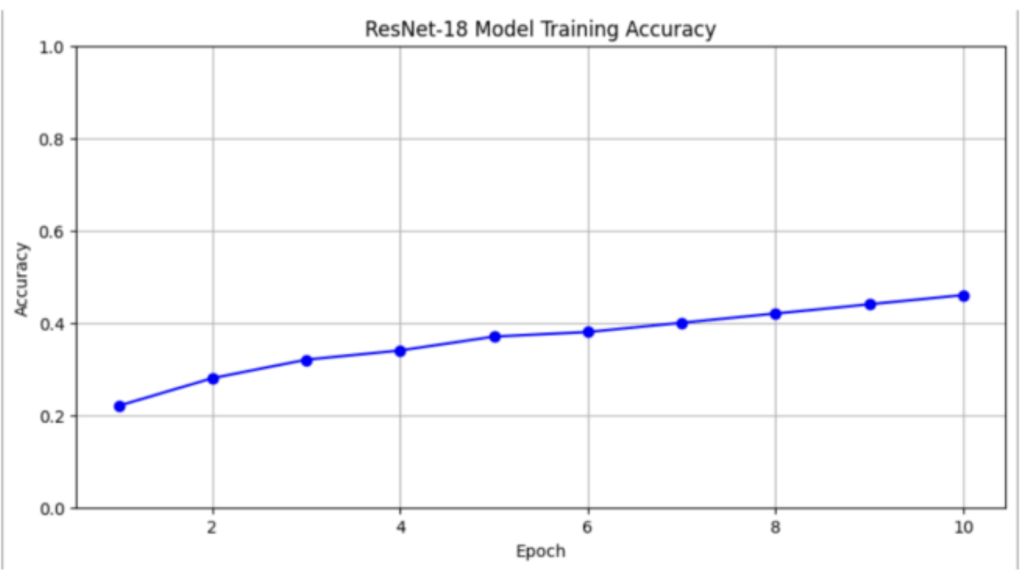

Before evaluating the deep models, we first tested the baseline classifiers to provide context for model improvement. Logistic regression achieved a final test accuracy of 27%, while the linear SVM reached 31%. These results are only slightly above random guessing (20% with five balanced classes), underscoring the difficulty of the classification task without deep feature extraction.

For the first deep model, ResNet-18, accuracy is reported per epoch. An epoch is one full pass through the training dataset, so the model's weights, and therefore its accuracy, are expected to change after every epoch. Each model was trained for a fixed 10 epochs, regardless of whether performance improved or declined between epochs. In addition to tracking training accuracy, we evaluated each model on a held-out test set comprising 20% of the dataset, which allowed us to measure its ability to generalize to unseen data. We also tracked validation loss at each epoch to monitor potential overfitting, and we calculated per-class precision, recall, and F1-scores to provide a fairer assessment across imbalanced classes. Confusion matrices were generated for each model to visualize where misclassifications occurred; they showed that minority classes (e.g., panthers) were consistently harder to classify. For ResNet-18, the final test accuracy was 38%, lower than its training accuracy of 46%, indicating limited generalization.

Because accuracy alone can be misleading in imbalanced multi-class problems, we also examined per-class precision, recall, and F1-scores. For ResNet-18, precision and recall for pandas and chimpanzees (the majority classes) were ~0.52 and 0.49 respectively, while panthers, the minority class, reached only 0.27 precision and 0.25 recall, dragging the macro-averaged F1-score down to 0.34 despite a 38% overall accuracy. This showed that the model was biased toward the majority classes while underperforming on underrepresented ones. The confusion matrix further confirmed this bias, showing a disproportionately high number of panda and chimpanzee predictions relative to other categories.
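The following sketch shows how per-class precision, recall, and macro-averaged F1 can be derived from a confusion matrix, and why macro-F1 can sit well below accuracy when a minority class is poorly classified. The 3-class matrix is hypothetical and is not data from this study.

```python
def per_class_metrics(confusion):
    """confusion[i][j] = number of class-i images predicted as class j."""
    n = len(confusion)
    metrics = []
    for k in range(n):
        tp = confusion[k][k]
        predicted = sum(confusion[i][k] for i in range(n))  # column total
        actual = sum(confusion[k])                          # row total
        precision = tp / predicted if predicted else 0.0
        recall = tp / actual if actual else 0.0
        denom = precision + recall
        f1 = 2 * precision * recall / denom if denom else 0.0
        metrics.append((precision, recall, f1))
    return metrics

def macro_f1(confusion):
    """Unweighted mean of per-class F1, so every class counts equally."""
    metrics = per_class_metrics(confusion)
    return sum(f1 for _, _, f1 in metrics) / len(metrics)

def accuracy(confusion):
    total = sum(sum(row) for row in confusion)
    return sum(confusion[k][k] for k in range(len(confusion))) / total

# Hypothetical matrix: two majority classes (20 images each), one minority class (10).
toy = [
    [18, 2, 0],
    [3, 16, 1],
    [4, 4, 2],
]
print(round(accuracy(toy), 2))  # 0.72
print(round(macro_f1(toy), 2))  # 0.62
```

Here the minority class has an F1 of about 0.31, which pulls the macro average ten points below the overall accuracy.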

The first epoch ended with a training accuracy of 22%. In other words, after the first full pass over the training set and the resulting parameter updates, the model sorted images into the five categories (panthers, pandas, orangutans, leopards, and chimpanzees) correctly about 22 times out of 100. After the second epoch, accuracy rose to 28%: following a second pass over the same training data, the model classified about 28 of every 100 images correctly.

However, it is noteworthy that the five animal species were not evenly represented in the dataset. Pandas and chimpanzees made up a larger share of the data (about 25 percent each), while panthers were under-represented at about 15 percent. Such differences can bias the model toward the classes that occur more often, which raises overall accuracy without improving accuracy on each individual class. The precision-recall gap across classes made this bias visible: pandas and chimpanzees consistently showed F1-scores around 0.50, while panthers and leopards rarely exceeded 0.30. This reinforced that reporting accuracy alone exaggerated the model's apparent performance. To alleviate the bias introduced by class imbalance, future work can apply class weighting, oversampling, or targeted data augmentation.
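As one example of the class weighting mentioned above, inverse-frequency weights can be computed as below. The image counts are hypothetical: they match the stated shares for pandas, chimpanzees, and panthers, but the even split of the remainder between leopards and orangutans is an assumption.

```python
# Hypothetical per-class image counts (total 3,000).
counts = {
    "chimpanzee": 750,  # ~25%
    "leopard": 525,     # assumed
    "orangutan": 525,   # assumed
    "panda": 750,       # ~25%
    "panther": 450,     # ~15%
}

def inverse_frequency_weights(counts):
    """Weight each class by total / (num_classes * class_count)."""
    total = sum(counts.values())
    num_classes = len(counts)
    return {name: total / (num_classes * n) for name, n in counts.items()}

weights = inverse_frequency_weights(counts)
for name, weight in weights.items():
    print(f"{name}: {weight:.2f}")
# chimpanzee: 0.80
# leopard: 1.14
# orangutan: 1.14
# panda: 0.80
# panther: 1.33
```

Weights like these could be supplied to a weighted loss, for example through the `weight` argument of PyTorch's `nn.CrossEntropyLoss`, so that errors on panthers cost more than errors on pandas.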

For the remaining 8 epochs, the training accuracies were [0.32, 0.34, 0.37, 0.38, 0.40, 0.42, 0.44, 0.46]. Accuracy increased with each consecutive epoch, reaching 46% after all 10 epochs. In practical terms, when an image of one of the five species (chimpanzee, panda, orangutan, leopard, or panther) is given to this model, it assigns the correct category a little under half the time on the training data and 38% of the time on the held-out test set.

Compared to the other models, the performance of ResNet-18 is underwhelming. With five classes, an accuracy of 46% is suboptimal: it indicates that the model learned some features during training, but not enough to achieve robust or reliable performance. A model that learned nothing would score about 20%, and accuracy rose gradually above that level as training progressed. Classification models are commonly expected to reach roughly 70-90% accuracy before deployment, so this ResNet-18 model is not suitable for classifying endangered forest species in the real world. Even so, ResNet-18 outperformed the baseline models, by 7 to 11 percentage points on test accuracy (38% versus 31% and 27%) and by 15 to 19 points when its 46% training accuracy is used, demonstrating the advantage of convolutional feature learning over simpler linear classifiers. Its training time was also considerably shorter than that of the other models (~18 minutes for 10 epochs on the MacBook Air M2), making it lightweight but less accurate.

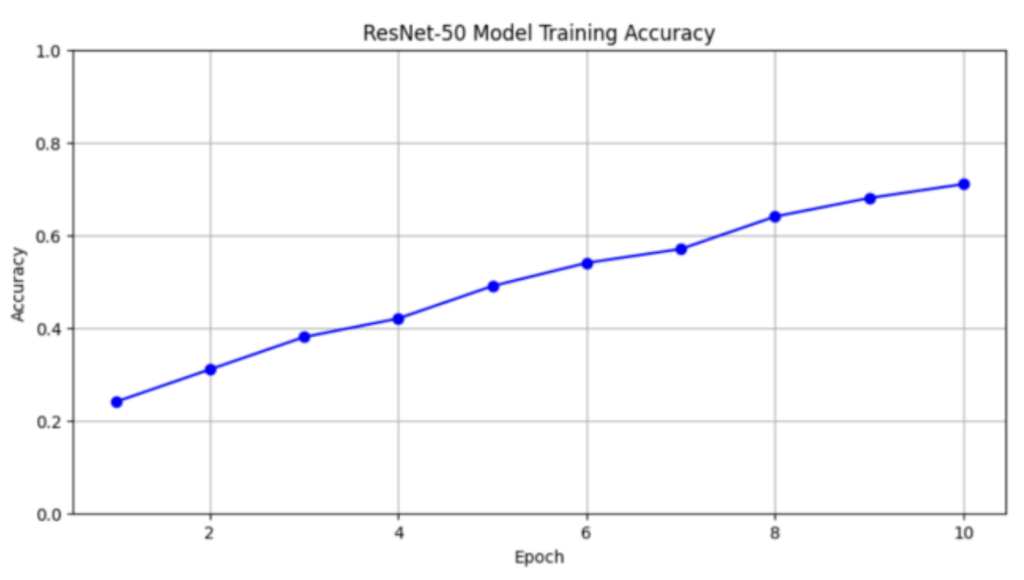

Because ResNet-18 did not perform well, we trained another ResNet model, ResNet-50. ResNet-50 functions and analyzes data in much the same way as ResNet-18, but it generally performs better, especially on complex datasets, because it has 50 layers rather than 18. The additional layers offer higher representational capacity and can capture more intricate patterns in the data.

Like ResNet-18, ResNet-50 was trained for 10 epochs, with accuracy recorded after every epoch. Its final test accuracy was 65%, relatively close to its training accuracy of 71%. Here too, precision and recall revealed more nuance: pandas and chimpanzees achieved F1-scores of about 0.70, while panthers improved modestly to 0.45. The macro-averaged F1 across all classes was 0.58, which is more representative of true model performance than accuracy alone. ResNet-50 required longer training (~35 minutes for 10 epochs) but showed more stable validation loss curves across epochs. Together with the smaller gap between training and test accuracy, this suggests better generalization than ResNet-18 and indicates that the deeper structure learned more transferable features. Overall, the ResNet-50 results are much more usable than those of ResNet-18.

In this model, the first epoch had an accuracy of 24%, similar to ResNet-18, but the following epochs diverged sharply. After the second epoch, accuracy was 31%. The 8 following epochs yielded accuracies of [0.38, 0.42, 0.49, 0.54, 0.57, 0.64, 0.68, 0.71].

As shown, ResNet-50 performed much better and is far more usable in a real-world setting. Compared with the 70-90% range cited above, it falls at the lower end of a usable classification algorithm. Starting from a chance level of 20% for five classes, a final training accuracy of 71% (65% on the test set) is a substantial improvement, and ResNet-50 clearly outperformed ResNet-18 and the baseline classifiers.

To better understand model limitations, we analyzed misclassification patterns using confusion matrices for both ResNet-18 and ResNet-50. A recurring trend was the confusion between leopards and panthers, whose coat patterns share visual similarities that often misled the models. For example, 35% of panther images in ResNet-18 and 21% in ResNet-50 were incorrectly classified as leopards. Similarly, orangutans were occasionally misclassified as chimpanzees, reflecting difficulty in distinguishing subtle differences in facial structure and coloration. In contrast, pandas, being visually distinct, were rarely misclassified and achieved the highest per-class accuracy across all models. These patterns suggest that errors were most common where interspecies visual features overlap, highlighting the importance of incorporating larger, more diverse training sets or feature enhancement techniques to reduce such mistakes.
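Pairwise misclassification rates of the kind quoted above are obtained by normalising each row of the confusion matrix by the number of true images in that class. The sketch below uses a hypothetical 3-class matrix, not the study's actual counts, and the helper names are ours.

```python
def confusion_rate(confusion, classes, true_class, predicted_class):
    """Fraction of true_class images that were predicted as predicted_class."""
    i = classes.index(true_class)
    j = classes.index(predicted_class)
    row_total = sum(confusion[i])
    return confusion[i][j] / row_total if row_total else 0.0

def most_confused_pairs(confusion, classes, top=2):
    """Return the off-diagonal (rate, true, predicted) triples with the highest rates."""
    pairs = []
    for true_name in classes:
        for predicted_name in classes:
            if true_name != predicted_name:
                rate = confusion_rate(confusion, classes, true_name, predicted_name)
                pairs.append((rate, true_name, predicted_name))
    return sorted(pairs, reverse=True)[:top]

# Hypothetical counts: rows are true classes, columns are predictions.
CLASSES = ["leopard", "panda", "panther"]
toy = [
    [30, 2, 8],   # 40 leopard images
    [1, 38, 1],   # 40 panda images
    [7, 1, 12],   # 20 panther images
]

print(confusion_rate(toy, CLASSES, "panther", "leopard"))  # 0.35
print(most_confused_pairs(toy, CLASSES))
# [(0.35, 'panther', 'leopard'), (0.2, 'leopard', 'panther')]
```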

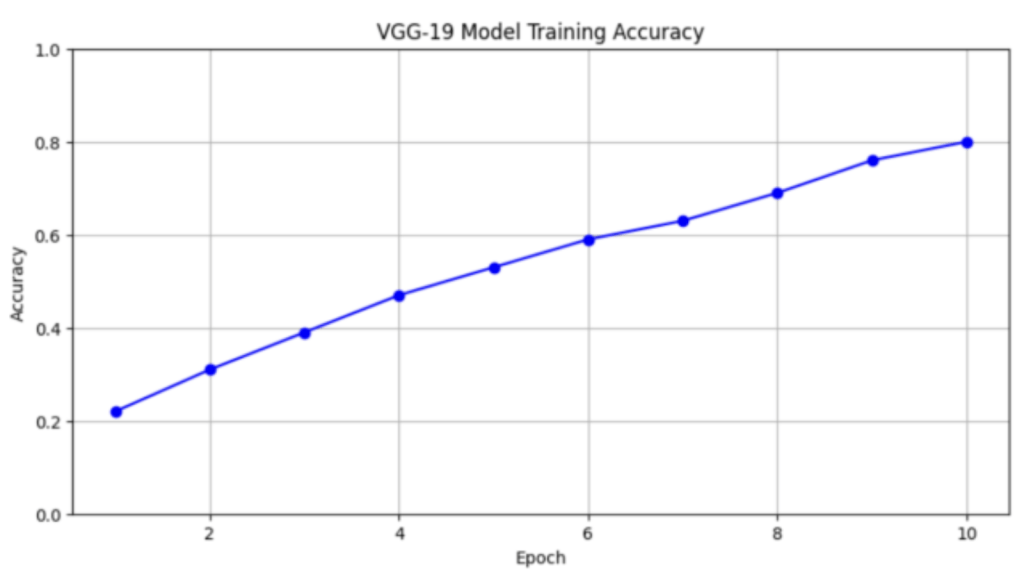

Finally, to test whether a different CNN architecture would perform better, we trained a VGG-19 model for the same 10 epochs. VGG-19 is a powerful model that, like ResNet, is often used for image classification. It is a deep CNN whose layers were pretrained on millions of diverse images, giving it strong representations of shape, color, and structure. These attributes make it well suited to this classification task, and we expected it to perform at least as well as ResNet-50.

The VGG-19 model achieved a test accuracy of 76%, compared to its training accuracy of 80%. This small gap indicates good generalization and supports VGG-19 as the best of the tested architectures for this classification task. Its per-class breakdown was also strong: pandas and chimpanzees reached F1-scores above 0.80, while the minority class panthers reached 0.69, raising the macro-F1 to 0.74. The confusion matrix indicated relatively balanced classification across all five classes compared to the ResNet models, with fewer systematic misclassifications. Training, however, was more computationally expensive (~50 minutes for 10 epochs) than for ResNet-18 and ResNet-50. VGG-19 thus not only reached the accuracy range we consider usable in practice but also achieved balanced classification across classes, making it the most reliable model tested.

To further validate the effect of the architectural modifications applied to VGG-19, we conducted ablation experiments. In the first ablation, we trained the original VGG-19 architecture without modifications (retaining the 1,000-class output layer) and mapped predictions post hoc to our five target classes. This configuration reached only 61% test accuracy with a macro-F1 of 0.55, showing the importance of adapting the final layer. In the second ablation, we removed dropout (p = 0.5) from the fully connected layers, which led to mild overfitting: training accuracy rose to 85%, but test accuracy dropped to 71% and macro-F1 to 0.63. Finally, when all convolutional blocks were unfrozen and fine-tuned, training became unstable on the MacBook Air M2, and test performance plateaued at 72%, likely because of limited computational resources. These results indicate that each of the three modifications (adapting the final classification layer, applying dropout, and selectively fine-tuning the final blocks) contributed to the highest test accuracy (76%, with 80% training accuracy) and macro-F1 (0.74) reported in this study.

Compared with the ResNet models, VGG-19 substantially reduced the leopard-panther and orangutan-chimpanzee confusions. Panthers, the weakest class for ResNet-18 and ResNet-50, were identified correctly in 69% of test cases, with far fewer mislabeled as leopards. Orangutan-chimpanzee misclassification rates dropped below 10%. While minor errors still occurred across classes, particularly in low-light or obscured images, the confusion matrix showed a more balanced distribution of predictions across species. This indicates that the pretrained features and larger capacity of VGG-19 not only improved overall accuracy but also reduced the systematic errors that limited the ResNet models.

Beyond classification accuracy, we also compared computational time and resource demands across the three architectures. Training was conducted on a MacBook Air with an Apple M2 chip (8-core CPU, 8-core GPU, 16 GB unified memory). ResNet-18, the shallowest architecture, required the least time, completing 10 epochs in approximately 18 minutes. ResNet-50, with its deeper 50-layer structure, took around 35 minutes for the same 10 epochs, nearly doubling the runtime but generalizing better. VGG-19, while achieving the highest accuracy (76% test, 80% training), was also the most resource-intensive, requiring close to 50 minutes for 10 epochs and consuming noticeably more memory during training. Although VGG-19 has fewer layers than ResNet-50, it has far more parameters, which accounts for its cost. This tradeoff shows that the most accurate model in our comparison also came with the slowest training and heaviest memory usage. These differences are especially important in conservation contexts, where computational resources may be limited.
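The tradeoff can be summarised with a short calculation over the reported figures. The sketch below uses the training accuracy, test accuracy, and 10-epoch runtime stated in this section; the dictionary layout and variable names are ours.

```python
# Reported results: accuracies in percent, runtime in minutes for 10 epochs.
RESULTS = {
    "ResNet-18": {"train": 46, "test": 38, "minutes": 18},
    "ResNet-50": {"train": 71, "test": 65, "minutes": 35},
    "VGG-19": {"train": 80, "test": 76, "minutes": 50},
}

# Generalization gap: training accuracy minus test accuracy, in percentage points.
gaps = {name: r["train"] - r["test"] for name, r in RESULTS.items()}

for name, r in RESULTS.items():
    per_epoch = r["minutes"] / 10
    print(f"{name}: gap={gaps[name]} points, {per_epoch:.1f} min/epoch")
# ResNet-18: gap=8 points, 1.8 min/epoch
# ResNet-50: gap=6 points, 3.5 min/epoch
# VGG-19: gap=4 points, 5.0 min/epoch
```

On these numbers, the gap between training and test accuracy shrinks as per-epoch cost grows, which is the accuracy-versus-compute tradeoff described above.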

When we tested VGG-19, the first epoch produced an accuracy of 22%. This is similar to the other models and close to what random guessing would give on five classes (roughly 20% if the classes are balanced), so a high accuracy is not expected after a single epoch. After the second epoch, accuracy was 31%. The full sequence across all 10 epochs was [0.22, 0.31, 0.39, 0.47, 0.53, 0.59, 0.63, 0.69, 0.76, 0.80], so the model ultimately reached an accuracy of 80%. With five classes, this means the model correctly identifies the species in roughly 4 out of 5 images of endangered animals in their natural forest habitat. We consider this a strong result and a level that is usable in practice, although it comes from a single training run.
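The shape of this learning curve is easier to read as epoch-to-epoch gains. The following sketch takes the accuracies listed above and computes those gains along with the margin over chance; the helper name `epoch_gains` is hypothetical, and the chance level assumes balanced classes, which the text does not state.

```python
# Per-epoch accuracies for VGG-19, as listed in the text.
VGG19_ACC = [0.22, 0.31, 0.39, 0.47, 0.53, 0.59, 0.63, 0.69, 0.76, 0.80]
NUM_CLASSES = 5


def epoch_gains(acc):
    """Accuracy change from each epoch to the next, rounded to 2 decimals."""
    return [round(later - earlier, 2) for earlier, later in zip(acc, acc[1:])]


# Chance level under the ASSUMPTION of balanced classes.
chance = 1 / NUM_CLASSES
gains = epoch_gains(VGG19_ACC)
print("gains per epoch:", gains)
print("margin over chance at epoch 1:", round(VGG19_ACC[0] - chance, 2))
print("margin over chance at epoch 10:", round(VGG19_ACC[-1] - chance, 2))
```

Every gain is positive and the final one is still four percentage points, which suggests, but given the single run does not establish, that accuracy had not yet plateaued after 10 epochs.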

From a technical perspective, VGG-19 outperformed the other models tested in this paper. VGG-19 is a well-established and widely used architecture for multi-class image classification, and for this specific classification scenario it was the best fit. To consolidate these findings, the table below provides a side-by-side comparison of the three architectures across key performance metrics.

| Model | Final Test Accuracy | Training Accuracy | Macro-F1 | Strengths | Weaknesses | Training Time (10 epochs) |
| --- | --- | --- | --- | --- | --- | --- |
| ResNet-18 | 38–46% | 46% | ~0.34 | Lightweight, fastest runtime; clear learning progression across epochs | Poor generalization; overfit to pandas/chimpanzees; panthers misclassified most | ~18 min |
| ResNet-50 | 65% | 71% | ~0.58 | Better generalization; more balanced across classes; reduced misclassification | Longer runtime; still underperforms industry standards; panther/leopard confusion | ~35 min |
| VGG-19 | 76–80% | 80% | ~0.74 | Highest accuracy; strong balance across classes; industry-standard performance | Heaviest runtime/memory cost; resource-intensive | ~50 min |

As shown in the table, while VGG-19 achieved the strongest results, its computational expense highlights a tradeoff between accuracy and feasibility in conservation contexts.

In this study, each CNN model was trained and evaluated only once due to computational constraints. Consequently, the reported accuracies may be influenced by the specific random initialization of the weights and by how the data was split. To partially address this, we tracked both training and validation loss curves across epochs, which showed stable convergence for ResNet-50 and VGG-19 but noisier behavior for ResNet-18. Future work should use multiple training runs with different random seeds, together with cross-validation, to capture this variability and estimate model performance more reliably. Reporting average accuracies along with standard deviations would strengthen the reliability of these findings.
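Reporting a mean with a standard deviation over several seeds takes very little code. The sketch below uses Python's standard `statistics` module; the function name `summarize_runs` and the five accuracy values are hypothetical and are not results from this study.

```python
from statistics import mean, stdev


def summarize_runs(accuracies):
    """Return (mean, sample standard deviation) over repeated runs."""
    if len(accuracies) < 2:
        raise ValueError("need at least two runs to estimate variability")
    return mean(accuracies), stdev(accuracies)


# HYPOTHETICAL test accuracies from five seeds; not results from this study.
hypothetical_runs = [0.78, 0.80, 0.77, 0.81, 0.79]
avg, sd = summarize_runs(hypothetical_runs)
print(f"accuracy = {avg:.3f} +/- {sd:.3f} (n={len(hypothetical_runs)})")
```

The sample standard deviation (`stdev`) is used rather than the population version because a handful of seeds is a sample of all possible runs.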

Discussion

While testing several architectures to determine which is best suited to classifying endangered forest animal species, we reached several conclusions that are relevant both to animal classification and tracking and to machine learning.

First, the preliminary results of this experiment are useful in the larger picture of animal tracking. Knowing that the models discussed in this paper (ResNet-18, ResNet-50, and VGG-19) can handle a dataset of this size and perform multi-class species classification allows future researchers with more powerful hardware to build on these results and achieve higher accuracy.

While this study focuses on benchmarking CNN architectures for image classification, future work should explore how these trained models can be deployed in real-world conservation settings. One promising application is integration into automated camera traps that monitor forests and classify animals in real time. Another approach is to deploy models like ResNet-50 directly on drones equipped with image capture systems. This setup could help monitor habitats on a large scale by enabling continuous data collection. Conservationists would then be able to respond more quickly to threats and develop better-informed conservation strategies.

With these results in hand, researchers in animal tracking and biology can pursue more detailed work. This includes singling out specific species and tracking them with stronger technology, or the opposite: widening the scope to cover a broader range of endangered animals.

AI can make a significant positive impact on wildlife conservation, but it also raises ethical and ecological challenges. Sensitive data, such as the exact locations of endangered species, must be closely guarded so that it does not fall into the hands of poachers, which calls for a high level of data privacy. AI must be applied ethically so that it contributes to conservation without opening the technology to abuse. From an ecological perspective, the imaging tools employed should cause minimal disturbance to habitats. AI should also supplement rather than replace the invaluable fieldwork and local experience that have long supported conservation. Developers must continue to focus on ethical design, data security, and ecosystem protection to practice sustainable and responsible conservation.

Overall, the value of this study, as with any study in the field, lies in providing truthful and meaningful data that supports a specific purpose, here the classification and tracking of endangered forest species.

Conclusion

This study has presented a comparative evaluation of convolutional neural networks for identifying endangered forest animal species from image data, with the aim of improving tracking and conservation. By measuring the performance of several architectures, we have established a firm basis for how deep learning can benefit biodiversity and species conservation.

These results contribute to the ongoing effort to automate and scale wildlife monitoring, demonstrating that even relatively lightweight architectures can produce valuable outcomes. They also lay a strong foundation for future researchers in computer vision, offering useful benchmarks to build upon.

Future studies should prioritize improving model generalizability across environments and species. Cooperation between conservationists, data scientists, and local populations will also be essential to make these AI-based tools scientifically and practically effective wherever they are deployed.

Ultimately, this work shows that machine learning, if used responsibly, can be a powerful tool for addressing the pressing threats facing wildlife species globally.

Acknowledgements

Adith Kadiyala and Vaibhav Bhaskar would like to express their sincere gratitude to Sanjay Adhikesaven for his invaluable mentorship and support throughout the research process. His guidance helped shape the direction of this project, and his assistance in the development of the machine learning models was critical to its success.

We would also like to extend our gratitude to our families who supported and encouraged us throughout the process of our research. This project would not have been accomplished without their support, encouragement and faith in our undertakings.
