Abstract

In this paper, we explore minimal surfaces and graphs in differential geometry. We derive the equation for a catenoid, a rotationally symmetric solution to the first variation of area functional for equidistant bounded discs. This analysis reveals two possible configurations for the catenoid, with an inner-radius and outer-radius catenoid that arise when the distance between the coaxial discs is below a critical threshold; we rigorously prove the stability of the outer-radius catenoid as the unique, area-minimizing surface. Additionally, we establish the rigidity and uniqueness of minimal (planar) graphs; we prove that the Dirichlet problem admits at most one minimal graph. Moreover, when the Dirichlet boundary curve lies in a plane, the corresponding minimal planar graph must reside entirely in the same plane.

Introduction

Plateau’s problem, first proposed in the late 18th century1, asks whether a surface of minimal area exists under specific boundary constraints. Solutions to this problem, minimal surfaces, have since been studied extensively and have applications in fields such as physics, molecular biology, and architecture; for instance, minimal surfaces are used to model the apparent horizon of black holes2, describe the theoretical model of biomolecules3, and even inspire modern architecture4. As such, differential geometry, the broader context of minimal surfaces, remains a relevant field in mathematics: it extends the familiar study of Euclidean geometry to curved and higher-dimensional spaces, measuring quantities such as area, curvature, and torsion with the tools of calculus, linear algebra, and topology.

This paper focuses on two specific cases of minimal surfaces: catenoids and minimal graphs. More specifically, we prove two main results: (i) that the outer-radius catenoid is stable and area-minimizing as the unique solution to Plateau’s problem, in Theorem (4.2), and (ii) that the Minimal Graph Equation permits at most one solution, in Theorem (5.3), which implies that planar Dirichlet boundary conditions yield only the trivial planar minimal graph, in Corollary (5.1). While previous proofs4 of the stability of the outer-radius catenoid exist, we provide a self-contained proof that avoids reliance on advanced background in Sturm-Liouville theory or differential geometry beyond that introduced in sections two and three, and is thus more accessible to a broader audience. Physically, several soap ring experiments5,6 demonstrate that two catenoid configurations bounded by coaxial rings are possible, but that only the outer-radius catenoid is stable, and that it persists only while the separation distance between the rings stays below a critical value d∗; using the first and second variation of area functionals, we mathematically justify such observations.

In the Geometry of Surfaces section, we provide the necessary background in Differential Geometry to understand and prove the results of this paper. In section three, we investigate the context of Plateau’s problem and minimal surfaces, deriving important theorems in minimal surface theory for our main results. In section four, we present an overview of the catenoid and prove our first main result in Theorem (4.2) regarding the stability of the outer-radius catenoid. Finally, in section five, we introduce the Maximum Principle for linear elliptic equations and prove our second main result in Theorem (5.3) regarding the uniqueness of minimal graphs, concluding with Corollary (5.1).

Geometry of Surfaces

This section introduces the fundamental concepts in the geometry of surfaces needed to properly analyze minimal surfaces. Specifically, we need to answer the question: what defines the geometry of a surface? In essence, a surface in $\mathbb{R}^3$ has three major properties: length, area, and curvature; the latter two will be critical for defining a minimal surface. However, to start, we need to establish a formal definition of a regular surface and its composition from a local parametrization.

Definition 2.1 (Local Parametrization). Let $M$ represent a subset in $\mathbb{R}^3$. A map $F: U \to \mathcal{O}$ from an open set $U \subset \mathbb{R}^2$ onto an open set $\mathcal{O} \subset M$ is called a local parametrization of $M$ if the following conditions are satisfied:

1. $F$ is $C^\infty$; that is, $F$ is infinitely differentiable with respect to the codomain $\mathbb{R}^3$.
2. $F$ is a homeomorphism; i.e., $F$ is bijective, and both $F$ and $F^{-1}$ are continuous.
3. For all $(u, v) \in U$, the cross product
\[\frac{\partial F}{\partial u} \times \frac{\partial F}{\partial v} \neq 0\]

Note that the third condition in Definition 2.1 is necessary to establish the linear independence of the partial derivatives of the parametrization for $M$; i.e., the nonzero cross product implies that, at any point on $M$, the surface cannot locally collapse to a single point or curve and must permit local tangent planes everywhere. This property is vital for Definition 2.3.

Definition 2.2 (Regular Surface). A subset $M \subset \mathbb{R}^3$ is called a regular surface if, for every $p \in M$, there exists an open subset $U \subset \mathbb{R}^2$ and a corresponding open subset $\mathcal{O} \subset M$ containing $p$ such that $F: U \to \mathcal{O}$ is a local parametrization.

Importantly, we need to establish a local coordinate system on the surface $M$ to examine its behavior in space; specifically, we need a basis to reference the curvature and geometry of $M$ around an arbitrary point $p \in M$. Conveniently, the partial derivatives of a local parametrization $F$ are well-defined (nonzero) everywhere on $M$ and are thus of interest for defining a local (tangent) plane.

Definition 2.3 (Tangent Plane). Let $M$ be a regular surface and $p \in M$ a point. If $F(u_1, u_2)$ is a local parametrization around $p$, then the tangent plane $T_pM$ at $p$ is defined as

\[T_pM = \text{span}\left\{\frac{\partial F}{\partial u_1}(p), \frac{\partial F}{\partial u_2}(p)\right\}\]

Remark 2.1. For all $p \in M$, $\dim T_pM = 2$, since $\frac{\partial F}{\partial u_1} \times \frac{\partial F}{\partial u_2} \neq 0$ by Definition 2.1.

With the proper local coordinates, we can now proceed with analyzing the behavior and geometry of $M$ around a point $p$; namely, we can examine the properties of curvature, area, and length associated with $M$. The latter two are defined with respect to a local inner product; let $x = \sum_{i=1}^3 x_i e_i$ and $y = \sum_{i=1}^3 y_i e_i$ be vectors in $\mathbb{R}^3$ expressed in a basis $\{e_1, e_2, e_3\}$. Then

\[\left\langle x,y \right\rangle = \begin{bmatrix} x_1 & x_2 & x_3 \end{bmatrix} \begin{bmatrix} \left\langle e_1,e_1 \right\rangle & \left\langle e_1,e_2 \right\rangle & \left\langle e_1,e_3 \right\rangle \\ \left\langle e_2,e_1 \right\rangle & \left\langle e_2,e_2 \right\rangle & \left\langle e_2,e_3 \right\rangle \\ \left\langle e_3,e_1 \right\rangle & \left\langle e_3,e_2 \right\rangle & \left\langle e_3,e_3 \right\rangle \end{bmatrix} \begin{bmatrix} y_1 \\ y_2 \\ y_3 \end{bmatrix}\]

such that

First Fundamental Form. As alluded to earlier, we can express area and length attributed to a regular surface $M$ using a local inner product; to do so, we must define the first fundamental form and its matrix definition.

Definition 2.4 (First Fundamental Form). If $M$ is a regular surface, the first fundamental form of $M$ is the inner product on $T_pM$ for all $p \in M$, denoted as $g$.

Remark 2.2 (Matrix Form). Let $F(u_1, u_2)$ represent a local parametrization for a regular surface $M$ and $p \in M$ have tangent plane $T_pM$. Then, for ![]() , by Definition 2.4, the first fundamental form for $M$ at $p$ is defined in its matrix form as

\[g_p = \begin{bmatrix} \frac{\partial F}{\partial u_1}(p)\cdot\frac{\partial F}{\partial u_1}(p) & \frac{\partial F}{\partial u_1}(p)\cdot\frac{\partial F}{\partial u_2}(p) \\ \frac{\partial F}{\partial u_2}(p)\cdot\frac{\partial F}{\partial u_1}(p) & \frac{\partial F}{\partial u_2}(p)\cdot\frac{\partial F}{\partial u_2}(p) \end{bmatrix}\]

Thus,

Remark 2.3 (Length). Let $M$ represent a regular surface with local parametrization $F(u_1, u_2)$. Consider a parametrized curve $\gamma(t)$ on $M$ from the interval $t \in [a, b]$. In $\mathbb{R}^3$, the length of $\gamma$ is

\[L(\gamma) = \int_a^b |\gamma'(t)|\,dt\]

Since

![]()

![]()

![]()

Thus, we can express the length of

![]()

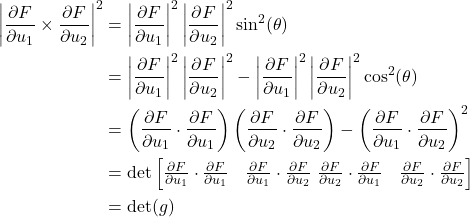

Remark 2.4 (Area). Consider a closed region in the regular surface $M$ with local parametrization $F(u_1, u_2)$. Then, we know the surface area of this region is

![]()

However, we can further express this inner cross product in terms of the first fundamental form of

Thus, we find the area of

![]()
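As a numerical illustration of Remark 2.4, the sketch below approximates the matrix form of the first fundamental form by central differences and integrates $\sqrt{\det g}$ over the parameter domain. It assumes the standard area formula $\iint \sqrt{\det g}\, du_1\, du_2$; the unit sphere test surface, the function names, and the grid size are illustrative choices that do not come from the paper.

```python
import math

def sphere(u, v):
    """Illustrative surface: unit sphere, u = polar angle, v = azimuthal angle."""
    return (math.sin(u) * math.cos(v), math.sin(u) * math.sin(v), math.cos(u))

def dot(a, b):
    return sum(x * y for x, y in zip(a, b))

def first_fundamental_form(F, u, v, eps=1e-5):
    """Matrix g_ij = <dF/du_i, dF/du_j>, with partials from central differences."""
    Fu = [(a - b) / (2 * eps) for a, b in zip(F(u + eps, v), F(u - eps, v))]
    Fv = [(a - b) / (2 * eps) for a, b in zip(F(u, v + eps), F(u, v - eps))]
    return [[dot(Fu, Fu), dot(Fu, Fv)], [dot(Fv, Fu), dot(Fv, Fv)]]

def surface_area(F, u_range, v_range, n=200):
    """Midpoint-rule approximation of the integral of sqrt(det g) du dv."""
    (u0, u1), (v0, v1) = u_range, v_range
    du, dv = (u1 - u0) / n, (v1 - v0) / n
    total = 0.0
    for i in range(n):
        for j in range(n):
            g = first_fundamental_form(F, u0 + (i + 0.5) * du, v0 + (j + 0.5) * dv)
            det_g = g[0][0] * g[1][1] - g[0][1] * g[1][0]
            total += math.sqrt(max(det_g, 0.0)) * du * dv
    return total

sphere_area = surface_area(sphere, (0.0, math.pi), (0.0, 2.0 * math.pi))
print(sphere_area, 4.0 * math.pi)  # the two values should agree closely
```

The midpoint rule never evaluates the parametrization at the poles, where this chart of the sphere fails the third condition of Definition 2.1.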

Second Fundamental Form. Now that we have explored the length and area of regular surfaces, we can investigate the nature of curvature, defining the second fundamental form in order to do so. It is important to note that the typical, inherent idea of curvature only exists for space curves in $\mathbb{R}^3$ as a measure of the rate at which a curve $\gamma$ changes direction; clearly, such a measure is well defined, as $\gamma$ only has one tangent vector $\gamma'$ at any point along its trace.

However, in a regular surface $M$, there is no sole, unique tangent vector, only planes ($T_pM$). Thus, different curves along $M$ will often have varying curvatures, and so we must define curvature in the context of each “direction” along $M$ (in a similar manner to a “directional derivative”).

To start, by Definition 2.3, we know that the (linearly independent) partial derivatives of a local parametrization form the tangent plane for the corresponding regular surface; this composition implies that the cross product between them defines a form of normal vector.

More concretely, let $M$ represent a regular surface with local parametrization $F(u_1, u_2)$ around a point $p$. Then, at $p$, we can express the “normal vectors” $\pm N$ as

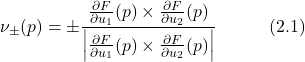

Importantly, there exist two normal vectors (

Definition 2.5 (Gauss Map). Let $M$ be a regular, orientable surface. Then, the Gauss map $N: M \to \mathbb{S}^2$ is a smooth map such that, for all $p \in M$, $N(p)$ is the globally defined unit normal vector of $M$ at $p$. We typically have ![]() . See equation (2.1).

Definition 2.6 (Normal Curvature). Let $M$ be a regular surface with Gauss map $N$. For each $p \in M$ and any unit vector $e$ such that $e \in T_pM$, we denote $\Pi_p(N, e)$ to be the plane in $\mathbb{R}^3$ that contains $p$ and is spanned by $N(p)$ and $e$. If $\gamma$ is the curve that is formed by the intersection of $M$ and $\Pi_p(N, e)$, then the normal curvature at $p$ along $e$, denoted by $k_n(p, e)$, is the signed curvature of $\gamma$ at $p$ with respect to $N$ such that

![]()

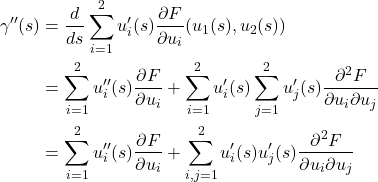

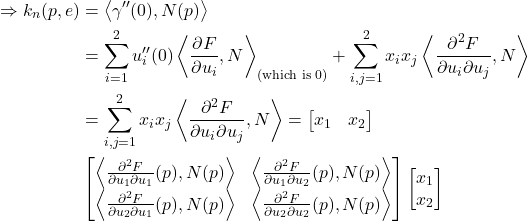

Theorem 2.1. Let $M$ be a regular surface with local parametrization $F(u_1, u_2)$ and Gauss map $N$. For a point $p \in M$ and unit vector $e \in T_pM$, express

\[e = \sum_{i=1}^2 x_i \frac{\partial F}{\partial u_i}\]

Then, the normal curvature $k_n(p, e)$ at $p$ along $e$ is given by

\[k_n(p, e) = \sum_{i,j=1}^2 x_i x_j \left\langle \frac{\partial^2 F}{\partial u_i \partial u_j}(p), N(p) \right\rangle\]

Proof. Let $\gamma(s)$ represent the arc-length parametrized curve of the intersection of $M$ and the plane through $p$ spanned by $N(p)$ and $e$, for $s \in (-\varepsilon, \varepsilon)$, such that $\gamma(0) = p$ and $\gamma'(0) = e$.

Since $\gamma(s) \in M$, we can express

\[\gamma(s) = F(u_1(s), u_2(s))\]

\[\gamma'(s) = u_1'(s)\frac{\partial F}{\partial u_1} + u_2'(s)\frac{\partial F}{\partial u_2} = \sum_{i=1}^2 u_i'(s)\frac{\partial F}{\partial u_i}\]

\[\Rightarrow \gamma'(0) = e = \sum_{i=1}^2 u_i'(0)\frac{\partial F}{\partial u_i}\]

![]()

Thus, we find the second derivative of

as desired.

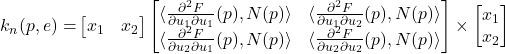

From Theorem (2.1), we are inspired to define a map in a similar fashion as Definition (2.4) to measure the normal curvature on a regular surface. This directly leads us to define the second fundamental form.

Definition 2.7 (Second Fundamental Form). Let $M$ be a regular surface with Gauss map $N$ and local parametrization $F(u_1, u_2)$. Then, for $p \in M$, the second fundamental form of $M$ is a map $h: T_pM \times T_pM \to \mathbb{R}$ such that

\[h(x,y) = \sum_{i,j=1}^2 x_i y_j \left\langle \frac{\partial^2 F}{\partial u_i \partial u_j}, N \right\rangle\]

evaluated at a given

Remark 2.5 (Matrix Form). Often, we express $h$ in terms of a matrix:

\[h_p = \begin{bmatrix} \left\langle \frac{\partial^2 F}{\partial u_1 \partial u_1}(p), N(p) \right\rangle & \left\langle \frac{\partial^2 F}{\partial u_1 \partial u_2}(p), N(p) \right\rangle \\ \left\langle \frac{\partial^2 F}{\partial u_2 \partial u_1}(p), N(p) \right\rangle & \left\langle \frac{\partial^2 F}{\partial u_2 \partial u_2}(p), N(p) \right\rangle \end{bmatrix}\]

such that

Remark 2.6 (Normal Curvature). For ![]() , the normal curvature in the direction ![]() is given by

![]()

However, while we can now find the normal curvature along any vector in $T_pM$ relative to a point $p \in M$, several questions still arise: namely, which directions minimize or maximize the normal curvature, and what the respective implications are. As such, we desire to optimize ![]() given that ![]() for some ![]() .

To do so, define

\[e = \sum_{i=1}^2 x_i \frac{\partial F}{\partial u_i}\]

for a fixed point

![]()

![]()

Thus, we desire to optimize

![]()

![]()

Expanding equation (2.2), we are left with the system

![]()

![]()

Furthermore, noting the symmetry of

![]()

![]()

To solve equation (2.6), we note that

![]()

We therefore conclude from equation 2.7 that the extrema

Definition 2.8 (Shape Operator). For a regular surface $M$, the shape operator of $M$ is a map $S: T_pM \to T_pM$ such that $S = g^{-1}h$.

Furthermore, we define the eigenvalues of $S$ as the principal curvatures, which are the critical values of ![]() given that ![]() . Note that this fact follows briefly as

![]()

Finally, since

Definition 2.9 (Mean Curvature & Gauss Curvature). For a point $p$ on a regular surface $M$ with first fundamental form $g$ and second fundamental form $h$, the mean curvature of $M$, denoted by $H$, is given by

![]()

and the Gauss curvature, denoted by

![]()

where

Here, we calculate both the mean and Gauss curvature under the Gauss map whose normal vector (globally, under the orientability assumption) points outward from the volume enclosed by the surface; as such, the sign for the mean curvature is positive for all convex regions.
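The curvature definitions above can be checked numerically. The following sketch assumes that the shape operator is $S = g^{-1}h$ and reports the trace and determinant of $S$, which correspond to the mean and Gauss curvature up to the normalization and sign conventions chosen in Definition 2.9 (some texts use half the trace). The catenoid-shaped test surface, the evaluation point, and all function names are illustrative assumptions.

```python
import math

def catenoid(u, v, c=1.0):
    """Illustrative surface of revolution with profile radius c*cosh(u/c)."""
    r = c * math.cosh(u / c)
    return (r * math.cos(v), r * math.sin(v), u)

def dot(a, b):
    return sum(x * y for x, y in zip(a, b))

def cross(a, b):
    return (a[1] * b[2] - a[2] * b[1],
            a[2] * b[0] - a[0] * b[2],
            a[0] * b[1] - a[1] * b[0])

def curvatures(F, u, v, e=1e-4):
    """Return (trace, determinant) of S = g^{-1} h at (u, v) via finite differences."""
    P = lambda du, dv: F(u + du, v + dv)
    Fu = [(a - b) / (2 * e) for a, b in zip(P(e, 0), P(-e, 0))]
    Fv = [(a - b) / (2 * e) for a, b in zip(P(0, e), P(0, -e))]
    Fuu = [(a - 2 * b + c) / e**2 for a, b, c in zip(P(e, 0), P(0, 0), P(-e, 0))]
    Fvv = [(a - 2 * b + c) / e**2 for a, b, c in zip(P(0, e), P(0, 0), P(0, -e))]
    Fuv = [(a - b - c + d) / (4 * e**2)
           for a, b, c, d in zip(P(e, e), P(e, -e), P(-e, e), P(-e, -e))]
    n = cross(Fu, Fv)
    norm = math.sqrt(dot(n, n))
    N = [x / norm for x in n]  # unit normal from the cross product of the partials
    g11, g12, g22 = dot(Fu, Fu), dot(Fu, Fv), dot(Fv, Fv)
    h11, h12, h22 = dot(Fuu, N), dot(Fuv, N), dot(Fvv, N)
    det_g = g11 * g22 - g12**2
    trace_S = (g22 * h11 - 2 * g12 * h12 + g11 * h22) / det_g
    det_S = (h11 * h22 - h12**2) / det_g
    return trace_S, det_S

H, K = curvatures(catenoid, 0.3, 0.7)
print(H, K)  # H should be near zero and K negative for this surface
```

Because the trace vanishes regardless of the sign of $N$ or a factor of one half, the check of zero mean curvature does not depend on those conventions.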

Minimal Surfaces

This section will introduce the notion of and context for minimal surfaces, providing the necessary background for the main results of this paper in regard to catenoids and minimal graphs. However, we must first explore the motivating problem that introduced minimal surfaces.

Plateau’s Problem. Given a closed curve $\Gamma$ of class ![]() , find a regular surface $M$ such that ![]() and ![]() , where ![]() is the set of all regular surfaces in $\mathbb{R}^3$ that span $\Gamma$.

To solve Plateau’s Problem, we must employ the first variation of area functional; however, we first require some context. For a fixed closed and smooth curve $\Gamma$, let $M$ be a regular surface that spans $\Gamma$ with local parametrization $F(u_1, u_2)$ and Gauss map $N$. Consider a family of variational surfaces $M_s$ that span $\Gamma$ for $s \in (-\varepsilon, \varepsilon)$ with $M_0 = M$. Then, let the local parametrization $F_s$ of $M_s$ be of the form

![]()

for smooth

We restrict our examination to fixed boundary conditions, so ![]() is sufficient, and no additional-order boundary conditions arise. Note that since ![]() by Definition 2.1, ![]() . In fact, for all orientable surfaces, $N$ must be differentiable; see7 for a more detailed explanation. As such, ![]() is differentiable.

Let ![]() such that


![]()

![]()

Now, if $M$ solves Plateau’s Problem, then

![]()

by Remark (2.4).

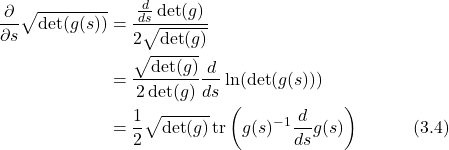

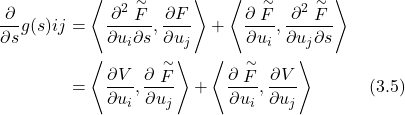

Lemma 3.1. Let $g(s)$ be a differentiable family of symmetric, invertible $n \times n$ matrices. Then,

\[\frac{d}{ds}\ln(\det(g(s))) = \text{tr}\left(g(s)^{-1}\frac{d}{ds}g(s)\right)\]

Proof. Let $\lambda_1(s), \dots, \lambda_n(s)$ be eigenvalues for $g(s)$.

\begin{align*} \frac{d}{ds}\ln(\det(g(s))) &= \frac{d}{ds}\ln(\lambda_1(s)\lambda_2(s)\dots\lambda_n(s)) \\ &= \frac{d}{ds}(\ln(\lambda_1(s)) + \ln(\lambda_2(s)) + \dots + \ln(\lambda_n(s))) \\ &= \frac{\lambda_1'(s)}{\lambda_1(s)} + \frac{\lambda_2'(s)}{\lambda_2(s)} + \dots + \frac{\lambda_n'(s)}{\lambda_n(s)} \\ &= \text{tr} \left( \left[ \begin{smallmatrix} \lambda_1^{-1}(s) & \dots & 0 \\ \vdots & \ddots & \vdots \\ 0 & \dots & \lambda_n^{-1}(s) \end{smallmatrix} \right] \left[ \begin{smallmatrix} \lambda_1'(s) & \dots & 0 \\ \vdots & \ddots & \vdots \\ 0 & \dots & \lambda_n'(s) \end{smallmatrix} \right] \right) \\ &= \text{tr} \left( g(s)^{-1}\frac{d}{ds}g(s) \right) \end{align*}
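The identity in Lemma 3.1 is easy to sanity-check numerically. The sketch below compares a central-difference derivative of $\ln\det g(s)$ against $\text{tr}(g^{-1} g')$ for a hand-picked symmetric, positive-definite $2 \times 2$ family; the particular family `g`, its derivative `dg`, and the sample point are hypothetical choices made only for illustration.

```python
import math

def g(s):
    """Hypothetical symmetric 2x2 family with positive determinant for all s."""
    return [[2.0 + s * s, math.sin(s)], [math.sin(s), 3.0 + math.cos(s)]]

def dg(s):
    """Entrywise derivative of g with respect to s."""
    return [[2.0 * s, math.cos(s)], [math.cos(s), -math.sin(s)]]

def det2(m):
    return m[0][0] * m[1][1] - m[0][1] * m[1][0]

def lhs(s, eps=1e-6):
    """Central-difference approximation of d/ds ln(det g(s))."""
    return (math.log(det2(g(s + eps))) - math.log(det2(g(s - eps)))) / (2 * eps)

def rhs(s):
    """tr(g^{-1} dg/ds), using the explicit 2x2 inverse."""
    m, d = g(s), dg(s)
    det = det2(m)
    inv = [[m[1][1] / det, -m[0][1] / det], [-m[1][0] / det, m[0][0] / det]]
    return sum(inv[i][k] * d[k][i] for i in range(2) for k in range(2))

s0 = 0.4
print(lhs(s0), rhs(s0))  # the two values should agree to several digits
```

The family is chosen so that $\det g(s) \geq 3$, which keeps the logarithm well defined.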

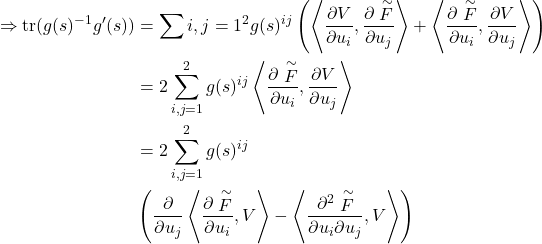

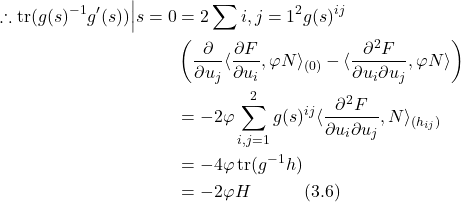

Applying Lemma (3.1), we have

Furthermore, since ![]() , we know


Now we substitute equation (3.6) into equation (3.4).

![]()

Finally, we plug equation (3.7) into (

Theorem 3.1 (Minimal Surface Equation). The First Variation of Area of a regular surface $M$ is given as

![]()

![]()

for all choices of variation

![]()

everywhere on

Definition 3.1 (Minimal Surface). A regular surface $M$ is a minimal surface if it is a solution to the Minimal Surface Equation; namely, if $H = 0$ everywhere on $M$.

Remark 3.1. While we call regular surfaces that have zero mean curvature everywhere “minimal surfaces,” they do not necessarily solve Plateau’s Problem; often, for a given boundary, multiple surfaces satisfy the Minimal Surface Equation, and some of them are not truly “area-minimizing.” Put simply, satisfying the Minimal Surface Equation is not enough to guarantee that a surface solves Plateau’s Problem; refer to Theorem (4.1).

Catenoids

This section will introduce the catenoid and its properties as a minimal surface, providing the background for and proving the main result of the outer-radius catenoid’s stability. However, we must first define what a catenoid is; in order to do so, we pose a question: what is the solution to Plateau’s Problem for two separated, equiradial rings? Or more succinctly, what surface that connects two rings has the smallest possible surface area?

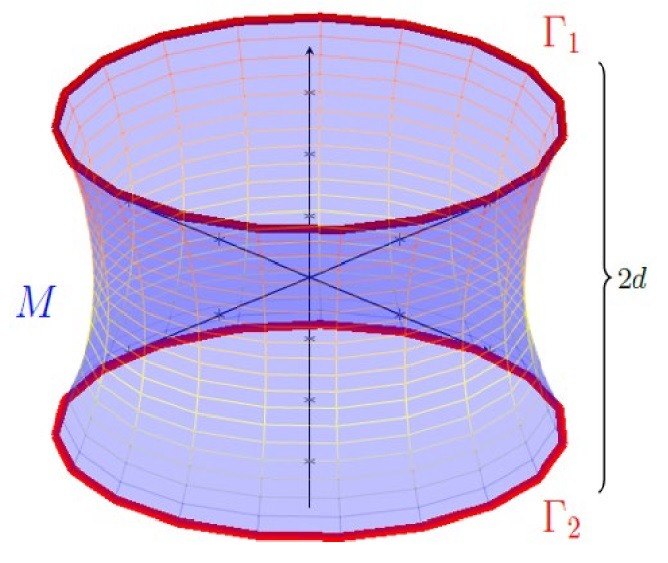

Formally, we can answer this question using the Minimal Surface Equation. Fix two coaxial unit circles, positioned symmetrically about the origin and separated by a distance $d$, as seen in Figure 1.

We want to find a minimal surface $M$ whose boundary consists of these two circles. Let us also assume that $M$ is rotationally symmetric. A simple argument can be made that $M$ must be rotationally symmetric because the boundary conditions are symmetric: if a solution $M$ were not rotationally symmetric, then there would exist infinitely many solutions congruent to $M$ (but rotated slightly) that are also area-minimizing. However, this violates uniqueness; see8,9 for more. Thus, we may locally parametrize $M$ as

![]()

for some strictly positive ![]() with ![]() and ![]() . Therefore, our objective is to solve for ![]() .

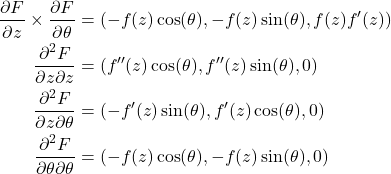

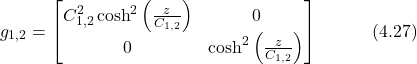

First, we compute the first fundamental form $g$ of $M$.

![]()

![]()

Thus, by Definition (2.4), the first fundamental form is given as

![]()

Further, we can also compute the Gauss map and second fundamental form for M.

So, by Definition (2.5), we have

where the global (due to assumed orientability) direction of $N$ is of the form ![]() in equation (2.1). Furthermore, by Definition (2.7), we also find

![]()

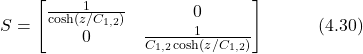

Thus, by Definition (2.8), we compute S as

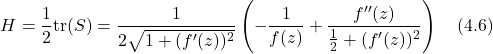

As such, by Definition (2.9), the mean curvature of M is

Therefore, by Theorem (3.1), the Minimal Surface Equation for M is given by

(![]() ) ![]()

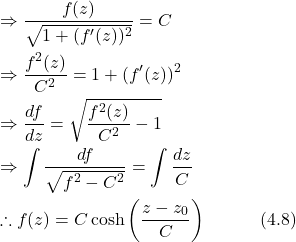

where ![]() . To find a solution ![]() for (![]() ), let

![]()

Then, we have

by (![]() ). However, equation (4.7) implies that ![]() , for some constant $C$. Thus, we can solve for ![]() .

for some integration constant ![]() . However, since $M$ is symmetric about ![]() , we note that ![]() . This fact follows directly from the boundary conditions, ![]() , so ![]() by the symmetry of the hyperbolic cosine function. Thus, we simplify equation (4.8) and conclude

![]()

Finally, we must find the value of $C$ in equation (4.9) given that ![]() . Furthermore, according to equation (4.9), $C$ must be the minimum value of ![]() , attained at ![]() . This is because $\cosh(x) \geq 1$ for all $x \in \mathbb{R}$. Accordingly, we are brought to the definition of a catenoid.

Definition 4.1 (Catenoid). A catenoid is the unique, non-planar minimal surface of revolution in $\mathbb{R}^3$ given by the local parametrization

![]()

for some real ![]() . This is equivalent to the initial conditions of two equiradial disks in Plateau’s Problem at the start of this section.

Note that in Definition (4.1), ![]() , where ![]() , according to the boundary conditions. As such, define

![]()

Thus, a connected solution $M$ only exists if ![]() has a positive root. Observe, however, that as ![]() , we have ![]() . Furthermore, as ![]() , we also have ![]() . Thus, we know ![]() attains a global minimum when ![]() . ![]() cannot have any other local extrema because the hyperbolic cosine function is strictly convex everywhere; that is, since ![]() everywhere, no local maxima can occur, and so the only extremum is the global minimum.

This fact leads us to suspect that for certain separation distances between ![]() and ![]() , ![]() , and there will consequently be no solutions for ![]() . To demonstrate this fact, we must first find the minimum of ![]() .

![]()

![]()

![]()

![]()

where ![]() . Implicitly solving equation (4.11) for ![]() , we arrive at one unique solution ![]() . Thus, for a given ![]() , we have that ![]() attains its minimum when ![]() .

However, also observe that when ![]() , then ![]() . This is once again because ![]() . However, because ![]() is strictly positive, we have ![]() , so ![]() . This fact, of course, conforms with our intuition, as ![]() is the minimal radius from ![]() to the ![]() -axis, and as such must be strictly less than the boundary disk radius.

Now that we have ![]() , we substitute into equation (4.10) to find the minimum value of ![]() as

![]()

Thus, for connected solutions for $M$ to exist, we want ![]() . Rearranging equation (4.12) to satisfy this inequality, we are left with

![]()

Consequently, when $d$ becomes too large ($d > d^*$), there are no solutions for the equation ![]() and thus no connected solutions for $M$, as we expect. As alluded to in the introduction, this can be seen in real experiments: a soap film between two rings forms a catenoid until the separation distance exceeds a certain value, at which point the connecting film abruptly pops. See10,11 for more.
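The critical separation can be estimated numerically. The sketch below assumes that, for unit rings a distance $d$ apart, the boundary condition reads $C\cosh\left(\frac{d}{2C}\right) = 1$, so that the function in equation (4.10) is $f(C) = C\cosh\left(\frac{d}{2C}\right) - 1$; this form, the substitution $x = \frac{d}{2C}$, and all names are our reading of the lost equations rather than the paper's stated notation. Under that assumption, $f'(C) = 0$ reduces to $x\tanh x = 1$, and requiring the minimum of $f$ to be non-positive gives $d \leq 2/\sinh(x_0)$.

```python
import math

def bisect(fn, lo, hi, tol=1e-12):
    """Plain bisection; assumes fn changes sign exactly once on [lo, hi]."""
    f_lo = fn(lo)
    while hi - lo > tol:
        mid = 0.5 * (lo + hi)
        f_mid = fn(mid)
        if (f_lo < 0) == (f_mid < 0):
            lo, f_lo = mid, f_mid  # root lies in the upper half
        else:
            hi = mid               # root lies in the lower half
    return 0.5 * (lo + hi)

# Critical point condition x * tanh(x) = 1, with x = d / (2C) (assumed notation).
x0 = bisect(lambda x: x * math.tanh(x) - 1.0, 0.5, 2.0)

# Largest separation with a connected solution, for rings of radius 1.
d_star = 2.0 / math.sinh(x0)

print(x0, d_star)  # roughly 1.1997 and 1.3255
```

For rings of radius $R$ rather than 1, the same argument scales the threshold to $R \cdot d^*$.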

When $d = d^*$, one unique solution ![]() exists. However, in the case when $d < d^*$, it is clear that multiple solutions for ![]() exist; Figure 2 displays plots of ![]() for different values of ![]() . For ![]() , there are two solutions: ![]() and ![]() , where ![]() . Since ![]() , we call ![]() and ![]() the inner and outer-radius catenoid, respectively. Figure 3 displays both catenoids, ![]() and ![]() , corresponding to ![]() and ![]() , respectively.

By inspection, we intuitively suspect that ![]() has a smaller surface area than ![](); however, this result must be proven rigorously. As such, we will prove Theorem (4.2), one of the main results of this paper; namely, that when $d < d^*$, the outer-radius catenoid ![]() is the solution to Plateau’s Problem for the Dirichlet boundary conditions depicted in Figure 1, while the inner-radius catenoid ![]() is not.
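Before the rigorous argument, the area comparison can be checked numerically for one separation. This is a hedged sketch, not the paper's method: it assumes unit rings at heights -d/2 and +d/2, the profile r(z) = c cosh(z/c), and the closed-form area pi*c*(d + c*sinh(d/c)) obtained by integrating 2*pi*r*sqrt(1 + r'^2) over the interval. The function names and the choice d = 1 are illustrative.

```python
import math

def residual(c, d):
    """Assumed boundary condition c*cosh(d/(2c)) = 1, written as residual = 0."""
    return c * math.cosh(d / (2.0 * c)) - 1.0

def bisect(f, a, b, tol=1e-12):
    """Bisection on [a, b], assuming f(a) and f(b) have opposite signs."""
    fa = f(a)
    while b - a > tol:
        m = 0.5 * (a + b)
        fm = f(m)
        if (fm > 0.0) == (fa > 0.0):
            a, fa = m, fm
        else:
            b = m
    return 0.5 * (a + b)

def catenoid_area(c, d):
    """Area of the surface of revolution r(z) = c*cosh(z/c), |z| <= d/2."""
    return math.pi * c * (d + c * math.sinh(d / c))

d = 1.0  # sample separation below the critical value
f = lambda c: residual(c, d)
c_inner = bisect(f, 0.1, 0.4)  # residual changes sign on [0.1, 0.4] for d = 1
c_outer = bisect(f, 0.4, 1.0)  # and again on [0.4, 1.0]

area_inner = catenoid_area(c_inner, d)
area_outer = catenoid_area(c_outer, d)
print("inner:", c_inner, area_inner)
print("outer:", c_outer, area_outer)
```

For this sample separation the outer-radius catenoid has the smaller area, which agrees with the intuition stated above; a single numerical case is of course no substitute for the proof.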

Second Variation of Area.

Lemma 4.1. This lemma is known as Weingarten’s Formula; for an additional proof, see7. Let M be a regular surface with local parametrization F, first fundamental form g, second fundamental form ![](), shape operator S, and Gauss map N. Then,

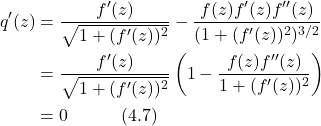

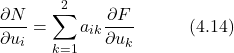

![Rendered by QuickLaTeX.com \[\frac{\partial N}{\partial u_i} = -\sum_{j=1}^2 S_{ij}\frac{\partial F}{\partial u_j}\]](https://nhsjs.com/wp-content/ql-cache/quicklatex.com-ab983a838186f3460c795126993449a0_l3.png)

Proof. Since ![](), it follows that ![](). Thus, we can write

for some coefficients ![](). Furthermore, we differentiate ![]() with respect to ![]() to find

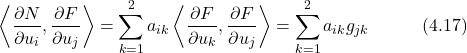

![]()

However, since ![]() by Definition (2.7), we simplify equation (4.15) and have

![]()

But using equation (4.14), we also have

because ![](), by Definition (2.4). Thus, we compare ![]() in equations (4.16) and (4.17) and conclude

However, with respect to the coordinate basis ![](), equation (4.18) expands to the matrix equation

![]()

![]()

for ![](). By definition, equation (4.20) implies

![]()

Thus, plugging equation (4.21) into equation (4.14), we get our desired result.
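For readers who want the chain of steps in one place, the argument can be sketched as follows. The symbols $a_{ik}$ (expansion coefficients) and $h_{ij}$ (entries of the second fundamental form) are hypothetical names chosen for this sketch, and it assumes that the shape operator is defined through $S_{ij} = \sum_k h_{ik} g^{kj}$; the paper's own Definitions (2.7) and (2.8) may use different symbols or index placement.

```latex
\begin{align*}
\langle N, N\rangle = 1
  &\;\Longrightarrow\; \Big\langle \frac{\partial N}{\partial u_i}, N \Big\rangle = 0
  \;\Longrightarrow\; \frac{\partial N}{\partial u_i}
     = \sum_{k=1}^{2} a_{ik}\,\frac{\partial F}{\partial u_k},\\
\Big\langle N, \frac{\partial F}{\partial u_j}\Big\rangle = 0
  &\;\Longrightarrow\; \Big\langle \frac{\partial N}{\partial u_i},
       \frac{\partial F}{\partial u_j}\Big\rangle
   = -\Big\langle N, \frac{\partial^2 F}{\partial u_i\,\partial u_j}\Big\rangle
   = -h_{ij},\\
\sum_{k=1}^{2} a_{ik}\, g_{kj} = -h_{ij}
  &\;\Longrightarrow\; a_{ij} = -\sum_{k=1}^{2} h_{ik}\, g^{kj} = -S_{ij}.
\end{align*}
```

Substituting the last line into the expansion in the first line gives the stated formula for $\partial N / \partial u_i$.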

Lemma 4.2. Let ![]() be a family of regular surfaces with local parametrizations in the form of equation (3.1), first fundamental form ![](), and shape operator ![](), such that ![](). Then,

(a) ![]()

(b) ![]()

where ![]() is the surface gradient of ![]() for smooth ![]().

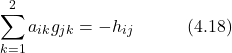

Proof. Since ![]() from equation (3.6), the result for (a) trivially follows. As for (b), first consider ![](). By equation (3.5), we have

![Rendered by QuickLaTeX.com \[g'(s)_{ij} = \left\langle \frac{\partial V}{\partial u_i},\frac{\partial \stackrel{\sim}F}{\partial u_j} \right\rangle + \left\langle \frac{\partial \stackrel{\sim}F}{\partial u_i},\frac{\partial V}{\partial u_j} \right\rangle\]](https://nhsjs.com/wp-content/ql-cache/quicklatex.com-751c79bbf83ba60cb9139d953c79eb31_l3.png)

for ![](). Therefore, we compute ![]() as

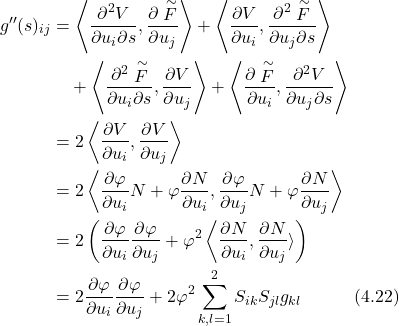

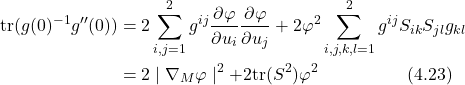

Thus, from equation (4.22), we conclude

as desired. Note that, depending on the sign convention adopted, the sign of the ![]() trace term may be flipped in other references; the sign used here follows the derivation above.

For a minimal surface M to be the solution to Plateau’s Problem, we must confirm that M is indeed area-minimizing while satisfying the Minimal Surface Equation; this implies that the area of M would satisfy the “second derivative test.” As such, we are brought to the definition of the Second Variation of Area.

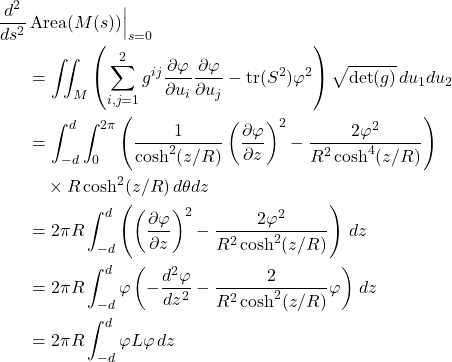

Theorem 4.1 (Second Variation of Area). Let ![]() be a family of regular surfaces with local parametrizations in the form of equation (3.1), first fundamental form ![](), and shape operator ![](), such that ![](), where ![]() is a minimal surface. Then, the Second Variation of Area of ![]() is given as

![]()

for all smooth ![]() such that ![]() on ![]().

Remark 4.1. A minimal surface ![]() is locally minimizing if

![]()

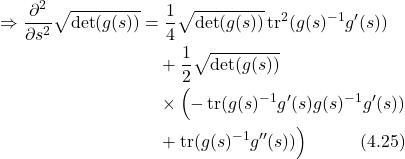

Proof. From (![]()), we find

![]()

Thus, we must find ![](). Recall from equation (3.4) that we have

![]()

This result comes simply from ![]().

![]()

because ![]() since ![]() is a minimal surface. Finally, we substitute Lemmas (4.2, a) and (4.2, b) into equation (4.25) and obtain our desired result.

Now, we will prove the main result of this section, Theorem (4.2).

Outer-Radius Catenoid Stability

Theorem 4.2 (Outer-Radius Catenoid Stability). Consider Plateau’s Problem for the boundary curves ![]() consisting of two coaxial unit discs in $\mathbb{R}^3$, as seen in Figure 1, such that ![]() for ![](), where ![]() is defined in equation (4.13). Consequently, there exist two catenoids ![]() and ![]() such that ![](), and ![](). Then, ![]() is stable, while ![]() is unstable; i.e., ![]() is the unique solution to Plateau’s Problem.

Proof. We present a self-contained proof of Theorem (4.2); for a more concise proof using Sturm-Liouville theory, see12. We will start by proving that Area(![]()) < Area(![]()) and then prove that ![]() is indeed the unique solution to Plateau’s Problem by using Theorem (4.1).

First, note that ![]() is parametrized by equation (4.1), where

![]()

from equation (4.9). Further, by equation (4.2), the first fundamental form for ![]() is given by

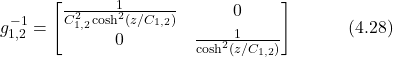

and its inverse as

Therefore, we also have

![]()

and

by equation (4.5). Thus, we compute

![]()

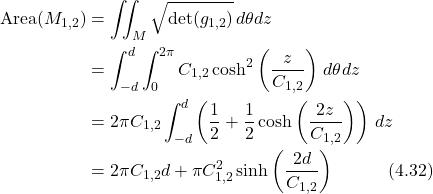

Now, by Remark (2.4), we calculate Area(![]()) as

Define ![]() and function ![]() such that

![]()

According to equation (4.10), we must have that

![]()

and consequently

![]()

These two equalities hold because ![]() in equation (4.10). Therefore, we simplify ![]() as

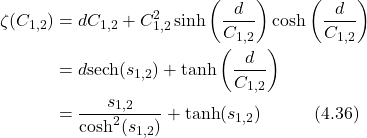

by equations (4.34) and (4.35). Define a function ![]() such that

![]()

where

![]()

according to equation (4.35). Also note that equation (4.38) implies that

![]()

Thus, we find ![]() as

Hence, from equations (4.36) and (4.40), we have that ![](). This implies that

![]()

since ![](), where ![](). However, equation (4.41) implies that the difference between ![]() and ![](), denoted as ![](), is strictly decreasing as ![]() increases. As such, we bound this difference by considering the endpoints in the interval ![](). Note that in equation (4.38), the maximum value of ![]() occurs when ![]() and thus when ![](). Therefore, at ![](), we have that ![]() and hence ![](). However, in our interval for ![](), we have ![](), and so ![]() by equation (4.41). As ![]() increases, ![]() decreases; equivalently, as ![]() decreases, ![]() increases. Thus, we conclude that ![](). However, by equation (4.32), we also note

![]()

By equation (4.42), it therefore suffices to show that if ![]() is area-minimizing, it then must be the unique solution to Plateau’s Problem.

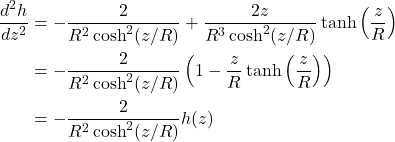

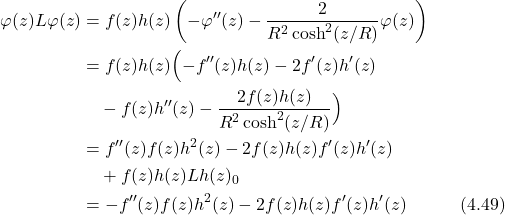

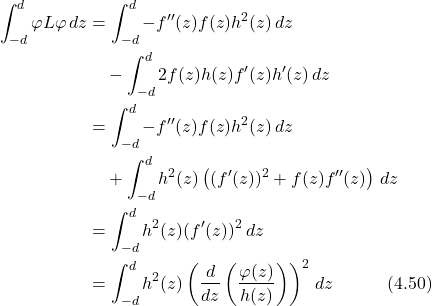

Let ![]() and ![](). Now consider the Second Variation of Area for ![](). Let ![]() be a variational function such that ![]() and ![](). That is, we only consider axisymmetric variations and not rotational ones; this restriction is necessary, as rotational variations will not distinguish stability between the catenoids. We define a ![]() instead of ![]() since M is rotationally symmetric and surface perturbations will therefore be independent of ![](). Furthermore, non-axisymmetric perturbations will result in apparent stability for both catenoids, which is unfavorable for our proof13.

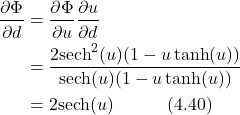

By Theorem (4.1), we therefore have

where ![]() is defined as

![]()

In a similar manner to earlier, define ![](). Now, consider the function

![]()

Then we find the derivatives of ![]() as

![]()

Observe now that equation (4.47) implies that ![](). Furthermore, on the interval ![](), the function ![]() is strictly positive. This is because ![]() for all ![](), and thus ![]() at the unique solution ![](). However, since ![](), we have ![](), so ![]() for all ![]() (since ![]()). This implies that ![](). Thus, for some continuous function ![]() such that ![](), define

![]()

Then, by equation (4.44), consider

Now we substitute equation (4.49) into equation (4.43).

Here we used integration by parts on ![](). Therefore, by equation (4.50), we conclude that

![]()

and thus, by Remark (4.1), it follows that M is a solution to Plateau’s Problem. Furthermore, as we have proven in equation (4.42), ![](), while satisfying the Minimal Surface Equation, cannot be a solution. An argument similar to the one made in equation (4.50) cannot be made for ![](); since ![](), the function ![]() has a root and thus ![]() is not a continuous function. Therefore, ![]() is the unique solution; the outer-radius catenoid is stable and area-minimizing while the inner-radius catenoid is not.
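The sign of the second variation can be probed numerically for one axisymmetric test function. This sketch makes several assumptions that are not taken from the text above: unit rings at heights -d/2 and +d/2, the profile r(z) = c cosh(z/c), and the standard quadratic form Q(f) = 2*pi * integral of [c f'(z)^2 - 2 f(z)^2 / (c cosh^2(z/c))] dz, which follows from the area element c cosh^2(z/c) and squared principal curvatures summing to 2 / (c^2 cosh^4(z/c)). The test function cos(pi z / d) and all names are illustrative.

```python
import math

def residual(c, d):
    """Assumed boundary condition c*cosh(d/(2c)) = 1, written as residual = 0."""
    return c * math.cosh(d / (2.0 * c)) - 1.0

def bisect(f, a, b, tol=1e-12):
    """Bisection on [a, b], assuming f(a) and f(b) have opposite signs."""
    fa = f(a)
    while b - a > tol:
        m = 0.5 * (a + b)
        fm = f(m)
        if (fm > 0.0) == (fa > 0.0):
            a, fa = m, fm
        else:
            b = m
    return 0.5 * (a + b)

def second_variation(c, d, f, df, n=4000):
    """Composite midpoint rule for the assumed quadratic form Q(f)."""
    h = d / n
    total = 0.0
    for k in range(n):
        z = -0.5 * d + (k + 0.5) * h
        total += c * df(z) ** 2 - 2.0 * f(z) ** 2 / (c * math.cosh(z / c) ** 2)
    return 2.0 * math.pi * total * h

d = 1.0  # sample separation below the critical value
g = lambda c: residual(c, d)
c_inner = bisect(g, 0.1, 0.4)
c_outer = bisect(g, 0.4, 1.0)

# Test variation vanishing on both rings, with its derivative.
f = lambda z: math.cos(math.pi * z / d)
df = lambda z: -(math.pi / d) * math.sin(math.pi * z / d)

q_outer = second_variation(c_outer, d, f, df)
q_inner = second_variation(c_inner, d, f, df)
print("outer:", q_outer)
print("inner:", q_inner)
```

A negative value for the inner-radius catenoid exhibits an area-decreasing variation, which is enough to show instability; a positive value for the outer-radius catenoid is only consistent with stability for this one test function, and the general statement relies on the proof above.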

Minimal Graphs

This section introduces the necessary background on minimal graphs, a specific class of minimal surfaces; furthermore, we will prove the main result on the uniqueness of minimal (planar) graphs. First, however, we must introduce the definition of minimal graphs.

Definition 5.1 (Minimal Graph). Let ![]() be a bounded domain. Then, a regular surface ![]() is called a minimal graph if

![]()

is a minimal surface, where ![]().

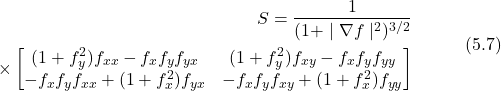

In a similar manner to the Minimal Surface Equation, we desire to find a generalized equation to determine if a regular surface M is a minimal graph; to do so, we must solve the Minimal Surface Equation for M. Let ![]() be a regular surface in the form of Definition (5.1). Then, the local parametrization of M is

![]()

Thus, according to Definition (2.4), the first fundamental form of M is given as

![]()

and we also have

![]()

Therefore, we compute the inverse of g as

![]()

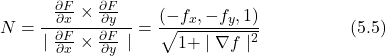

By Definition (2.5), we find the Gauss map N as

and by Definition (2.7), the second fundamental form is then

![]()

Therefore, by Definition (2.8), we compute the shape operator as

Finally, by Definition (2.9), the mean curvature is then

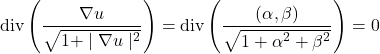

Now, by Theorem (3.1), we are motivated to define the Minimal Graph Equation.

Theorem 5.1. For a bounded domain ![](), a regular surface ![]() in the form of ![]() is a minimal graph if ![]() for a function ![]().
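A quick numerical sanity check of the equation is possible on a classical example. The sketch below assumes the expanded form (1 + u_y^2) u_xx - 2 u_x u_y u_xy + (1 + u_x^2) u_yy = 0, which is the standard minimal graph equation in the plane but may differ in presentation from the form used in Theorem (5.1). It evaluates the left-hand side with central differences for Scherk's surface u = log(cos y / cos x), a known minimal graph, and for a paraboloid, which is not minimal. The function names, sample point, and step size are illustrative.

```python
import math

def minimal_graph_residual(u, x, y, h=1e-3):
    """Central-difference estimate of
    (1 + u_y^2) u_xx - 2 u_x u_y u_xy + (1 + u_x^2) u_yy at (x, y)."""
    ux = (u(x + h, y) - u(x - h, y)) / (2.0 * h)
    uy = (u(x, y + h) - u(x, y - h)) / (2.0 * h)
    uxx = (u(x + h, y) - 2.0 * u(x, y) + u(x - h, y)) / h ** 2
    uyy = (u(x, y + h) - 2.0 * u(x, y) + u(x, y - h)) / h ** 2
    uxy = (u(x + h, y + h) - u(x + h, y - h)
           - u(x - h, y + h) + u(x - h, y - h)) / (4.0 * h ** 2)
    return (1.0 + uy ** 2) * uxx - 2.0 * ux * uy * uxy + (1.0 + ux ** 2) * uyy

# Scherk's surface, defined where cos(x) and cos(y) are positive.
scherk = lambda x, y: math.log(math.cos(y) / math.cos(x))
# A paraboloid, used as a non-minimal comparison.
paraboloid = lambda x, y: x * x + y * y

res_scherk = minimal_graph_residual(scherk, 0.3, -0.2)
res_paraboloid = minimal_graph_residual(paraboloid, 0.3, -0.2)
print("Scherk residual:", res_scherk)
print("paraboloid residual:", res_paraboloid)
```

The Scherk residual is zero up to discretisation error, while the paraboloid residual is clearly nonzero, so the finite-difference test distinguishes the two cases.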

Now that we have the Minimal Graph Equation defined, we will prove the main result of this section; specifically, we will conclude the uniqueness of minimal graphs, in Theorem (5.3), and the uniqueness of planar graphs for boundaries confined in a plane in $\mathbb{R}^3$, in Corollary (5.1).

First, consider Plateau’s Problem for the following:

Let ![]() be a bounded domain with smooth curve ![]() above ![]() such that ![]()

Consider then a regular surface ![]() such that ![]()

where ![](). Then, we will prove in Theorem (5.3) that ![]() is unique. However, we will need to first explore the Maximum Principle for linear elliptic equations.

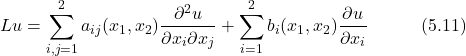

Maximum Principle. Let ![]() be a bounded domain. Define the function ![]() and the linear operator ![]() such that

where ![]() are smooth functions. Then, ![]() is called elliptic if the symmetric matrix

![]()

for all ![](). ![]() is positive definite; i.e., ![]() ![](). When we have ![](), we have an elliptic equation, and thus we can apply the Maximum Principle.

Theorem 5.2 (Maximum Principle). Note here that Theorem (5.2) is actually the weak Maximum Principle; for a proof of the strong version with a generalization to ![](), see14. Let ![]() be a bounded domain with the smooth function ![]() and operator ![]() as defined in equation (5.11). Then, if ![](), we have

![]()

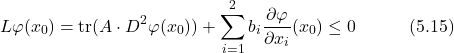

Proof. The following proof is adapted from Colding and Minicozzi15. We prove this by contradiction. Suppose a function ![]() reaches a global maximum inside ![](); i.e., there exists a ![]() such that ![]() attains a maximum. Then, by the first derivative test, we have ![](). Furthermore, by the second derivative test, we also have

![]()

where ![]() denotes the Hessian matrix. Therefore, we find

where ![]() is defined in equation (5.12). In general, if we have an $n \times n$ symmetric positive definite matrix A and negative semidefinite matrix B, we have that tr(AB) $\le$ 0. The proof for this comes simply when we define a matrix ![]() and consider the definiteness of C, where ![]() for all ![](). Thus, from equation (5.11), we have

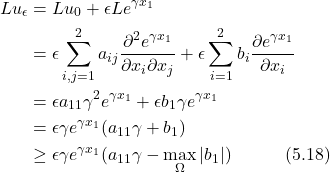

Now consider a function ![]() such that ![](). Define

![]()

where ![]() and ![]() are arbitrary real numbers. Then, suppose that ![]() attains a maximum at ![](). Then, by equation (5.15), we find

![]()

However, we want to show that ![]() to arrive at a contradiction; also consider

However, we also have

![]()

for some ![](), since A is positive definite. Therefore, we use equation (5.19) and rewrite equation (5.18) as

![]()

Now, choose ![]() such that

![]()

so equation (5.20) becomes

![]()

However, comparing equations (5.22) and (5.17), we arrive at a contradiction. As such, we conclude

![]()

However, also note that as ![]() in equation (5.23), we arrive at

![]()

as desired. To prove the same argument for the minimum, we instead choose ![](). Also, then, note that ![](), so we have ![](). However, we then have ![](), which must then be strictly negative according to equation (5.22). Thus, we arrive at a contradiction and the result follows. For a more detailed proof, see16,17.
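The statement can be illustrated on the simplest elliptic operator. The sketch below is an assumption-laden toy example rather than the general operator of equation (5.11): it takes the Laplacian on the unit square, discretises it with the five-point stencil, and solves the resulting system by Gauss-Seidel sweeps. The boundary data, grid size, and function names are arbitrary choices made for illustration.

```python
import math

def solve_laplace(boundary, n=15, sweeps=1000):
    """Gauss-Seidel sweeps for the five-point discrete Laplace equation.

    Returns the grid and the list of boundary values that were imposed.
    """
    pts = [k / (n - 1) for k in range(n)]
    u = [[0.0] * n for _ in range(n)]
    edge = []
    for i in range(n):
        for j in range(n):
            if i in (0, n - 1) or j in (0, n - 1):
                u[i][j] = boundary(pts[i], pts[j])
                edge.append(u[i][j])
    start = sum(edge) / len(edge)  # interior guess inside the boundary range
    for i in range(1, n - 1):
        for j in range(1, n - 1):
            u[i][j] = start
    for _ in range(sweeps):
        for i in range(1, n - 1):
            for j in range(1, n - 1):
                # Each interior value becomes the average of its four neighbours.
                u[i][j] = 0.25 * (u[i - 1][j] + u[i + 1][j]
                                  + u[i][j - 1] + u[i][j + 1])
    return u, edge

n = 15
u, edge = solve_laplace(lambda x, y: math.sin(3.0 * x) + y * y, n=n)
interior = [u[i][j] for i in range(1, n - 1) for j in range(1, n - 1)]
print("boundary range:", min(edge), max(edge))
print("interior range:", min(interior), max(interior))
```

Because every interior value is an average of its neighbours, the interior range stays inside the boundary range, which is the discrete counterpart of the maximum and minimum being attained on the boundary.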

Uniqueness of Minimal Graphs

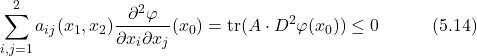

Lemma 5.1. Let the functions ![]() and ![]() satisfy the Minimal Graph Equation, with the function ![](). Then,

![]()

for some symmetric matrix ![]() in the form of equation (5.12).

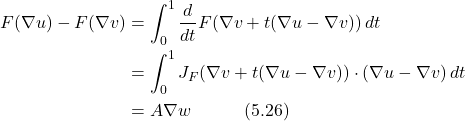

Proof. Define a map ![]() such that

![]()

Then, since ![]() and ![]() are both minimal surfaces, we therefore have that ![](). Now, consider

where A is defined as the matrix

![]()

Therefore, according to equations (5.26) and (5.27), we have

![]()

Now it suffices to show that ![]() is positive definite. To do so, we will prove that ![]() is positive definite. Let ![]() where

![]()

Then, we compute ![]() as

Therefore, by equation (5.30), we calculate ![]() as

![]()

However, from equation (5.31), we have

![]()

and

![]()

By equations (5.32) and (5.33), it therefore follows that ![]() is positive definite. Consequently, by equation (5.27), A is also positive definite, since the integral of a positive definite matrix over an interval of positive length is again positive definite; for a brief proof, consider ![](). Thus, our desired result immediately follows from equation (5.28).
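The positive-definiteness step can be made concrete as follows. This is a sketch under assumptions: it supposes the map in the proof is $\Phi(p) = p/\sqrt{1+|p|^2}$ for $p = (p_1, p_2)$, that A is the integral of its Jacobian along the segment joining the two gradients, and it uses the hypothetical names $\Phi$, $W$, $u_1$, $u_2$, and $\xi$; the paper's equations (5.26) to (5.33) may be written with different symbols.

```latex
\[
\Phi(p) = \frac{p}{\sqrt{1+|p|^2}}, \qquad
D\Phi(p) = \frac{1}{W^{3}}
\begin{pmatrix} 1 + p_2^2 & -p_1 p_2 \\ -p_1 p_2 & 1 + p_1^2 \end{pmatrix},
\qquad W = \sqrt{1+|p|^2},
\]
\[
\operatorname{tr} D\Phi(p) = \frac{2+|p|^2}{W^{3}} > 0, \qquad
\det D\Phi(p) = \frac{1}{W^{4}} > 0,
\]
\[
\xi^{T} A\, \xi
= \int_0^1 \xi^{T} D\Phi\big(\nabla u_2 + t(\nabla u_1 - \nabla u_2)\big)\, \xi \, dt > 0
\quad \text{for all } \xi \neq 0 .
\]
```

A symmetric 2 by 2 matrix with positive trace and positive determinant has two positive eigenvalues, so $D\Phi(p)$ is positive definite for every $p$, and integrating a positive integrand over $[0,1]$ keeps the quadratic form positive.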

With Lemma (5.1) proven, we can move on to the proof for the uniqueness of minimal graphs, the main result of this section.

Theorem 5.3 (Uniqueness of Minimal Graphs). Let ![]() be a bounded domain and curve ![]() in the form of equation (5.9). If ![]() is a minimal graph in the form of equation (5.10), then ![]() is unique.

Proof. Let ![]() and ![]() be minimal graphs with corresponding functions ![]() and ![](), respectively. Then, define a function ![](). By Lemma (5.1), we have

![]()

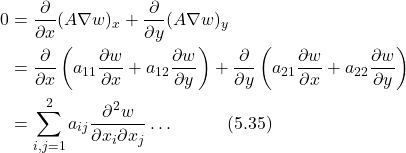

for some positive definite matrix ![](). Expanding equation (5.34), we find

where ![]() and ![](). However, observe now from equation (5.35) that ![](), where ![]() is in the form of equation (5.11). Note here that the maximum principle invoked actually requires uniform ellipticity, a property which the minimal graph equation satisfies. On the compact domain in consideration, the gradient is bounded, ensuring uniform ellipticity. See18 for more.

Thus, according to Theorem (5.2), we have

![]()

But ![](), so ![]() for all ![](). Therefore, according to equation (5.36), we find

![]()

Hence, by equation (5.37), it follows that ![](), so ![](), and thus ![]().

Note, however, that Theorem (5.3) only proves the uniqueness of minimal graphs and not the existence. For a proof of existence in ![](), see19. Nevertheless, for minimal planar graphs, both uniqueness and existence hold, according to Corollary (5.1).

Corollary 5.1 (Uniqueness of Minimal Planar Graphs). If ![]() is a planar curve bounding a convex domain, then the associated minimal graph M lies in the same plane; i.e., only the trivial, planar solution for M exists.

Proof. Suppose ![]() lies entirely in plane P, such that P is of the form

![]()

for arbitrary coefficients ![](), and ![](). Then, we have that a function ![]() lies entirely in ![]() for certain ![](), and ![](). Let ![]() correspond to the minimal surface ![]() given in the form of equation (5.10). Then we test if ![]() satisfies the Minimal Graph Equation:

Thus, by equation (5.38), M is a minimal graph. Moreover, by Theorem (5.3), it follows that M is unique. Therefore, the only minimal planar graph is the trivial solution where ![]().
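The verification step in this proof can be written out explicitly. The sketch assumes the plane is the graph of an affine function $u(x, y) = ax + by + c$ with hypothetical coefficient names $a$, $b$, $c$, and it shows the check in both the expanded and the divergence form of the minimal graph equation, since the form used in equation (5.38) is not visible here.

```latex
\[
u(x, y) = a x + b y + c
\;\Longrightarrow\;
u_{xx} = u_{xy} = u_{yy} = 0
\;\Longrightarrow\;
(1 + u_y^2)\,u_{xx} - 2\,u_x u_y\,u_{xy} + (1 + u_x^2)\,u_{yy} = 0,
\]
\[
\operatorname{div}\!\left(\frac{\nabla u}{\sqrt{1+|\nabla u|^2}}\right)
= \operatorname{div}\!\left(\frac{(a, b)}{\sqrt{1 + a^2 + b^2}}\right) = 0 .
\]
```

In either form the equation holds because the gradient of an affine function is constant, so the plane is itself a minimal graph and uniqueness then rules out any other solution with the same boundary.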

Acknowledgments

The author would like to express sincere appreciation to his mentor Dr. Tz-Kiu Aaron Chow for presenting this research topic and motivation behind the proofs, guiding the overall research process, and settling any confusion in the abundance of questions regarding the subject.

References

1. B. Lawson, Lectures on Minimal Submanifolds, Monografias de Matemática, No. 14, Instituto de Matemática Pura e Aplicada (IMPA), Rio de Janeiro, 1973. https://impa.br/wp-content/uploads/2017/04/Mon_14.pdf

2. F. Schwartz, Existence of outermost apparent horizons with product of spheres topology, Communications in Analysis and Geometry. Vol. 16, pg. 799–817, 2008. https://arxiv.org/abs/0704.2403

3. P.W. Bates, G.W. Wei, and S. Zhao, Minimal Molecular Surfaces and Their Applications, J. Comput. Chem. Vol. 29, pg. 380–391, 2008. https://doi.org/10.1002/jcc.20796

4. M. Emmer, Minimal Surfaces and Architecture: New Forms, Nexus Network Journal. Vol. 15(2), 2012. https://core.ac.uk/download/pdf/204352959.pdf

5. M. Ito and T. Sato, In-situ observation of a soap film catenoid: A simple educational physics experiment, Eur. J. Phys. Vol. 31, no. 2, pg. 357–365, 2010. https://arxiv.org/pdf/0711.3256

6. R. E. Goldstein, A. I. Pesci, C. Raufaste, and J. D. Shemilt, Geometry of catenoidal soap film collapse induced by boundary deformation, Phys. Rev. E. Vol. 104, 035105, 2021. https://journals.aps.org/pre/pdf/10.1103/PhysRevE.104.035105

7. M. P. do Carmo, Differential Geometry of Curves and Surfaces, Prentice-Hall, Englewood Cliffs, NJ, 1976.

8. M. Shiffman, On surfaces of stationary area bounded by two circles, or convex curves, in parallel planes, Ann. of Math. Vol. 63, pg. 77–90, 1956. https://www.jstor.org/stable/1969991

9. R. Schoen, Uniqueness, Symmetry, and Embeddedness of Minimal Surfaces, J. Differential Geom. Vol. 18, pg. 791–809, 1983. https://math.jhu.edu/~js/Math748/schoen.symmetry.pdf

10. M. Ito and T. Sato, In-situ observation of a soap film catenoid: A simple educational physics experiment, Eur. J. Phys. Vol. 31, no. 2, pg. 357–365, 2010. https://arxiv.org/pdf/0711.3256

11. R. E. Goldstein, A. I. Pesci, C. Raufaste, and J. D. Shemilt, Geometry of catenoidal soap film collapse induced by boundary deformation, Phys. Rev. E. Vol. 104, 035105, 2021.

12. J. Eggers and T. F. Dupont, Stability and Oscillations of a Catenoid Soap Film, American Journal of Physics. Vol. 49(4), pg. 334–343, 1981. https://jfuchs.hotell.kau.se/kurs/amek/prst/15_sofi.pdf

13. S. Akbari, J.M. Hill, and F. van de Ven, Catenoid Stability with a Free Contact Line, SIAM Journal on Applied Mathematics. Vol. 75, pg. 2110–2127, 2015. https://doi.org/10.1137/151004677

14. P. Pucci and J. Serrin, The strong maximum principle revisited, J. Differential Equations. Vol. 196, pg. 1–66, 2004. https://pucci.sites.dmi.unipg.it/lavori/grado.pdf

15. T. H. Colding and W. P. Minicozzi II, A Course in Minimal Surfaces, Graduate Studies in Mathematics, Vol. 121, American Mathematical Society, Providence, RI, 2011.

16. P. Pucci and J. Serrin, The strong maximum principle revisited, J. Differential Equations. Vol. 196, pg. 1–66, 2004. https://pucci.sites.dmi.unipg.it/lavori/grado.pdf

17. T. H. Colding and W. P. Minicozzi II, A Course in Minimal Surfaces, Graduate Studies in Mathematics, Vol. 121, American Mathematical Society, Providence, RI, 2011.

18. D. Gilbarg and N. S. Trudinger, Elliptic Partial Differential Equations of Second Order, 2nd ed., Springer, Berlin, 2001.

19. H. Jenkins and J. Serrin, The Dirichlet problem for the minimal surface equation in higher dimensions, J. Reine Angew. Math. Vol. 229, pg. 170–187, 1968. https://eudml.org/doc/150841