Abstract

As AI is increasingly used in surgery, understanding public attitudes is critical to its successful integration and adoption. Despite the rising use of robotic surgical techniques, existing research has methodological shortcomings: data were collected in a single country, participants’ demographic characteristics were not compared with one another within the same sample, and no distinction was drawn between robot-assisted and fully autonomous surgery. These shortcomings make it difficult to determine how gender, age, socioeconomic status, race, and region of residence affect public acceptance of robotic surgical methods. This study examined how demographic variables (gender, age, income, ethnicity, and geographic location) shape public comfort with and acceptance of fully autonomous AI surgery, using an online survey of 164 individuals from six continents. Participants’ attitudes and comfort levels were evaluated quantitatively and analyzed across these demographic dimensions. The key findings revealed marked demographic disparities: men were roughly 2.3 times as likely as women to report being comfortable with AI surgery (41.38% of male respondents vs. 17.71% of female respondents), and more affluent individuals were more open to it. Geographic variation was substantial, with 67% of respondents from Asia reporting positive attitudes toward AI surgery compared with a more reserved 44% of respondents from Europe. Age also had an effect: older respondents were more optimistic about outcomes but more fearful of complete automation. The cross-sectional design and convenience sampling via an online platform may limit generalizability to broader populations, and the results should be interpreted within the context of these limitations. These findings suggest that efforts to adopt AI surgery need to account for demographic differences in order to achieve widespread acceptance.

Keywords: Artificial Intelligence Surgery, Autonomous Surgical Systems, Healthcare Technology Acceptance, Public Perception Demographics.

Introduction

Background and Context

AI development is moving steadily toward more autonomous and independent operation. Artificial intelligence (AI) continues to grow and integrate into fields such as education, finance, transportation, entertainment, and healthcare. As AI research expands, it is important to understand the public’s view of these advancements. For people to trust AI, it must also be proficient in the tasks it performs on their behalf.

Established theoretical frameworks rely on pre-existing constructs that have been validated with standard measures. Requiring such constructs and measures would have restricted the purpose of this study, which is demographic exploration. At the time this study was completed, no previous study was found that examined acceptance of a fully autonomous surgical AI system across continents. Therefore, no predefined construct standards were used to develop the instrument; an inductive, descriptive method was used instead.

For AI to be used in surgery, it needs to excel in several areas. Autonomous surgical practice is not a singular technology but a complicated system of subsystems, each with its own weak points. The technical intricacy of these integrated subsystems challenges the simplified public image of a single “robot” performing surgery and suggests that perception is likely to be linked with system-wide credibility [1]. There is empirical evidence to suggest that public trust in surgical AI rests on people’s belief that they can rely on the technology to manage the overall surgical process, from preoperative through postoperative support.

Autonomous AI surgery offers advantages over human surgeons: lower costs, no hand tremor, no surgeon fatigue, and an individualized approach to treating patients. It also provides scalability across regions. Maintaining or improving the quality of care is vital as AI develops and lowers costs [2].

Problem Statement and Rationale

While the potential benefits are staggering, effective integration of surgical AI relies fundamentally on public trust in the technology. Direct collection of perceptual data from the general population is necessary to assess and properly evaluate the public’s perception of this new and emerging technology.

Significance and Purpose

By supplying empirical, cross-continental data on public acceptance of fully autonomous surgical AI, this study fills an important void in the literature and lays the groundwork for equitable deployment strategies, policy formulation, and future theory-based research.

This research therefore establishes a preliminary demographic map of acceptance that gives future researchers a base for hypothesis-driven, framework-grounded research. It also provides actionable information to healthcare institutions, policy makers, and AI developers looking to create equitable, demographically specific pathways for integrating surgical AI systems into practice.

Objectives

The goal of this study is to find out what people think about fully autonomous AI surgery. Three objectives guide the research:

(1) to measure the overall level of comfort across the entire sample with allowing robots to perform every aspect of a surgical procedure autonomously; (2) to examine how demographic characteristics (i.e., gender, age, income, race, and geographic region) relate to differences in public attitudes toward and acceptance of surgical procedures carried out solely by machines; and (3) to determine whether respondents who report greater familiarity with, or interest in, AI show correspondingly higher acceptance of robotic surgical assistance.

Scope and Limitations

This cross-sectional online survey measured the public’s views at a single point in time. Those views may change as surgical AI becomes more commonplace, which this survey could not capture. The convenience sampling used to recruit participants through SurveySwap introduces bias: all respondents volunteered for the survey and are likely to be more engaged with technology than people who did not respond. The sample also leans toward Asia and North America, which contributed more respondents than other regions. Because of this regional imbalance, comparisons across geographic regions cannot be treated as conclusive and should be interpreted with caution. The English-only survey may also have produced differences in how respondents from the six regions understood the concepts of comfort, trust, and autonomy. All data were self-reported; individuals may therefore interpret those concepts differently depending on their cultural background.

Methodological Overview

To address these objectives, an online cross-sectional survey was conducted using data from 164 people across six continents after approval from the IRB.

Problem Statement – A Second, Related Problem: Data Protocols

The absence of protocols for AI data collection and sharing in the healthcare industry is an area of concern [3]. As a case in point, both the OPTIMAM dataset and the Digital Database for Screening Mammography (DDSM) are extensive, publicly available datasets of well-selected and well-annotated mammograms, and they are used extensively to train and evaluate deep learning models [4,5]. These datasets are significant because they are celebrated exceptions. Their existence supports the case for sharing data across jurisdictions while simultaneously highlighting the problem: most medical fields lack such curated, consented, and standardized resources, which leaves a patchwork and potentially biased foundation for building general-purpose surgical AI.

Literature Review

Current Status of AI in Healthcare

In recent years, AI-assisted healthcare algorithms have become more common for analyzing and interpreting medical imaging data (such as X Rays, MRIs and CT Scans) allowing for faster and more accurate diagnoses than ever before.

Drastic progress is being made in numerous fields of health care due to AI. AI is employed in medical diagnostics and imaging for analyzing medical images using deep learning (DL) and computer vision algorithms, improving diagnostic accuracy and speeding up the identification of ailments like cancer or fractures.

By utilizing machine learning and predictive analytics to accelerate the processes of identifying new drug candidates (de novo) and repurposing already approved drugs, the total time and cost involved in drug discovery has decreased greatly. This progression supports the creation of AI-driven preoperative pharmacologic plans in the context of surgery.

AI-based personalized medicine has the potential to provide a treatment plan tailored to a patient’s individual genetic profile and medical history. Data mining, genomic analysis, and machine learning are used to analyze that profile and history, which has improved and better targeted therapeutic outcomes. The same potential extends to tailoring surgical approaches to each patient’s individual risk profile.

Predictive analytics covers functions that predict patient outcomes and the risk of developing a specific disease from an individual patient’s data; these outputs can support initial risk stratification for that patient and provide evidence-based decision support for surgery. Internet of Things (IoT) devices and machine learning are used in AI-based patient monitoring and care systems to track vital signs in real time, enhance patient safety, and identify issues early. To optimize surgical accuracy and clinical outcomes, AI in robotic surgery must combine robotics, computer vision, and machine learning. Big data analytics and epidemiological modeling are used in disease outbreak prediction to track and forecast disease spread and to support timely public health planning and intervention. In electronic health record (EHR) management, data mining and natural language processing (NLP) extract clinical observations and improve clinical decision-making and data accessibility. Telemedicine and remote care platforms, which use NLP, ML, and video analytics, provide virtual healthcare services and improve patient access and convenience. Clinical decision support systems use expert systems, machine learning, and NLP to help medical professionals make evidence-based recommendations, improving patient outcomes and the standard of care. Lastly, AI-powered health chatbots use NLP, ML, and conversational AI to provide health information and symptom checking, offering continuous patient engagement and lessening the workload for medical professionals [6].

Preoperative, Intraoperative, Postoperative: Researchers believe that AI in automated surgery can be split into three domains: preoperative, intraoperative, and postoperative. Preoperative tasks include population screening strategies, early and accurate diagnosis, and preoperative risk prediction, all of which draw on clinical registries and electronic health records. Intraoperative tasks include intraoperative guidance, operative robotics, and education and training, using operative data and sensors. Finally, postoperative tasks include AI-led follow-up, effective discharge planning, and prediction of postoperative complications, all of which draw on imaging, biomarkers, and omics [7].

Public Perception of AI in Surgery

In a research study investigating public perceptions of autonomous robotic surgery, participants were solicited to estimate the percentage of a standard robotic surgery conducted autonomously, notwithstanding the lack of any FDA-approved autonomous surgical systems in the United States. The responses, which ranged from 0% to 100%, showed a clear overestimation of robotic surgery’s current capabilities. The median estimate was 31.5% and the mean was 38.4%.

The list of tasks above is significant because it counters the popular idea that “robots do surgery.” It underscores that autonomous surgery is not one technology but a highly complex integration of subsystems, any of which can be a point of failure. The public’s view is therefore likely to be shaped by how strongly people believe that AI can oversee this complex workflow as a whole rather than only a part of it.

In that study, respondents’ comfort with robotic surgical procedures varied considerably: 18% felt completely comfortable, 37% somewhat comfortable, 9% neutral, about 27% somewhat uncomfortable, and 10% completely uncomfortable.

As such, although many respondents appear familiar with robotic surgery, a substantial share expressed some discomfort. Taken together, these figures suggest that robotic surgery and automation in surgical care are only somewhat acceptable within this US-based convenience sample.

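The odds ratio (OR = …) measures the relationship between an exposure and an outcome by comparing the odds of the outcome occurring in an exposed group to the odds of it occurring in an unexposed group. An OR above 1 indicates higher odds in the exposed group, and an OR below 1 indicates lower odds.

The p-value (p = …) is the probability of obtaining results at least as extreme as those observed if there were no true effect. A low p-value indicates that the results are unlikely to be due to chance alone, and vice versa.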
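As a minimal sketch of the odds ratio definition above, the following Python snippet computes an unadjusted OR from a 2×2 table. The function name `odds_ratio` and all counts are hypothetical and chosen only for illustration; the ORs reported in the cited study come from multiple regression models, so they would not be reproduced by a simple calculation of this kind.

```python
def odds_ratio(exposed_yes, exposed_no, unexposed_yes, unexposed_no):
    """Unadjusted odds ratio from the four cells of a 2x2 table."""
    odds_exposed = exposed_yes / exposed_no        # odds of the outcome among the exposed
    odds_unexposed = unexposed_yes / unexposed_no  # odds of the outcome among the unexposed
    return odds_exposed / odds_unexposed

# Hypothetical counts (not from any study): 30 of 100 exposed respondents
# and 15 of 100 unexposed respondents report the outcome.
or_value = odds_ratio(exposed_yes=30, exposed_no=70, unexposed_yes=15, unexposed_no=85)
print(round(or_value, 2))
```

With these made-up counts the odds of the outcome are higher in the exposed group, so the ratio is above 1.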

Based on multiple regressions, individuals who lived in areas with a greater proportion of broadband subscriptions (OR = 1.13, p = 0.032) and who were older (OR = 1.03, p = 0.0061) tended to feel entirely uncomfortable with robotic surgery, while those who lived in ZIP codes with a greater median income tended to feel somewhat comfortable (OR = 1.03, p = 0.014) and were less likely to feel uncomfortable (OR = 0.57, p = 0.00078). Individuals who held an associate’s degree (OR = 4.36, p = 0.037) or who were male (OR = 5.02, p = 0.0010) tended to feel fully comfortable. When comfort was treated as a continuous variable, it correlated positively with being male (p = 0.0017), having an associate’s degree (p = 0.041), and living in an area of higher economic status (p = 0.0012), and negatively with identifying as a race or ethnicity other than white (p = 0.014) and with a higher percentage of area residents holding a broadband subscription (p = 0.035) [8].

However, all observations in that study were collected from attendees of the Minnesota State Fair, which limits the generalizability of the results to a larger, more heterogeneous population. Only a handful of demographic predictors were assessed, each in isolation, and attitudes toward completely autonomous surgery (as opposed to robot-assisted surgery) were not assessed. This leaves a critical gap in our understanding of how interrelated demographic factors affect willingness to accept surgery performed solely by autonomous technology.

Ethical, Legal, and Financial Concerns in AI-Assisted Surgery

Recent articles point to emerging ethical issues as AI and machine learning (ML) are increasingly used in the operating room. One of the primary issues is that AI algorithms lack transparency; they are sometimes called “black boxes” because even experts are not fully aware of how decisions are being made [9]. Such inexplicability is not merely a technical limitation; it poses a fundamental limit to public trust and informed consent. A patient cannot be expected to have confidence in a process that even the supervising surgeon cannot fully explain.

“Transparency” is most likely a core variable in public acceptability, which this survey directly addresses. For instance, a neural network built to predict surgical site infection produced predictions that ran counter to prevailing clinical knowledge, illustrating the limits of such systems [7]. Accountability is also an issue: if an AI recommendation results in a complication, it is unclear whether the developers or the surgeon are to blame. Furthermore, the data used are critical to the fairness and validity of AI predictions. Misleading results, particularly in vulnerable populations, can stem from incomplete or biased datasets and may even perpetuate existing healthcare disparities. Used properly, however, AI could reduce physician bias, studies indicate. Privacy is also a concern when patient information is used by private entities for profit [3]. Lastly, experts caution that deploying AI trained on culturally embedded data, e.g., the Western concept of beauty, risks imposing those values on members of other societies.

These ethical issues must be addressed so that AI can be used fairly and responsibly in surgery.

Legal and regulatory questions are among the major challenges to the complete integration of AI into surgery. Reporting guidelines such as SPIRIT-AI and CONSORT-AI were created to make the testing of AI in clinical trials as transparent and standardized as possible [10]. Legal responsibility is the central matter: if surgery performed by AI goes wrong, it is still unclear whether the surgeon or the developers are liable. As AI systems continue to evolve by learning from new data, regulators such as the FDA are lagging behind [10]. Some suggest a “surgeon-in-the-loop” approach to promote human control and limit liability. This proposed regulatory model is highly relevant to this study. It implies that public comfort with AI will not be a simple “yes” or “no” but will be highest for a hybrid approach in which a human expert retains ultimate control, a possibility that the survey questions were structured to investigate. To ensure that AI is used safely in medicine, law and hospital policy need to advance hand in hand with the technology.

AI surgery is expensive initially, but valuable long-term benefits may come from reduced manual labor and greater efficiency. Machine learning and natural language processing could be used to interpret and automate the analysis of complicated data, saving time and improving care. A comprehensive cost-benefit analysis is still lacking, however, especially for surgery. Some studies suggest that robot-assisted surgery saves money through shorter hospital stays, but others report conflicting results [9]. Since funding generally comes from institutions or individual scientists, major questions remain about who will pay for large-scale use of AI. Costs should fall as the technology matures, but in the meantime financial risk remains a major deterrent.

Morris et al. address the ethical concerns about surgical AI from the perspective of institutions and practitioners but do not provide empirical evidence on how patients and the public, across income levels and geographic areas, view or weigh these concerns. The lack of publicly available, demographically stratified data on the distribution of ethical concerns is a gap that this research aims to address, because institutional willingness to employ surgical AI and public willingness to accept it are two separate, potentially very different phenomena that span socioeconomic and cultural divides [9]. This economic reality is not merely an implementation issue; it also previews one of the overarching themes of this research. The benefits of AI surgery may be inaccessible to poorer patients and hospitals, an issue that could surface in the survey responses of participants from different socioeconomic groups.

These ethical considerations were instrumental in developing the survey tool for this research. The survey questions relating to data privacy, consent, and accountability were specifically developed to assess how the general population — as opposed to legal and medical professionals — view and allocate ethical responsibility among developers, surgeons, hospitals, and regulating authorities. The inclusion of a hypothetical situation of surgical failure in the survey was based on the accountability gap identified in the literature and will allow for empirical data collection regarding the distribution of public blame assigned to those who have developed autonomous systems that have resulted in negative consequences.

Regulation of Surgical AI Systems

Regulation of surgical AI systems requires a mature legal and ethical framework responsive to the speed of development of machine learning technology in high-risk clinical settings.

Trumbull explores legal and ethical concerns about autonomous weapons. Building on this, we can draw analogous conclusions for AI in surgery because of the high risk to human life. The comparison is powerful because it moves the discussion from the technical to the ethical and legal. It frames the central questions of this research in their starkest terms: How do we assign blame for a life-altering decision made by a machine? Public opinion on these questions of responsibility, as canvassed here, is crucial to framing good future regulation.

Building on analogies from Trumbull’s analysis of autonomous weapons, including legal tensions over decision-making, accountability, and compliance in international humanitarian law (IHL), surgical AI is an equally disruptive force for conventional human-performed procedures. IHL requires that responsibility and accountability rest with humans even when lethal force is exercised by autonomous systems after deployment, a norm that can be paralleled in medicine, where physicians must retain ultimate responsibility for results despite delegating work to algorithms.

Surgical AI systems based on reinforcement learning or real-time adaptation challenge conventional regulatory mechanisms, such as FDA approval, which have so far evaluated static, pre-programmed machines. Similarly, Trumbull identifies the difficulty of testing learning-enabled weapons that evolve beyond their initial test conditions. Surgical AI tools must therefore be monitored continuously to ensure consistent performance and patient safety in unpredictable clinical situations.

The “distributed knowledge” problem described in the context of war, in which accountability is spread among operators, planners, and programmers, maps directly onto multi-stakeholder responsibility in surgical AI, which involves hospitals, software firms, device makers, and surgeons. This diffusion of responsibility creates a “labyrinth of blame” for the public. If no one is unequivocally held responsible, trust can erode. This research investigates who the public holds responsible, an important finding for policymakers and hospitals seeking to build trustworthy AI systems.

The regulatory emphasis on human oversight directly informed the survey question on level of automation, which asked respondents to choose the highest level of AI autonomy in surgery that they would personally trust. By assessing public acceptance along a spectrum from partial to complete autonomy, this research provides data that are empirically relevant to the “human-on-the-loop” regulatory model and supplies a public-facing perspective that is currently absent from the legal and regulatory literature.

The “human-on-the-loop” oversight principle applied to autonomous weapons can also serve as a regulatory cornerstone, ensuring that surgical AI never undertakes irreversible actions without a means of human intervention and recovery. Lastly, to prevent ethical and accountability gaps of the kind warned against in the autonomous weapons literature, regulators must move toward adaptive legal norms that can cope with both the technical uncertainty and the moral complexity of AI-assisted surgery [10].

While these regulatory proposals rest on sophisticated principles, they lack empirical support regarding how the public thinks about accountability and blame for current or potential AI systems; they reflect only the perceptions of legal and social scholars, engineers, and policy makers. In a recent study, Chalutz Ben-Gal found that, in primary care settings, patients’ sociodemographic characteristics (including age and gender) did not predict acceptance of AI versus traditional healthcare providers. These results suggest that the assumptions built into current regulatory models should be revisited and that policy frameworks should draw on more representative data about the public’s beliefs regarding AI.

The previously examined literature presents an extensive body of empirical and conceptual support for the five demographic variables included in this study. Gender is implicated because of documented bias in AI-generated medical evaluations and evidence of disparate levels of trust concerning generative AI. Age is relevant to this research as there are contradictory findings across multiple studies regarding age-based differences in the acceptance of technology within healthcare settings. Income can also be implicated since there is extensive research documenting how socioeconomic status impacts access to, and use of, digital health services. Ethnicity is warranted through numerous studies documenting racially biased AI training datasets and evidence of racially disparate experiences in healthcare delivery systems. Last, but not least, geographic location is included because there is a dearth of comparative literature on trust within healthcare delivery systems and attitudes toward technology; however, those studies that do compare systems across countries suggest that there are significant variations between regions. The previous literature does not include any studies that examined all five of these variables together regarding fully autonomous rather than AI-assisted surgical systems; therefore, there exists a gap in the literature that this study seeks to fill.

Methodology

Research Design

This research utilized a quantitative, cross-sectional methodology. An IRB-approved, cross-sectional online survey was conducted through SurveySwap with a convenience sample of 164 individuals from diverse continents.

Participants or Sample

The final data included 164 individuals from various continents. The sample encompassed 8 distinct age categories, 11 educational levels, 6 income brackets, 3 specified genders, and 6 continents, facilitating a comprehensive analysis. The research evaluates public perceptions based on intersections of this demographic information, as will be described in the following sub-sections.

Because the convenience sample was recruited online, it may be subject to self-selection bias; participants are therefore likely to be more technologically savvy and more educated than the general population. Because participants were unevenly distributed across continents, cross-regional comparisons should also be interpreted with caution.

No formal power analysis was performed because the study was exploratory. There is, however, precedent in the literature for exploratory surveys of public attitudes toward new medical technologies in which a sample of approximately this size supports descriptive comparisons across demographic characteristics. The purpose of an exploratory study like this one is to provide an overall picture, or “map,” of the demographic distribution of acceptance; inferential estimates will need to come from larger, probability-based samples in future confirmatory studies.

Data Collection

An online survey was used to collect the data. The survey was designed to minimize response bias, and it drew on similar surveys cited in the references [11,12]. The first step was to phrase the questions as neutrally as possible so that the responses would be as accurate as possible. In addition, most questions were structured as multiple choice to improve response accuracy, and a consent form for minors was included so that data could be collected from minors. The survey was distributed through SurveySwap.com, enabling recruitment of participants from diverse demographic and geographic backgrounds. The survey was closed after one month, and the collected data were retained for analysis.

The following methodological detail is provided so that an independent researcher can reproduce all analyses reported in this paper. The original survey data were exported from SurveySwap and saved as a CSV file named ‘Research Survey.csv’. All analyses were conducted in Python code cells in Google Colaboratory using three libraries: pandas (version consistent with this study) for data manipulation and frequency analysis, numpy for numerical operations and handling missing values, and matplotlib for figure generation. After the file was loaded as a pandas DataFrame with pd.read_csv(), the first four columns, which contained SurveySwap platform metadata such as respondent identification numbers and timestamps, were removed with df = df.iloc[:,4:] to allow a cleaner and more consistent analysis. The column originally titled ‘What is your age group?’ was renamed to ‘age’ for convenience, and the original column was dropped so that no duplicate column remained.
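The loading and cleaning steps described above can be sketched as follows. This is a minimal illustration, not the study's notebook: the in-memory CSV and every column name other than ‘What is your age group?’ are assumptions made so that the example runs on its own.

```python
import io
import pandas as pd

# Hypothetical stand-in for 'Research Survey.csv': four platform metadata
# columns followed by survey questions. Only 'What is your age group?' is a
# column name taken from the paper; the others are invented for illustration.
raw_csv = io.StringIO(
    "id,start,end,status,What is your age group?,What is your gender?\n"
    "1,t0,t1,done,18-24,Male\n"
    "2,t0,t1,done,25-34,Female\n"
)

df = pd.read_csv(raw_csv)   # in the study: pd.read_csv('Research Survey.csv')
df = df.iloc[:, 4:]         # drop the first four metadata columns
# rename() replaces the header in place, so no duplicate age column remains
df = df.rename(columns={"What is your age group?": "age"})

print(df.columns.tolist())
```

Using rename() rather than copying the column and deleting the original is a design choice of this sketch; either route leaves a single ‘age’ column.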

Demographic subgroups were created by filtering the main DataFrame with simple boolean filters. For gender, df_men_all was created by selecting rows whose ‘What is your gender?’ value was ‘Male’, resulting in 58 respondents. The female subgroup, df_women_all, was created from the same column by selecting values that include ‘Female’ or ‘Short King.’ This was done because ‘Short King’ had some non-male values that matched the ‘Female’ subgroup values.

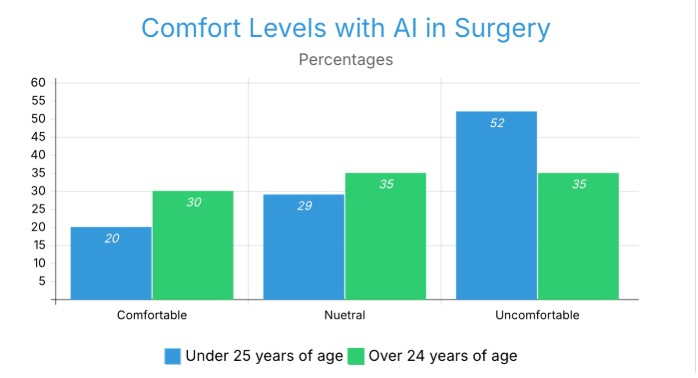
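A minimal sketch of the gender filters described above, using a small made-up DataFrame. The use of isin() for the female subgroup is an assumption about how values that "include" ‘Female’ or ‘Short King’ were matched; the ‘Non-binary’ row is hypothetical.

```python
import pandas as pd

GENDER_COL = "What is your gender?"  # column name as given in the paper

# Made-up responses for illustration only
df = pd.DataFrame(
    {GENDER_COL: ["Male", "Female", "Short King", "Male", "Non-binary"]}
)

# Boolean masks select rows; .copy() keeps later edits off the main DataFrame
df_men_all = df[df[GENDER_COL] == "Male"].copy()
df_women_all = df[df[GENDER_COL].isin(["Female", "Short King"])].copy()

print(len(df_men_all), len(df_women_all))
```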

For the analysis by age, df_under_25 was created by filtering the main DataFrame for ‘Under 18’ or ‘18-24’ values, resulting in 66 respondents, while df_over_24 was created by filtering the main DataFrame for ‘25-34,’ ‘35-44,’ ‘45-54,’ ‘55-64,’ and ‘65+’ values, resulting in 97 respondents.

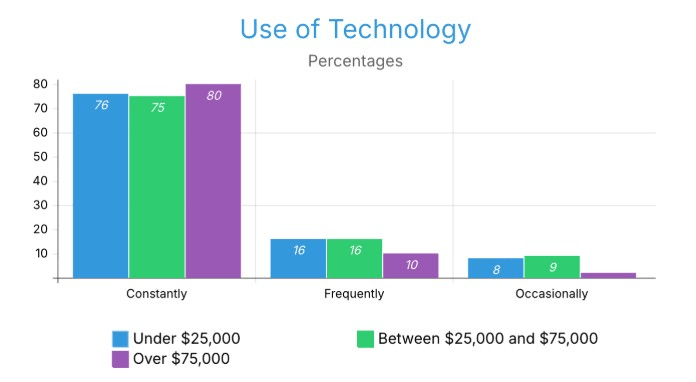

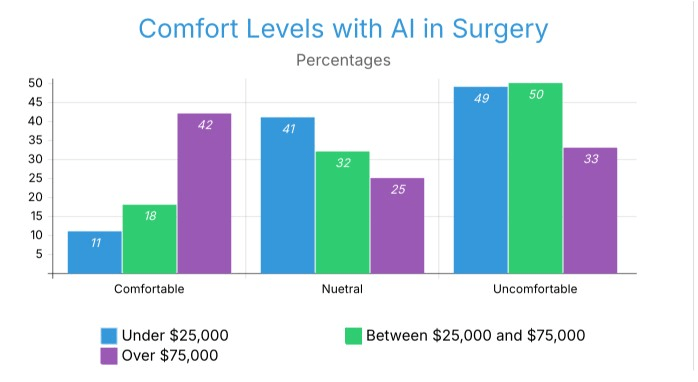

Income subgroups include df_under_25k, created by filtering the main DataFrame for ‘Under $25,000’ values, df_25k_to_74k, created by filtering the main DataFrame for the union of ‘$25,000 – $49,999’ and ‘$50,000 – $74,999’ values, and df_over_75k, created by filtering the main DataFrame for the union of ‘$75,000 – $99,999,’ ‘$100,000 – $149,999,’ and ‘$150,000 or more’ values.

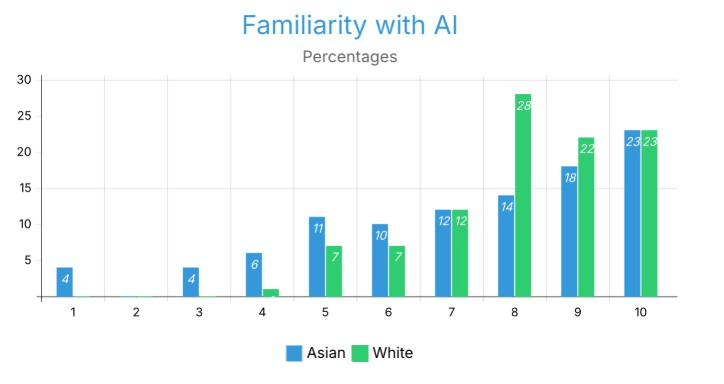
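Grouping several answer categories into one subgroup, as done for age and income, can be sketched with isin(). The column name `income` and the ‘Prefer not to say’ label are hypothetical; the bracket labels are the ones quoted in the text.

```python
import pandas as pd

INCOME_COL = "income"  # hypothetical column name; the survey header is not given

df = pd.DataFrame({INCOME_COL: [
    "Under $25,000",
    "$25,000 – $49,999",
    "$50,000 – $74,999",
    "$75,000 – $99,999",
    "$150,000 or more",
    "Prefer not to say",  # hypothetical label for respondents who declined
]})

# Each combined subgroup is the union of the listed bracket labels
MID = ["$25,000 – $49,999", "$50,000 – $74,999"]
HIGH = ["$75,000 – $99,999", "$100,000 – $149,999", "$150,000 or more"]

df_under_25k = df[df[INCOME_COL] == "Under $25,000"].copy()
df_25k_to_74k = df[df[INCOME_COL].isin(MID)].copy()
df_over_75k = df[df[INCOME_COL].isin(HIGH)].copy()

print(len(df_under_25k), len(df_25k_to_74k), len(df_over_75k))
```

Respondents whose answer matches none of the lists, such as those who declined, fall outside all three subgroups in this sketch.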

Finally, for ethnicity, df_asian_ethnicity was created by filtering the main DataFrame for values that include ‘Asian’ or ‘Asian (including the Indian-subcontinent)’ as well as ‘White;Asian (including the Indian-subcontinent)’ values, while df_white_ethnicity was created by filtering the main DataFrame for values that include ‘White’ as an ethnicity, regardless of other selections such as ‘White;Asian (including the Indian-subcontinent)’.
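Because ethnicity was a multi-select question, a respondent can belong to both subgroups. One way to express the filters described above is substring matching, shown here as a sketch; the column name `ethnicity`, the ‘Black’ row, and the missing value are assumptions for illustration.

```python
import pandas as pd

ETH_COL = "ethnicity"  # hypothetical column name

df = pd.DataFrame({ETH_COL: [
    "Asian (including the Indian-subcontinent)",
    "White",
    "White;Asian (including the Indian-subcontinent)",  # multi-select answer
    "Black",
    None,                                               # unanswered
]})

# regex=False treats the pattern as plain text; na=False excludes blanks
is_asian = df[ETH_COL].str.contains("Asian", regex=False, na=False)
is_white = df[ETH_COL].str.contains("White", regex=False, na=False)

df_asian_ethnicity = df[is_asian].copy()
df_white_ethnicity = df[is_white].copy()

# Respondents who selected both options are counted in both subgroups
n_both = int((is_asian & is_white).sum())
print(len(df_asian_ethnicity), len(df_white_ethnicity), n_both)
```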

Responses to the questions assessing AI familiarity and AI interest on a scale of 1–10 were processed with a lambda function that split the string value at the semicolon whenever one was present. The first element was converted to an integer; any response that was not a number was replaced by numpy NaN and excluded from numeric aggregation. For the categorical questions (e.g., degree of comfort with AI, optimism related to AI, blame for job loss due to automation, and the preference to have an automated job), the value_counts() and value_counts(normalize=True) * 100 methods were applied to each question’s column in the corresponding subgroup DataFrame. The output therefore provided, for each subgroup, both a raw count of respondents selecting each option and the percentage distribution across options. All percentages in the Results section thus reflect the proportion of respondents within a subgroup who selected a given response option, with the denominator equal to the total number of valid responses in that subgroup for that question.

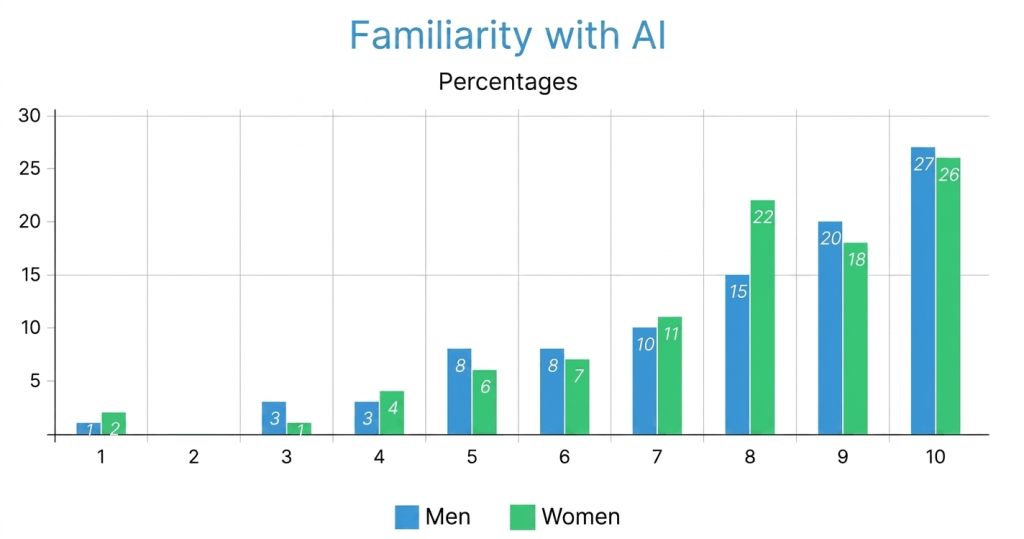

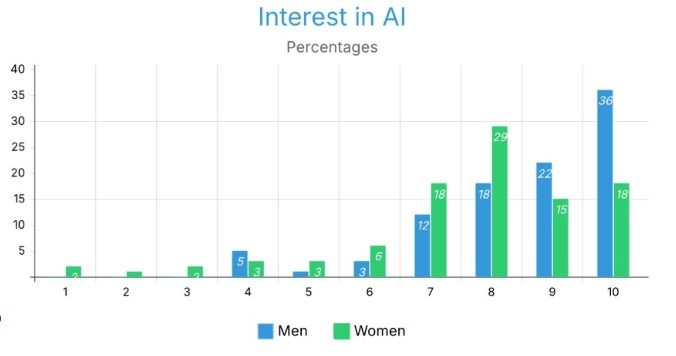
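The parsing and frequency steps described above can be illustrated as follows. This is a sketch under stated assumptions: a named helper (`parse_score`, a hypothetical name) stands in for the lambda mentioned in the text, and the sample responses are invented.

```python
import numpy as np
import pandas as pd

def parse_score(value):
    """Return the integer before any semicolon, or NaN if it is not a number."""
    first = str(value).split(";")[0].strip()
    return int(first) if first.isdigit() else np.nan

# Invented 1-10 scale responses, including one with a semicolon and one non-number
raw_scores = pd.Series(["8", "10;see note", "n/a", "7"])
scores = raw_scores.apply(parse_score)

# Invented categorical responses; None marks an unanswered question
comfort = pd.Series(["Comfortable", "Neutral", "Uncomfortable", "Uncomfortable", None])
counts = comfort.value_counts()                        # raw count per option
percents = comfort.value_counts(normalize=True) * 100  # share of valid responses

print(scores.tolist())
print(percents.round(2).to_dict())
```

By default value_counts() ignores missing values, so the percentages here are computed over the four valid responses rather than all five rows, which matches the denominator described in the text.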

The figures map to subgroups, columns, and calculations as follows. Figure 1 shows AI familiarity scores (1 through 10) for df_men_all and df_women_all; each value is the count of parsed numeric responses at that score divided by the subgroup size. Figure 2 shows AI interest scores (1 through 10) for df_men_all and df_women_all, calculated and presented in the same way. Figure 7 shows AI familiarity scores for df_under_25 and df_over_24, using the same method. Figure 9 shows the categorical technology usage frequency responses for df_under_25k, df_25k_to_74k, and df_over_75k. Figure 10 shows the categorical AI surgery comfort responses (Comfortable, Neutral, Uncomfortable) for the same three income groups. Figures 4.0 and 4.1 show AI familiarity and interest scores, respectively, for respondents of Asian ethnicity compared with respondents of White ethnicity. Finally, Figures 5.0-5.6 show attitudinal and comfort variables for Asia, Europe, and North America, using continental subgroups created from the DataFrame’s ‘Where do you live?’ column. All subgroup DataFrames were created with the .copy() method so that later analysis could not unintentionally modify the main DataFrame.

Variables and Measurement

The independent variables comprised the demographic characteristics of the participants, including gender, age, income, ethnicity, and geographic location. The dependent variables were participants’ attitudes, level of comfort, and views on autonomous AI surgery. These were quantitatively assessed and analyzed across the specified demographic dimensions. For the analysis of self-reported familiarity with and interest in AI, a threshold was used to binary-code respondents. Responses of 7 or lower on the 10-point scale were omitted from specific comparative figures (e.g., Figures 1.0, 1.1, 2.0, 3.0, 3.1). This threshold was chosen to separate respondents with high familiarity or interest (scores 8-10) from those with moderate to low familiarity or interest, allowing a clearer comparison of attitudes within the most engaged subgroups.
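The threshold described above amounts to a simple binary coding of the 1-10 scores. The sketch below assumes the scores have already been parsed to numbers, with NaN for invalid responses; the sample values are invented.

```python
import numpy as np
import pandas as pd

HIGH_THRESHOLD = 8  # scores 8-10 count as high familiarity or interest

# Invented parsed scores; NaN marks a response that was not a number
scores = pd.Series([3, 7, 8, 10, np.nan])

valid = scores.dropna()               # exclude invalid responses first
is_high = valid >= HIGH_THRESHOLD     # True for 8, 9, or 10
high_only = valid[is_high]            # responses of 7 or lower are omitted
share_high = is_high.mean() * 100     # percent of valid responses scoring 8-10

print(high_only.tolist(), share_high)
```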

A new survey instrument was created because there is no validated scale to measure public acceptance of fully autonomous surgical AI. The literature on trust in automation provided guidance on how to frame questions around comfort, trust and blame attributions. While the lack of a validated instrument is a limitation, the new nature of the construct (fully autonomous surgical AI acceptance across demographic groups) did not allow for the direct use of existing scales which were created for lower risk automation. Future research should focus on creating and validating a scale for this area. In addition, it is accepted that the way questions were framed could affect individuals’ responses and that the hypothetical questions in this survey, while intended to minimize leading language, will not accurately describe the complex decision making individuals would undergo in an actual clinical environment.

Several potential confounding variables were not controlled for in this analysis. Although education levels were collected, they were not systematically applied as a control variable across the demographic comparisons, even though there is a great deal of evidence that higher education and technology acceptance are correlated. Individual experience with surgery also shapes comfort with the use of AI in the surgical environment, regardless of demographic classification. In addition, self-reported familiarity with and interest in AI are further confounders because they correlate with both the demographic variables and the outcome variables of interest. These confounding relationships are a limitation of the current exploratory design; future confirmatory studies should include appropriate statistical controls (e.g., multivariate regression with education, surgical experience, and AI familiarity as covariates) to isolate the independent contribution of the demographic variables to acceptance of AI in surgery.

Procedure

I developed an online survey to learn how the general public views autonomous AI surgery and how those perceptions relate to factors like age, gender, familiarity with AI, and educational attainment.

SurveySwap.com was used to distribute the survey.

After a month, the survey was closed and retained for analysis.

I analyzed the data in Google Colab using Python. The Results section contains the analysis.

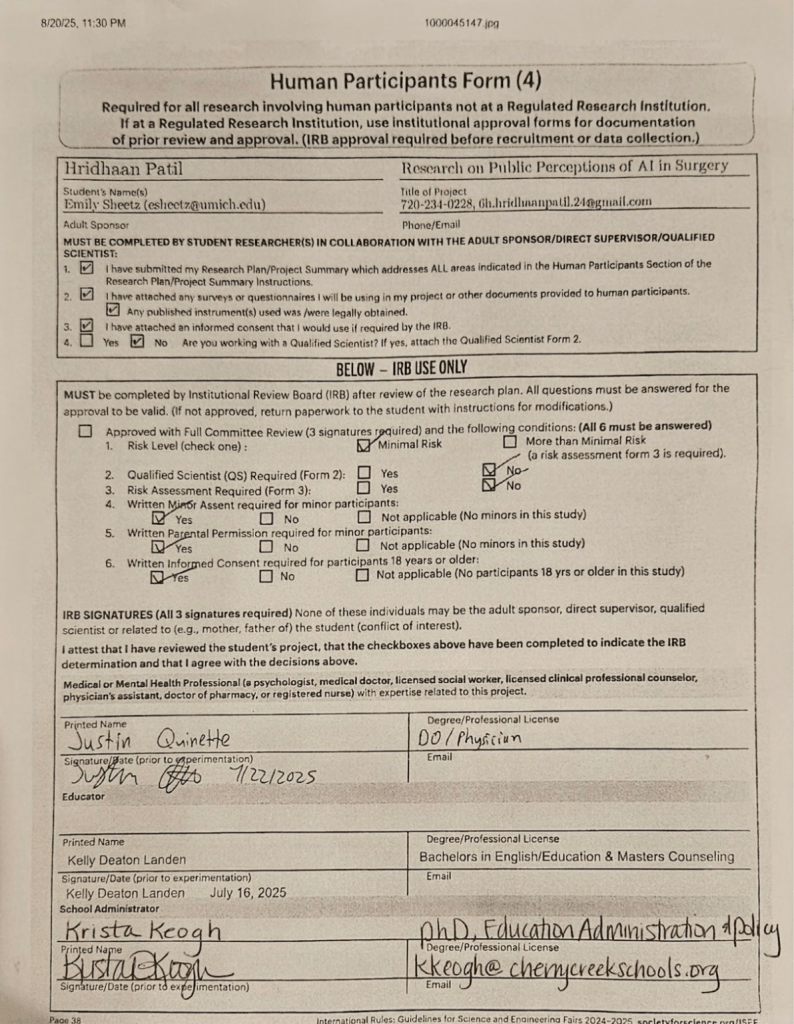

Ethical Considerations

Approval was obtained from an Institutional Review Board (IRB) consisting of an authorized medical provider, a school administrator, and an educator, because the research involved collecting data from individuals under the age of majority. Documentation of the IRB’s endorsement is included in Appendix B. In addition, every participant was presented with an informed consent form before participating, and the anonymity and confidentiality of all participants were guaranteed throughout the project. No names or other identifying information were collected from any participant.

Results

Overview

The target sample size was 150 records; data collection exceeded this target, reaching 164 records. The final data included 8 age groups, 11 levels of education, 6 levels of income, 3 specified genders, and 6 continents, which supports a detailed analysis. The research evaluates public perceptions based on intersections of this demographic information, as will be described in the following sub-sections.

Since this study was exploratory and descriptive in nature, no formal methods of multiple testing correction (e.g., Bonferroni correction or false discovery rate adjustment) were applied. The researchers recognize that making multiple demographic comparisons across five variables increases the chance of finding spurious patterns (type I error). Therefore, all results should be viewed with caution, and no single difference should be construed as definitive until it has been examined in a larger confirmatory study with pre-specified hypotheses and appropriate corrections for multiple comparisons.

This research is solely descriptive and frequency based: all response distributions for the demographic subgroups are reported as percentages of the respective subgroup, based on normalized counts. Responses were processed as outlined above, and no inferential statistics (e.g., 95% confidence intervals or p-values) are reported; no chi-square tests, t-tests, or similar tests were conducted because the study was exploratory. Differences observed between subgroups should be interpreted as descriptive unless stated otherwise. A “difference” refers to the numerical difference between the percentage distributions of subgroups within a convenience sample of 164 respondents.

Primary Demographics

Key Findings

Gender

The results for gender and age are descriptive and observational; therefore, no cause-and-effect relationship can be inferred from them.

Both genders reported broadly similar familiarity with AI, although male respondents tended to rate themselves as more familiar. Most men placed themselves in the 7-10 range, and men had a higher share of ratings at the top of the scale: nearly 30% of men rated their familiarity at 10, compared with 26% of women. Among men, a rating of 9 accounted for 21% of responses, whereas 22% of women rated their familiarity at 8. The two distributions differ across the full range of ratings (1-10), with women more heavily represented than men in the 4-7 range. Overall, the findings suggest that men and women had similar levels of awareness of and familiarity with AI, with women somewhat more concentrated at lower scores and men at higher scores.

Both male and female respondents expressed very high levels of interest in AI; however, men were much more strongly represented at the very top of the scale. Men’s most common interest score was 10 (36.21%), followed by 9 (22.41%) and 8 (18.97%). In contrast, women’s highest concentration was at a score of 8 (29.17%), and scores of 7 and 10 were reported equally often (18.75% each), showing a wider spread across the high and mid-range levels. At a score of 10 there were almost twice as many men (36.21%) as women (18.75%), and the same trend held at a score of 9 (22.41% of men vs. 16.67% of women). However, at scores of 7 and 8 women reported higher percentages than men, and women also showed a slightly higher percentage of responses in the lower range (1-6). These findings suggest that while both genders share enthusiasm for the future of AI, women’s responses were distributed more moderately and less concentrated at the extreme end of the scale than men’s. While this distribution suggests that male respondents tended to report higher enthusiasm than female respondents, these data cannot establish a causal explanation, nor can they show whether female respondents were simply more cautious in their responses.

Male respondents reported higher mean interest in AI. This observed difference may be associated with broader patterns of female underrepresentation in the AI sector11, though a causal relationship cannot be established from these data alone.

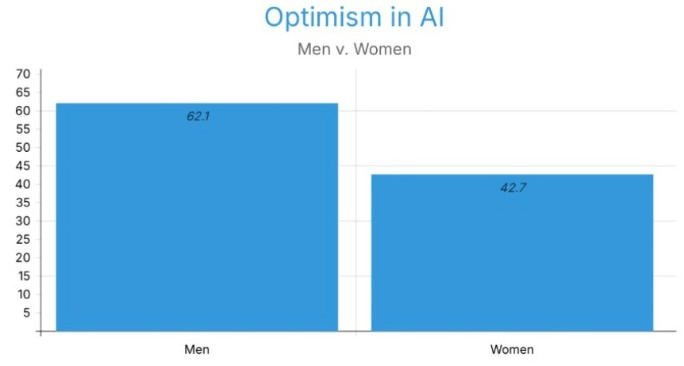

Daily technology use was similar for both genders: 79.3% of men and 81.25% of women selected “constantly.” Women reported slightly higher usage, but the difference is too small to introduce meaningful bias. Male respondents more frequently reported a positive attitude toward the application of AI in surgery, with 62.1% feeling “positive” and 29.3% choosing the middle option, “mediocre.” More women indicated a negative or neutral attitude, with only 42.71% feeling positive (Figure 3), a gap of 19.39 percentage points between the two genders.
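Subgroup comparisons in this section are expressed either as a gap between two percentages or as a ratio of one to the other. The sketch below shows both calculations for the 62.1% and 42.71% positive-attitude shares reported above; the helper names are hypothetical and the rounding precision is a choice made for this example.

```python
def percentage_point_gap(pct_a, pct_b):
    """Absolute difference between two percentages, in percentage points."""
    return round(pct_a - pct_b, 2)

def rate_ratio(pct_a, pct_b):
    """How many times larger pct_a is than pct_b."""
    return round(pct_a / pct_b, 2)

men_positive = 62.1     # % of male respondents feeling "positive"
women_positive = 42.71  # % of female respondents feeling "positive"

gap = percentage_point_gap(men_positive, women_positive)
ratio = rate_ratio(men_positive, women_positive)

print(gap, ratio)
```

The two measures answer different questions: the gap is an absolute difference in percentage points, while the ratio describes one group’s rate relative to the other’s, which is the form used for statements such as "three times as likely."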

Male respondents most frequently identified AI system developers as the responsible party (60.34%), followed by the surgeon/human operator (53.45%). Female respondents, on the other hand, most frequently identified the surgeon/human operator (52.08%), followed by AI system developers (20.83%). These data suggest that men’s first choice was developers (i.e., those building the technology), while women’s first choice was human operators (i.e., those operating the technology).

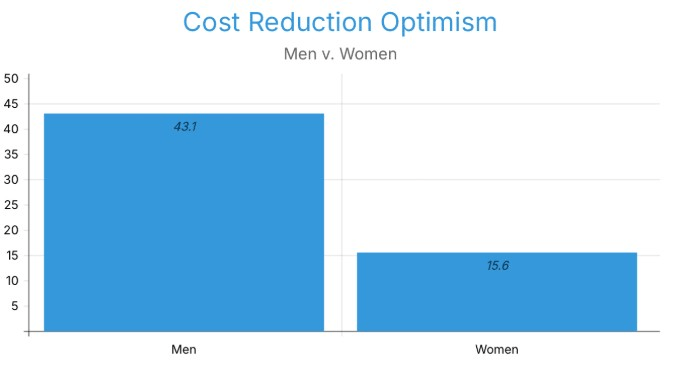

In the hypothetical case that AI replaces surgeons, the most favored option for both genders was for surgeons to switch to supervising/validating AI surgeries (74.14% of men and 76.04% of women). Both also chose patient counseling/diagnostics as the second alternative (18.97% of men and 12.50% of women). On the cost of integrating AI into healthcare, male respondents were roughly three times as likely as female respondents to believe that costs will decrease: 43.10% of men versus 15.62% of women (Figure 4).

On surgery accessibility in underserved areas with the help of AI, men and women showed similar optimism, with 37.93% of men and 38.54% of women believing that it is possible with infrastructural investments.

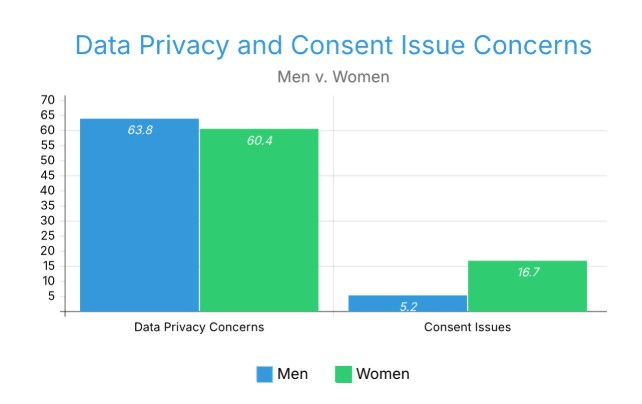

On data privacy concerns, the majority of both men and women selected the need for better transparency (63.79% and 60.42%, respectively). However, women selected consent issues roughly three times as often as men (16.67% vs. 5.17%).

Male respondents more frequently selected higher levels of automation, with conditional automation selected by 34.48%. The most preferred level of automation among female respondents was partial automation, at 31.25%. The most common scenarios in which both male and female respondents believed human surgeons were still needed were emergency surgeries and ethical considerations, but women selected the view that human surgeons will ‘always’ be needed at 1.7 times the rate of men (26.04% of women vs. 15.52% of men).

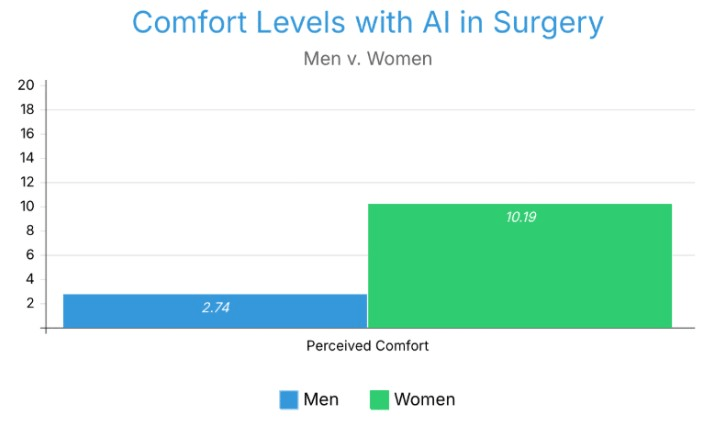

There is a pronounced gap between men and women in comfort with fully autonomous AI in surgery. Women were considerably less accepting than men of fully autonomous AI surgical systems, and gender appears to shape not only how much respondents accept an AI system but also what they consider an acceptable AI system to be.

Excluded data: positive to moderate perception of data privacy and consent issues of AI in surgery

Male respondents reported comfort at 2.4 times the rate of female respondents, while female respondents reported discomfort at 2.1 times the rate of male respondents. Male respondents were also 1.8 times as likely to expect ‘better’ outcomes from AI surgery (74.14% vs. 41.67% of women) (Figure 6). Women more often expected worse privacy outcomes (57.3% of women vs. 37.93% of men).

Female respondents were 3.7 times as likely as male respondents to select that they were not comfortable with AI in any type of surgery (10.19% vs. 2.74%).

Figure 1.5 displays the percentage of respondents uncomfortable with AI surgery being performed on themselves, by gender.

Excluded data: positive levels of comfort (data shown represent the percentage of individuals who are uncomfortable with AI surgery being performed on them)

A plurality of both male and female respondents believed AI would reduce racial bias. Men and women in this sample reported nearly equal amounts of prior experience with surgery, so differing surgical experience is unlikely to bias the preceding comparisons. Women and men both reported trusting healthcare providers most of the time, at similar rates (48.28% and 51.04%).

Age Groups

Having examined gender, we now turn to age. Respondents were divided into two broad groups: those under 25 and those 25 and older.

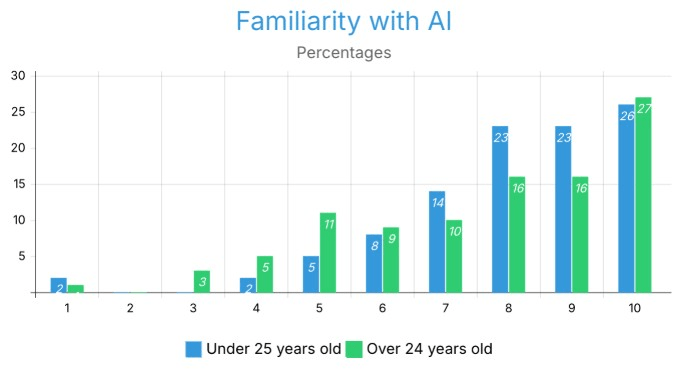

Figure 7 presents AI familiarity scores across the under-25 and 25-and-older age groups.

Respondents in both age groups (under 25 and 25 and older) show high levels of familiarity with AI overall, with the majority of responses scoring a 9 (representing 67% of responses for both groups) or a 10 (25% of responses). Among respondents under 25, 72% reported a score of 8–10, compared with 64% of those 25 and older. The primary difference is that respondents under 25 reported higher familiarity, particularly at a score of 9 (23% vs. 16%). The older group had a larger proportion of respondents at mid-range scores (7 and 5). Very low familiarity (1–4) was uncommon in both groups, generally between 0 and 4%. In short, both age groups reported high familiarity with AI, but responses from those under 25 were concentrated at 8–10, whereas responses from those 25 and older were spread more widely, with a notable share at scores of 7 and 5. This difference may reflect generational differences in confidence or in frequency of exposure to and engagement with AI.

On optimism about AI in surgery, the most common response among respondents under 25 was ‘Mediocre,’ while among those 25 and older it was ‘Positive.’ A larger percentage of older respondents reported a positive attitude (49.48% vs. 40.91%).

If something goes wrong in the operating room, younger respondents most often assigned blame to the surgeon/human operator and to AI system developers (42.42% and 31.82%, respectively). Older respondents gave more varied responses: 13.40% blamed multiple parties equally, followed by AI system developers and surgeons/developers at 9.28% each.

Both age groups most often selected infrastructure investment as the way AI could improve surgery access. A larger percentage of younger respondents believed underprivileged areas could not afford the technology (22.73% vs. 11.34% of those 25 and older).

The most common privacy concern among respondents under 25 was the need for better transparency (59.09%), whereas respondents 25 and older selected a wider variety of combined concerns.

A larger percentage of older respondents selected that human surgeons would ‘always’ be needed (22.69% vs. 19.70%). A larger percentage of younger respondents stated they would feel ‘uncomfortable’ (51.52% vs. 35.05% of older respondents).

Older respondents more often believed that surgery using AI would succeed than younger respondents did. Younger respondents expressed concern about how their data and actions might be captured and used if an AI system performed surgery on them. Participants 25 and older rejected all types of AI surgery at roughly three times the rate of younger respondents (18.56% vs. 5.88%) (Figure 8). Figure 7 displays the percentage of respondents comfortable with AI surgery being performed on them, by age group.

Comfort with AI surgery ran counter to expectations across age groups. Respondents 25 and older reported a higher level of comfort (30%) than those under 25 (20%). The under-25 group also reported a much higher level of discomfort, at 52% compared with 35% for the 25-and-older group. Neutral responses were also more common in the 25-and-older group (35% vs. 29%). This pattern runs contrary to the traditional view that younger, more technologically savvy generations will be more comfortable with AI in surgery. It suggests that familiarity with everyday technology does not equate to comfort with AI in high-stakes, life-and-death settings.

A higher percentage of younger respondents believed that AI would reduce bias (57.58% vs. 50.52%). Both age groups reported similar trust in healthcare providers.

Income Level Groups

We now turn to income, using three groups: respondents with an income under $25,000 (Group 1), $25,000 to $74,999 (Group 2), and $75,000 or more (Group 3).

Interestingly, the most common self-reported familiarity score fell slightly as income increased: it was 10 for Groups 1 and 2 (32.43% and 22.73% of respondents, respectively) and 9 for Group 3 (27.08%) (Figure 9). The most common self-reported interest score rose with income: it was 8 for Groups 1 and 2 (27.03% and 22.03%) and 10 for Group 3 (27.08%) (Figure 10).

All three income groups reported a high frequency of technology use, and the majority of respondents reported using technology for several hours each day. The under-$25,000 and $25,000-$74,999 groups reported nearly identical rates of constant use (76% and 75%, respectively), while the over-$75,000 group had a notably higher share of constant users at 88%. Frequent use (more than once a day) was reported by 16% of each lower income group but only 10% of the upper income group, which indicates that upper-income respondents tend to be constant rather than merely frequent users. Occasional use (two to three times per week) was reported by 8% and 9% of the lower income groups but only 2% of the upper income group. In summary, technology use was high across all income brackets, with an observable positive relationship between income and the intensity and consistency of technology use.

Figure 9 displays technology usage frequency across the three income groups.

Although these trends may be related to access to technology, work requirements or demands, and social and economic status, the data cannot establish a causal relationship.

Comfort with AI surgery rose with income. Both comfort with AI in surgery and willingness to accept an AI system as a fully autonomous actor may vary with an individual’s income and with the relative income of those living nearby. The share of respondents who were uncomfortable was the same in the under-$25,000 and $25,000–$74,999 brackets (49% each) and lower in the highest bracket (33%). The share who were neutral also declined across brackets: 41%, 32%, and 25%. This suggests that respondents in higher income brackets more frequently expressed definite opinions about AI surgery. Technology access, health information exposure, and trust in electronic health technologies, which also vary by socioeconomic status, have been observed to be higher among higher-income respondents than in other income groups.

Figure 10 displays comfort levels with AI surgery across the three income groups.

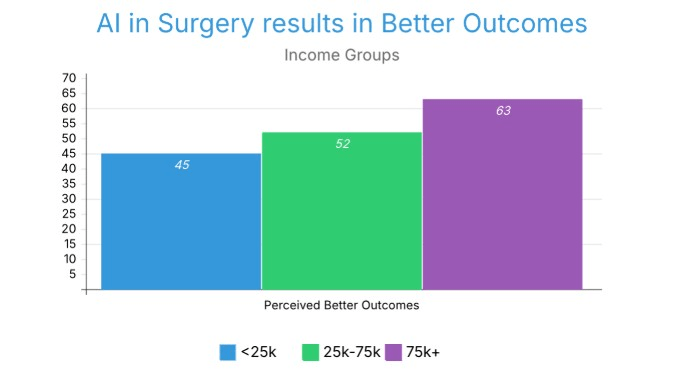

All three groups have “Better” as their primary response for whether AI integration would lead to better outcomes, with 45%, 52%, and 63% for Groups 1, 2, and 3, respectively (Figure 11). This indicates a slight rise in the expectation of better outcomes with higher income.

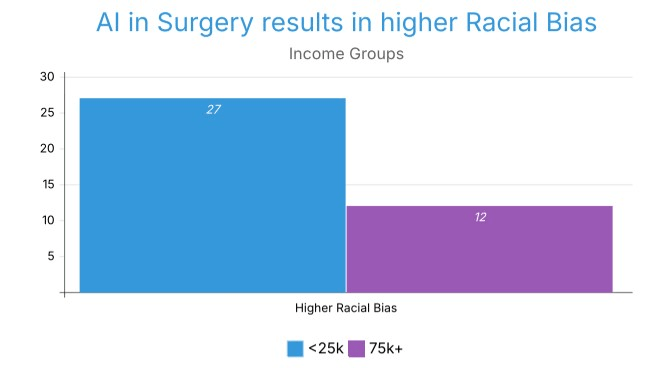

27.03% of Group 1 believes that AI in the surgical field would increase racial bias, while only 12% of participants in Group 3 believe the same (Figure 12). This direction suggests that lower-income respondents were more prone to expect an increase in racial bias and that higher-income respondents more frequently anticipated a decrease.

Figure 12 displays the perceived impact of AI surgery on racial bias across income groups.

There was also evidence of a significant socioeconomic gradient in the level of comfort with robotic-assisted surgery observed in this study, which is consistent with the findings of Larnyo et al. (2025) and Yao et al. (2022), who provided evidence of income-based disparities in access to and use of digital health. The findings from this study indicate that these disparities likely exist not only with regard to access but also in relation to attitudes; in fact, it is possible that the deployment of surgical AI (without understanding the importance of equity-focused policies) will contribute to the reproduction and perpetuation of existing inequities within the healthcare system, rather than resolving these inequities.

The most common attribution of blame for surgical mishaps differed across all three groups: Group 1 most often selected the AI system (16.22%), Group 2 selected all parties and regulatory bodies (15.91%), and Group 3 selected the surgeon/developers (12.50%). Groups 1 and 3 also contrast on cost: Group 1 more often believed that costs will go up if AI is integrated into surgery (19%), whereas Group 3 more often believed that prices will decrease (23%).

Ethnic Groups (Asian vs. White)

Because most participants belonged to one of two ethnicities, Asian (n=84) and White (n=68), the analysis focuses on these two groups.

Respondents from both White and Asian backgrounds had a high level of familiarity with AI, with responses being primarily in the 7-10 range across both groups. The most common familiarity level for Asian respondents was 10 (23%) followed by 9 (18%) and 8 (14%). White respondents exhibited their greatest frequency at level 8 (28%), with their next largest frequencies being 10 and 9 (23% and 22% respectively). At level 10, familiarity was the same for both groups at 23%. The largest discrepancy was observed at level 8, where White respondents exhibited nearly double the frequency to that of Asian respondents (28% vs. 14%), which indicates a greater level of clustering at mid to high levels of familiarity for White respondents. Asian respondents had a somewhat broader spread of distributions in the mid to lower levels (1-6), with some representation at levels 1 (4%), 3 (4%), and 4 (6%); there were no White respondents at these levels. These comparisons suggest that though both White and Asian respondents have a general level of familiarity with AI, White respondents are clustered tightly at mid to high levels of familiarity, while Asian respondents are somewhat more dispersed across the full range of familiarity.

Among Asian respondents, AI system developers were the party most commonly blamed if anything goes wrong (17%). White respondents’ primary blame was more widely spread, spanning the AI system, the developers, the surgeons, the regulatory bodies, and others.

Partial automation was the most frequently selected level of automation among White respondents, whereas among Asian respondents it was conditional automation, selected by 20%. A plurality of White respondents believe that the qualifying stage for an AI system is partial automation (31%), whereas a plurality of Asian respondents believe that it is conditional automation (30%).

Both ethnic groups reported similar trust in healthcare providers, similar experience of surgery, and a similar number of surgeries, so these factors are unlikely to bias the related comparisons.

Demographic Groups

The following regional comparisons are strictly observational. No cultural, political, or societal explanations are inferred from the data, as continental subgroup sizes are insufficient to support culturally generalizable conclusions and the convenience sampling methodology precludes any causal or interpretive claims about regional differences.

Observed demographic distributions varied across continental subgroups in this sample. In Asia, 85% of respondents were Asian, while in Europe, 69% were White and 20% Asian. North America showed a more distributed ethnic composition, with 57% Asian and 40% White respondents.

Education also differed. Europe (41% Bachelor’s, 36% Master’s) and Asia (43% Bachelor’s, 35% Master’s) were similarly concentrated at higher levels of education, while North America was more dispersed, with 26% Bachelor’s, 15% Master’s, 26% high school only, and 33% “other.”

Family income showed the sharpest contrast: 43% of North American respondents reported incomes over $150k, whereas incomes were much lower in Asia (24% under $25k, 24% $25k-49k, and 33% declined to answer) and Europe (31% under $25k, 22% $25k-49k, and 20% declined to answer).

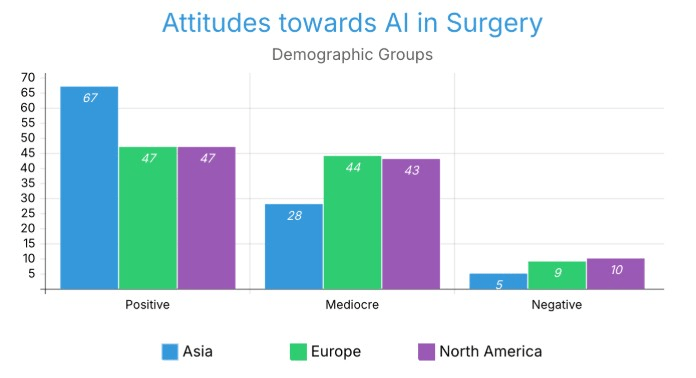

Attitudes toward AI in surgery also differed. Geographical patterns of acceptance indicate that respondents from Asian countries felt most positively about artificial intelligence systems performing surgeries, European respondents were the most apprehensive, and North American respondents were neither particularly supportive nor opposed. However, these differences cannot be generalised, given the small subgroup sizes and convenience sampling noted above (Figure 5.2).

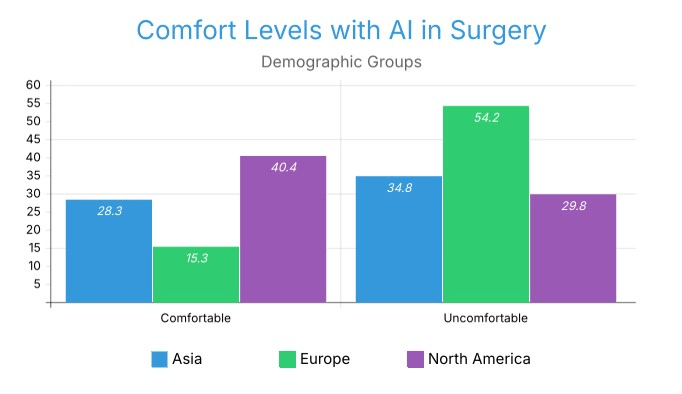

On the comfort measure, 40.4% of North American respondents felt comfortable with AI surgery, compared to 28.3% in Asia and only 15.3% in Europe (Figure 5.5). Conversely, 54.2% of European respondents felt uncomfortable, compared to 34.8% in Asia and 29.8% in North America (Figure 5.4).

This distribution suggests that European respondents displayed the most conservative pattern, North American respondents were the most comfortable, and Asian respondents most often fell in the middle (37%).
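
The "middle" share can be recovered from the two reported figures if one assumes that comfortable, uncomfortable, and neutral are the only response categories and sum to 100%. That three-category structure is an assumption made here for illustration, not a confirmed property of the survey instrument. A minimal Python sketch under that assumption (function and variable names are illustrative):

```python
# Reported shares (percent) per region, taken from the comfort figures in the text.
reported = {
    "North America": {"comfortable": 40.4, "uncomfortable": 29.8},
    "Europe": {"comfortable": 15.3, "uncomfortable": 54.2},
    "Asia": {"comfortable": 28.3, "uncomfortable": 34.8},
}


def remaining_share(shares):
    """Percent not covered by the two reported categories.

    Assumption: comfortable + uncomfortable + neutral = 100%.
    """
    return round(100.0 - shares["comfortable"] - shares["uncomfortable"], 1)


def net_comfort(shares):
    """Comfortable minus uncomfortable, in percentage points."""
    return round(shares["comfortable"] - shares["uncomfortable"], 1)


summary = {
    region: {"neutral": remaining_share(s), "net": net_comfort(s)}
    for region, s in reported.items()
}

for region, row in summary.items():
    print(f"{region}: neutral {row['neutral']}%, net comfort {row['net']} points")
```

Under this assumption the remainder for Asia is 36.9%, which matches the 37% quoted above, and North America is the only region where comfortable responses outnumber uncomfortable ones.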

Other results revealed concerns shared across regions, with some regional variation. In all three regions, a majority of respondents believed AI would worsen data privacy: 61% in Asia, 54.2% in Europe, and 53.2% in North America.

Healthcare trust was highest in Asia (71.7% trusted most or all), slightly lower in Europe (67.8%), and lowest in North America (61.7%), where 6.4% reported little or no trust (Figure 5.6).

Age composition also differed: Europe was the most youth-dominated, with 83% aged 18–34, while North America had 27.7% below 18, and Asia had more respondents in the middle age ranges, with 26.1% aged 35–44 and 17.4% aged 45–54. These observed differences across continental subgroups suggest that attitudes toward AI in surgery co-vary with demographic composition, income distribution, and self-reported healthcare trust within this sample, though no causal or cultural interpretation can be drawn from these patterns.

Detailed Findings

Combined with variations

Age Groups + Other Factors

Splitting respondents into two target groups by age (under 18 to 34, and 35 and above) reveals some differences in perception. Target Group 1 = 35+ years old, Bachelor’s education and above, positive surgery experience (n=13). Target Group 2 = under 18 to 34 years old, some college or less, minimal surgery experience (n=14).

Optimism and comfort diverge. In Target Group 1 (older, more experienced with surgery), a majority are comfortable with AI surgery being performed on them (61.54%), and nearly all are optimistic that fully autonomous AI surgery will lead to better outcomes (92.31%). In Target Group 2 (younger, less experienced in life and surgery), a majority are uncomfortable with AI surgery being performed on them (57.14%).
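
Because both target groups are small (n=13 and n=14), the percentages above carry wide uncertainty. The sketch below computes 95% Wilson score intervals for them. The counts are not reported in the text: they are inferred by multiplying each percentage by its group size (for example, 61.54% of 13 is 8), which is an assumption. The Wilson interval is a standard choice for small samples, used here for illustration rather than as the study's own method.

```python
import math


def wilson_interval(successes, n, z=1.96):
    """Wilson score interval for a binomial proportion (z=1.96 gives ~95%)."""
    if n <= 0:
        raise ValueError("n must be positive")
    p = successes / n
    denom = 1 + z**2 / n
    centre = (p + z**2 / (2 * n)) / denom
    half_width = z * math.sqrt(p * (1 - p) / n + z**2 / (4 * n**2)) / denom
    return centre - half_width, centre + half_width


# (label, reported percent, group size); counts are inferred, not reported.
subgroups = [
    ("Group 1 comfortable", 61.54, 13),
    ("Group 1 optimistic", 92.31, 13),
    ("Group 2 uncomfortable", 57.14, 14),
]

intervals = {}
for label, pct, n in subgroups:
    count = round(pct / 100 * n)  # inferred count, e.g. 8 of 13
    low, high = wilson_interval(count, n)
    intervals[label] = (count, low, high)
    print(f"{label}: {count}/{n}, 95% CI {low:.1%} to {high:.1%}")
```

For 8 of 13 respondents the interval runs from roughly 36% to 82%, so the subgroup contrasts are best read as descriptive patterns rather than precise estimates.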

Human Surgeon Blamers Vs. AI System Blamers

Two target groups were defined by whom respondents would blame in case of a fault: those who would blame the AI system (n=116) and those who would blame the human surgeon/operator (n=34).

Discussion

Restatement of Key Findings

Demographics are central to understanding how people feel about fully autonomous surgical artificial intelligence (AI). Several demographic characteristics, including age, gender, income, and region, shape these attitudes: men were 2.4 times as likely as women to report comfort, respondents with higher incomes were nearly 4 times as likely as those with lower incomes to report comfort, and acceptance of surgical AI varied between Asia and Europe.

Although each of these findings is informative on its own, together they tell one important story: if surgical AI is introduced without a demographically sensitive approach, healthcare inequalities and distrust of healthcare may be entrenched rather than resolved. The difference in how men and women attribute blame for problems with surgical AI (men tended to blame the developers of the system, while women tended to blame the humans operating it) points to a fundamental difference in how the two groups frame risk, with repercussions for how surgical AI will need to be built, particularly its transparency and accountability frameworks.

The results of this study align with the previous work of Buslón et al. (2023) and Ho et al. (2025) and reveal significant differences between genders in comfort with robotic-assisted surgery. This disparity supports a pattern in which women tend to show more caution, that is, greater discomfort, toward high-stakes autonomous systems than men. It is also possible that the lower representation of women in robotics technology has implications for the comfort women experience with these technologies. Furthermore, this study provides evidence that gender differences in trust extend beyond general AI tools and are likely to be exacerbated where AI systems exert complete operational control over surgical procedures that are considered life-threatening.

Implications and Significance

The gendered disparities revealed in this study are consistent with documented biases in healthcare AI systems¹³. Biomedicine has historically been dominated by and centred on men, and the recent addition of AI risks perpetuating practices that discriminate against other genders and sexes, most notably women. The finding that men are 2.4 times more comfortable with autonomous AI surgery is consistent with this broader trend of male-directed medical AI design.

Stai et al. (2020) report that gender (OR = 5.02, p = 0.0010) was one of the strongest predictors of comfort with robotic surgery in their study conducted in the USA. In the current study, men were 2.4 times as likely as women to report comfort, which is directionally consistent with Stai et al. The difference in magnitude may be due to the broader, more demographically diverse sample used in this study, or to differences in the degree of surgical autonomy that the two studies asked about.
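
Part of the difference in magnitude may also be a matter of scale: Stai et al. report an odds ratio, whereas the 2.4 figure is a ratio of proportions, and the two are not directly comparable. The sketch below computes both from the comfort percentages given in the abstract (41.38% of men, 17.71% of women). It is an unadjusted illustration with hypothetical function names, not a reanalysis, and it does not reproduce the covariate adjustment that a regression-based odds ratio would include.

```python
def risk_ratio(p_a, p_b):
    """Ratio of two proportions (how many times as likely)."""
    return p_a / p_b


def odds(p):
    """Convert a proportion to odds."""
    return p / (1 - p)


def odds_ratio(p_a, p_b):
    """Ratio of the odds in group A to the odds in group B."""
    return odds(p_a) / odds(p_b)


# Proportions comfortable with AI surgery, as reported in the abstract.
p_men = 0.4138
p_women = 0.1771

rr = risk_ratio(p_men, p_women)
unadjusted_or = odds_ratio(p_men, p_women)

print(f"Ratio of proportions: {rr:.2f}")
print(f"Unadjusted odds ratio: {unadjusted_or:.2f}")
```

With these inputs the ratio of proportions is roughly 2.3 to 2.4 and the unadjusted odds ratio is roughly 3.3, so on the odds scale the gender gap in this sample sits closer to the estimate of Stai et al. than the 2.4 figure alone suggests.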

This research extends previous literature by examining whether gender-differentiated trust recurs when the new technology is an autonomous surgical system. Existing literature has not focused specifically on autonomous surgical systems, so this study offers a distinct perspective on how gender-differentiated trust might emerge in interactions with autonomous surgical systems as opposed to other digital health solutions, addressing a limitation of existing research on gender and AI.

Women’s concerns regarding consent and privacy were three times higher than those of men, which aligns with the general observation that women use AI less and have higher privacy concerns than men¹⁴. Research indicates that the presence of a psychiatric disorder can influence GPT-4 coronary artery disease risk predictions differently for women and men, pointing to systematic gender bias in AI clinical assessments¹⁵. AI technology and chatbots can also encode gender bias through flaws in training data, algorithms, and user feedback loops, which lends support to women’s suspicion of AI bias in medical applications¹⁶.

The preceding paragraphs examined how gender shapes comfort levels and risk attribution; the following paragraphs turn to socioeconomic status. Both gender and socioeconomic status bear on access to resources and institutional trust, but socioeconomic status operates through an entirely different mechanism.

Aside from gender, the results suggest a risk of compounding existing health inequities. The income gradient in comfort is a worrying sign for healthcare equity and is in agreement with research on digital health disparities. Digital technologies pervasively underpin the provision of health care, yet there remain ongoing disparities in access and utilization¹⁷. Our findings extend this concern from access to acceptance: 41.67% of respondents in the highest income group reported comfort with AI surgery, compared to roughly 10-18% in all lower income groups.

Stai et al. (2020) similarly found that residing in a zip code with a high median income was associated with feeling (somewhat) comfortable with robotic surgery (OR = 1.03, p = 0.014). The current study, conducted in a more diverse context, found a much steeper gradient across income levels (a nearly fourfold difference in comfort between high- and low-income respondents), suggesting that the relationship between income and acceptance may be more pronounced for fully autonomous than for supervised robotic systems.

Recognizing that trust in surgical AI may differ by gender can inform new policies and programs focused on equity in digital health. In line with the work of Larnyo et al. (2025) and Yao et al. (2022) on income-related disparities in access to digital health, equity-based policies will likely be required to reduce inequities across all levels of the health care system. Without them, disparities may worsen for population segments that have historically experienced discrimination based on their income level.

Health inequalities defined by socioeconomic status are widespread, and digital health technology is accessed to a greater extent by the socially more privileged¹⁸. The observed patterns suggest that gender-sensitive transparency mechanisms and outreach efforts stratified by income may increase acceptance of surgical AI in practice; however, these interventions would need to be tested empirically before firm policy recommendations are developed.

The combined results for gender, age, and income show that acceptance is shaped by the intersection of several individual-level factors. Regional context adds further complexity: healthcare trust and institutional confidence vary across continents, which adds a structural component to how demographics operate within the overall landscape.

While the variations found across Asia, Europe, and North America were exploratory and limited by small subgroup sizes, the results are broadly consistent with the large body of cross-national research showing that trust in the healthcare system and in institutions can differ significantly between countries and geographical contexts. The patterns observed should be considered strictly hypothesis-generating, and future studies will need adequately sized samples from each continent.

Connection to Objectives

The findings respond to the research objective by demonstrating that demographic variables play a pivotal role in understanding acceptance, and they also challenge existing technology adoption models.

Age provides another layer for examining differences in attitudes toward technology between younger and older respondents. Beyond its direct effect on attitudes, age modifies the differences observed across gender and income brackets, which have typically been studied independently of each other.

The age paradox, in which older generations show higher optimism about the impact of AI but also higher rejection of complete automation, runs counter to prevailing expectations about technology adoption across generations. Acceptance of AI in family medicine is mediated by patients’ health literacy, age, and gender, but our findings indicate that knowledge of technology does not necessarily equate to familiarity with high-stakes medical applications of AI, which suggests that trust building in healthcare may not follow the same patterns as general technology adoption¹⁹.

Age did not significantly predict acceptance of AI in primary care (Chalutz Ben-Gal, 2023), which diverges from the age paradox reported in this study for surgical settings. This divergence may indicate that AI acceptance differs by clinical domain and by the level of autonomy and stakes involved.

What does the observed age paradox tell us about the existing literature? Despite greater optimism about the results of the technology, older respondents rejected full automation. Existing literature treats age as a linear predictor of technology resistance. That older respondents appear to distinguish between automation as an additional source of support and automation as an alternative source of support gives researchers an opportunity to confirm this distinction through qualitative methods and to develop a better model of how older respondents experience AI.