Abstract

As the International Baccalaureate’s Middle Years Program (MYP) gains popularity, its holistic yet subjective framework poses challenges for effective implementation. This study investigates the experiences of 66 middle and high school students in California, offering critical insights into program successes and limitations. Our study is one of the first to investigate students’ perspectives on the effectiveness of the MYP Personal Project and the success of the MYP program. Through quantitative and qualitative survey feedback, we identify key areas for improvement: communication, rubric clarity, and course design. Findings highlight a demand for enhanced student-advisor interaction, clearer assessment criteria, and more academically rigorous options to meet diverse student needs. The Personal Project component requires clearer rubric guidelines and sustained, structured support. By addressing these gaps, this study provides actionable recommendations to optimize the MYP’s implementation. This study also contributes to the broader discussion on innovative educational frameworks and the extent to which they adequately prepare students for the demands of the modern world.

Keywords: Middle Years Program (MYP), student feedback, Personal Project, holistic learning, curriculum design, educational outcomes

Introduction

Education is constantly evolving to meet the demands of a globalized world. The MYP program, developed by the International Baccalaureate (IB), is one such initiative1.

Background

The MYP program is a curriculum framework2 that aims to (1) develop students’ research, creative and critical thinking, communication, collaboration, and self-management skills (collectively called Approaches to Learning, or ATL) and (2) help students explore big ideas and raise their awareness of global issues. The program runs for five years, from grade 6 through grade 10. Each year, students are required to complete at least 80 hours of learning in each of the eight subject groups, ranging from language acquisition and mathematics to science and design. The MYP program culminates in a student-driven Personal Project, in which students propose a topic of interest and the school supports them in exploring and implementing their projects through inquiry, action, and reflection. Completing the MYP Personal Project is mandatory for students to receive an MYP certificate.

The MYP program was first launched in 1994 and re-launched in 20141‘3, and it is now offered by 751 U.S. schools and many schools worldwide4‘5‘6‘7. Many studies have reported the success of MYP programs8‘6‘9. However, the MYP’s project-based approach and subjective grading rubric have also raised questions about its outcome validity10‘6.

Implementation of the MYP program at surveyed schools

This research paper evaluates the effectiveness of the MYP program at a middle and a high school in a California school district. The program was fully launched during the 2024-2025 school year after a year of partial implementation in the 2023-2024 school year. In June 2024, a survey was conducted to collect students’ feedback on their learning experiences and challenges with the MYP Personal Project and MYP program. By analyzing quantitative data and qualitative feedback from students, this study seeks to provide a comprehensive assessment of the impact and effectiveness of the MYP program in its first year of implementation.

The surveyed high school experienced a discontinuation of Honors Freshman and Sophomore English courses, replaced with non-accelerated MYP classes. The Personal Project was hosted on Canvas, a web-based Learning Management System used for accessing course materials and assignments. The Personal Project involved three components: structured planning assignments, regular meetings with assigned teacher advisors, and a final 5–15-page paper plus presentation evaluated by a rubric.

Research questions

Our study aims to answer the following research questions:

- RQ1: How do students perceive the effectiveness of the MYP Personal Project in improving their personal growth and self-confidence?

- RQ2: What challenges do students face in completing their MYP Personal Project?

- RQ3: To what extent has the MYP program achieved its curriculum goals as perceived by the students?

To the best of our knowledge, our study is one of the first to examine students’ perspectives on the effectiveness of the MYP Personal Project and the overall MYP program. It fills a gap in existing research, which has largely focused on the points of view of teachers and school management6‘11. Furthermore, based on students’ feedback, our study suggests ways to improve the Personal Project and the MYP program and to address their current limitations.

The rest of the paper is organized as follows. Section Literature Review and Significance of Our Study discusses existing work and the significance of our study. Section Methodology describes the survey design, participants, data collection, and survey validation. Section Findings presents the findings from the survey responses and answers the research questions. Section Discussions discusses strategies to improve the MYP program based on those findings. Finally, Section Conclusion and Future Directions concludes the paper and suggests future directions.

Literature Review and Significance of Our Study

MYP program: The MYP program is a key part of the International Baccalaureate (IB) program1, which aims to develop lifelong, inquiry-driven learners who are equipped with the knowledge and skills to solve complex real-world problems. The MYP program builds on the IB Primary Year Program (PYP) and plays a key role in preparing students for the IB Diploma Program (DP) and IB Career-related Program (CP).

However, despite its importance, there has been little research examining the effectiveness of the MYP program12‘9‘13. For example, a recent study12 was only able to identify 8 studies that address the challenges of implementing MYP, and only two of the 8 studies were about schools in the U.S. We further expanded the search and found a few more research efforts on the MYP program in recent years.

Notably, Wright and Keung7 focused on studying the correlation of MYP Personal Project with students’ performance in their DP programs. Walker and Bunnell14 presented the experiences and challenges faced by high-school teachers who transitioned from traditional curricula to teach new MYP courses. Lloyd-Peay11 investigated how teachers’ perspectives on the MYP program affect its implementation.

Student feedback can provide unique insights into curriculum strengths and weaknesses, and it is key to enhancing curriculum design15. However, there has been little research on collecting and incorporating students’ feedback to improve the MYP program16‘17. Kelly16 interviewed 9 MYP students in grade 7 to explore their experiences of evaluating teachers. Storz and Hoffman17 interviewed 16 students from 7th and 8th grades during the first year of their MYP program. The students appreciated the global aspect of the IB curriculum but expressed concerns about the elimination of their gifted programs and the shift to heterogeneous grouping, which they felt limited their learning opportunities. As we will discuss in our findings, the students in our study raised similar concerns about the removal of honors courses, student placement, and cohort structure (Table 5).

Existing research highlights several positive MYP outcomes, but mostly from the perspectives of instructors and management6‘17. For instance, Dickson et al.6 investigated the perceptions of teachers and school leaders on the effectiveness of the MYP program and found that the perceived benefits include high academic achievement and skill development through MYP’s inquiry-driven learning and real-world relevance. Similarly, Sperandio9 found that MYP increases learning relevance and student engagement, with teachers and leaders agreeing on its benefits across different socioeconomic contexts.

However, challenges remain, such as insufficient training and resources for teachers, as well as the mindset that inquiry-based learning may not work for all students. These factors have led to program discontinuation in some schools4‘6‘18. Additionally, curriculum changes pose challenges for teachers, particularly with the MYP rubric and conceptual focus19. Many teachers find MYP jargon confusing, leading to difficulties in grading consistency6. Rubric criteria are described as “wishy-washy” and difficult to standardize across subjects20.

MYP vs AP: Although our study is focused on the MYP program, we note that many schools, including our surveyed schools, also offer AP (Advanced Placement) courses. While the inquiry-driven MYP program focuses more on how students learn, the AP program prioritizes what students know21. In the AP program, students demonstrate their readiness for college by taking AP courses and standardized AP tests in a variety of subjects, such as calculus and physics. In comparison, the MYP program is more of a curricular framework where different schools might have different implementations, and the assessment (e.g., of the MYP Personal Project) is done internally by the schools. Therefore, as our findings show (Section Findings), it is important to communicate clearly with students about the structure and expectations of the program and to provide sufficient support and guidance throughout the program. Since many MYP students are also taking AP courses, it is essential to help students develop a coordinated strategy to effectively balance MYP requirements with AP coursework and extracurricular commitments.

Significance of our study: Our study stands out as one of the first to directly gather and analyze student responses on the MYP program, filling a gap in existing research, which has mostly focused on teachers and program organizers14‘5‘6‘11‘17, as discussed above.

Research on the MYP Personal Project is also limited22‘7, particularly in practical guidance on implementation, which is one of the main focuses of this paper. Existing studies7 show that Personal Project scores can predict performance in the DP program but do not offer insights into creating a quality student experience. Our study addresses this gap by offering strategies for project guidance and timelines.

Our case study methodology generates insights into the MYP Personal Project, exploring “how” and “why” questions in real-world contexts23. Using an inductive approach, we gather comprehensive data without the constraints of a specific theoretical framework24. While our findings may not be generalizable to all schools, they offer valuable insights for decision-making and further research on MYP20.

Methodology

In this section, we describe the design of the survey, survey participants, data collection, survey validation, and data analysis methods. The findings of our study will be presented in the next section.

Survey design: The administrators of the survey school were responsible for the design and the initial (face) validation of the survey. The complete survey questionnaire is attached in Appendix A. The survey consists of four parts:

- Demographics: student name, email, and grade level.

- MYP Personal Project: Table 1 lists the questions soliciting students’ feedback on the guidance and support they have received during the implementation of their Personal Project (questions 2.a-d); students’ perception on the contributions of the Personal Project to their personal growth and self-confidence (2.e); and challenges faced in completing the project (2.f).

- MYP program: Table 2 lists the questions used to gauge students’ perceptions of the effectiveness of the school’s MYP program in achieving the curriculum goals, ranging from improving critical thinking skills and global awareness to collaboration and teamwork (questions 3.a-g). The last two questions in this part (3.h and 3.i) ask students to indicate the most and least engaging courses or projects in the 2023-2024 school year.

- MYP program feedback: The last part of the survey has two questions: question 4.a asks students for their suggestions in improving the program, and question 4.b asks students to rate their overall experiences in the MYP program that culminates in the 10th grade.

Note that all questions in part 2 and question 4.b are targeted at the students in their 10th grade, while the rest of the questions are for all students in the MYP program. Note also that all the Likert-type questions in the survey use the four-point Likert scale: from “strongly disagree” (with a score of 1) to “strongly agree” (a score of 4).

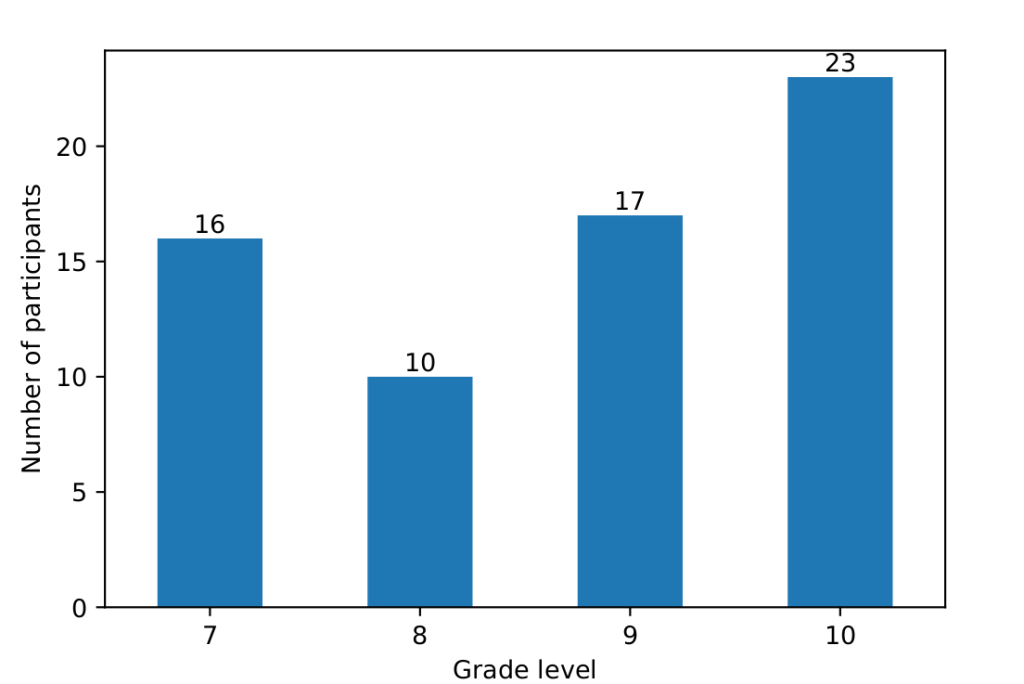

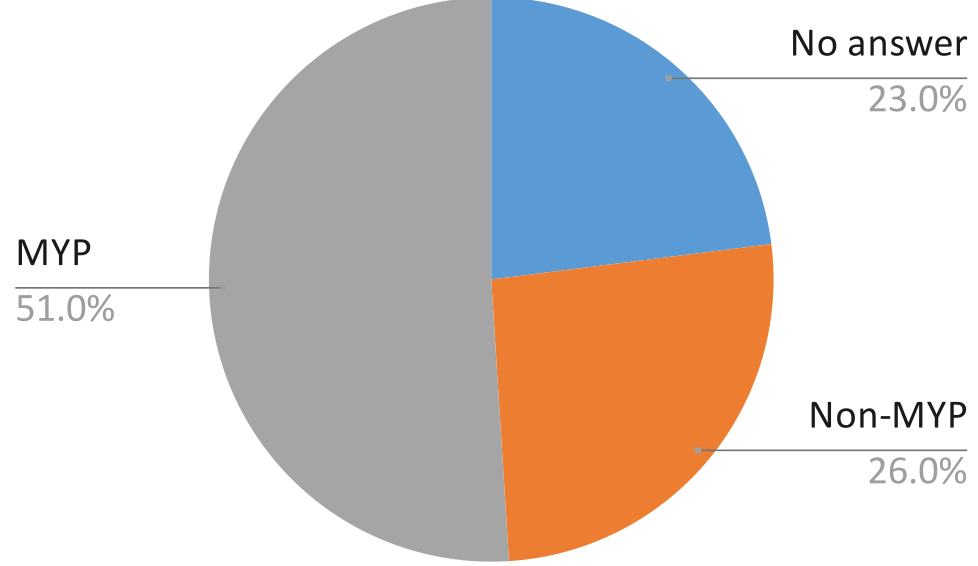

Participants & data collection: All students in the MYP program were invited to participate in the survey. On June 10th, 2024, the survey was distributed to all students enrolled in the MYP program via Canvas, Remind (messaging software), and email. In the 2023-2024 school year, 260 7th graders, 143 8th graders, 150 9th graders, and 85 10th graders were enrolled in the program. The survey collected 66 responses. Figure 1 shows the distribution of participants by grade level. For example, 23 (about one-third) of the participants were in 10th grade. The response rates for 7th, 8th, 9th, and 10th graders are 6%, 7%, 11%, and 27%, respectively.
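As a quick check on the figures above, the following minimal sketch recomputes the overall and 10th-grade response rates from the enrollment and response counts stated in the text. Per-grade response counts for grades 7 to 9 are not given in the text (they appear only in Figure 1), so they are deliberately left out; the function and variable names are illustrative.

```python
# Enrollment per grade and response counts, as stated in the text.
enrollment = {"7th": 260, "8th": 143, "9th": 150, "10th": 85}
total_responses = 66
tenth_grade_responses = 23


def response_rate(responses: int, enrolled: int) -> float:
    """Return the response rate as a percentage of enrolled students."""
    return 100.0 * responses / enrolled


total_enrolled = sum(enrollment.values())
overall_rate = response_rate(total_responses, total_enrolled)
tenth_rate = response_rate(tenth_grade_responses, enrollment["10th"])

print(f"Total enrolled: {total_enrolled}")
print(f"Overall response rate: {overall_rate:.1f}%")
print(f"10th-grade response rate: {tenth_rate:.0f}%")
```

The 10th-grade rate matches the 27% reported above, and the overall rate across all four grades is roughly 10%.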

Table 1. Survey questions on the MYP Personal Project (part 2 of the survey).

| # | Question | Type |
| --- | --- | --- |
| 2.a | I had an effective amount of guidance and support provided by my Personal Project advisor while completing the Personal Project. | Likert |
| | What suggestions do you have to increase guidance and support? | Open-ended |
| 2.b | In what area of the Personal Project completion do you think the school should provide more support? | Multiple-choice |
| | Explain how the school can provide more support to you in the area(s) you identified above. | Open-ended |
| 2.c | What forms of communication did you utilize in the completion of the Personal Project? | Multiple-choice |
| 2.d | The Canvas MYP Course is easy to navigate. | Likert |
| | What recommendations would you make the Canvas Course more user friendly? | Open-ended |
| 2.e | The Personal Project has contributed to my personal growth and self-confidence. | Likert |
| 2.f | What were the biggest challenges you faced while completing your Personal Project? | Open-ended |

Survey validation: Part 3 of the survey consists of 7 questions designed to measure students’ perception of the effectiveness of the MYP program in achieving different curriculum goals. We use factor analysis25 to identify the latent factor(s) underlying the student responses and use Cronbach’s alpha26 to measure the internal consistency of the questions in the factor.

Table 2. Survey questions on the MYP program (part 3 of the survey).

| # | Question | Type |
| --- | --- | --- |
| 3.a | The MYP program has helped me develop critical thinking skills. | Likert |
| 3.b | The MYP program has prepared me for future academic challenges. | Likert |
| 3.c | The subjects and topics covered in the IB MYP program are relevant and applicable to real-world situations. | Likert |
| 3.d | The IB MYP has broadened my understanding of global issues and diverse cultures. | Likert |
| 3.e | How well do you feel the IB MYP addresses your individual learning needs and interests? | Poorly (1) to Very Well (4) |
| 3.f | How would you rate the level of collaboration and teamwork among students in the IB MYP? | Poor (1) to Excellent (4) |
| 3.g | To what extent do you feel engaged and challenged by the IB MYP curriculum and learning activities? | 1 to 4 |
| 3.h | What curriculum/projects were you most engaged in during the 23-24 school year? (From any of your classes) | Open-ended |
| 3.i | What curriculum/projects were you least engaged in during the 23-24 school year? (From any of your classes) | Open-ended |

Data analysis methods: (1) For open-ended questions, we perform thematic analysis by first identifying recurring ideas, suggestions, or topics, and then grouping similar responses under these themes. (2) For multiple-choice questions, we compute descriptive statistics on the distribution of choices. (3) For Likert-scale questions, we first convert the responses into numeric values (e.g., 1 for “strongly disagree” and 4 for “strongly agree”) and then analyze their distributions. (4) For responses that are already numeric (e.g., ratings), we examine their statistics directly. (5) We also use Pearson’s correlation analysis27 to examine the correlation between students’ satisfaction with each specific curriculum goal of the MYP program and their ratings of the overall effectiveness of the program.
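Step (3) above can be sketched as follows. This is an illustration rather than the analysis code used in the study: the sample responses are hypothetical, and the intermediate labels “disagree” (2) and “agree” (3) are assumed from the four-point scale described earlier.

```python
from collections import Counter
from statistics import mean, stdev

# Four-point Likert scale; the two middle labels are an assumption.
LIKERT_SCORES = {
    "strongly disagree": 1,
    "disagree": 2,
    "agree": 3,
    "strongly agree": 4,
}


def to_scores(responses):
    """Map Likert labels to numeric scores, ignoring case and outer spaces."""
    return [LIKERT_SCORES[r.strip().lower()] for r in responses]


# Hypothetical responses to a single Likert question.
sample = ["Agree", "Strongly agree", "Disagree",
          "Agree", "Strongly disagree", "Agree"]

scores = to_scores(sample)
distribution = Counter(scores)   # how many respondents chose each score
average = mean(scores)           # corresponds to the "Avg" column style
spread = stdev(scores)           # sample standard deviation ("Std")

print(sorted(distribution.items()))
print(f"avg={average:.2f}, std={spread:.2f}")
```

Keeping the label-to-score mapping in one dictionary makes the coding of responses explicit and easy to audit.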

Findings

In this section, we first validate the 7 questions designed to solicit students’ feedback on the effectiveness of the MYP program. We then present our findings from analyzing the survey data and provide answers to the three research questions posed in the Introduction.

Survey validation

We validate the survey questions 3.a-g via factor analysis. First, we check to make sure that the factor analysis can be performed on the sample data and that the size of the sample is large enough to draw statistically significant results. We then determine the number of latent factors underlying the questions and the loading of questions on the factors. Finally, we compute Cronbach’s alpha to verify the internal consistency of questions in the scale. Results are as follows.

- Bartlett’s Test of Sphericity28 on the questions reports a p-value that rounds to 0 (p < .001), which shows that the items are sufficiently correlated for factor analysis to be appropriate. The Kaiser-Meyer-Olkin (KMO) Test29 for sampling adequacy reports a value of .87, well above the acceptable threshold of .6. This shows that the sample data obtained from the survey are adequate for factor analysis.

- Factor analysis (using the Python FactorAnalyzer package) suggests that a single factor underlies the questions (one factor has an eigenvalue of 4.6; all other factors have eigenvalues < 1). All questions except 3.f (on the level of collaboration and teamwork among MYP students), whose loading is .59, load on the single factor with scores ranging from .73 (question 3.g) to .86 (question 3.a). According to Hair30, with a sample size of 66, the threshold for a significant loading is about .67, so question 3.f falls below this threshold. Its relatively low loading is likely due to the fact that it is the only question addressing teamwork, while all other questions focus on students’ independent experiences (the focus of the latent factor).

- Cronbach’s alpha of questions in the (single) factor is .91, with the 95% confidence interval of [.87, .94]. This shows the excellent internal consistency of the questions in the factor.
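For readers unfamiliar with the internal-consistency measure used above, the sketch below computes Cronbach’s alpha from its standard definition, alpha = k/(k-1) * (1 - sum of item variances / variance of total scores), where k is the number of items. The response matrix is hypothetical and far smaller than the survey data; it is not the study’s data or code.

```python
from statistics import variance


def cronbach_alpha(rows):
    """Cronbach's alpha for a list of respondents, each a list of k item scores."""
    k = len(rows[0])
    if k < 2 or len(rows) < 2:
        raise ValueError("need at least two items and two respondents")
    # Transpose so that each tuple holds all responses to one item.
    items = list(zip(*rows))
    item_variance_sum = sum(variance(item) for item in items)
    # Variance of each respondent's total score across the k items.
    total_variance = variance([sum(row) for row in rows])
    return (k / (k - 1)) * (1 - item_variance_sum / total_variance)


# Hypothetical four-point ratings: 5 respondents x 3 items.
ratings = [
    [1, 2, 1],
    [2, 2, 3],
    [3, 3, 3],
    [4, 3, 4],
    [4, 4, 4],
]

alpha = cronbach_alpha(ratings)
print(f"Cronbach's alpha: {alpha:.2f}")
```

Alpha approaches 1 when respondents who rate one item highly also rate the others highly, which is the pattern a single-factor scale is expected to show.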

Evaluating the MYP personal project

The findings in this section are based on the twenty-three 10th graders’ responses to the questions in part 2 (MYP Personal Project) of the survey.

Answering RQ1 (How do students perceive the effectiveness of the MYP Personal Project in improving their personal growth and self-confidence?)

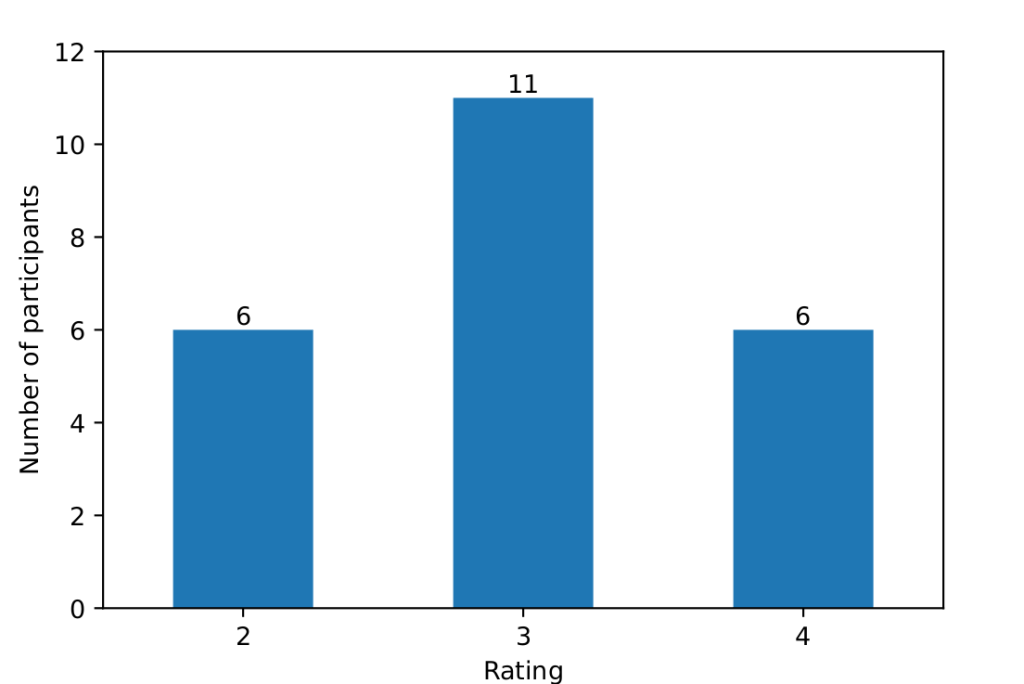

To answer the research question RQ1, we analyzed students’ responses to question 2.e (“The Personal Project has contributed to my personal growth and self-confidence.” Table 1). Figure 2 shows the distribution of ratings of the 10th graders on the effectiveness of the project in improving their growth and confidence. We see that the majority (17/23 = 74%) of the students gave a rating of at least 3 (i.e., agree or strongly agree), while there are still 26% (6/23) of the students who disagreed.

To further understand the reasons behind the low ratings, we need to investigate the challenges that the students might have faced in completing their personal projects.

Table 3. Major themes in the challenges students reported for the Personal Project (question 2.f), with the number of responses per theme.

| Theme | Responses | Representative excerpts |
| --- | --- | --- |
| 1. Time & Self-Management | 13 | “Time management alone, with many personal commitments.” “Very little time over the summer and school year.” “Time management and facing procrastination.” |
| 2. Clarity & Organization | 11 | “Unclear rubrics, formats, and expectations.” “Requirements changed while I was writing.” “The process felt confusing and unorganized.” |
| 3. Creating & Presenting the Work | 9 | “Writing the paper was the biggest challenge.” “Preparing the presentation and final product.” “Learning how to edit videos for the project.” |

Answering RQ2 (What challenges do students face in completing their MYP Personal Project?)

Table 3 shows the major themes and excerpts extracted from the challenges students stated in the survey (Table 1, question 2.f). We can see that student challenges clustered into three primary themes: Time and Self-Management (13 responses), reflecting difficulties balancing personal commitments and procrastination; Clarity and Organization (11 responses), highlighting confusion caused by unclear rubrics, changing requirements, and unstructured processes; and Creating and Presenting the Work (9 responses), involving challenges with academic writing, presentations, and technical skills such as video editing.

To address these challenges, it would be helpful to provide students with clear and stable project guidelines early, including detailed rubrics, timelines, and example projects; incorporate structured milestones to support time management; and offer targeted instruction or resources for writing, presentation, and technical production skills. We discuss this further in Section Discussions.

Additionally, the Personal Project Canvas course received mixed feedback (Table 1, question 2.d), with an average rating of 2.6 on the four-point scale. This is close to the scale midpoint of 2.5 and indicates a roughly neutral experience. Common issues expressed by students included poor course design, such as unclickable links, the lack of published example projects, and difficulty finding existing resources. Students emphasized the need for a more accessible timeline with clearly outlined deadlines and expectations. However, multiple communication channels were utilized effectively. Responses to “What forms of communication did you utilize in the completion of the Personal Project?” (Table 1, question 2.c) show that all students used at least one form of communication, and most used several. This is a success that should be maintained.

Evaluating the MYP program

The findings in this section are based on students’ responses to the questions in parts 3 (MYP program) and 4 (MYP program feedback) of the survey.

Answering RQ3 (To what extent has the MYP program achieved its curriculum goals as perceived by the students?):

- Table 4 shows the statistics of students’ ratings on the specific curriculum goals and their overall ratings on the program. We can see that the individual ratings range from 2.35 (on critical thinking skills) to 2.68 (on the relevance of MYP subjects to real-world situations). The average overall rating is 2.24 (out of 4). This shows the program still has a lot of room to improve.

Table 4. Students’ ratings on specific curriculum goals (Avg, Std) and their Pearson correlation (r, p-value) with the overall rating (question 4.b).

| Item | Avg | Std | r (with overall rating) | p-value |
| --- | --- | --- | --- | --- |
| Development of critical thinking skills | 2.35 | 0.97 | 0.63 | 1.35E-08 |
| Preparation for future academic challenges | 2.42 | 1.07 | 0.65 | 3.30E-09 |
| Relevance of MYP subjects to real-world situations | 2.68 | 0.88 | 0.59 | 1.75E-07 |
| Broadening understanding of global issues & diverse cultures | 2.50 | 1.04 | 0.54 | 2.95E-06 |
| Fulfillment of individual learning needs and interests | 2.42 | 1.02 | 0.68 | 3.85E-10 |
| Collaboration and teamwork among students | 2.42 | 0.91 | 0.46 | 9.72E-05 |
| Engagement and challenge by the MYP curriculum | 2.45 | 1.04 | 0.52 | 6.82E-06 |
| Overall experience in the 10th-grade MYP program (question 4.b) | 2.24 | 1.07 | n/a | n/a |

- Table 4 also shows Pearson’s correlation coefficient (r and p-value, the last two columns) of the ratings on specific goals with the overall rating on the program (question 4.b). We see that all correlations are determined to be significant (with a p-value of less than .001). “Fulfillment of individual learning needs and interests” is most strongly correlated, while “collaboration and teamwork” is least correlated. This echoes our earlier finding in factor analysis, where question 3.f (on the collaboration and teamwork) has the smallest loading on the latent factor (which focuses on students’ independent work).

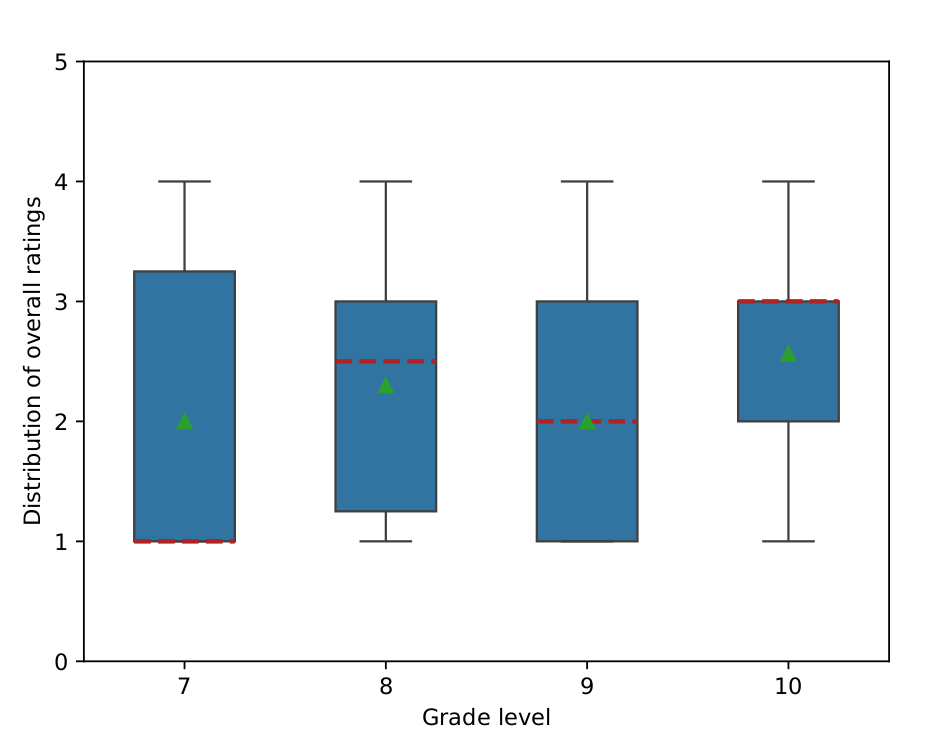

- Figure 3 illustrates student satisfaction across grades 7-10, based on students’ ratings on their “overall experience in the 10th-grade MYP program” (question 4.b). 10th graders report the highest level of satisfaction, with a median of 3 and a narrow interquartile range, indicating consistent responses. Conversely, 7th graders exhibit the lowest satisfaction levels, with a median of 1 and a broader interquartile range, reflecting greater variability. Both 8th and 9th graders show similar satisfaction levels. Satisfaction generally increases with grade level.
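The correlation analysis summarized in Table 4 can be illustrated with a short sketch. The code computes Pearson’s r from its definition, along with the t statistic from which a two-sided p-value is obtained using a Student’s t distribution with n - 2 degrees of freedom. The rating vectors are hypothetical, and the final call assumes that all 66 respondents answered both questions, which the text does not state explicitly.

```python
from math import sqrt


def pearson_r(x, y):
    """Pearson correlation coefficient between two equal-length sequences."""
    n = len(x)
    if n != len(y) or n < 3:
        raise ValueError("need two sequences of equal length >= 3")
    mean_x = sum(x) / n
    mean_y = sum(y) / n
    sxy = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    sxx = sum((a - mean_x) ** 2 for a in x)
    syy = sum((b - mean_y) ** 2 for b in y)
    return sxy / sqrt(sxx * syy)


def t_statistic(r, n):
    """t statistic for testing r against zero, with n - 2 degrees of freedom."""
    return r * sqrt((n - 2) / (1 - r * r))


# Hypothetical four-point ratings: one curriculum goal vs. overall experience.
goal_rating = [1, 2, 3, 4, 2, 3]
overall_rating = [1, 2, 2, 4, 3, 3]

r = pearson_r(goal_rating, overall_rating)
print(f"r = {r:.2f}, t = {t_statistic(r, len(goal_rating)):.2f}")

# t statistic for a reported coefficient of 0.63, assuming n = 66.
t_reported = t_statistic(0.63, 66)
print(f"t for r = 0.63, n = 66: {t_reported:.2f}")
```

Because the t statistic grows with the square root of n - 2, even moderate coefficients yield very small p-values at this sample size.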

Analyzing student feedback

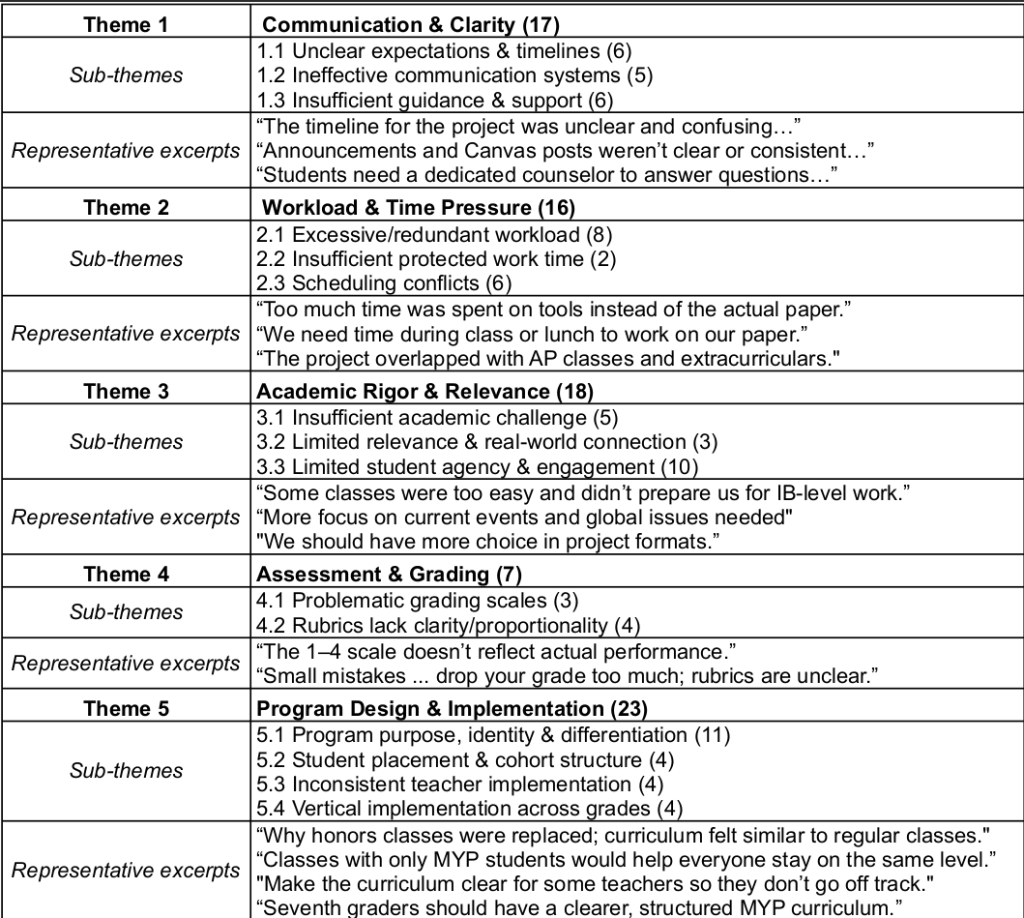

As described, question 4.a of the survey solicits students’ recommendations in improving the MYP program. After removing responses that do not make any concrete suggestions (e.g., “no suggestions”, “none”, “I don’t know”), we retained 57 responses. We then performed thematic analysis on these responses. Table 5 shows five major themes, their sub-themes, and representative excerpts extracted from the responses. Note that a response may be assigned to multiple themes or sub-themes. For example, a student’s response: “More clarity on the timeline, decrease the amount of tools and busy work focus on the project more” was assigned to sub-themes 1.1 (Unclear expectations & timelines) and 2.1 (Excessive/redundant workloads).
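Because a response may be assigned to several sub-themes, theme counts can exceed the number of responses. The sketch below shows one way to tally such multi-label coding. The response identifiers and code assignments are hypothetical; sub-theme codes 1.1 and 2.1 are named in the text, while 1.2 is a placeholder used only for illustration.

```python
from collections import Counter

# Hypothetical coded responses: response id -> assigned sub-theme codes.
coded_responses = {
    "R1": ["1.1", "2.1"],   # e.g., unclear timeline plus redundant workload
    "R2": ["1.1", "1.2"],   # two sub-themes under the same theme
    "R3": ["2.1"],
}


def tally_themes(coded):
    """Count responses per theme and per sub-theme.

    A response counts once per theme even if it matches several
    sub-themes of that theme.
    """
    theme_counts = Counter()
    sub_theme_counts = Counter()
    for codes in coded.values():
        sub_theme_counts.update(set(codes))
        theme_counts.update({code.split(".")[0] for code in codes})
    return theme_counts, sub_theme_counts


theme_counts, sub_theme_counts = tally_themes(coded_responses)
print(dict(theme_counts))
print(dict(sub_theme_counts))
```

Counting each response at most once per theme keeps theme totals comparable to the number of respondents who raised that concern.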

- Theme 1 (Communication & Clarity): Students consistently reported confusion about expectations, timelines, and requirements. Communication was perceived as inconsistent across platforms, and many students felt they lacked reliable access to guidance when questions arose. This uncertainty increased stress and reduced student confidence in navigating the program.

- Theme 2 (Workload & Time Pressure): Students described the workload as overwhelming, particularly due to perceived redundancy in tools and documentation. Time pressure was intensified by conflicts with AP classes, extracurriculars, and other academic demands, with insufficient protected time to complete work during the school day.

- Theme 3 (Academic Rigor & Relevance): While some students appreciated the creative aspects of MYP, many felt the academic rigor was inconsistent and sometimes insufficient. Others noted that curriculum relevance and real-world connections varied widely, affecting engagement and perceived value.

- Theme 4 (Assessment & Grading): Assessment practices were a source of frustration, particularly grading scales and rubrics that students found vague or disproportionate. Many students struggled to understand how to improve or what constituted high-quality work.

- Theme 5 (Program Design & Implementation): Students expressed uncertainty about the purpose and identity of the MYP program, particularly how it differs from regular or honors coursework. Inconsistent implementation across teachers and grades further diluted program coherence and student buy-in.

To address these challenges, we recommend that the school take the following actions.

- Clearly articulate and communicate the MYP value proposition to students and families.

- Strengthen program differentiation from non-MYP classes.

- Group MYP students where possible to create cohesive learning cohorts.

- Align expectations and training to ensure consistent implementation across teachers and grade levels.

- Strengthen vertical alignment so skills and expectations build year to year.

In summary, student feedback suggests the MYP framework is valued in principle, but its implementation requires clearer communication, better pacing, stronger rigor, and more transparent assessment. Addressing these themes systematically would significantly improve student experience, academic challenge, and program credibility. We provide additional detailed recommendations to address these issues in Section Discussions.

Curriculum and project engagement

We further examined students’ responses to question 3.i in Table 2 (“What curriculum/projects were you least engaged in during the 23-24 school year?”). Figure 4 shows that the majority of the curricula students found non-engaging were MYP-related, with a lack of academic challenge being the primary reason cited by respondents. One student noted, “In general, I did not feel challenged in my MYP classes. I felt that there was better collaboration in my AP classes this year and honors classes last year.” Another commented, “MYP English, and MYP history, I feel like getting rid of levels of classes makes it harder to focus and be engaged since a lot of people don’t care about school. Honors made it easier to stay focused.” Combined with the findings from Table 4, which suggest that preparation for future academic challenges is an important factor, the removal of honors courses likely had a negative impact on students’ overall satisfaction with the program.

Discussions

Evaluating the program using data in Section Findings offers insights into how educators can effectively implement the MYP. Here we provide further recommendations to improve the overall student learning experience and suggest strategies to streamline the program.

- Better communication: The predominant area of improvement identified in the survey was communication. The three critical components of communication are trust, transparency, and active listening31. In the context of MYP, that translates to more student-advisor interactions in the Personal Project. Neglecting this can easily lead to students quitting the program or losing motivation, as students reported in their responses to question 4.a (on students’ suggestions to improve the program, see Section Methodology and Appendix A). For example, a student stated: “The idea itself is good, but the timeline and rubrics were just unclear this year, which led to some frustration among students and some students quitting. This is a promising program though and I hope to see it improve drastically in the future.”

Thus, we suggest that advisors track each student’s status (e.g., On Track, Ahead, Needs Assistance) in a simple spreadsheet, so that outreach is documented and gaps in contact are caught early. Increasing the frequency of advisor meetings and making some meetings mandatory will allow for better progress assessment and address the communication issues highlighted by many students. These recommendations apply to the middle-school level, too, as many of the middle-school students surveyed said that a lack of communication was an issue.

- Clear expectations: Unclear rubrics and timelines were the main sources of confusion. As discussed in Section Literature Review and Significance of Our Study, MYP’s holistic approach introduces subjectivity10‘20. Absent clear expectations for meeting the criteria, students may opt for a simpler project that they believe will satisfy the MYP grader’s idea of quality work, sacrificing creativity. Surveyed students expressed a strong desire for more consistent, clear, and concise rubrics. This can be addressed through task-specific clarifications (TSCs) and command terms (CTs)19. TSCs clarify expectations for a specific assignment, narrowing down rubric generalities. CTs are words in the criteria description that denote a suggested level of thinking; these have already been incorporated into the MYP rubric (see the attached grading rubric for the MYP Personal Project in Appendix B). For example, the prompts “state the learning goal” and “explain the learning goal” imply two different levels of thinking. Creating a database of example answers for each CT will help clarify rubric interpretation. A database of past Personal Projects, with the score each received and the rationale behind that score, would also help students understand project expectations. Explicit scoring systems increase grading credibility because they boost student-teacher transparency32. In their absence, grading becomes impressionistic, harming students’ learning. A combination of holistic marks-based grading and analytical criteria-based assessment offers a useful perspective on overall performance33.

In addition, a main concern expressed in the data was that there was insufficient time to complete the final paper and presentation of the Personal Project. Creating a detailed timeline with actionable deadlines, advisor meetings, project peer checks, etc., will make the project feel more approachable to students and ensure that there is enough time to complete the final paper and presentation. It is also important that the timeline be easy to access. For the schools surveyed, students reported that many links to resources in the Canvas course were broken or unclickable. Such problems, which result from basic oversights, can be mitigated by having students test the Canvas course and provide feedback before it is published.

- Student-oriented curriculum. Students surveyed expressed the desire for a more relevant and engaging MYP curriculum. Incorporating student feedback is essential for ongoing improvement. Involving alumni or survey respondents in reviewing the rubric, assignments, and other aspects of the program before implementation may enhance the student experience. This part of the MYP experience should not be underemphasized, as Table 4 shows that critical thinking skills and the relevance of MYP subjects to the real world were both strongly correlated with overall satisfaction with the MYP program. Respondents cited engaging projects such as the “Family Tree” assignment in middle school Spanish classes for their academic and creative impact. Conversely, one student commented that the “happiness unit in English didn’t necessarily teach us any English,” indicating a disconnect between the unit’s content and the expected learning outcomes (Table 2, question 3.i). We recommend that teachers briefly survey students after each MYP project to gauge sentiment and share findings with district MYP coordinators for future curriculum planning. Academic challenge and critical thinking skills, as shown in Table 4, are closely linked to student satisfaction. Therefore, ongoing assessment and enhancement of the curriculum are essential. For the schools surveyed, we also recommend further research into the current curriculum to identify and address the most significant gaps, particularly in English and History, where dissatisfaction was most prevalent. The MYP curriculum should be revised to offer more challenge and relevance to real-world issues. Additionally, survey responses indicate a strong interest in more challenging content offered through honors courses. Research suggests that honors students benefit from tailored environments with similarly academically driven peers34,35.

- Increased Personal Project engagement. To further support real-world engagement, MYP coordinators should offer opportunities for community involvement. This can be done by creating a database of local institutions open to partnering with students for their Personal Projects. Encouraging civic engagement has multiple benefits, including increased civic participation36.

Additionally, fostering a collaborative learning environment is moderately correlated with overall satisfaction with MYP, as shown in Table 4. Incorporating more frequent peer checks in the Personal Project timeline and self-assessments will help facilitate collaboration while also increasing the quality of work. Peer learning leads students to take a more active role in their education37.
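The status-tracking recommendation above (On Track, Ahead, Needs Assistance) could be automated in a few lines once the spreadsheet is exported. The following Python sketch is illustrative only: the field names, the 21-day meeting threshold, and the rule that a student behind on milestones needs assistance are assumptions made for this example, not values taken from the surveyed schools.

```python
from dataclasses import dataclass
from datetime import date

# Hypothetical threshold: flag a student if more than this many days
# have passed since the last advisor meeting. A school would set its own.
MAX_DAYS_BETWEEN_MEETINGS = 21


@dataclass
class StudentProgress:
    name: str
    milestones_done: int      # timeline milestones completed so far
    milestones_expected: int  # milestones due by today per the timeline
    last_meeting: date        # most recent advisor meeting


def classify_status(p: StudentProgress, today: date) -> str:
    """Return 'Ahead', 'On Track', or 'Needs Assistance' (assumed rules)."""
    days_since_meeting = (today - p.last_meeting).days
    behind = p.milestones_done < p.milestones_expected
    overdue_meeting = days_since_meeting > MAX_DAYS_BETWEEN_MEETINGS
    if behind or overdue_meeting:
        return "Needs Assistance"
    if p.milestones_done > p.milestones_expected:
        return "Ahead"
    return "On Track"


def needs_follow_up(roster: list[StudentProgress], today: date) -> list[str]:
    """Names of students an advisor should contact first."""
    return [p.name for p in roster
            if classify_status(p, today) == "Needs Assistance"]
```

Because a missed meeting alone triggers "Needs Assistance" in this sketch, a student who is ahead on milestones but has not met with an advisor recently would still be flagged, which mirrors the emphasis on regular advisor contact.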

Conclusion and Future Directions

This study is one of the first to evaluate the MYP program directly through student responses.

Increasing the frequency of student-advisor interactions through more advisor meetings fosters communication. A student progress tracking system will also help ensure that no student is left without adequate help. We identified subjective rubrics as a major problem in the MYP system and suggest creating a database of answers for each CT and providing TSCs to give both teachers and students a clear picture of what quality work is. Additionally, crafting a clear and easily accessible timeline will help students stay on track. Overall, a key characteristic of MYP is its holistic curriculum that aims to be relevant to the real world. However, the effectiveness of the curriculum varies considerably from school to school, so creating a committee of MYP students and alumni to give feedback on the curriculum will provide valuable insight into which lessons or class projects succeeded or failed. We also strongly caution against abolishing accelerated course options in favor of the MYP program. If there is any means to offer accelerated or honors courses in conjunction with MYP, we suggest taking that course of action. No matter how holistic or engaging the MYP curriculum is, surrounding students with other academically driven peers fosters rigorous academic experiences34,35,38.

Future research should compare the MYP with other educational frameworks and examine the relationship between teacher training and program success, the success rates of various implementation methods, and long-term student outcomes.

This paper gives a novel perspective on the MYP. A program serving students must cater to student perspectives. Absent student input, MYP educators will not be able to adequately assess their programs, and that undermines the effectiveness of the MYP framework. By addressing communication gaps, clarifying rubrics, and improving curriculum integration, schools can better support student success and engagement while meeting the program’s holistic goals.

Appendix A: The survey questionnaire

The survey consists of four sections: demographics, MYP Personal Project, MYP program, and MYP program feedback. Note that questions 2.a, 2.d, 2.f, 3.a–d, and 4.b use a 4-point Likert scale. In questions 3.e–f, students also indicate their responses on a 4-point scale, with 4 being the highest (most positive) extent or rating. The rest of the questions are either multiple-choice or open-ended.

1. Demographics

a. What are your email, first, and last names?

b. What grade are you in? (Select from 7, 8, 9, or 10)

2. MYP Personal Project

a. I had an effective amount of guidance and support provided by my Personal Project advisor while completing the Personal Project. (4-point Likert scale: strongly disagree or 1, disagree or 2, agree or 3, and strongly agree or 4)

Students who responded to the prior question with a 1 or 2, please answer this question:

- What suggestions do you have to increase guidance and support?

b. In what area of the Personal Project completion do you think the school should provide more support? Check all that apply.

- Beginning Development of Your Personal Project (Building Ideas)

- Research

- Creating Success Criteria

- Approaches to Learning

- Global Context

- Time Management

- Personal Project Presentation

- Personal Project Showcase

- None

- Explain how the school can provide more support to you in the area(s) you identified above.

c. What forms of communication did you utilize in the completion of the Personal Project? Check all that apply.

- Remind

- Canvas Announcements

- Canvas Email

- School Email

- Delivered Notes to Class

d. The Canvas MYP Course is easy to navigate. (4-point Likert scale)

- What recommendations would you make to make the Canvas Course more user-friendly?

e. The Personal Project has contributed to my personal growth and self-confidence. (4-point Likert scale)

f. What were the biggest challenges you faced while completing your Personal Project?

3. MYP program

a. The MYP program has helped me develop critical thinking skills. (4-point Likert scale)

b. The MYP program has prepared me for future academic challenges. (4-point Likert scale)

c. The subjects and topics covered in the IB MYP program are relevant and applicable to real-world situations. (4-point Likert scale)

d. The IB MYP has broadened my understanding of global issues and diverse cultures. (4-point Likert scale)

e. How well do you feel the IB MYP addresses your individual learning needs and interests? (Select from “poorly” or 1 to “very well” or 4)

f. How would you rate the level of collaboration and teamwork among students in the IB MYP? (Select from “poor” or 1 to “excellent” or 4)

g. To what extent do you feel engaged and challenged by the IB MYP curriculum and learning activities? (Select from “not very engaged and challenged” or 1 to “very engaged and challenged” or 4)

h. What curriculum/projects were you most engaged in during the 23-24 school year? (From any of your classes)

i. What curriculum/projects were you least engaged in during the 23-24 school year? (From any of your classes)

4. MYP program feedback

a. What suggestions do you have for improving the MYP program?

b. How would you rate your overall experience in the 10th grade MYP program? (4-point Likert scale)
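For readers who wish to analyze responses to the 4-point Likert items above, the following Python sketch shows one way to code the labels numerically and compute a Pearson correlation between two items, such as an individual item and overall satisfaction. It is a minimal illustration under stated assumptions: the function names are hypothetical, the label-to-number mapping follows the scale given in question 2.a, and it is not the analysis code used in this study.

```python
from math import sqrt

# Coding taken from the scale in question 2.a:
# strongly disagree = 1, disagree = 2, agree = 3, strongly agree = 4.
LIKERT_CODES = {
    "strongly disagree": 1,
    "disagree": 2,
    "agree": 3,
    "strongly agree": 4,
}


def encode_likert(responses):
    """Convert Likert labels to integers, ignoring case and outer spaces."""
    return [LIKERT_CODES[r.strip().lower()] for r in responses]


def pearson_r(x, y):
    """Pearson correlation coefficient between two equal-length lists."""
    n = len(x)
    if n != len(y) or n < 2:
        raise ValueError("x and y must have the same length (at least 2)")
    mean_x = sum(x) / n
    mean_y = sum(y) / n
    sxy = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    sxx = sum((a - mean_x) ** 2 for a in x)
    syy = sum((b - mean_y) ** 2 for b in y)
    if sxx == 0 or syy == 0:
        raise ValueError("correlation is undefined for constant responses")
    return sxy / sqrt(sxx * syy)
```

Calling `pearson_r(encode_likert(item_responses), encode_likert(overall_responses))` on two columns of survey answers returns a value between -1 and 1. Treating ordinal Likert codes as numeric is itself an assumption that some analysts avoid in favor of rank-based measures.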

Appendix B: The grading rubric on the MYP Personal Project

The grading rubric for the MYP Personal Project used by the surveyed schools consists of three parts: project planning, applications of skills, and reflection. Each part is further divided into several strands. For example, the following table shows the first strand of the rubric for project planning. The table is intended to provide additional context for the discussion in Section Discussions (3: clear expectations), where we suggested that providing students with sample projects and their grading results would clarify project expectations. Due to space constraints, we omit the rest of the rubric.

Criterion A: Planning

Strand i: State a learning goal for the project and explain how a personal interest led to that goal.

| Level | Strand descriptor | Task-specific clarification |
| --- | --- | --- |
| 0 | The student does not achieve a standard described by any of the descriptors below. | |
| 1-2 | states a learning goal | The student states a learning goal that is simplistic or shallow and does not have a clear connection to personal interests. |
| 3-4 | states a learning goal and outlines the connection between personal interest(s) and that goal | The student outlines a simple or easily-achievable learning goal that provides a brief connection to personal interests. |
| 5-6 | states a learning goal and describes the connection between personal interest(s) and that goal | Based on personal interest, the student develops a clear learning goal that gives a detailed account of the connection to personal interests. |
| 7-8 | states a learning goal and explains the connection between personal interest(s) and that goal | Based on personal interest, the student develops a clear learning goal that gives a detailed account of the connection to personal interests including reasons or causes. |

Definitions

| Term | Definition |
| --- | --- |
| Learning Goal | What students want to learn as a result of doing the personal project. |
| Product | What students will create for their personal project. |
| Presents | Offer for display, observation, examination or consideration. |
| State | Give a specific name, value or other brief answer without explanation or calculation. |
| Outline | Give a brief account or summary. |
| Describe | Give a detailed account or picture of a situation, event, pattern or process. |
| Explain | Give a detailed account including reasons or causes. |

References

1. T. Bunnell. The International Baccalaureate middle years programme after 30 years: A critical inquiry. Journal of Research in International Education, 10(3), pp.261-274, 2011.

2. IBO (International Baccalaureate Organization). Middle Years Programme Curriculum, 2025.

3. E. Wright, M. Lee, H. Tang, G. Tsui. Why offer the International Baccalaureate Middle Years Programme? A comparison between schools in Asia-Pacific and other regions. Journal of Research in International Education, 15(1), pp.3-17, 2016.

4. T. Bunnell. The rise and decline of the International Baccalaureate Diploma Programme in the United Kingdom. Oxford Review of Education, 41(3), pp.387-403, 2015.

5. H. Chiang. Challenges of Implementing IBMYP Courses in a Junior High School: A Critical Case Study (Master’s thesis, National Taiwan Normal University, Taiwan), 2024.

6. A. Dickson, L. Perry, S. Ledger. Challenges impacting student learning in the international baccalaureate middle years programme. Journal of Research in International Education, 19(3), pp.183-201, 2020.

7. E. Wright, C. Keung. What is the International Baccalaureate for? Exploring educational purpose through the Middle Years Programme’s Personal Project. Educational Review, 77(5), 2025.

8. A. Christoff. Global citizenship perceptions and practices within the International Baccalaureate Middle Years Programme. Journal of International Social Studies, 11(1), pp.103-118, 2021.

9. J. Sperandio. School program selection: Why schools worldwide choose the international baccalaureate middle years program. Journal of School Choice, 4(2), pp.137-148, 2010.

10. K. Burton. Designing criterion-referenced assessment. Journal of Learning Design, 1(2), pp.73-82, 2006, https://eric.ed.gov/?id=EJ1066490.

11. L. Lloyd-Peay. Teacher Perception of International Baccalaureate Middle Years Programme Implementation (Doctoral dissertation, University of South Carolina), 2024.

12. A. Dickson, L. Perry, S. Ledger. Challenges of the International Baccalaureate Middle Years Programme: Insights for school leaders and policy makers. Education Policy Analysis Archives, 29(137), 2021, https://eric.ed.gov/?id=EJ1338601.

13. L. Tan, Y. Bibby. PYP and MYP student performance on the International Schools’ Assessment (ISA). Australian Council for Educational Research, 2010.

14. V. Walker, T. Bunnell. Becoming a new type of teacher: The case of experienced British-trained educators transitioning to the International Baccalaureate Middle Years Programme abroad. Journal of Research in International Education, 23(2), pp.191-204, 2024.

15. M. McCuddy, M. Pinar, E. Gingerich. Using student feedback in designing student‐focused curricula. International Journal of Educational Management, 22(7), pp.611-637, 2008.

16. M. Kelly. International Middle School Students’ Experiences and Views on their Evaluation of Teachers. International Journal for Cross-Disciplinary Subjects in Education (IJCDSE), 13(1), 2022.

17. M. Storz, A. Hoffman. Becoming an international baccalaureate middle years program: Perspectives of teachers, students, and administrators. Journal of Advanced Academics, 29(3), pp.216-248, 2018.

18. V. Twigg. Teachers’ practices, values and beliefs for successful inquiry-based teaching in the International Baccalaureate Primary Years Programme. Journal of Research in International Education, 9(1), pp.40-65, 2010.

19. P. Poddar. Teachers’ perceptions of criteria-based assessment model of the International Baccalaureate Middle Years Program. Education Journal Magazine, 3(2), pp.43-48, 2023.

20. A. Dickson, L. Perry, S. Ledger. Letting go of the Middle Years Programme: Three schools’ rationales for discontinuing an International Baccalaureate Program. Journal of Advanced Academics, 31(1), pp.35-60, 2020.

21. G. Moore, J. Slate. Who’s taking the advanced placement courses and how are they doing: A statewide two-year study. The High School Journal, 92(1), pp.56-67, 2008.

22. M. Muller. Understanding how the Middle Years Programme (MYP) for music works within an International School and Expressive Arts Faculty. International Journal of Social Science and Humanities Research, 6(4), pp.370-381, 2018.

23. K. Robert. Case study research and applications: Design and methods. Sage publication, 2013.

24. R. Bogdan, S. Biklen. Qualitative research for education, Vol. 368, 1997.

25. P. Kline. An easy guide to factor analysis. Routledge, 2014.

26. M. Tavakol, R. Dennick. Making sense of Cronbach’s alpha. International Journal of Medical Education, 2, p.53, 2011.

27. J. Benesty, J. Chen, Y. Huang, L. Cohen. Pearson correlation coefficient. In Noise reduction in speech processing (pp. 1-4), 2009.

28. S. Tobias, J. Carlson. Brief report: Bartlett’s test of sphericity and chance findings in factor analysis. Multivariate Behavioral Research, 4(3), pp.375-377, 1969.

29. N. Shrestha. Factor analysis as a tool for survey analysis. American Journal of Applied Mathematics and Statistics, 9(1), pp.4-11, 2021.

30. J. Hair, W. Black, B. Babin, R. Anderson. Multivariate Data Analysis, 7th ed. Prentice Hall, Upper Saddle River, New Jersey, 2009.

31. T. Salamondra. Effective communication in schools. BU Journal of Graduate Studies in Education, 13(1), pp.22-26, 2021, https://eric.ed.gov/?id=EJ1303981.

32. Z. Chan, S. Ho. Good and bad practices in rubrics: the perspectives of students and educators. Assessment & Evaluation in Higher Education, 44(4), pp.533-545, 2019.

33. C. Tomas, E. Whitt, R. Lavelle-Hill, K. Severn. Modeling holistic marks with analytic rubrics. In Frontiers in Education, Vol. 4, p. 89, 2019.

34. R. Geiger. The competition for high-ability students: Universities in a key marketplace. The future of the city of intellect: The changing American university, pp.82-106, 2002.

35. M. Svinicki. McKeachie’s Teaching tips: Strategies, research, and theory for college and. Wadsworth, Cengage Learning, 2014.

36. J. Chittum, K. Enke, A. Finley. The Effects of Community-Based and Civic Engagement in Higher Education: What We Know and Questions That Remain. American Association of Colleges and Universities, 2022, https://eric.ed.gov/?id=ED625877.

37. S. Williamson, L. Paulsen-Becejac. The impact of peer learning within a group of international post-graduate students–a pilot study. Athens Journal of Education, 5(1), pp.7-27, 2017.

38. E. Pascarella, P. Terenzini. How college affects students: Findings and insights from twenty years of research, 1991, https://eric.ed.gov/?id=ED330287.