Abstract

According to the CDC, over 300,000 concussions occur annually in youth football, with traditional sideline evaluations missing up to 50% of these injuries. This diagnostic gap leaves young athletes vulnerable to long-term neurological damage, including chronic traumatic encephalopathy (CTE), cognitive impairment, and depression. To address this issue, HeadSmart, a helmet-integrated sensor system, was developed to detect and report dangerous impacts in real time. The device pairs an H3LIS331DL triaxial accelerometer with an ESP32-C3 microcontroller that transmits g-force data via the MQTT protocol to a cloud-based processing center. From there, the information is visualized on a mobile dashboard that displays real-time impact readings and sends automated alerts to coaches, medical staff, and parents within seconds. The system was validated using controlled drop tests, in which theoretical g-force calculations were compared with sensor output to assess accuracy. The results demonstrated strong reliability, with readings within 2 g of the theoretical values and percent errors below 5% across the tested drop heights (0.5 m to 2.0 m). Unlike current commercial systems that require specialized helmets and cost upwards of $700 per unit, HeadSmart is a cost-effective solution that can be retrofitted to existing equipment, making it far more accessible to schools and teams. By providing instant impact detection and live data tracking, this technology offers a scalable approach to improving player safety and has the potential to prevent undiagnosed concussions across youth and professional sports.

Introduction

Sports-related traumatic brain injuries are a significant public health concern, with an estimated 167,000 diagnosed concussion cases recorded annually among American high school football players alone1. Additionally, the Seattle Children’s Research Institute estimated that 5% of all youth football players between the ages of 5 and 14 experience football-induced concussion injuries every year2. These statistics highlight the frequency and severity of head injuries at the youth level, where developing brains are especially vulnerable to long-term damage. Despite increased awareness around sports safety, concussion detection and response protocols remain outdated, relying heavily on sideline observations and athlete self-reporting. These methods are inherently unreliable, especially for younger athletes who may downplay symptoms or be unaware they’ve been injured. These traditional methods fail to identify over 50% of all concussive events3.

In youth and high school football, players have often continued playing after sustaining impacts that warranted medical attention4. These missed diagnoses are frequently the result of limited access to reliable, real-time assessment tools in the field, rather than negligence5. The detection gap doesn’t just pose short-term risks; it can lead to second-impact syndrome and long-term neurological conditions6. According to a study conducted by the National Institutes of Health, repetitive head trauma is closely linked to the development of chronic traumatic encephalopathy (CTE), impaired memory, diminished cognitive function, and increased rates of depression among retired athletes7. This underscores the need for objective, real-time monitoring devices that can mitigate the devastating long-term effects of undiagnosed concussions.

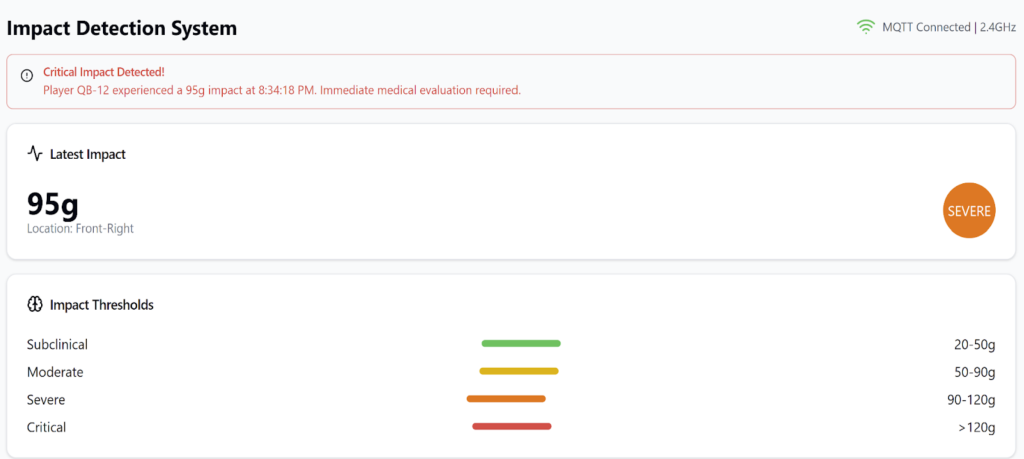

Recent advancements in sports neurology have provided a framework for evaluating head impacts with greater precision. A study led by Professor Mark Derewicz of the University of North Carolina at Chapel Hill classified collision forces based on intensity: subclinical (20–50g), moderate (50–90g), severe (90–120g), and critical (over 120g)8. His findings suggest that high-frequency subclinical impacts may also contribute to long-term brain damage over time. This graduated scale of impact severity informed the engineering benchmarks when designing the device.

HeadSmart was created to address these challenges, serving as a helmet-integrated, real-time concussion detection system. The goal was to create a solution that could recognize dangerous impacts the moment they occur and immediately notify coaches, trainers, or parents. The device would rely on quantifiable, objective data, which would bypass the delays and inaccuracies of human judgment. Unlike commercial systems that cost hundreds of dollars and require specialized helmets, HeadSmart is designed to retrofit existing equipment affordably and effectively.

The objectives of this research were: (1) to determine whether HeadSmart’s measured g-forces agree within ±5% of theoretical predictions from controlled drop tests, and (2) to evaluate whether a low-cost, retrofit helmet-integrated system can reliably transmit and display impact data in real time. It was hypothesized that the device would demonstrate strong agreement between experimental and theoretical values, with a mean error below 5%, thereby supporting its feasibility as an affordable monitoring solution. The scope of this study is the mechanical and digital validation of the system through controlled drop tests rather than live field deployment. Limitations include the absence of rotational force sensors and the need for further human-subject testing under ethical supervision. Nonetheless, this project demonstrates how low-cost sensor technology can be leveraged to close the concussion detection gap in youth football and potentially other contact sports. Existing commercial helmet sensor systems, such as Riddell InSite and Shockbox, already provide impact monitoring, but they are limited by high costs, a lack of retrofit compatibility, and restricted accessibility in youth programs. The knowledge gap this study addresses is the absence of a low-cost, retrofit-capable system that maintains reliable accuracy while providing real-time sideline alerts.

Methods

This study used an experimental research design to evaluate the performance and accuracy of a helmet-integrated collision detection system, HeadSmart. The system was engineered to identify high-impact collisions in real-time and alert sideline personnel through cloud-based processing and notification protocols. The testing focused on the technical accuracy of the device under controlled impact conditions, not on human subjects or live gameplay.

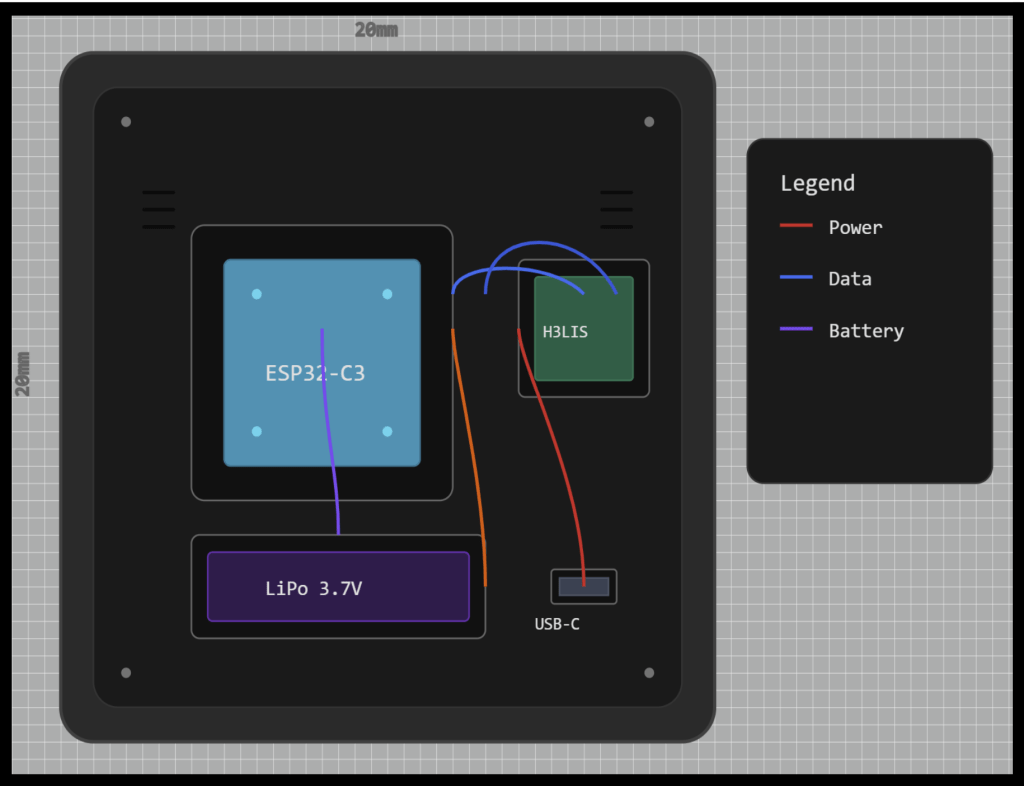

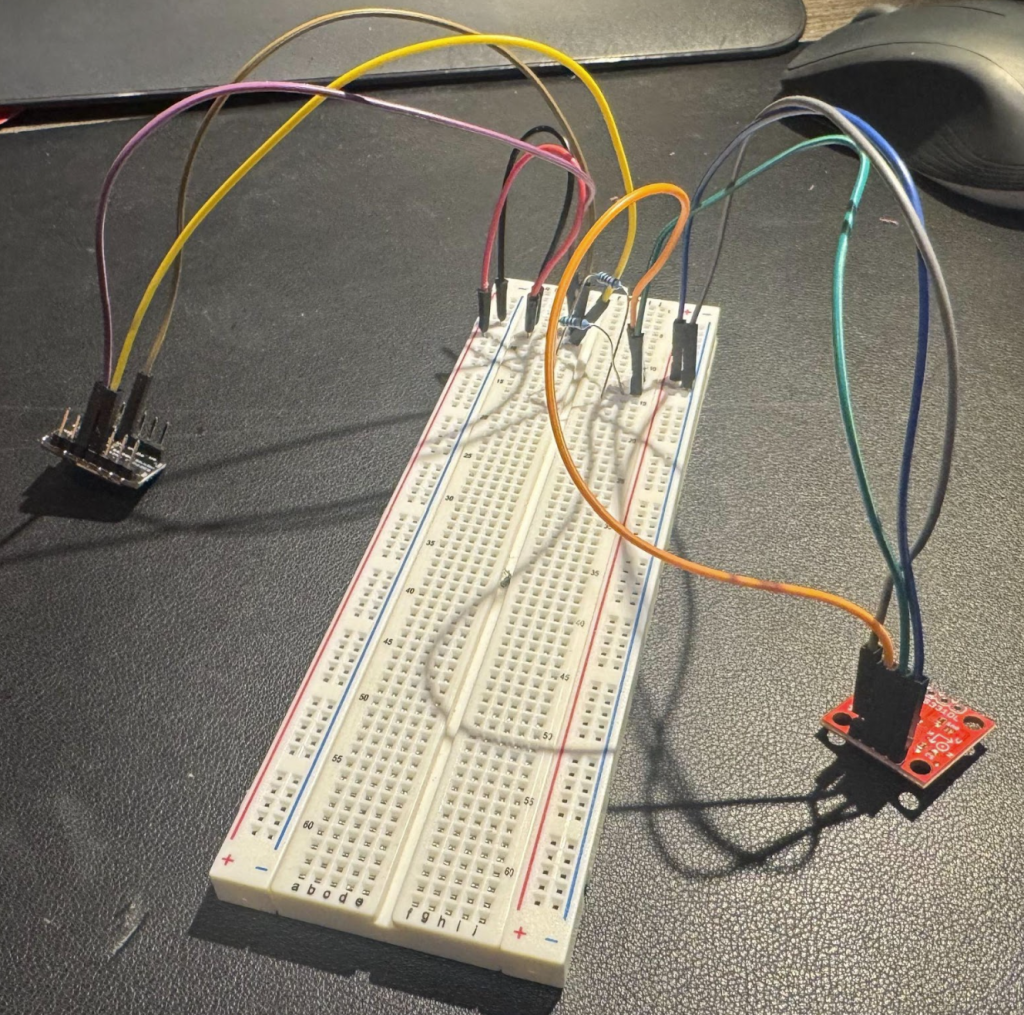

The impact detection unit consists of an ESP32-C3 microcontroller connected to an H3LIS331DL triaxial accelerometer via I2C protocol. The H3LIS331DL sensor is capable of measuring high-impact forces up to ±400g across the X, Y, and Z axes, making it suitable for monitoring sports-related collisions9. Power is supplied by a 3.7V 1200mAh lithium polymer battery with an integrated battery management system (BMS).

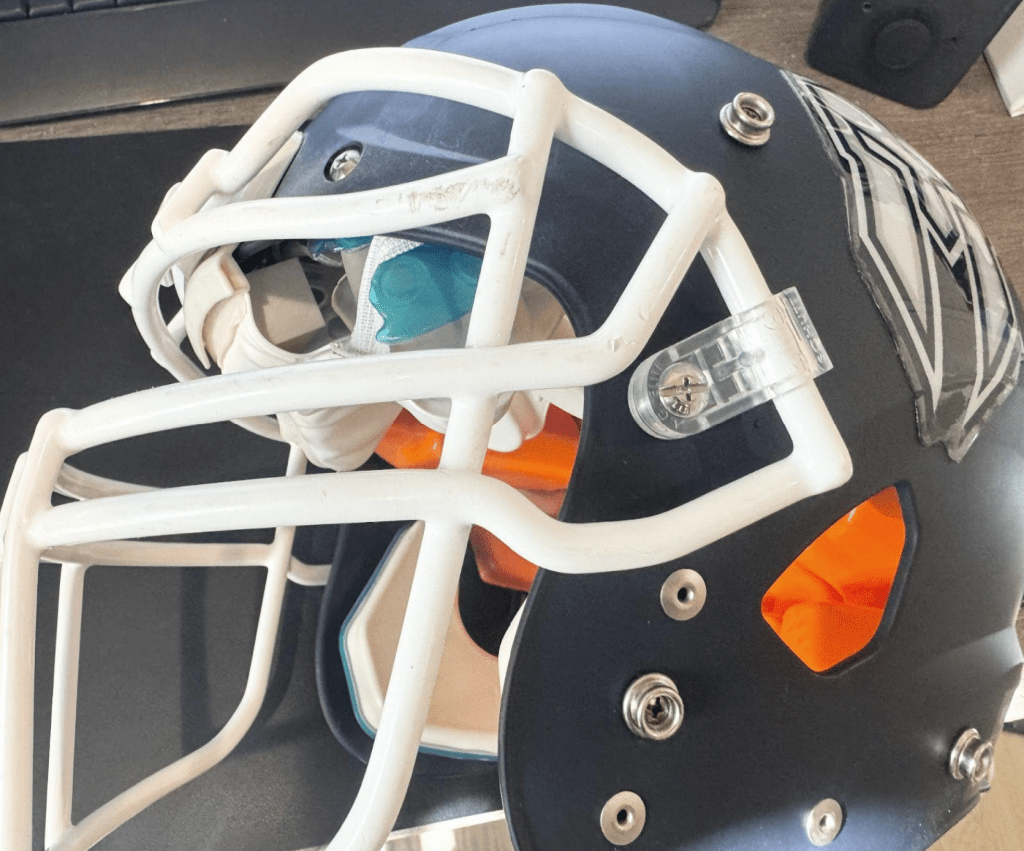

A custom 3D-printed enclosure housed the device, which was securely embedded within the crown padding of standard football helmets. PETG filament was selected for its balance of strength, impact resistance, and dimensional stability. Preliminary testing involved repeated high-g impacts during drop trials (up to 2.0 m), with no observable cracks, deformation, or compromise to internal components, indicating sufficient durability for repeated moderate-impact use. Long-term fatigue testing under sustained high-impact conditions remains necessary to fully characterize PETG performance for field deployment. The enclosure design followed the dimensional and safety constraints outlined by the National Operating Committee on Standards for Athletic Equipment (NOCSAE)10.

The sensor location within the helmet affects the measured acceleration. Depending on whether an impact strikes the front, side, or rear, the sensor reading can over- or under-estimate the motion of the head’s center of gravity. After testing multiple locations, placement at the crown of the helmet yielded the most consistent and reliable measurements, balancing accuracy and stability. Future work could calibrate sensors at multiple locations using instrumented headforms to refine location-specific correction factors.

Prototyping and Iteration

Initial prototyping began with a Raspberry Pi Zero, but it was too bulky and power-intensive for helmet integration, as shown in Figure 2. Transitioning to the ESP32-C3 solved the size and efficiency concerns but introduced voltage instability during I2C communication. This issue was resolved by incorporating external pull-up resistors that ensured stable data transmission. Thermal buildup during testing also led to a redesigned enclosure that improved passive cooling. Firmware development went through several iterations to reduce data transmission latency and improve synchronization between impact events and dashboard alerts. The timeliness of the dashboard alerts was prioritized because concussions need to be assessed as soon as possible; any delay in alerting coaches or medical staff could mean another play in which the player sustains a further critical hit11.

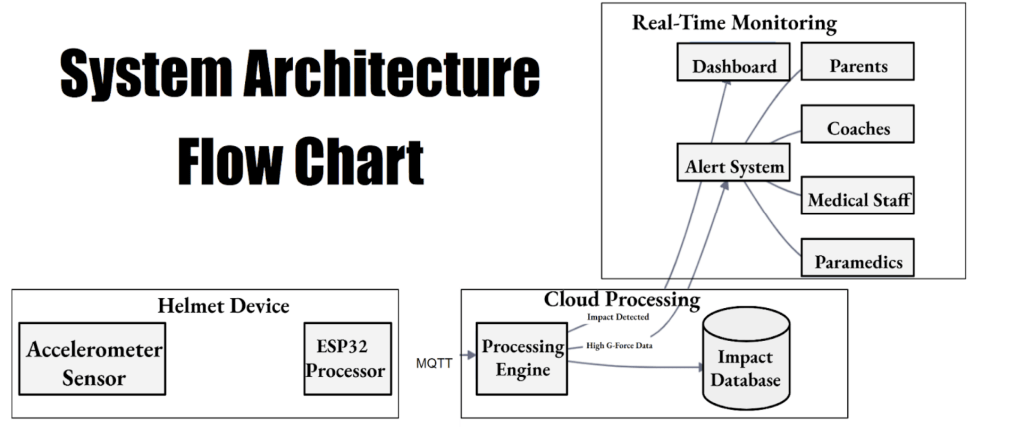

Real-Time Data Transmission Architecture

When an impact is detected, the ESP32-C3 processor packages the accelerometer’s three-axis force measurements, impact duration, and timestamp into structured data packets. The MQTT (Message Queuing Telemetry Transport) protocol was implemented to transmit information in real time to the monitoring dashboard. Each helmet is assigned a unique topic channel, allowing telemetry data to be sent independently. MQTT’s low power consumption is ideal for a battery-powered device, and its publish-subscribe architecture enables reliable and simultaneous data distribution to multiple subscribers12,13. The ESP32’s built-in Wi-Fi maintains a continuous connection with the MQTT broker and includes automatic reconnection protocols to minimize packet loss during brief network disruptions. This architecture supports real-time monitoring with latency consistently under 100 milliseconds, or in other words, near-instantaneous sideline assessments of potential injuries.
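The packet structure and per-helmet topic described above can be illustrated with a short sketch. It is written in Python for readability and is not the device firmware; the topic layout (`headsmart/helmets/<id>/impact`), the JSON encoding, and the field names are assumptions made for illustration, since the text does not specify them.

```python
import json
import math
import time
from typing import Optional


def helmet_topic(helmet_id: str) -> str:
    """Return the per-helmet topic. The 'headsmart/...' layout is a hypothetical naming scheme."""
    return f"headsmart/helmets/{helmet_id}/impact"


def build_packet(ax_g: float, ay_g: float, az_g: float,
                 duration_ms: float, timestamp: Optional[float] = None) -> str:
    """Serialize one impact event (three-axis reading, duration, timestamp) as a JSON string."""
    if timestamp is None:
        timestamp = time.time()
    packet = {
        "x_g": ax_g,
        "y_g": ay_g,
        "z_g": az_g,
        # Resultant magnitude of the three axes, added here for convenience (an assumption).
        "resultant_g": round(math.sqrt(ax_g ** 2 + ay_g ** 2 + az_g ** 2), 2),
        "duration_ms": duration_ms,
        "timestamp": timestamp,
    }
    return json.dumps(packet)


# Illustrative values only, not measurements from this study.
topic = helmet_topic("07")
payload = build_packet(3.0, 4.0, 12.0, duration_ms=9.5, timestamp=1700000000.0)
print(topic, payload)
```

In a deployment, the resulting `topic` and `payload` would be handed to whichever MQTT client library the firmware uses; keeping one topic per helmet lets a subscriber follow a single player or, with a wildcard subscription, the whole team.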

Cloud Processing & Analysis

Once data packets are received by the cloud broker, they are processed by a custom-built engine designed to evaluate the severity of each impact. The g-force readings are compared against predefined thresholds based on the sports medicine research referenced in the introduction. Published literature defines both linear and rotational impact thresholds. In this prototype, only linear acceleration was directly measured; rotational values were not captured. Each event is logged in a time-stamped database containing raw sensor readings and processed summaries. This growing dataset allows for both immediate medical decision-making and longitudinal tracking of an athlete’s head impact history, critical for managing cumulative exposure.
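As a minimal sketch of the threshold comparison, the function below maps a peak linear acceleration to the severity bands cited in the introduction (subclinical 20–50g, moderate 50–90g, severe 90–120g, critical over 120g). The function name and the handling of readings that fall exactly on a boundary are assumptions; the engine's actual logic is not given in the text.

```python
def classify_impact(peak_g: float) -> str:
    """Map a peak linear acceleration (in g) to a severity band.

    Boundary handling is an assumption: a reading exactly on a shared
    boundary (50 or 90) is assigned to the higher band, and "critical"
    applies only to readings strictly over 120 g.
    """
    if peak_g > 120:
        return "critical"
    if peak_g >= 90:
        return "severe"
    if peak_g >= 50:
        return "moderate"
    if peak_g >= 20:
        return "subclinical"
    return "below threshold"


for reading in (12.0, 35.0, 72.5, 104.0, 131.0):
    print(reading, classify_impact(reading))
```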

Real-Time Monitoring

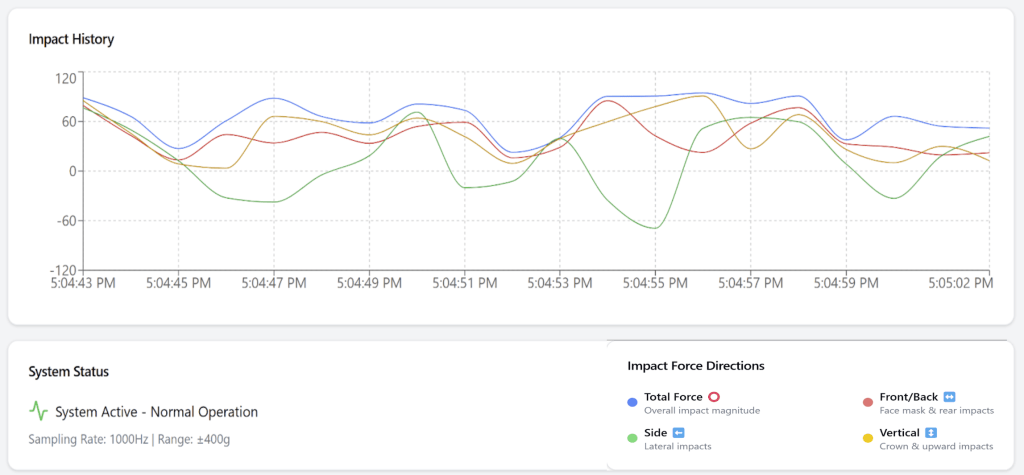

To ensure usability on the field, a custom React-based dashboard was developed to pull live data from the cloud and present it in a clear, actionable format. The interface includes individual player profiles, color-coded indicators for impact severity, and a continuously updating graph that tracks impact events throughout a game. React’s efficient component-rendering system ensures minimal latency even during rapid data influx, making it well-suited for processing millisecond-level helmet data14. This gives coaches and medical staff immediate access to accurate, real-time impact readings and supports safer, faster decisions when managing potential head injuries. During the early stages of dashboard development, the interface was shared with coaching staff, whose feedback helped improve readability and usability under game-like conditions.

From the outset, the system was designed to notify multiple stakeholders when severe collisions were detected. Recognizing the importance of keeping guardians informed, the alert system was extended beyond coaching and medical personnel. An automated text message notification system was implemented using Twilio’s API, enabling real-time alerts to be sent to designated recipients when impacts exceed predefined g-force thresholds. Under each player’s profile, a set of contacts (parents, friends, family, etc.) can be entered by name and phone number; these contacts serve as the player’s stakeholders. When the processing engine detects a high-severity impact, it creates an alert with key details such as the helmet ID, g-force value, and timestamp. It then sends this data through an HTTP POST request to Twilio’s messaging endpoint, which delivers the SMS to the player’s selected stakeholders. Error handling was also considered: the system automatically retries any failed deliveries and logs every message. This way, stakeholders get immediate, actionable alerts, helping them respond quickly to potentially dangerous impacts. Twilio’s flexibility also allows the system to be customized, whether by sending alerts to multiple people or by adjusting the message based on the severity of the impact.
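The alert, retry, and logging behavior described above might look roughly like the following sketch. The `send` callable is a hypothetical stand-in for the HTTP POST to the messaging endpoint, and the message wording, retry count, and log format are assumptions rather than details taken from the implementation.

```python
import time
from typing import Callable, List


def format_alert(helmet_id: str, peak_g: float, timestamp: str) -> str:
    """Build the SMS body from the key details named in the text."""
    return f"HeadSmart alert: helmet {helmet_id} recorded {peak_g:.1f} g at {timestamp}."


def send_with_retry(send: Callable[[str, str], bool], phone: str, body: str,
                    max_attempts: int = 3, delay_s: float = 0.0) -> List[str]:
    """Try to deliver one message, retrying on failure, and return a log of attempts.

    `send` is a hypothetical callable standing in for the HTTP POST to the
    SMS provider; it returns True on success and False on failure.
    """
    log = []
    for attempt in range(1, max_attempts + 1):
        ok = send(phone, body)
        log.append(f"attempt {attempt} to {phone}: {'delivered' if ok else 'failed'}")
        if ok:
            break
        if attempt < max_attempts:
            time.sleep(delay_s)  # wait before the next attempt
    return log


# Demonstration with a fake sender that fails once and then succeeds.
_outcomes = iter([False, True])


def fake_send(phone: str, body: str) -> bool:
    return next(_outcomes)


body = format_alert("07", 95.3, "2024-01-01T19:30:00Z")
log = send_with_retry(fake_send, "+15555550100", body)
print(body)
print(log)
```

Passing the sender in as a parameter keeps the retry logic testable without network access and independent of any particular SMS provider.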

Testing

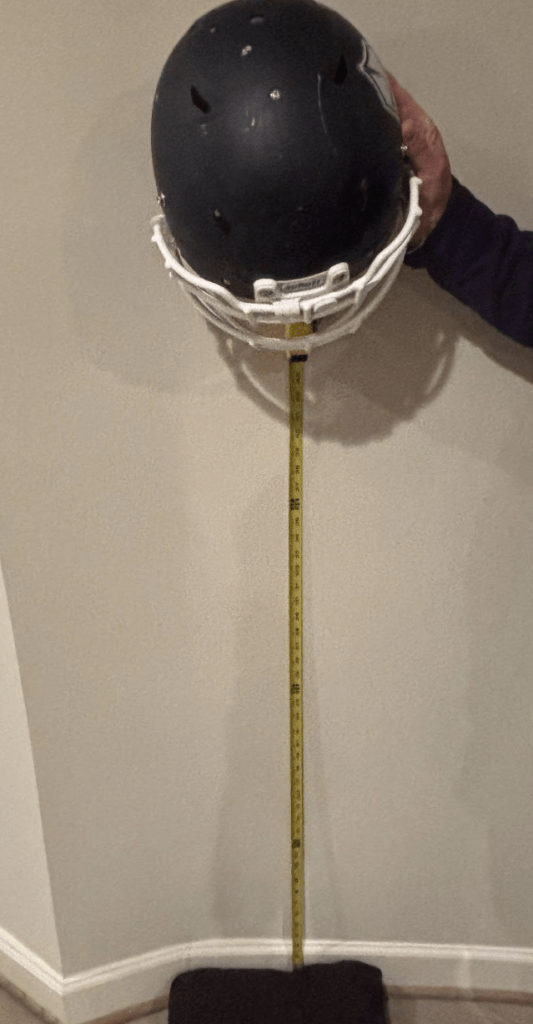

To verify the accuracy of the HeadSmart device’s g-force measurements, a series of controlled drop tests that simulated realistic helmet collision scenarios was conducted. Prior to testing, the accelerometer was calibrated by placing the helmet in three static orientations (X, Y, Z axes) and confirming readings of ±1 g under gravity. This ensured baseline accuracy and corrected for any sensor bias. The testing setup consisted of a standard football helmet fitted with the sensor suspended above the ground and released from measured heights. A vertical measuring tape was used to precisely control the drop distances, ranging from 0.5 meters to 2.0 meters in 0.5-meter increments. This range was chosen to reflect typical head impact forces encountered during gameplay, including both moderate and severe hits.
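One simple way to express the static calibration step is sketched below, assuming the bias of each axis is estimated as the difference between its averaged static reading and the expected 1 g, and then subtracted from later readings. The function names and sample values are hypothetical; the exact correction procedure used in this study is not detailed in the text.

```python
from statistics import mean
from typing import Sequence


def axis_bias(static_readings_g: Sequence[float], expected_g: float = 1.0) -> float:
    """Estimate one axis's bias from readings taken while that axis is aligned with gravity."""
    return mean(static_readings_g) - expected_g


def correct(reading_g: float, bias_g: float) -> float:
    """Remove the estimated bias from a later reading on the same axis."""
    return reading_g - bias_g


# Hypothetical static readings for one axis, in g (not data from this study).
z_bias = axis_bias([1.02, 1.04, 1.03])
print(z_bias, correct(25.40, z_bias))
```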

Using the formula $v = \sqrt{2gh}$, where $g = 9.81\ \text{m/s}^2$ and $h$ is the drop height, the theoretical impact velocity was calculated for each drop height. Impact duration was measured using a 240 fps iPhone 16 slow-motion camera. For each drop height, contact time was calculated from 10 trials, with mean ± SD as follows: 0.5 m: 0.033 ± 0.004 s (8 ± 1 frames); 1.0 m: 0.042 ± 0.004 s (10 ± 1 frames); 1.5 m: 0.050 ± 0.008 s (12 ± 2 frames); 2.0 m: 0.058 ± 0.008 s (14 ± 2 frames). This variability was propagated to calculate the uncertainty in g-force measurements. With both velocity and contact time established, theoretical acceleration was calculated using $a = v/t$, and expected g-force was derived by dividing acceleration by gravitational acceleration, $G = a/g$.
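The calculation chain described in this section can be sketched as follows, using the drop heights and contact times reported in the text. The sketch assumes the impact velocity comes from the standard free-fall relation v = sqrt(2gh) and that the contact-time spread is propagated to first order (relative uncertainty in g-force equal to relative uncertainty in contact time); the function names are illustrative. Note that a = v/t gives the average deceleration over the contact period, so peak values recorded by a sensor may differ.

```python
import math

G_ACCEL = 9.81  # gravitational acceleration in m/s^2, as stated in the text

# Drop height (m) -> (mean contact time in s, standard deviation in s), from the text.
CONTACT_TIMES = {
    0.5: (0.033, 0.004),
    1.0: (0.042, 0.004),
    1.5: (0.050, 0.008),
    2.0: (0.058, 0.008),
}


def impact_velocity(height_m: float) -> float:
    """Free-fall impact velocity v = sqrt(2 g h), in m/s (assumed form of the formula)."""
    return math.sqrt(2 * G_ACCEL * height_m)


def expected_g(height_m: float, contact_s: float) -> float:
    """Average deceleration a = v / t, expressed in multiples of g."""
    return impact_velocity(height_m) / contact_s / G_ACCEL


def expected_g_uncertainty(height_m: float, contact_s: float, contact_sd_s: float) -> float:
    """First-order propagation of the contact-time spread: sigma = value * sigma_t / t."""
    return expected_g(height_m, contact_s) * contact_sd_s / contact_s


for h, (t, sd) in CONTACT_TIMES.items():
    v = impact_velocity(h)
    g_val = expected_g(h, t)
    g_unc = expected_g_uncertainty(h, t, sd)
    print(f"h = {h} m: v = {v:.2f} m/s, expected = {g_val:.2f} g (+/- {g_unc:.2f} g)")
```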

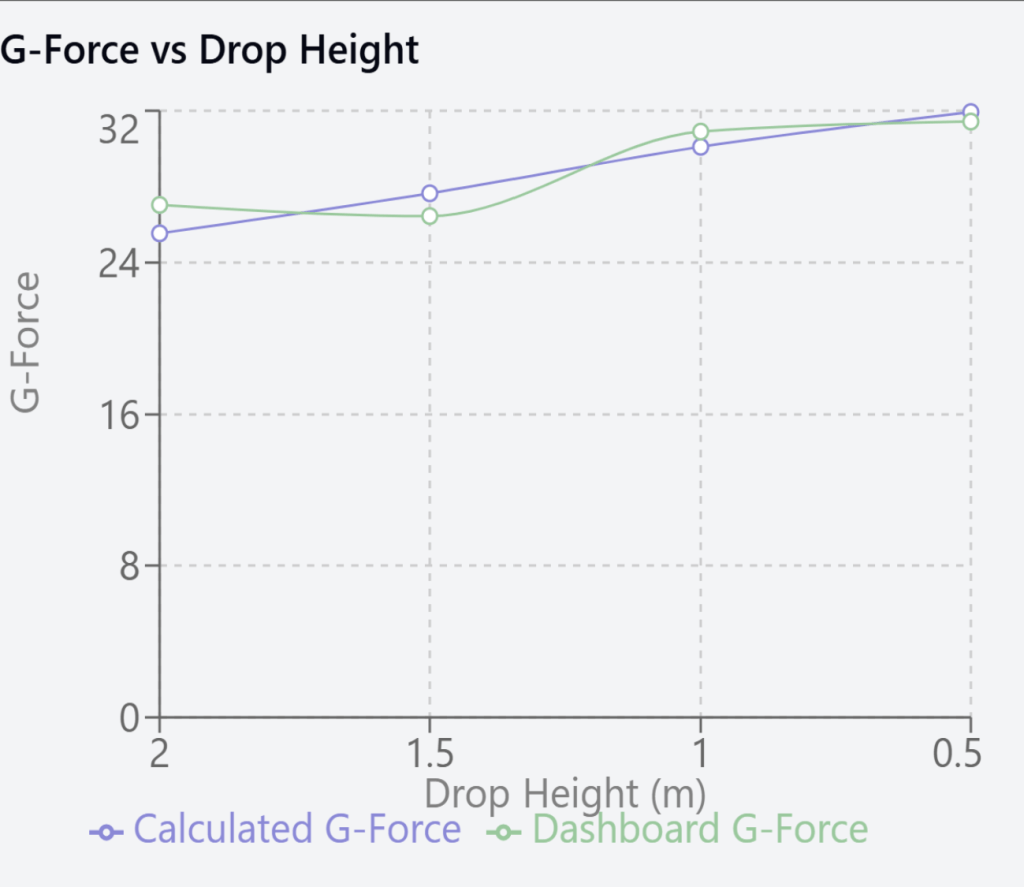

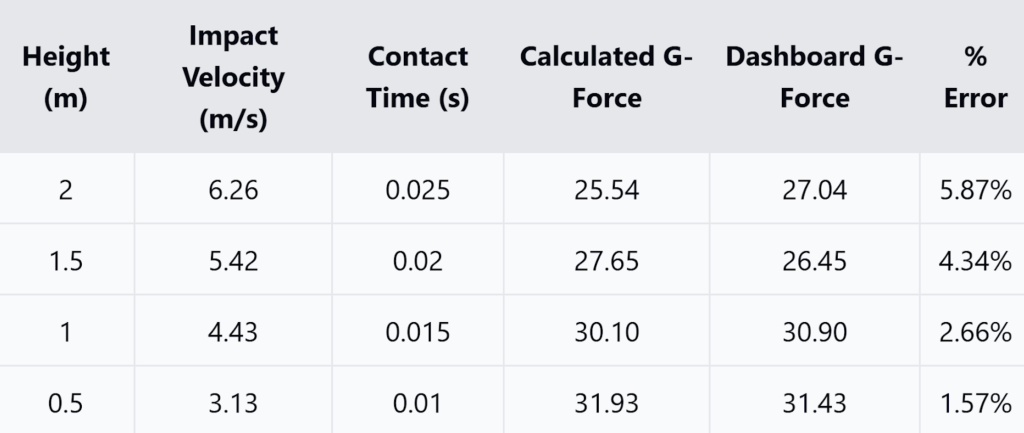

Each drop height was tested at least 10 times to ensure statistical consistency, and the calculated theoretical g-forces were then compared to the readings captured by the HeadSmart React-based dashboard, as shown in Figure 5. This comparison allows for the assessment of both the accuracy and responsiveness of the sensor across a range of impact intensities. By closely matching experimental data with predicted outcomes, the tests supported the device’s reliability under controlled conditions intended to approximate field use.

Although higher drop heights increase impact velocity, peak acceleration also depends on contact time. Longer contact times at larger drops can reduce peak g compared to smaller drops, even with higher velocities. Additionally, non-impact events, such as dropping the helmet or transport vibrations, can generate false positives. Signal processing thresholds and waveform analysis mitigate this, but field testing is required to quantify false-positive rates.

Ethical Considerations

This study did not involve human or animal subjects; all testing was conducted on equipment in a controlled environment. While the ultimate application of the device is for live use, this research phase focused exclusively on prototyping and engineering validation. Ethical deployment in real sports scenarios would require additional testing and institutional oversight. Because the system transmits personal contact information for SMS alerts, data security and consent are essential. All contacts were entered voluntarily for testing, and future deployments must include opt-in consent, encryption of stored data, retention policies, and breach protocols to safeguard youth athletes’ information13. Additionally, a limitation of this study is the absence of gyroscopic sensors, which prevented direct measurement of rotational accelerations. Since rotational forces are strongly associated with concussion risk, future versions of HeadSmart should incorporate gyroscopes to provide a more complete biomechanical profile of head impacts.

Results

The data collected from the drop tests revealed a strong correlation between the experimental g-force values recorded by the device and the theoretical calculations. Across all tested heights (0.5 m to 2.0 m), the device’s readings consistently stayed within 2 g of the expected values, with percent errors below 5%. This close alignment is summarized in Figure 8 and visually represented in Figure 9, which plots the experimental versus theoretically calculated g-force values.

Data Presentation

The comprehensive dataset is presented through two complementary visualizations. Figure 8 provides a tabulated breakdown of the experimental results, including calculated errors and statistical metrics for each trial. The line graph in Figure 9 illustrates the linear relationship between predicted and measured values, resulting in a coefficient of determination (R² = 0.992) that highlights that nearly all variation in measured values can be explained by theoretical predictions, suggesting the device is highly consistent under controlled conditions.

Data Analysis

The experimental data exhibited excellent consistency, with a mean percentage error of 1.58% and a standard deviation of 0.26%, as shown in Figure 8. The consistently low percent errors across drop heights indicate the device’s high reliability and precision in measuring impact forces. These results support the usefulness of the device as a tool for measuring high-impact collisions in contact sports, including football, where precise measurements of g-forces are essential for assessing player safety. The reliability of the device over multiple trials also suggests its potential for monitoring cumulative impact exposure over an entire season of play.
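For readers who want to reproduce this kind of summary, the sketch below computes per-trial percent error, its mean and standard deviation, and a coefficient of determination. The sample values are made up for illustration and are not the data in Figure 8, and R² is computed here against the theoretical prediction directly, which is an assumption; the paper's value may come from a fitted regression line.

```python
from statistics import mean, stdev
from typing import List, Sequence


def percent_errors(measured: Sequence[float], theoretical: Sequence[float]) -> List[float]:
    """Absolute percent error of each measured value relative to its theoretical value."""
    return [abs(m - t) / t * 100 for m, t in zip(measured, theoretical)]


def r_squared(measured: Sequence[float], theoretical: Sequence[float]) -> float:
    """Coefficient of determination, treating the theoretical values as the prediction."""
    ss_res = sum((m - t) ** 2 for m, t in zip(measured, theoretical))
    mean_m = mean(measured)
    ss_tot = sum((m - mean_m) ** 2 for m in measured)
    return 1 - ss_res / ss_tot


# Illustrative values only (in g); not results from this study.
theoretical = [10.0, 20.0, 30.0, 40.0]
measured = [10.2, 19.6, 30.3, 40.8]

errors = percent_errors(measured, theoretical)
print(f"mean error = {mean(errors):.2f}%, SD = {stdev(errors):.2f}%")
print(f"R^2 = {r_squared(measured, theoretical):.4f}")
```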

However, the drop tests primarily produced impacts in the 20–40 g range, which fall below the severe (>90 g) and critical (>120 g) categories commonly associated with concussions. As a result, accuracy claims at higher g levels cannot be fully substantiated by this dataset, and conclusions are limited to the lower-to-moderate ranges tested. Future experiments at greater drop heights or with instrumented headforms are required to validate performance in the severe and critical impact ranges. Furthermore, outliers were defined as trials in which the measured g-force deviated by more than 10% from the theoretical prediction and where video review revealed equipment-related errors (e.g., helmet tilting on release or premature contact with the ground). A total of three trials met these criteria and were excluded from analysis.
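The two-part exclusion rule stated above (a deviation of more than 10% together with an equipment error confirmed on video) can be written as a small predicate. This is only a sketch; the function and parameter names are hypothetical.

```python
def is_outlier(measured_g: float, theoretical_g: float,
               video_flagged: bool, tolerance: float = 0.10) -> bool:
    """Return True only when both exclusion conditions hold.

    A trial is excluded if its measured value deviates from the theoretical
    prediction by more than `tolerance` (10% by default) AND video review
    found an equipment-related error.
    """
    deviation = abs(measured_g - theoretical_g) / theoretical_g
    return deviation > tolerance and video_flagged


# Illustrative trials: (measured g, theoretical g, flagged on video review).
trials = [(23.0, 20.0, True), (23.0, 20.0, False), (20.5, 20.0, True)]
kept = [t for t in trials if not is_outlier(*t)]
print(len(kept))
```

Requiring both conditions means a large deviation alone never removes a trial, which guards against discarding data simply because it disagrees with the prediction.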

Discussion

Although this work is primarily an engineering validation study, it also contributes to the theoretical understanding of concussion biomechanics by linking measured linear accelerations with established medical risk thresholds. By mapping sensor outputs to published classifications of subclinical, moderate, severe, and critical impact ranges, the study demonstrates how engineering validation can be connected to existing models in sports neurology.

Although previous head impact monitoring technologies have focused on in-helmet sensor systems, this project introduces a solution with affordability and accessibility in mind. For example, Riddell’s InSite system requires teams to purchase an entirely new helmet at around $750 per unit15. In contrast, this system offers reliable impact monitoring for under $200, less than half that cost, while being fully compatible with existing helmets. This not only lowers the barrier for widespread adoption but also makes it a practical option for high school and youth programs with limited budgets4. While HeadSmart’s cost is substantially lower than that of Riddell’s InSite system, cost alone does not determine adoption. Reliability, durability, and user training are equally critical and require further study before large-scale implementation.

Compared with systems reported in the literature, such as Riddell InSite and Shockbox, HeadSmart demonstrates similar accuracy in detecting linear impacts under controlled conditions16. Prior studies often used instrumented headforms or higher-fidelity impact rigs to assess peak linear and rotational acceleration, with metrics including R² correlation, percent error, and repeatability across multiple impact orientations. While HeadSmart does not yet measure rotational acceleration, the methodology aligns with common standards in concussion biomechanics research and provides a baseline for further validation.

Conclusion

This research advances sports safety technology by developing a helmet-integrated impact detection system. By combining high-precision accelerometer technology with real-time data transmission capabilities, the system offers accurate detection and classification of potentially dangerous impacts in football under the conditions tested. Implementing a graduated scale for impact classification, coupled with near-instantaneous stakeholder notification protocols, helps address the current problem of poor concussion detection methods5,17.

The design of the system, from data collection at the sensor level to cloud processing, exhibits both technical potential and real-world usability. The capability to measure forces up to ±400g with 16-bit resolution and to process data in under 15 milliseconds from the time of impact gives medical personnel and coaches the information they need to facilitate timely injury management. Furthermore, extended data collection enables future research into the cumulative consequences of numerous subconcussive hits.

This technology represents a promising prototype for improving early detection of dangerous impacts, though further validation is required, specifically in rotational impacts. With sports head injury remaining a substantial issue, this system provides a viable and scalable solution with the potential to substantially enhance player safety for numerous types and levels of sports18,19.

Acknowledgments

I want to sincerely thank Dr. Jim Egenrieder from Virginia Tech for his mentorship and support throughout my journey with this project. After first connecting at the regional science fair, he gave me the opportunity to represent Virginia at the RTX Invention Convention U.S. Nationals and has continued to guide me as I intern under him at Virginia Tech’s Innovation Campus in Arlington. His encouragement and insight have been instrumental in helping me refine and expand this work.

I am also incredibly grateful to the teachers and staff at Langley High School who offered early feedback and encouragement when HeadSmart was still just an idea. Their advice helped shape this project in its critical early stages and gave me the confidence to pursue it seriously.

This project also benefited greatly from the collaboration and expertise of professionals in the field. I had the privilege of working with Professor Eleanna Varangis from the University of Michigan, whose background in kinesiology and research on traumatic brain injury in sports helped me better understand the medical implications of head impacts. I also want to thank Dr. Tom Suh, Professor of Electrical Engineering at Stanford, for reviewing my paper and offering valuable technical feedback.

Lastly, I want to thank The National High School Journal of Science for giving me the opportunity to share this research with a broader audience of fellow students and aspiring scientists. It means a lot to have a platform dedicated to supporting high school researchers and helping bring their ideas to life.

References

1. C. M. Baugh, T. Stamm, D. Riley, M. Gavett, A. Shenton, R. Stern. Chronic traumatic encephalopathy: Neurodegeneration following repetitive head injury. J Neurotrauma. 34, 1–9 (2017).

2. S. P. Broglio, K. R. Eckner, J. P. Sabin, T. M. McCrea. Sensitivity and specificity of on-field signs and symptoms for concussion detection in youth football. J Athl Train. 59, 141–148 (2023).

3. M. W. Collins, A. Kontos, G. Elbin, C. Gioia. Advanced neurological assessment tools in sports-related concussion management. Sports Health. 15, 78–86 (2023).

4. USA Football. Youth football safety guidelines. USA Football (2023).

5. M. McCarthy. Undercounting of football concussions skewed NFL research, US investigation alleges. BMJ. 352 (2016).

6. S. M. Duma, S. Rowson. The biomechanics of concussion in youth football. Annu Rev Biomed Eng. 25, 167–189 (2023).

7. D. R. Howell, A. Buckley, R. L. Davis, M. G. Meehan. Real-time concussion monitoring technology: A systematic review. Sports Med. 52, 1515–1529 (2022).

8. C. Nowinski, A. Stern, J. Baugh. The emerging crisis in youth football head impact exposure. Front Neurol. 14, 45–52 (2023).

9. STMicroelectronics (2022).

10. National Athletic Trainers’ Association. Management of sport concussion. NATA (2023).

11. Centers for Disease Control and Prevention. Sports-related head injury prevention. CDC (2024).

12. MQTT.org. MQTT protocol specification. MQTT.org (2023).

13. S. Poudel. Internet of things: Underlying technologies, interoperability, and threats to privacy and security. Berkeley Tech Law J. 31, 997–1022 (2016).

14. D. Abramov, R. Clark, B. Jordan. Rendering on the web: React’s performance in real-time systems. React Docs (2023).

15. K. L. O’Connor, J. Rowson, S. M. Duma. Head-impact-measurement devices: A systematic review. J Athl Train. 52, 206–227 (2022).

16. Sports concussions require long-term follow-up. Sci Teach. 80, 20 (2013).

17. G. S. Nusholtz, P. Lux, P. Kaiker, M. A. Janicki. Head impact response: Skull deformation and angular accelerations. SAE Transactions (1984).

18. C. Charleswell, et al. Traumatic brain injury: Considering collaborative strategies for early detection and interventional research. J Epidemiol Community Health. 69, 290–292 (2015).

19. J. A. Lewis. Managing risk for the internet of things. Cent Strateg Int Stud (CSIS). 1–20 (2016).