Abstract

Robots that look and act like humans can connect effectively with children with autism spectrum disorder (ASD). Artificial intelligence (AI) makes these robots more interactive and engaging. Because they talk and behave like humans, they are promising tools for teaching children with ASD. Through games, they make learning enjoyable and effective, and as outdoor companions they can support play and exercise, turning learning into an active process. AI-enabled robots can also tailor lessons to each child's unique needs so that children stay engaged. This study reviews how AI models are used in robots for special education, focusing on children with ASD, and examines how AI-driven humanoid robots support these children's academic learning, social interaction, and critical thinking.

Introduction

Artificial Intelligence (AI), originally introduced by John McCarthy in 1956, has gained momentum for its ability to replicate human-like learning, reasoning, and adaptation1.

In education, AI powers tools such as intelligent tutoring systems, adaptive platforms, and robotics, which help personalize instruction, track progress, and reduce teacher workload.

AI has also been identified as one of the most valuable technologies in the field of special educational needs (SEN). AI tools in this field aim to enhance the way children interact with their environment, to promote learning, and to enrich their daily lives2.

AI-driven humanoid robots can provide personalized, structured, and interactive learning plans that help children with ASD develop critical abilities, including communication with others, focus, and engagement. Because the robot behaves consistently while adapting to each child, students may become more confident and independent over time. Growing research interest in this field suggests that AI technologies will continue to play a vital role in shaping inclusive, personalized learning environments for students with ASD.

AI-driven humanoid robots learn through machine learning, including imitation learning and reinforcement learning. Such robots can be programmed for social and educational purposes to help children with ASD improve their communication, social, and academic skills.

Learning Techniques of AI-Powered Humanoid Robots:

- Machine Learning: Robots analyze data to improve their decision-making.

- Imitation Learning: Robots learn by observing and mimicking human actions.

- Reinforcement Learning: Robots adapt their behavior over time based on rewards or feedback from the outcomes of their actions.
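The reinforcement-learning idea can be illustrated with a minimal sketch, assuming a robot chooses among a few activities and receives an engagement score after each one. It uses an epsilon-greedy multi-armed bandit, one of the simplest forms of reinforcement learning. The activity names, scores, and the functions `run_sessions` and `choose_activity` are hypothetical and are not taken from any of the robots or studies reviewed here.

```python
import random


def choose_activity(estimates, epsilon, rng):
    """Epsilon-greedy choice: explore a random activity with probability
    epsilon, otherwise exploit the activity with the highest estimate."""
    if rng.random() < epsilon:
        return rng.choice(sorted(estimates))
    return max(estimates, key=estimates.get)


def run_sessions(reward_fn, activities, n_rounds=50, epsilon=0.1, seed=0):
    """Learn a per-activity engagement estimate from reward feedback."""
    rng = random.Random(seed)
    estimates = {a: 0.0 for a in activities}
    counts = {a: 0 for a in activities}

    def update(activity):
        reward = reward_fn(activity)
        counts[activity] += 1
        # Incremental mean: nudge the estimate toward the observed reward.
        estimates[activity] += (reward - estimates[activity]) / counts[activity]

    # Try every activity once so each starts with a real estimate.
    for activity in activities:
        update(activity)
    for _ in range(n_rounds):
        update(choose_activity(estimates, epsilon, rng))
    return estimates, counts


# Hypothetical engagement scores (0-1) standing in for observed child engagement.
ENGAGEMENT = {"turn-taking game": 0.4, "emotion cards": 0.7, "story time": 0.5}
estimates, counts = run_sessions(ENGAGEMENT.get, list(ENGAGEMENT))
best = max(estimates, key=estimates.get)  # "emotion cards"
```

Here the scores are fixed, so the estimates are exact after one visit; real engagement signals would be noisy, which is why the occasional exploration step matters.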

| Robot name | AI model / technology | Key features | Focus area |
|---|---|---|---|
| NAO (SoftBank Robotics) | Speech: Google Speech API, IBM Watson; Vision: OpenCV + CNNs; Behavior: rule-based + reinforcement learning | Full-body humanoid with gestures; facial recognition; customizable speech and movement | Social interaction; turn-taking; verbal and non-verbal communication |
| QTrobot (LuxAI) | Emotion AI: CNN-based facial recognition; NLP: Rasa, Dialogflow; Adaptive learning: reinforcement learning | Animated screen face; low-stimulus design; touchscreen for interactive tasks | Emotional learning; attention training; adaptive engagement |
| Kaspar (University of Hertfordshire) | Rule-based AI; gaze and touch detection using basic vision models | Child-size robot with a realistic face; simple gestures and expressions; tactile interaction | Social skills; sensory desensitization; role-play and daily routines |
| Milo (RoboKind) | Emotion engine (Ekman-based facial model); NLP: custom dialogue systems; ML for behavior tracking | Human-like facial expressions; curriculum-aligned content; tracks student progress | Emotion recognition; conversational skills; empathy building |
| Zeno R25 (Hanson Robotics / RoboKind) | Facial expression AI; Conversational AI: Microsoft LUIS, Watson, GPT-based systems; embodied cognition models | Lifelike facial movements; natural voice; story-based learning | Advanced emotion modeling; storytelling; complex social cues |
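The rule-based AI listed for Kaspar (and, combined with reinforcement learning, for NAO) can be pictured as a fixed table that maps detected events to scripted responses. The sketch below is hypothetical: the `Event` type, the event kinds and targets, and the responses are invented for illustration and do not reflect any robot's actual software.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Event:
    """A hypothetical perception event reported by the robot's sensors."""
    kind: str    # e.g. "gaze" or "touch"
    target: str  # e.g. "face" or "hand"


# Hypothetical rule table: (event kind, target) -> scripted robot response.
RULES = {
    ("gaze", "face"): "smile and say 'Hello!'",
    ("touch", "hand"): "wave back",
    ("touch", "foot"): "say 'Please be gentle.'",
}

# Fallback when no rule matches: prompt the child to re-engage.
DEFAULT_RESPONSE = "say 'Can you look at me?'"


def respond(event):
    """Look up the scripted response for an event, or return the default."""
    return RULES.get((event.kind, event.target), DEFAULT_RESPONSE)


session = [Event("gaze", "face"), Event("touch", "hand"), Event("gaze", "window")]
responses = [respond(e) for e in session]
```

Because the mapping is fixed, the same event always produces the same response; adaptive systems instead adjust such mappings from feedback.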

Methods

The objective of this research was to explore the use of AI-enabled robots in educating students with ASD and to assess the reported results of these investigations. Thirteen studies published between 2018 and 2024 were analyzed. The search terms were grouped as follows: “Artificial Intelligence” OR “Machine Learning” OR “Deep Learning” OR “Machine intelligence” OR “Intelligent support” OR “Intelligent Virtual Reality”; “Autism Spectrum Disorder” OR “ASD” OR “Autism”; “Education” OR “Teaching” OR “Learning” OR “Instruction”; “Robot” OR “Humanoid robots” OR “Automated tutor” OR “Personal tutor” OR “Intelligent agent” OR “Expert system”; “Natural Language Processing”; and others.
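The grouped search terms can be assembled into a single Boolean query string. This is a minimal sketch assuming, as is typical but not stated explicitly above, that terms within a group are joined with OR and groups are joined with AND; only a subset of the terms is shown, and each database (PubMed, IEEE Xplore, etc.) has its own field tags and syntax that this sketch ignores. The function names are hypothetical.

```python
def or_group(terms):
    """Quote each term and join the group with OR inside parentheses."""
    return "(" + " OR ".join(f'"{t}"' for t in terms) + ")"


def build_query(groups):
    """Combine term groups with AND (an assumption about the review's logic)."""
    return " AND ".join(or_group(g) for g in groups)


# A subset of the term groups listed in the Methods section.
TERM_GROUPS = [
    ["Artificial Intelligence", "Machine Learning", "Deep Learning"],
    ["Autism Spectrum Disorder", "ASD", "Autism"],
    ["Education", "Teaching", "Learning", "Instruction"],
    ["Robot", "Humanoid robots"],
]

query = build_query(TERM_GROUPS)
print(query)
```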

Data Selection Inclusion Criteria:

○ Articles containing the keywords listed above related to AI and robotics.

○ Research questions involved the use of AI in educational settings.

○ Articles were published in 2018 or later.

○ Studies focused on AI-based educational robots or humanoid robots.

Data Selection Exclusion Criteria:

○ Publications that are not related to AI or robotics.

○ Articles that do not include research on AI and robots in educational settings.

○ Duplicates or studies that focus solely on non-educational robotics applications.
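The inclusion and exclusion criteria can be expressed as a simple screening function. This is a sketch under stated assumptions: the `Record` fields and the order in which criteria are checked are hypothetical, and in the review itself screening was performed by human reviewers rather than by code.

```python
from dataclasses import dataclass


@dataclass
class Record:
    """Hypothetical bibliographic record with the fields needed for screening."""
    title: str
    year: int
    mentions_ai_or_robotics: bool
    educational_setting: bool
    is_duplicate: bool = False


def screen(record):
    """Apply the exclusion and inclusion criteria; return (included, reason)."""
    if record.is_duplicate:
        return False, "duplicate"
    if not record.mentions_ai_or_robotics:
        return False, "not related to AI or robotics"
    if not record.educational_setting:
        return False, "no educational application"
    if record.year < 2018:
        return False, "published before 2018"
    return True, "included"


# Hypothetical records, not drawn from the reviewed literature.
records = [
    Record("Study A", 2019, True, True),
    Record("Study B", 2012, True, True),
    Record("Study C", 2020, True, False),
    Record("Study D", 2021, False, True),
]
included = [r.title for r in records if screen(r)[0]]
```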

Type of Review

This study constitutes a narrative review aimed at synthesizing recent research (2018–2024) on the application of AI-enabled robots in educational interventions for students with Autism Spectrum Disorder (ASD). It does not follow a formal meta-analytic approach due to the heterogeneity of outcome measures and methodologies across studies.

Search Strategy

The literature search was conducted across the following academic databases:

- PubMed

- IEEE Xplore

- SpringerLink

- ScienceDirect

- Google Scholar (for gray literature and supplemental insights)

Screening Process

- Stage 1 – Title and Abstract Screening: Two reviewers independently screened titles and abstracts based on the inclusion criteria.

- Stage 2 – Full-Text Review: Selected studies were reviewed in full to confirm relevance and ensure alignment with inclusion criteria.

- Stage 3 – Data Extraction: Data were extracted into a standardized form capturing publication details, study design, outcome measures, and key findings.
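A standardized extraction form like the one in Stage 3 can be modeled as a fixed record type and exported to CSV. This is a minimal sketch; the field names mirror the four items listed above, but `ExtractionRecord` and `to_csv` are hypothetical names, and the example values are taken from the results table except the study design, which is left as a placeholder.

```python
import csv
import io
from dataclasses import asdict, dataclass, fields


@dataclass
class ExtractionRecord:
    """One row of a standardized extraction form (illustrative field names)."""
    publication: str       # publication details: author, year, venue
    study_design: str
    outcome_measures: str
    key_findings: str


def to_csv(records):
    """Serialize extraction records to CSV text with a header row."""
    buffer = io.StringIO()
    names = [f.name for f in fields(ExtractionRecord)]
    writer = csv.DictWriter(buffer, fieldnames=names)
    writer.writeheader()
    for record in records:
        writer.writerow(asdict(record))
    return buffer.getvalue()


example = ExtractionRecord(
    publication="Chung 2019, Journal of Developmental and Physical Disabilities",
    study_design="(to be filled from the full text)",
    outcome_measures="eye contact; verbal initiation",
    key_findings="+30% eye contact, +25% verbal initiation",
)
csv_text = to_csv([example])
```

A fixed schema makes every study answer the same questions, which is what allows the qualitative comparison described in the next section.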

Data Analysis Approach

Given the diversity of study designs and outcome measures, a qualitative synthesis approach was used. Patterns in intervention focus (e.g., emotion recognition, social engagement), robot types (e.g., NAO, Zeno R25), and reported outcomes (behavioral, engagement, learning gains) were thematically categorized. No statistical pooling of data was performed.
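The thematic categorization of robot types can be illustrated by tallying the "Robot Type" column of the results table against a few keyword rules. This is a sketch only: the theme labels and matching rules (`THEME_RULES`, `tally_themes`) are hypothetical and are not the authors' coding scheme. Since a study can match several themes, the counts are not meant to sum to thirteen, and no statistical pooling is involved.

```python
import re
from collections import Counter

# "Robot Type" column of the results table, one entry per study.
ROBOT_TYPES = {
    "Chung 2019": "SI-Robotic game-based",
    "Daniels 2018b": "Emotion recognition-ED",
    "Daniels 2018a": "Game-based",
    "Kalantarian 2018": "Android app",
    "Sahin 2018": "AR-assisted game-based",
    "Scassellati 2018": "Robotic game-based",
    "Vahabzadeh 2018": "SI",
    "Zhang 2019": "Robot-assisted",
    "Baldassarri": "SI & IT",
    "Moon 2020": "VR",
    "Wan 2022": "SI",
    "Tuna 2022": "Game-based",
    "Li 2023": "AR game-based",
}

# Hypothetical theme rules applied to the tokenized label.
THEME_RULES = {
    "game-based": lambda toks: "game" in {t.lower() for t in toks},
    "robotic": lambda toks: any(t.lower().startswith("robot") for t in toks),
    "systematic instruction (SI)": lambda toks: "SI" in toks,
    "AR/VR": lambda toks: bool({"AR", "VR"} & set(toks)),
}


def tokenize(label):
    """Split a label on any non-letter character, dropping empty pieces."""
    return [t for t in re.split(r"[^A-Za-z]+", label) if t]


def tally_themes(labels):
    """Count how many labels match each theme (a label may match several)."""
    counts = Counter()
    for label in labels:
        toks = tokenize(label)
        for theme, rule in THEME_RULES.items():
            if rule(toks):
                counts[theme] += 1
    return counts


theme_counts = tally_themes(ROBOT_TYPES.values())
```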

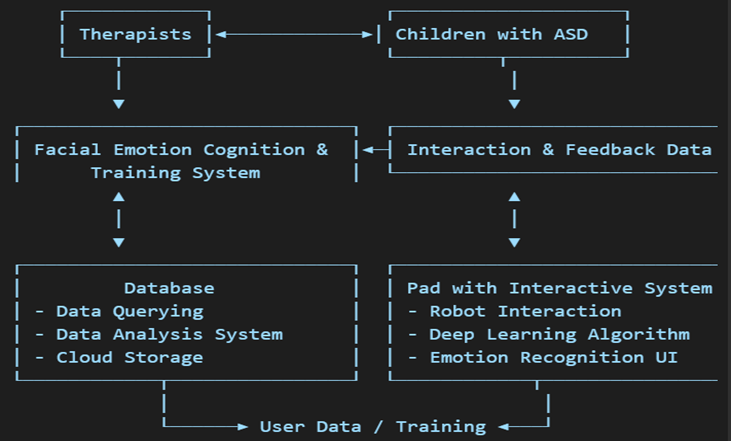

Human-AI Enabled Robot Interaction Mechanism

AI-enabled robots aim to enhance therapy for children with ASD through technologies for emotion detection and analysis, interaction, and learning. They are designed to improve therapist-child interaction and children's social and emotional understanding. These systems use facial emotion cognition and training technology, which helps children detect and understand facial emotions, a skill that is crucial for the development of social communication.

Data are collected during therapist-child communication through therapy, interaction, and feedback, and are then used to evaluate the effectiveness of the therapy and to improve future sessions. This information is stored in a database that can process and analyze the data and keeps it in the cloud for secure access. The system can also include a tablet running an interactive application that incorporates robotic interactions to make learning more enjoyable for children. It uses deep learning algorithms for pattern recognition and an emotion recognition user interface (UI) that displays emotions for the children to learn from. The database is continually updated with user data and training inputs, which are fed back into the interactive system to improve its adaptability and effectiveness. The goal of this integrated approach is to improve the therapeutic learning process by using technology to promote communication and emotional skills among children with ASD.
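The feedback loop described above, in which session data are stored and then used to adapt later sessions, can be sketched as follows. This is a minimal illustration under loud assumptions: an in-memory list stands in for the cloud database, and the difficulty levels, thresholds, and names (`SessionStore`, `next_level`) are hypothetical rather than part of any system in the reviewed studies.

```python
from dataclasses import dataclass, field


@dataclass
class SessionStore:
    """In-memory stand-in for the session database: one entry per trial."""
    trials: list = field(default_factory=list)

    def log(self, emotion, correct):
        """Record whether the child identified the displayed emotion correctly."""
        self.trials.append({"emotion": emotion, "correct": correct})

    def recent_accuracy(self, window=5):
        """Share of correct answers over the last `window` trials (None if empty)."""
        recent = self.trials[-window:]
        if not recent:
            return None
        return sum(t["correct"] for t in recent) / len(recent)


def next_level(level, accuracy, up=0.8, down=0.5, max_level=3):
    """Hypothetical adaptation rule: raise difficulty when recent accuracy is
    high, lower it when accuracy is low, otherwise keep the current level."""
    if accuracy is None:
        return level
    if accuracy >= up:
        return min(level + 1, max_level)
    if accuracy < down:
        return max(level - 1, 1)
    return level


store = SessionStore()
for emotion, correct in [("happy", True), ("sad", True), ("angry", False),
                         ("happy", True), ("surprised", True)]:
    store.log(emotion, correct)

accuracy = store.recent_accuracy()  # 4 of 5 correct -> 0.8
level = next_level(1, accuracy)     # 0.8 meets the "up" threshold -> 2
```

Keeping the adaptation rule separate from the storage layer means the same logged data could later feed a learned model instead of fixed thresholds.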

Results

| Citation | Setting & Age | Robot Type | Data | AI Model Name | Predictors | Performance Metrics |
|---|---|---|---|---|---|---|
| Chung 20193 | School, Age 9-11 | SI-Robotic game-based | Game sessions, logs | Rule-based AI | Eye Contact, Verbal Initiation, Turn-Taking, Task Duration, Facial Emotion | +30% eye contact, +25% verbal initiation |
| Daniels 2018b4 | Not specified, Mean age 11.65 | Emotion recognition-ED | Smart glasses data | Facial & gesture recognition AI | Eye Gaze, Facial Expression, Emotion Name, Comfort Rating | +22% labeling accuracy, better comfort |
| Daniels 2018a5 | Parents, Mean age 9.57 | Game-based | Logs, transcripts | Adaptive learning AI | Social Cue Reaction, Eye Gaze, Timing, Game Level, Feedback, Social Score | +35% eye gaze, +18% social response |
| Kalantarian 20186 | Parents, Age 4-12 | Android app | Facial data | CNN + Android API | Emotion Label, Smile Duration, Child Response | +28% emotion accuracy, +20% engagement |
| Sahin 20187 | Teachers/Parents, Age 13.11 | AR-assisted game-based | AR session logs | AR + gesture/emotion AI | Gesture, Speech Intent, AR Tag, Emotion Signal, Interaction Count | +31% gesture match, +15% verbal response |
| Scassellati 20188 | Parents, Age 6-12 | Robotic game-based | Video logs | DL social modeling | Eye Gaze, Emotion Match, Social Cue, Game Stage, Repeat Behavior, Feedback | +40% motivation, -25% repetitive behavior |
| Vahabzadeh 20189 | Teachers, Age 7.5 | SI | Logs, videos, scores | Pattern recognition AI | Engagement Level, Task Error, Verbal Cue Success, Eye Movement | -27% withdrawal, +19% engagement |
| Zhang 201910 | School, Age 5-9 | Robot-assisted | Game logs | Scripted feedback AI | Task Score, Instruction Following, Eye Contact, Repetition, Turn-Taking | +20% social rules, +15% cue response |
| Baldassarri 202111 | Not specified, Age 10-18 | SI & IT | Interface logs | Tangible AI + NLP | Emotion ID, Attention Level, Gesture Frequency, Table Time, Labeling Accuracy, Feedback Type, Prompt | +38% labeling, +29% attention |
| Moon 202012 | Youths, Age 13-19 | VR | Training logs | VR cognitive AI | Task Completion, Emotion Change, Event Type, Voice, Reaction Time | +33% flexibility, +21% problem solving |
| Wan 202213 | Hospital, Age 5-10 | SI | Interaction and observation data | Emotion recognition, HCI, AI | Emotion Expressed, Correct Emotion, Face Reaction Time, Feedback Rating | +30% expression accuracy, +22% interaction time |
| Tuna 202214 | School, Age 6-8 | Game-based | Play sessions | Scripted LTM AI | Symbol Type, Play Success, Teacher Rating | +26% symbolic play performance |
| Li 202315 | Rehab center, Age 5-8 | AR game-based | Game logs, videos | AR facial detector AI | Expression ID, Attention Length, Response Time, Parent Input, Gaze, Accuracy | +32% expression recognition, +25% participation |

Terms: SR (Social Robot), AR (Augmented Reality), VR (Virtual Reality), AI (Artificial Intelligence), SI (Systematic Instruction), IT (Interactive Technology-Assisted Instruction), ED (Emotional Domain), DL (Deep Learning), LTM (Long-Term Memory), HCI (Human-Computer Interaction), NLP (Natural Language Processing), API (Application Programming Interface), and CNN (Convolutional Neural Network).
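The performance metrics above (for example, "+30% eye contact") do not state whether they are relative changes from baseline or differences in percentage points, and the two can differ substantially. The sketch below shows both calculations on hypothetical numbers; how each reviewed study actually computed its figures is not specified here.

```python
def relative_change_pct(before, after):
    """Relative change from a baseline, expressed in percent."""
    if before == 0:
        raise ValueError("relative change is undefined for a zero baseline")
    return (after - before) * 100 / before


def point_change(before_pct, after_pct):
    """Absolute change between two percentages, in percentage points."""
    return after_pct - before_pct


# Hypothetical example: eye contact observed in 40% of intervals before the
# intervention and in 52% after.
rel = relative_change_pct(40, 52)  # 30.0 -> "+30%" as a relative change
pts = point_change(40, 52)         # 12   -> "+12 percentage points"
```

The same observation can therefore be reported as "+30%" or "+12 points", which is one reason standardized outcome reporting matters for comparing studies.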

Discussion

Interventions that increasingly use artificial intelligence and robotic technologies to support child development have shown measurable effects on cognitive, emotional, and social development. Table II presents an analysis of several of these studies, with participants aged 4-19 in a variety of settings, including schools, homes, hospitals, and rehabilitation centers.

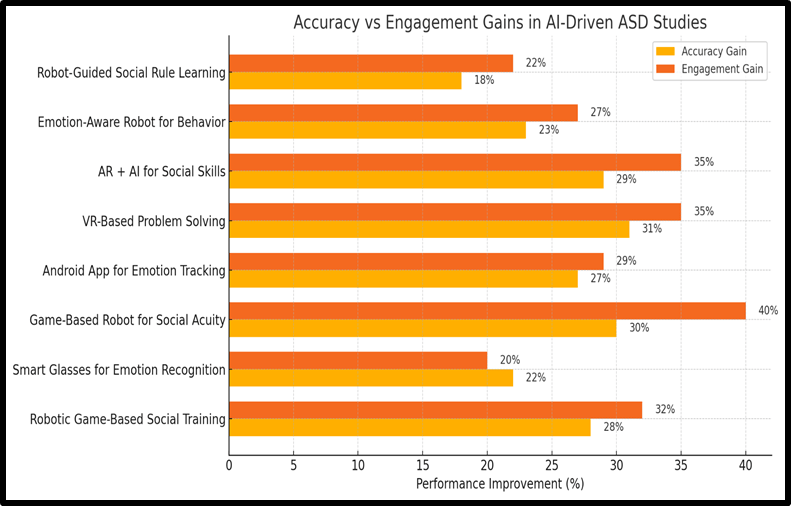

SI robots that applied rule-based AI in educational environments had a positive impact on children's social behaviors: among children aged 9-11, eye contact and verbal initiation increased by 30% and 25%, respectively. Similarly, home-based programs supported by parents and involving adaptive learning AI produced gains in eye gaze (+35%) and social responses (+18%), supporting the value of adaptive models that respond in real time. Smart glasses with facial and gesture recognition increased children's emotion-labeling accuracy by 22% and improved their comfort ratings. Such wearable tools supported more natural, embodied communication, connecting formal instruction with emotional awareness in daily life.

Social modeling through DL also improved behavioral outcomes: children aged 6-12 showed a 40% increase in motivation scores and a 25% decrease in repetitive behaviors, a core feature of ASD. Among younger children (5-9 years old), scripted and pattern recognition-based AI was associated with improvements in symbolic play, eye contact, following instructions, and turn-taking. Older youth who interacted with multimodal AI systems also showed gains: VR-based training improved cognitive flexibility by 33% and problem solving by 21%, while tangible AI with NLP improved emotion-labeling accuracy by 38% and attention by 29%. These findings suggest that multimodal AI can support social and emotional skills in older youth.

The studies in Table II used rule-based systems, deep learning systems, CNNs, and hybrid emotion-gesture recognition systems. The variety of data sources, including game logs, videos, smart glasses, and mobile apps, shows the potential of AI to fit various environments and developmental levels.

Limitations

While the results are encouraging, existing work has several limitations. Most studies used small samples and brief interventions, which limits conclusions about the long-term effects of AI-enabled educational tools. The absence of standardized outcome measures across studies also limits comparison and generalization. In addition, most studies lacked a control group or blinded design, so it is unclear whether the observed improvements can be attributed to the interventions themselves. Most studies focused specifically on children with ASD, and only a few have begun to consider broader populations, age groups, and cultural contexts. Cost, access to specialized equipment, and the need for adult supervision are practical constraints on large-scale implementation. Ethical issues, such as data privacy, safety, and children's overdependence on AI, have also yet to be discussed thoroughly.

Recommendations

Future work should prioritize larger, longitudinal, and better-controlled studies to confirm the long-term efficacy of AI interventions. Standardized measures of social, emotional, and cognitive outcomes should be developed to allow comparison between studies and pooling of evidence. Research should also include more diverse and representative groups of participants and be conducted in practical, meaningful contexts such as schools and homes, with an emphasis on scalable, low-cost technologies. Ethical issues, such as data protection, informed consent, and the child's well-being, should be addressed from the outset of the design process, and interventions should be tailored to each individual's learning and developmental needs. Finally, appropriate training of teachers and therapists is essential for meaningfully integrating AI into real-world education and therapy settings, where it should serve as an aid for the child, not a replacement for human support.

Conclusion

This study explores the use of AI robots and tools in educating children with ASD. The primary technologies covered were socially assistive robots, smart wearables such as glasses, and other digital platforms accessible on computers, tablets, and smartphones. Many of these tools are game-based, which helps motivate students and enhance their learning.

These AI robots and tools provide digital hints, such as step-by-step guidance, visual cues, and gentle prompts, to support students during activities. These hints helped reduce frustration, encouraged independent learning, and made it easier for students to understand and complete each task at their own pace. Some approaches relied on following instructions, while others were more experiential, particularly simulations in which children took part directly. Most focused on developing social skills and an understanding of emotions, which are especially relevant for children with ASD. The reviewed studies found that most of the AI tools improved emotional and behavioral outcomes.

In summary, this study presents an overview of the integration of AI with robotics in educational settings for children with ASD. AI-enabled robots can be effective tools for supporting the education of children with ASD, particularly their social and emotional development, which is key for these learners. Continued research on advanced AI models, interfaces, and teaching techniques will help these technologies make a significant impact on the lives of children with ASD.

References

- Dimitriadou, E.; Lanitis, A. A critical evaluation, challenges, and future perspectives of using artificial intelligence and emerging technologies in smart classrooms. Smart Learning Environments, Volume 10, 4-20 (2023) https://link.springer.com/article/10.1186/s40561-023-00231-3

- Drigas, A.S.; Ioannidou, R.E. Artificial intelligence in special education: A decade review. International Journal of Engineering Education, Volume 28, 1366-1372 (2012) http://imm.demokritos.gr/publications/AI_IJEE.pdf

- Chung, E.Y. Robotic Intervention Program for Enhancement of Social Engagement among Children with Autism Spectrum Disorder. Journal of Developmental and Physical Disabilities, Volume 31, 419-434 (2019) https://link.springer.com/article/10.1007/s10882-018-9651-8

- Daniels, J.; Haber, N.; Voss, C.; Schwartz, J.; Tamura, S.; Fazel, A.; Kline, A.; Washington, P.; Phillips, J.M.; Winograd, T.; et al. Feasibility Testing of a Wearable Behavioral Aid for Social Learning in Children with Autism. Applied Clinical Informatics, Volume 9, 129-140 (2018) https://www.thieme-connect.com/products/ejournals/abstract/10.1055/s-0038-1626727

- Daniels, J.; Schwartz, J.; Voss, C.; Haber, N.; Fazel, A.; Kline, A.; Washington, P.; Feinstein, C.; Winograd, T.; Wall, D.P. Exploratory study examining the at-home feasibility of a wearable tool for social-affective learning in children with autism. NPJ Digit Med, Volume 1, 2-7 (2018) https://www.nature.com/articles/s41746-018-0035-3

- Kalantarian, H.; Washington, P.; Schwartz, J.; Daniels, J.; Haber, N.; Wall, D.P. Guess what? Journal of Healthcare Informatics Research, Volume 3, 43-66 (2018) https://link.springer.com/article/10.1007/s41666-018-0034-9

- Sahin, N.T.; Abdus-Sabur, R.; Keshav, N.U.; Liu, R.; Salisbury, J.P.; Vahabzadeh, A. Case study of a digital augmented reality intervention for autism in school classrooms: Associated with improved social communication, cognition, and motivation via educator and parent assessment. Frontiers in Education, Volume 3, 3-10 (2018) https://www.frontiersin.org/journals/education/articles/10.3389/feduc.2018.00057/full

- Scassellati, B.; Boccanfuso, L.; Huang, C.; Mademtzi, M.; Qin, M.; Salomons, N.; Ventola, P.; Shic, F. Improving social skills in children with ASD using a long-term, in-home social robot. Science Robotics, Volume 3, 2-8 (2018) https://www.science.org/doi/10.1126/scirobotics.aat7544

- Vahabzadeh, A.; Keshav, N.U.; Abdus-Sabur, R.; Huey, K.; Liu, R.; Sahin, N.T. Improved Socio-Emotional and Behavioral Functioning in Students with Autism Following School-Based Smart Glasses Intervention: Multi-Stage Feasibility and Controlled Efficacy Study. Behavioral Sciences, Volume 8, 4-11 (2018) https://doi.org/10.3390/bs8100085

- Zhang, Y.; Song, W.; Tan, Z.; Zhu, H.; Wang, Y.; Lam, C.M.; Weng, Y.; Hoi, S.P.; Lu, H.; Chan, B.S.M.; et al. Could social robots facilitate children with autism spectrum disorders in learning distrust and deception? Computers in Human Behavior, Volume 98, 140-149 (2019) https://doi.org/10.1016/j.chb.2019.04.008

- Baldassarri, S.; Passerino, L.M.; Perales, F.; Riquelme, I.; Perales, F. Toward emotional interactive video games for children with autism spectrum disorder. Universal Access in the Information Society, Volume 20, 239-254 (2021) https://link.springer.com/article/10.1007/s10209-020-00725-8

- Moon, J.; Ke, F.; Sokolikj, Z. Automatic assessment of cognitive and emotional states in virtual reality-based flexibility training for four adolescents with autism. British Journal of Educational Technology, Volume 51, 1766-1784 (2020) https://berajournals.onlinelibrary.wiley.com/doi/full/10.1111/bjet.13005

- Wan, G.; Deng, F.; Jiang, Z.; Song, S.; Hu, D.; Chen, L.; Wang, H.; Li, M.; Chen, G.; Yan, T.; et al. FECTS: A Facial Emotion Cognition and Training System for Chinese Children with Autism Spectrum Disorder. Computational Intelligence and Neuroscience, Volume 2022, 8-21 (2022) https://onlinelibrary.wiley.com/doi/10.1155/2022/9213526

- Tuna, A. Inclusive Education for Young Children with Autism Spectrum Disorder: Use of Humanoid Robots and Virtual Agents to Alleviate Symptoms and Improve Skills, and A Pilot Study. Journal of Learning and Teaching in Digital Age, Volume 7, 274-282 (2022) https://dergipark.org.tr/en/pub/joltida/article/1071876

- Li, J.; Zheng, Z.; Chai, Y.; Li, X.; Wei, X. FaceMe: An agent-based social game using augmented reality for the emotional development of children with autism spectrum disorder. International Journal of Human-Computer Studies, Volume 175, 5-15 (2023) https://www.sciencedirect.com/science/article/pii/S1071581923000381