Abstract

Breast cancer remains the most common cancer among women worldwide. According to the World Health Organization1, breast cancer resulted in approximately 375,000 to 670,000 deaths worldwide from 2000 to 2022. Timely detection is crucial for improving survival. Although traditional imaging modalities such as mammography, ultrasound, MRI, and CT have advanced considerably, they still have limitations. Artificial Intelligence (AI) innovations in imaging introduce machine learning models that enhance lesion detection, segmentation, and evaluation, improving speed and reliability. This review compiles insights from prior investigations, emphasizing AI technologies such as convolutional neural networks (CNNs), vision transformers (ViTs), computer vision, and generative AI, and their roles in improving image-based diagnostics and augmenting traditional modalities. These AI solutions excel at tumor segmentation, classification, and detection, which improves detection rates, reduces misdiagnoses, and eases the analytical workload on radiologists. AI models can also achieve high accuracy and sensitivity when synthetic datasets are used for training. Studies show that techniques based on generative adversarial networks (GANs), which rely on synthetic images, achieve an average sensitivity of 90% and specificity of 85%, exceeding traditional machine learning approaches, which typically fall short of 80% on comparable datasets2. Generative AI thus addresses data scarcity by producing realistic synthetic images. Drawing on the latest studies, this article is divided into two primary areas, conventional imaging and AI-enhanced imaging, and explores data synthesis and refined approaches to cancer screening. These findings have important applications but also highlight challenges such as the need for clinical trials, data bias, data privacy, and training for clinicians and patients. Overcoming these hurdles will enhance AI’s diagnostic and screening efficacy while mitigating potential risks.

Keywords: Breast Cancer, Mammogram, MRI, CT Scan, CNN, Vision Transformers (ViT), Computer Vision, Generative AI

Introduction

Breast cancer has become the most common cancer among women, with about 13% of women in the United States developing breast cancer during their lifetime3. With advances in technology, breast cancer deaths have declined substantially in Western countries, by 44% in the United States over the past 20 years4. However, because of regional disparities such as limited healthcare access and financial constraints, breast cancer mortality has increased in less developed nations.

Early diagnosis of breast cancer can reduce mortality by up to 40% compared with later diagnosis, significantly extending patients' lives. The five-year survival rate for breast cancer detected at an early, localized stage is around 99%, compared with 32% for patients diagnosed at stage IV5. This gap highlights the importance of early diagnosis for successful treatment.

To this end, various technologies have been developed and enhanced to improve early diagnosis. The three most effective and common technologies to obtain an image of breast tissue include mammography, ultrasound, and Magnetic Resonance Imaging (MRI).

Current Imaging Technologies

Mammography is a specialized X-ray technique used for breast cancer screening. It uses low doses of X-rays to create an image that highlights specific abnormalities. Mammography is increasingly combined with CAD (Computer-Aided Detection) and tomosynthesis. It is highly sensitive to microcalcifications, which can be early signs of breast cancer, and produces fewer false positives. It is also widely available and cost-efficient. However, it is less sensitive in dense breasts and exposes patients to ionizing radiation.

Ultrasound uses high-frequency sound waves and builds an image from the echoes reflected at boundaries between tissues with different acoustic properties. It is used to characterize tissue masses (for example, distinguishing solid masses from fluid-filled cysts) and to provide real-time guidance during biopsies. Because it involves no ionizing radiation, ultrasound is safer. However, it requires highly trained operators, yields less detailed images than mammography, and is less effective for early-stage detection.
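Ultrasound scanners locate each reflecting boundary by timing its echo (pulse-echo ranging). The sketch below is a simplified illustration, not any scanner's actual software: it converts an echo's round-trip time into depth, assuming the conventional soft-tissue speed of sound of 1540 m/s. The function name `echo_depth_mm` is hypothetical.

```python
# Pulse-echo ranging: the scanner times each echo and converts the
# round-trip time into a depth, assuming a constant speed of sound.
SPEED_OF_SOUND_TISSUE_M_PER_S = 1540.0  # conventional soft-tissue value (assumed)


def echo_depth_mm(round_trip_time_s: float,
                  c: float = SPEED_OF_SOUND_TISSUE_M_PER_S) -> float:
    """Return reflector depth in millimetres from an echo's round-trip time."""
    if round_trip_time_s < 0:
        raise ValueError("time must be non-negative")
    # The pulse travels to the reflector and back, so halve the path length.
    return (c * round_trip_time_s / 2.0) * 1000.0


# An echo returning after 26 microseconds comes from about 2 cm deep.
print(round(echo_depth_mm(26e-6), 2))
```

Real scanners repeat this along many beam lines and map echo strength to pixel brightness; the fixed speed of sound is itself an approximation, since it varies slightly between fat and glandular tissue.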

MRI uses radiofrequency pulses and strong magnetic fields to generate images of breast tissue. It is used for high-risk screening, assessing breast implants, and evaluating how far the cancer has spread. MRI is highly sensitive, even in dense tissue, and provides anatomical detail that aids surgical planning without the use of ionizing radiation. However, it is costly, which limits accessibility, and it typically requires gadolinium-based contrast agents that can induce allergic reactions6.

Although these traditional imaging technologies have revolutionized early cancer detection, they still have significant limitations, particularly in interpreting complex medical images and spotting irregularities through manual visual inspection. Artificial Intelligence (AI) is transforming many sectors and can help overcome these challenges by providing more precise and accurate image analysis. The following section reviews three marketed AI-based products: WAUVE (Whole Lesion Aware Network), Lunit Insight MMG, and DeepHealth AI.

Current AI-Based Products

WAUVE is a deep learning model that uses convolutional neural networks (CNNs) to process ultrasound videos and assist in cancer detection. It processes ultrasound data from a 4D perspective, and its CNN focuses on specific regions, increasing specificity and helping avoid false positives; it reaches an area under the ROC curve (AUC) of about 0.88. WAUVE was validated on varied datasets to confirm its reliability, easing the burden on radiologists while yielding superior diagnostic outcomes7.

Lunit Insight MMG is a CNN-based technology for detecting breast cancer, specifically microcalcifications and large tumor masses8. It scrutinizes mammograms for unusual patterns, including asymmetries, that signal tissue anomalies potentially linked to tumors. By comparing current images with earlier scans, it highlights these discrepancies and rates tumor severity on a 7-point scale9. By improving mammogram accuracy, Lunit Insight MMG reduces false positives and detects microcalcifications, which are common in the early stages of cancer. The system also delivers results quickly, saving time in clinical workflows.

DeepHealth AI is a CNN-based system that provides tools to assist radiologists in reading mammograms10. It is known for its high sensitivity and its ability to detect abnormalities deep within breast tissue. It analyzes microcalcifications and architectural distortions to extract the texture and shape of a tumor, and uses these features to compute a malignancy score. It is characterized by its adaptability, its versatility, and its extensive use of multiple datasets11. DeepHealth AI also reduces false positives and uncovers subtle screening findings for greater accuracy.

Aims and Objectives

This review assesses specific AI-based models through structured evidence synthesis, analyzing deep learning algorithms applied to MRI, ultrasound, and mammography in breast cancer imaging. The aim is to explore the future of breast cancer detection by examining the role of deep learning in diagnosis. The review covers different imaging techniques for early breast cancer detection, compares AI models on tumor classification and tissue analysis, and suggests future research directions. It is divided into two parts, traditional imaging techniques and new AI techniques; for each modality, it provides an overview, models, key studies, limitations, and advancements. Overall, the review aims to provide a thorough analysis of past studies on AI in early breast cancer detection while also addressing the ethical implications of integrating AI into this field.

Methodology

A comprehensive review of breast cancer detection studies published between 1998 and 2025 was conducted. Research from 2020 to 2025 was selected for AI-based modalities, whereas publications from 1998 to 2025 were selected for traditional modalities. Key search phrases centered on “Breast Cancer” were paired with terms such as “Artificial Intelligence,” “Deep Learning,” “Machine Learning,” “Large Language Model,” “Multimodal,” “Self-Supervised Learning,” “Mammography,” “Digital Breast Tomosynthesis,” “Ultrasound,” “MRI,” “PET,” “Detection,” “Diagnosis,” “Staging,” “Subtyping,” “Recurrence,” “Treatment Response,” “Survival Prediction,” and “Legal-Ethical Challenges.” Relevant articles were selected from the following sources: ScienceDirect, PubMed, ResearchGate, CA: A Cancer Journal for Clinicians, Nature, JAMA, arXiv, and Google Scholar. Population-based cancer incidence data were obtained from reputable sources such as the American Cancer Society and the National Breast Cancer Organization, documenting the significant increase in breast cancer cases in the United States and worldwide in recent years. Studies published by IEEE on AI imaging methods such as CNNs, vision transformers, and generative AI were also used. The figures and tables were drawn from the same sources used to write the review. Websites of companies such as Lunit and DeepHealth were referenced, as their products are actively used in breast cancer detection.

Section 1. Traditional Imaging Techniques

X-Ray Mammography

Overview and Application of Mammography

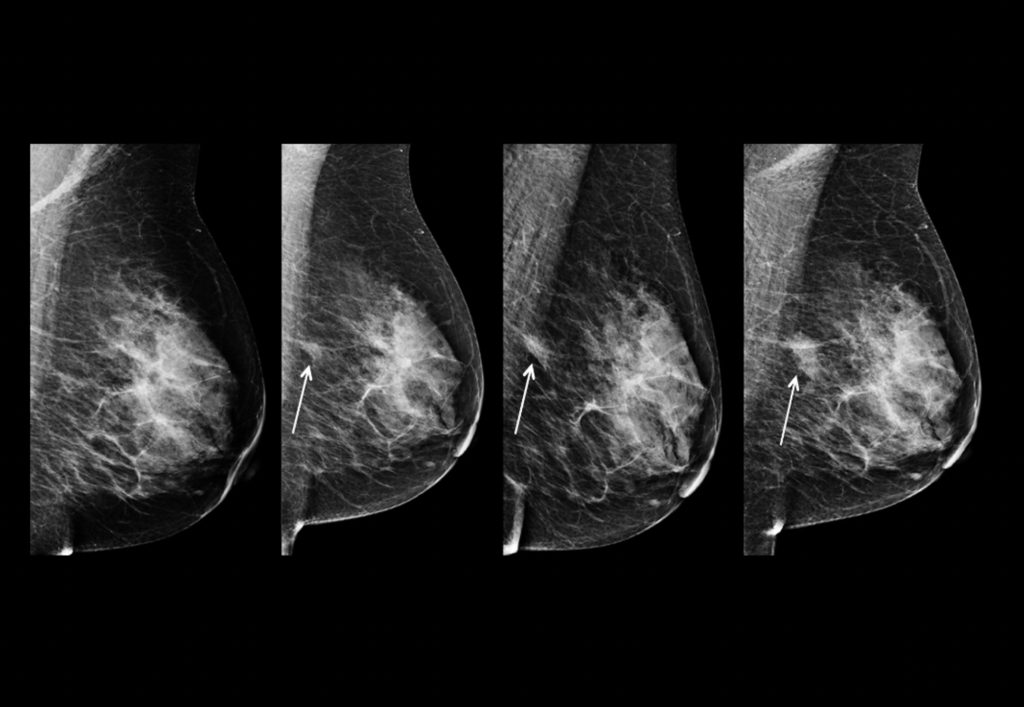

X-ray mammography is one of the most widely recognized and popular methods of breast cancer screening. It uses low-dose X-rays to detect microcalcifications (small specks of calcium that are typically benign but can be early signs of cancer), tumor masses, and architectural distortions (disruptions of the normal tissue pattern that can indicate a tumor). The resulting high-contrast images show tumors and calcifications as white, while less dense tissue such as fat appears darker, as shown in Figure 1. Radiologists assess the shape of a mass (round and oval shapes are usually benign, while jagged shapes are typically malignant) and its margins to determine whether it is benign or malignant. When a suspicious region cannot be definitively classified, physicians perform a biopsy, an invasive procedure. The machine also compresses the breast to hold it in place, which minimizes image blurring and reduces the radiation dose.

Mammography is primarily used for breast cancer screening, specifically for early detection in women between the ages of 40 and 74. It has been shown to decrease mortality by 20-40% for women over the age of 50. Mammography is also used after treatment to detect a recurrence or a new cancer, and during therapy to track changes in tumor size, which helps doctors decide on further treatment.

Mammography Models

Screen-film mammography (SFM) captures analog images using low doses of X-rays that pass through the breast tissue. It has a sensitivity of 90% and is used primarily to scan lower-density breast tissue. Screen-film mammography uses CAD (Computer-Aided Detection), which integrates electronic records of past scans for comparison with current scans to help determine whether a tumor is benign or malignant. It also enhances scan resolution. However, screen-film mammography has higher overall costs, and overlapping dense tissue can reduce image clarity.

Digital breast tomosynthesis (DBT), or 3D mammography, takes X-ray images from multiple angles to generate a 3D image. It improves scan accuracy by 15-20% and identifies small distortions much better. However, this technique requires more maintenance and delivers a higher radiation dose13.

Synthetic 2D mammography (S2D) reconstructs a 2D image from DBT data, eliminating the need for a separate conventional mammogram. This reduces radiation exposure and cost, but the synthetic image has lower resolution.
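As a rough illustration of how a single 2D view can be derived from a DBT slice stack, the sketch below collapses the volume with a maximum-intensity projection (MIP) or a simple average. This is an assumption for teaching purposes: vendor S2D algorithms are proprietary and considerably more sophisticated, and the function `synthetic_2d` and the toy volume are hypothetical.

```python
import numpy as np


def synthetic_2d(dbt_volume: np.ndarray, method: str = "mip") -> np.ndarray:
    """Collapse a DBT slice stack of shape (slices, rows, cols) into one 2D image.

    Simplified illustration only; real S2D reconstruction is proprietary.
    """
    if dbt_volume.ndim != 3:
        raise ValueError("expected a (slices, rows, cols) volume")
    if method == "mip":
        # Maximum-intensity projection keeps the brightest voxel along each
        # column, so small bright features (e.g. calcifications) stay visible.
        return dbt_volume.max(axis=0)
    if method == "mean":
        # Averaging resembles a conventional projection but dilutes small features.
        return dbt_volume.mean(axis=0)
    raise ValueError(f"unknown method: {method}")


# Toy volume: 5 slices of 4x4 background with one bright "calcification".
volume = np.full((5, 4, 4), 0.2)
volume[2, 1, 3] = 1.0
print(synthetic_2d(volume)[1, 3], synthetic_2d(volume, "mean")[1, 3])
```

In this toy volume the MIP keeps the bright voxel at its full value of 1.0, while averaging dilutes it to about 0.36, which hints at why the projection method matters for preserving small calcifications.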

Key Studies and Advancements in Mammography

Overall, mammography has decreased mortality among women aged 50 to 69 by 20-40% internationally14. Key studies highlight further improvements over time. The DMIST (Digital Mammographic Imaging Screening Trial) study from 2001-2003 showed a 15% higher detection rate with digital mammography for women under 50 and those with dense breast tissue. Tomosynthesis studies from 2010-2012 found that DBT increased cancer detection by 1-2 cases per 1,000 women screened and reduced false-positive rates by 15-30%. Contrast-enhanced mammography (CEM), still under study, shows 90-95% sensitivity, performs very well in dense tissue, and lowers overall costs15.

There have also been significant recent advances in the field. AI has been used to reduce false positives in many settings, proving as effective as conventional reading and sometimes more time- and cost-efficient. Trials such as WISDOM are evaluating screening personalized to a woman's genetics and history, aiming for safer and more accurate scans. S2D and other low-dose techniques are being used to reduce radiation exposure and its associated cancer risk. Researchers are also combining mammography with ultrasound for more accurate results16.

Limitations of Mammography

Despite these improvements, mammography still has significant shortcomings. Over ten years of screening, about 50% of women experience at least one false-positive result, leading to 539 additional mammograms, 186 ultrasounds, and 188 biopsies per 2,400 women. In women with dense breast tissue, 10-20% receive false-negative results because dense tissue can mask lesions. Screening also exposes women to ionizing radiation (about 0.29 mSv), and annual screening can raise cancer risk, especially for women screened from ages 40 to 80. Finally, 25% of women report financial anxiety due to the cost of these procedures17.
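The large cumulative false-positive figure follows from repeated screening even when each individual exam rarely errs. Assuming, purely for illustration, that each annual exam has an independent 7% false-positive probability (a hypothetical value, not taken from the cited study), the chance of at least one false positive over ten exams is 1 - (1 - p)^n:

```python
def cumulative_false_positive(per_exam_rate: float, n_exams: int) -> float:
    """Probability of at least one false positive over n independent exams."""
    if not 0.0 <= per_exam_rate <= 1.0:
        raise ValueError("rate must be in [0, 1]")
    # P(no false positive in any exam) = (1 - p) ** n; take the complement.
    return 1.0 - (1.0 - per_exam_rate) ** n_exams


# Hypothetical 7% per-exam rate over ten annual screens.
print(f"{cumulative_false_positive(0.07, 10):.0%}")
```

With these assumptions the result is about 52%, consistent with the roughly one-in-two figure above; real exams are not independent, so this is only a back-of-the-envelope check.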

Magnetic Resonance Imaging (MRI)

Overview of MRI and Application

Magnetic Resonance Imaging (MRI) is a critical tool in breast imaging, serving as a key method alongside mammography and ultrasonography for assessing breast conditions. It is primarily used to evaluate and characterize malignancies. Breast MRI demonstrates higher sensitivity in detecting breast cancer compared to mammography, ultrasonography, or their combination, particularly in asymptomatic high-risk women.

MRI, known for its high sensitivity, uses powerful magnetic fields and radio waves to excite protons in water and fat molecules. As these protons return to their equilibrium state, they emit signals that are used to produce contrasted images of breast tissue. A gadolinium-based contrast agent is often injected to enhance the visibility of areas with high vascularity or permeability, aiding in the identification of malignant tumors. The MRI machine acquires images in multiple planes, generating a 3D view that increases sensitivity, as shown in Figure 2. MRI is especially effective for imaging dense tissue, screening high-risk women (e.g., those with BRCA1/2 mutations or a strong family history of cancer), evaluating multifocal or multicentric disease, and monitoring response to chemotherapy by tracking tumor size and changes.

Figure 2: Higher rate of cancer detection with MRI. Bright regions highlight tumor tissue on the contrast-enhanced scan18.

MRI Models

MRI uses several types of sequences (models) to address patients' needs, each exploiting different tissue properties19.

T1-weighted contrast-enhanced MRI, considered the cornerstone of breast cancer detection, highlights areas of higher blood flow, which indicate tumor invasiveness, and offers high sensitivity for distinguishing malignant from benign tumors.

T2-weighted MRI reflects tissue composition, helping differentiate benign conditions such as fluid-filled cysts, which appear bright on T2-weighted images, from tumors.

Diffusion-weighted imaging (DWI) measures the diffusion of water molecules, which is restricted in areas of higher tissue density. Apparent diffusion coefficient (ADC) maps derived from DWI show strong contrast between benign and malignant tumors.
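ADC values are typically computed from two diffusion-weighted acquisitions, one without diffusion weighting (S0) and one with b-value b, under the mono-exponential model S_b = S_0 · exp(-b · ADC), so ADC = ln(S_0 / S_b) / b. The NumPy sketch below applies this voxel-wise; the b-value, the toy signals, and the 1.4 × 10^-3 mm²/s cutoff used to flag restricted diffusion are illustrative assumptions, not clinical parameters.

```python
import numpy as np


def adc_map(s0, sb, b: float) -> np.ndarray:
    """Apparent diffusion coefficient (mm^2/s) from two DWI acquisitions.

    Assumes the mono-exponential model S_b = S_0 * exp(-b * ADC), b in s/mm^2.
    """
    s0 = np.asarray(s0, dtype=float)
    sb = np.asarray(sb, dtype=float)
    eps = 1e-12  # guards against log(0) in background voxels
    return np.log((s0 + eps) / (sb + eps)) / b


def flag_restricted_diffusion(adc: np.ndarray, cutoff: float = 1.4e-3) -> np.ndarray:
    """True where ADC falls below the (illustrative) cutoff."""
    return adc < cutoff


b = 800.0  # s/mm^2, hypothetical b-value
s0 = np.array([1000.0, 1000.0])
# Voxel 0 simulates freer diffusion (ADC 2.0e-3); voxel 1 restricted (ADC 1.0e-3).
sb = s0 * np.exp(-b * np.array([2.0e-3, 1.0e-3]))
adc = adc_map(s0, sb, b)
print(adc, flag_restricted_diffusion(adc))
```

Low ADC (restricted diffusion) is what makes densely cellular tumors stand out on ADC maps; clinical thresholds vary by protocol and study.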

Magnetic resonance spectroscopy (MR spectroscopy) analyzes the chemical composition of tissue, for example by detecting choline peaks, to help determine whether tissue is malignant.

HighSpeed MRI uses strong magnetic fields and very high resolution to improve the detection of smaller lesions. However, this technology is new and has not been studied extensively, leaving questions about its efficacy19.

Key Studies and Advancements in MRI

There have been multiple key MRI studies. A 2019 RSNA study reported sensitivity above 90% and highlighted MRI's role in guiding neoadjuvant therapy, with an average sensitivity of 92% and specificity of 90%. In the neoadjuvant chemotherapy setting, combining MRI with percutaneous biopsy increased the reported rate from 76.7% to 94.4%, improving the assessment of pathologic complete response (pCR) 28-fold. High-risk screening of BRCA1/2 carriers showed a cancer detection rate of 26.2 per 1,000 women and a rate of 5.4 per 1,000 for high-risk cancers. In women with prior chest irradiation, screening MRI showed 94-100% sensitivity and a 4.1% detection rate when combined with mammography. DWI for lesion discrimination showed 90% specificity, with an apparent diffusion coefficient (ADC) threshold of about 1.4 × 10^-3 mm²/s for rare cancers19.

Advances such as multiparametric MRI, diffusion-weighted imaging, and MR spectroscopy are improving MRI scans. Multiparametric MRI combines multiple sequences, such as DWI and T1-weighted imaging, for lesion assessment, which improves specificity. DWI provides strong image contrast without gadolinium, making it safer and easier for patients. MR spectroscopy detects choline peaks, which supports biochemical research and helps identify malignant tumors20.

Limitations of MRI

Although MRI is a mainstay of breast cancer imaging, it has notable limitations. First, the machine is costly compared with the alternatives, resulting in low availability in less developed areas. Because MRI is highly sensitive but less specific, it can produce false positives, leading to unnecessary biopsies that can cause tissue damage and bacterial infections. MRI also requires highly specialized training that is often unavailable in lower-income areas, which can make results less accurate there. Furthermore, MRI is generally unsuitable for patients with certain pacemakers or metal implants, and gadolinium contrast cannot be used in patients with gadolinium allergies. Gadolinium injection can also be painful and uncomfortable for patients.

Computed Tomography (CT) Scans

Overview of CT Scans

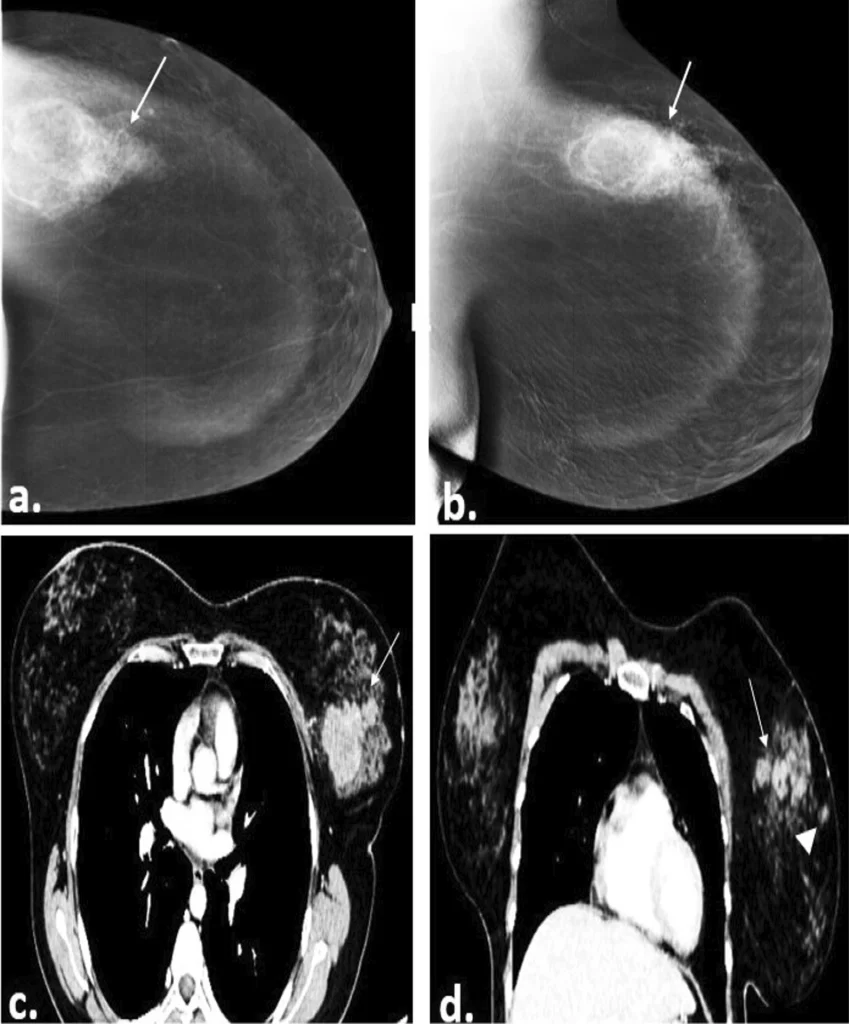

Computed tomography (CT) is a non-invasive, X-ray-based method for diagnosing breast cancer. Unlike standard X-rays, CT produces cross-sectional images from many angles, which are combined into a comprehensive view of the breast tissue from all sides. The patient lies down while X-rays pass through the body, and detectors measure how much the X-ray intensity is attenuated along each path through the tissue. A computer then reconstructs these measurements into 3D images, enabling radiologists to identify abnormalities, such as white spots indicating tumor activity (Figure 3). When distinguishing benign from malignant tumors is challenging, contrast-enhanced CT with iodinated contrast agents may be used to improve clarity.

Figure 3: Image of a 40-year-old female patient’s right breast with CESM (contrast-enhanced spectral mammography) (a, b) and contrast-enhanced chest CT (c, d) showing multiple spiculated, heterogeneously enhancing masses (straight arrows). The histopathology result was invasive ductal carcinoma, grade II21.
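The detector measurements in a CT scan can be related to tissue properties through the Beer-Lambert law, I = I0 · exp(-Σ μ·Δx), where μ is the linear attenuation coefficient along a ray. Reconstruction algorithms work with the line integral ln(I0 / I), and CT images are usually displayed in Hounsfield units (HU), which scale μ so that water is 0 HU and air is -1000 HU. The sketch below assumes an approximate water attenuation of 0.19 cm⁻¹ (roughly appropriate near 70 keV) and uses hypothetical voxel values; it does not perform a full reconstruction.

```python
import numpy as np

MU_WATER = 0.19  # 1/cm, approximate value near 70 keV (assumption)
MU_AIR = 0.0


def transmitted_intensity(i0: float, mu_along_ray: np.ndarray, step_cm: float) -> float:
    """Beer-Lambert law: I = I0 * exp(-sum(mu * dx)) along one ray."""
    return i0 * np.exp(-np.sum(mu_along_ray) * step_cm)


def line_integral(i0: float, i: float) -> float:
    """Recover the attenuation line integral that reconstruction algorithms use."""
    return np.log(i0 / i)


def hounsfield(mu: np.ndarray) -> np.ndarray:
    """Convert attenuation coefficients to Hounsfield units (water = 0, air = -1000)."""
    return 1000.0 * (mu - MU_WATER) / (MU_WATER - MU_AIR)


ray = np.array([0.19, 0.19, 0.22, 0.19])  # hypothetical voxels; one denser voxel
i = transmitted_intensity(1.0, ray, step_cm=0.5)
print(line_integral(1.0, i), hounsfield(np.array([0.0, 0.19, 0.22])))
```

The denser voxel maps to a positive HU value, which is why denser lesions and contrast-enhanced tissue appear brighter on CT.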

CT Scan Models

CT uses several different models, each optimizing image acquisition and processing to retrieve the best data.

Chest CT is the most commonly used CT technology in breast cancer diagnosis and is also used for lung and thoracic cancers. It identifies tumor masses, and larger tumors are then recommended for biopsy. Chest CT works best for larger tumors, about 34 mm in length, and for denser tissue22.

Cone-beam breast CT (CBBCT) is a more specialized alternative to chest CT, with much higher sensitivity but a lower area under the curve (AUC)23.

Positron emission tomography (PET) provides functional as well as anatomical information about tissue, particularly when combined with CT (PET/CT). By assessing bone and blood flow, it helps determine whether cancer is spreading, and it is highly effective at detecting bone metastases24.

Key Studies and Advancements in CT Scan

Multiple studies have evaluated CT, especially newer models. A 2022 study of chest CT reported 99.3% specificity, 84.21% sensitivity, and over 98% accuracy, demonstrating the effectiveness of newer systems. FDG-PET scans have 97.6% accuracy in detecting bone metastases, helping detect later stages of cancer efficiently and guide subsequent treatment such as chemotherapy22. In 2006, Boone's study designed a dedicated breast CT system that could produce 300 images for analysis in less than 17 seconds25. The images show the tissue clearly, especially the anatomy of the breast, along with bone structure and blood flow in detail.

Significant research aims to overcome CT's limitations through new technologies. Clinics have already begun using lower radiation doses, especially for chest CT. PET technology has also advanced: hybrid systems combining MRI and PET use less radiation while offering higher sensitivity, which is particularly evident in detecting bone metastases.

Limitations of CT Scans

Although CT is an efficient breast cancer diagnostic technology, it has notable limitations. Compared with imaging techniques such as MRI, it is less sensitive, and it can overlook microcalcifications, which are early indicators of cancer. Research also indicates that CT has reduced sensitivity when imaging dense tissue26. Additionally, techniques such as FDG-PET/CT use significant amounts of ionizing radiation, which can damage DNA and increase cancer risk, causing patients stress. Contrast agents used to make tissue abnormalities more visible may also cause physical discomfort.

Table 1. The performance metrics of traditional imaging modalities for breast cancer detection27.

| Modality/Combination | Overall Sensitivity (n=475 for MG/US; n=282 for MRI) | Sensitivity (Tumor ≤1 cm) (n=221/134) | Sensitivity (Tumor 1–2 cm) (n=254/148) | Key Notes/Comparisons |
|---|---|---|---|---|
| Mammography (MG) | 72.4% | 65.2% | 78.7% | Lower in dense breasts (65.8% vs. 84.5% low density, P<.001); better for calcifications but misses small tumors. |
| Ultrasound (US) | 88.6% | 83.7% | 92.9% | Higher than MG (P<.001); excels in dense breasts but operator-dependent and weaker for DCIS (78.3%). |
| MG + US | 93.1% | 91.0% | 94.9% | Superior to single modalities (P<.001); standard for preoperative assessment. |
| MRI (standalone) | 94.7% | 91.0% | 97.3% | Highest sensitivity; best for triple-negative subtypes and positive nodes (100%). |
| MG + US + MRI | 98.2% | 98.5% | 98.0% | Incremental gain over MG + US (P<.001); no increase in mastectomy rate (49.3% vs. 48.2%, P=.177). |

Section 2. Integration of AI with Imaging Techniques

Convolutional Neural Networks (CNNs)

Overview and Application of CNNs

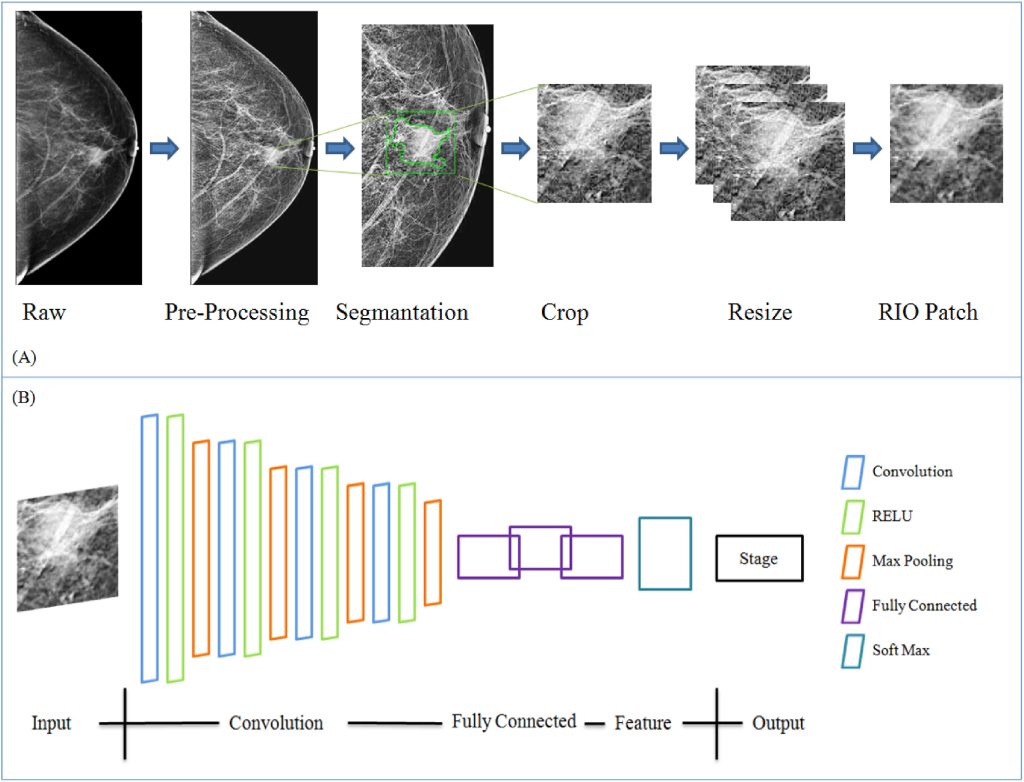

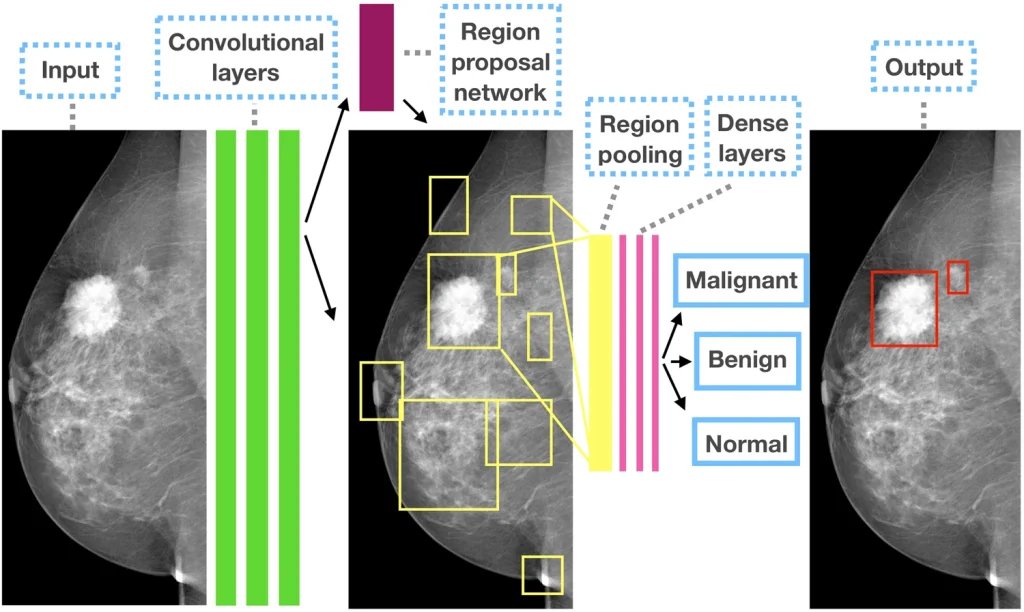

Convolutional neural networks (CNNs) are AI models designed to process images and other visual data, and they are effective in medical imaging tasks such as breast cancer detection. Unlike traditional fully connected networks, CNNs preserve the 2D structure of images instead of flattening them into vectors at the input. They detect image features hierarchically, from basic edges to complex tumor shapes, using learnable filters (e.g., 3×3 or 5×5 kernels) that slide across the image to create feature maps, as shown in Figure 4. These maps highlight distinctive patterns such as microcalcifications, tissue irregularities, and texture differences. CNNs apply non-linear activation functions to capture complex patterns, and they adapt by learning the filter weights during training. Pooling layers downsample feature maps (e.g., reducing a 5×5 map to 2×2), which lowers the computational load and lets the network process larger images efficiently. Finally, fully connected layers take the flattened feature maps and classify tissue as benign or malignant, allowing CNNs to identify tumor malignancy, shapes, and microcalcifications for early cancer detection28.

Figure 4: An illustration of how Convolutional Neural Networks use kernels to draw a specific output29.
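The NumPy sketch below walks through the CNN stages described above on a toy 7×7 input: a 3×3 convolution produces a 5×5 feature map, ReLU adds non-linearity, 2×2 max pooling reduces it to 2×2, and the flattened features feed a dense layer whose softmax output gives two class probabilities. The kernel is hand-set and the dense weights are random and untrained, so the probabilities are meaningless; real models learn all of these weights, typically in frameworks such as PyTorch or TensorFlow.

```python
import numpy as np


def conv2d(image: np.ndarray, kernel: np.ndarray) -> np.ndarray:
    """'Valid' 2D cross-correlation (what deep-learning libraries call convolution)."""
    kh, kw = kernel.shape
    oh, ow = image.shape[0] - kh + 1, image.shape[1] - kw + 1
    out = np.empty((oh, ow))
    for r in range(oh):
        for c in range(ow):
            # Each output value is the weighted sum of one kernel-sized window.
            out[r, c] = np.sum(image[r:r + kh, c:c + kw] * kernel)
    return out


def relu(x: np.ndarray) -> np.ndarray:
    return np.maximum(x, 0.0)


def max_pool(x: np.ndarray, size: int = 2) -> np.ndarray:
    """Non-overlapping max pooling; rows/cols that don't fit a full window are dropped."""
    h, w = x.shape[0] // size, x.shape[1] // size
    trimmed = x[:h * size, :w * size]
    return trimmed.reshape(h, size, w, size).max(axis=(1, 3))


def softmax(z: np.ndarray) -> np.ndarray:
    e = np.exp(z - z.max())  # subtract the max for numerical stability
    return e / e.sum()


rng = np.random.default_rng(0)
image = rng.random((7, 7))                             # toy single-channel patch
edge_kernel = np.array([[1, 0, -1]] * 3, dtype=float)  # hand-set vertical-edge filter
features = max_pool(relu(conv2d(image, edge_kernel)))  # 7x7 -> 5x5 -> 2x2
flat = features.ravel()                                # flatten for the dense layer
weights = rng.normal(size=(2, flat.size))              # random, untrained dense weights
probs = softmax(weights @ flat)                        # illustrative [benign, malignant]
print(features.shape, probs.round(3))
```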

CNN pipelines improve input quality through preprocessing, data augmentation, and normalization, and CNNs are trained on labeled datasets to reduce classification errors. To handle class imbalance, training uses techniques such as weighted loss functions and oversampling of malignant cases. CNN performance is evaluated with the AUC (area under the ROC curve), which summarizes the trade-off between sensitivity and specificity, and the F1 score (the harmonic mean of precision and recall). The models' output scores help radiologists identify urgent cases for prompt treatment. Models such as R-CNN help localize tumors. CNNs have also improved ultrasound accuracy by 7% and reduced diagnostic errors30. Figure 5 shows a pipeline for processing medical images, specifically mammograms, that focuses on regions of interest for tumor examination.

Figure 5: The proposed CNN model: (A) steps for preparing the data; (B) the structure of the proposed models. A softmax function produces the final class probabilities used for classification31.
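To make the evaluation metrics concrete, the sketch below computes AUC in its rank form (the probability that a randomly chosen malignant case receives a higher score than a randomly chosen benign case, equivalent to the Mann-Whitney U statistic) and the F1 score at a fixed 0.5 threshold. The six labels and scores are hypothetical; in practice, libraries such as scikit-learn provide `roc_auc_score` and `f1_score`.

```python
from typing import Sequence


def auc_rank(labels: Sequence[int], scores: Sequence[float]) -> float:
    """ROC AUC as P(random malignant score > random benign score); ties count half."""
    pos = [s for s, y in zip(scores, labels) if y == 1]
    neg = [s for s, y in zip(scores, labels) if y == 0]
    if not pos or not neg:
        raise ValueError("need at least one positive and one negative case")
    wins = sum(1.0 if p > n else 0.5 if p == n else 0.0 for p in pos for n in neg)
    return wins / (len(pos) * len(neg))


def f1_at_threshold(labels: Sequence[int], scores: Sequence[float],
                    threshold: float = 0.5) -> float:
    """F1 = harmonic mean of precision and recall (sensitivity) at one cutoff."""
    preds = [1 if s >= threshold else 0 for s in scores]
    tp = sum(1 for p, y in zip(preds, labels) if p == 1 and y == 1)
    fp = sum(1 for p, y in zip(preds, labels) if p == 1 and y == 0)
    fn = sum(1 for p, y in zip(preds, labels) if p == 0 and y == 1)
    if tp == 0:
        return 0.0
    precision, recall = tp / (tp + fp), tp / (tp + fn)
    return 2 * precision * recall / (precision + recall)


# Hypothetical model outputs for six cases (1 = malignant).
y = [1, 1, 1, 0, 0, 0]
s = [0.9, 0.8, 0.4, 0.6, 0.2, 0.1]
print(auc_rank(y, s), round(f1_at_threshold(y, s), 3))
```

Here AUC is 8/9 (about 0.89) because one malignant case (score 0.4) ranks below one benign case (score 0.6), and F1 is 2/3 because precision and recall are both 2/3 at this cutoff. AUC is threshold-free, whereas F1 depends on the chosen operating point.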

Different Models of CNN and Key Studies

Several CNN architectures are used to enhance early breast cancer detection, including classic models such as AlexNet and VGGNet32. AlexNet has eight layers, with large 11×11 filters in its first convolutional layer, and achieved 91.8% accuracy in early studies. VGGNet stacks small 3×3 filters over more layers for in-depth analysis and achieved 91.4% accuracy. Multi-scale models such as Inception, which analyze histopathology (microscopic examination of biological tissue) images, achieved 85% accuracy. Encoder-decoder segmentation models make it easier for radiologists to process and analyze scans by providing more detailed information about the image. EfficientNet improves accuracy while remaining computationally efficient, achieving 92.7% accuracy in mammogram analysis32.
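The design choice behind VGGNet's small filters can be checked with simple arithmetic: three stacked 3×3 convolutions (stride 1) see the same 7×7 region as one 7×7 convolution, but use fewer weights (27C² versus 49C² for C input and output channels) and add two extra non-linearities. The helper functions below are hypothetical and ignore biases and padding.

```python
def receptive_field(n_layers: int, kernel: int, stride: int = 1) -> int:
    """Receptive field of n stacked conv layers with equal kernel and stride (no dilation)."""
    rf, jump = 1, 1
    for _ in range(n_layers):
        rf += (kernel - 1) * jump  # each layer widens the view by (k - 1) input steps
        jump *= stride
    return rf


def conv_weights(kernel: int, channels: int, n_layers: int = 1) -> int:
    """Weight count (biases ignored) for n layers mapping C channels to C channels."""
    return n_layers * kernel * kernel * channels * channels


C = 64  # hypothetical channel count
print(receptive_field(3, 3), receptive_field(1, 7))        # same 7x7 field of view
print(conv_weights(3, C, n_layers=3), conv_weights(7, C))  # 27*C^2 vs 49*C^2
```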

A 2025 study by Naga et al developed a CNN model with transfer learning to automate IDC (Invasive Ductile Carcinoma) detection using a Kaggle dataset of 277,524 images (71.6% IDC-positive). Images were preprocessed, augmented, and split 80/20. A custom 4-block CNN and VGG19 transfer learning were tested. Custom CNN resulted in 89% accuracy and VGG19 90% accuracy, with precision of 0.93 (benign)/0.82 (IDC)33. The VGG19-based model offers reliable, high-accuracy IDC detection as a pathologist’s aid, with open-source access boosting equity in underserved areas.

Vision Transformers (ViTs)

Overview and Application of ViTs

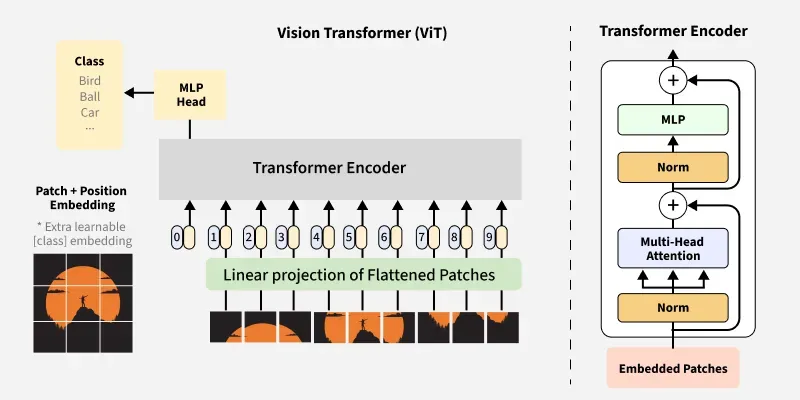

ViTs or Vision Transformers are transformer neural networks adapted from natural language processors (NLPs) used for computer vision tasks. Unlike CNNs, which use local extraction through single kernels that scan entire images, ViTs divide images into fixed-size areas such as 16×16 kernels for classification and processing. These areas are embedded with positional filters to adjust kernel size during scanning as illustrated in Figure 6. The transformer encoder, a key component, employs self-attention mechanisms to capture contextual relationships in the image and global dependencies. This makes ViTs effective at detecting subtle patterns in images, such as those on histopathology slides, that CNNs might miss. ViTs are highly adaptable and scalable, suitable for various medical fields. Their output is processed through Multi-Layer Perceptrons (MLPs) to aid image classification, assisting radiologists and doctors in image processing and readability.

Table 2. The performance metrics of models of CNN for breast cancer detection34

| Model | AUC | Specificity | Accuracy | F1-Score |

|---|---|---|---|---|

| AlexNet | 0.988 | 97.3% | 91.8% | 85.9% |

| VGG | 0.987 | 97.2% | 91.4% | 84.7% |

| InceptionV3 | 0.958 | 94.0% | 85.0% | 69.2% |

| EfficientNet | 0.990 | 997.7% | 92.7% | 89.9% |

A 2025 study by Naga et al developed a CNN model with transfer learning to automate IDC (Invasive Ductile Carcinoma) detection using a Kaggle dataset of 277,524 images (71.6% IDC-positive). Images were preprocessed, augmented, and split 80/20. A custom 4-block CNN and VGG19 transfer learning were tested. Custom CNN resulted in 89% accuracy and VGG19 90% accuracy, with precision of 0.93 (benign)/0.82 (IDC)33. The VGG19-based model offers reliable, high-accuracy IDC detection as a pathologist’s aid, with open-source access boosting equity in underserved areas.

Vision Transformers (ViTs)

Overview and Application of ViTs

ViTs or Vision Transformers are transformer neural networks adapted from natural language processors (NLPs) used for computer vision tasks. Unlike CNNs, which use local extraction through single kernels that scan entire images, ViTs divide images into fixed-size areas such as 16×16 kernels for classification and processing. These areas are embedded with positional filters to adjust kernel size during scanning as illustrated in Figure 6. The transformer encoder, a key component, employs self-attention mechanisms to capture contextual relationships in the image and global dependencies. This makes ViTs effective at detecting subtle patterns in images, such as those on histopathology slides, that CNNs might miss. ViTs are highly adaptable and scalable, suitable for various medical fields. Their output is processed through Multi-Layer Perceptrons (MLPs) to aid image classification, assisting radiologists and doctors in image processing and readability.

Figure 6: An overview of the architecture and operation of a Vision Transformer (ViT)35.
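The ViT patch-and-attend idea can be sketched in a few lines of NumPy. The code below splits a toy 64×64 image into sixteen 16×16 patches, projects them to embeddings, adds positional embeddings, and applies one head of scaled dot-product self-attention so that every patch token can draw on every other. All weights are random and the image is synthetic; a real ViT also uses a class token, multi-head attention, layer normalization, stacked encoder blocks, and an MLP head, all of which are omitted here.

```python
import numpy as np


def patchify(image: np.ndarray, patch: int) -> np.ndarray:
    """Split an (H, W) image into non-overlapping patch x patch tiles, each flattened."""
    h, w = image.shape
    if h % patch or w % patch:
        raise ValueError("image size must be divisible by the patch size")
    tiles = image.reshape(h // patch, patch, w // patch, patch).swapaxes(1, 2)
    return tiles.reshape(-1, patch * patch)  # (num_patches, patch * patch)


def self_attention(x: np.ndarray, wq: np.ndarray, wk: np.ndarray, wv: np.ndarray) -> np.ndarray:
    """Single-head scaled dot-product attention over all patch tokens."""
    q, k, v = x @ wq, x @ wk, x @ wv
    scores = q @ k.T / np.sqrt(k.shape[-1])
    scores -= scores.max(axis=-1, keepdims=True)  # numerical stability
    attn = np.exp(scores)
    attn /= attn.sum(axis=-1, keepdims=True)      # each row sums to 1
    return attn @ v  # every token mixes information from every other token


rng = np.random.default_rng(0)
d = 32                                    # hypothetical embedding width
image = rng.random((64, 64))              # toy single-channel image
patches = patchify(image, 16)             # 4 x 4 grid -> 16 tokens of 256 values
embed = rng.normal(size=(256, d)) * 0.05  # linear patch projection (random, untrained)
pos = rng.normal(size=(16, d)) * 0.02     # positional embeddings (learned in practice)
tokens = patches @ embed + pos
wq, wk, wv = (rng.normal(size=(d, d)) * 0.1 for _ in range(3))
out = self_attention(tokens, wq, wk, wv)
print(patches.shape, out.shape)           # (16, 256) (16, 32)
```

Because attention compares every token with every other, cost grows with the square of the number of patches, which is one reason patch size matters for large mammograms and whole-slide images.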

Vision Transformers are widely applied in medical imaging for tasks such as image classification, segmentation, and histopathological analysis, including through variants such as Low-to-High Multi-Level Vision Transformers (LMLTs). They differentiate benign from malignant tumors by dividing images into patches and identifying subtle abnormalities such as irregular tissue patterns, microcalcifications, and varying tissue densities.

Techniques such as Wiener filtering and total variation filtering improve image quality by reducing noise before analysis. ViTs are used with imaging modalities including mammography, ultrasound, DCE-MRI (dynamic contrast-enhanced MRI), and histopathology. They support lesion classification, digital mammogram analysis, abnormal tissue pattern detection, and tumor classification (benign, malignant, or non-tumor). ViTs also excel at image segmentation, helping delineate tumor boundaries. In addition, they aid treatment planning and monitoring of disease progression through semantic segmentation, which classifies tissue malignancy at the pixel level to guide future treatment.
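As an illustration of the denoising step, SciPy's `scipy.signal.wiener` applies a local adaptive Wiener filter. The sketch below adds Gaussian noise to a synthetic square "lesion" and compares the mean squared error before and after filtering; the image, noise level, and 5×5 window are arbitrary assumptions, and this is not the preprocessing pipeline of any study cited here.

```python
import numpy as np
from scipy.signal import wiener

rng = np.random.default_rng(0)
clean = np.zeros((64, 64))
clean[24:40, 24:40] = 1.0                                # toy bright "lesion" on a dark background
noisy = clean + rng.normal(scale=0.3, size=clean.shape)  # additive Gaussian noise

# Local adaptive Wiener filter with a 5x5 window; the noise power is
# estimated from the image because `noise` is left unspecified.
denoised = wiener(noisy, mysize=5)


def mse(a: np.ndarray, b: np.ndarray) -> float:
    """Mean squared error between two images."""
    return float(np.mean((a - b) ** 2))


print(f"MSE noisy: {mse(noisy, clean):.4f}, after Wiener: {mse(denoised, clean):.4f}")
```

The filter smooths flat regions strongly while preserving more detail where local variance is high, such as the lesion edges; larger windows suppress more noise at the cost of blurring fine structures like microcalcifications.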

Vision Transformer Models and Key Studies

Models such as U-KAN use a hybrid design that combines global context analysis with feature extraction to improve tumor segmentation accuracy across imaging modalities such as mammography, ultrasound, and MRI. U-KAN segments microcalcifications in mammograms, identifies lesion boundaries in ultrasound images, and performs lesion segmentation in MRI scans. In histopathological analysis, it categorizes tissue samples into in situ carcinoma (non-invasive), invasive carcinoma, and normal (non-tumor) regions36.

ViT-based pipelines can integrate models such as Virchow2 (for detailed tumor analysis) and Nomic (a vision transformer for contextual and spatial analysis). The process involves dividing histopathology slides into smaller tiles, analyzing their spatial relationships, and classifying the results. The ViT then draws on global context to refine these results, helping doctors create personalized treatment plans. Applications include real-time ultrasound screening, remote image analysis via telemedicine, and the integration of health records with real-time imaging for accurate, high-quality data37.

Table 3: Performance metrics of Vision Transformer models38,39.

| Model | AUC | Specificity | Accuracy | Sensitivity |
|---|---|---|---|---|
| U-KAN | 0.95 | 92.0% | 94.5% | 93.8% |
| ViT-Virchow2-Nomic Hybrid | 0.97 | 96.5% | 96.8% | 96.2% |
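The four columns in Table 3 are standard binary-classification metrics. The sketch below shows how they are typically computed from a model's outputs. It uses toy labels and scores, not data from the cited studies, and computes AUC with the rank (Mann-Whitney) formulation: the probability that a randomly chosen malignant case scores higher than a randomly chosen benign one.

```python
import numpy as np


def confusion_counts(y_true, y_pred):
    """Counts of true/false positives and negatives (1 = malignant, 0 = benign/normal)."""
    y_true, y_pred = np.asarray(y_true), np.asarray(y_pred)
    tp = int(np.sum((y_true == 1) & (y_pred == 1)))
    tn = int(np.sum((y_true == 0) & (y_pred == 0)))
    fp = int(np.sum((y_true == 0) & (y_pred == 1)))
    fn = int(np.sum((y_true == 1) & (y_pred == 0)))
    return tp, tn, fp, fn


def roc_auc(y_true, scores):
    """AUC as P(random positive outranks random negative); ties count as one half."""
    y_true, scores = np.asarray(y_true), np.asarray(scores, dtype=float)
    pos, neg = scores[y_true == 1], scores[y_true == 0]
    greater = (pos[:, None] > neg[None, :]).sum()
    ties = (pos[:, None] == neg[None, :]).sum()
    return float((greater + 0.5 * ties) / (len(pos) * len(neg)))


def screening_metrics(y_true, scores, threshold=0.5):
    y_pred = (np.asarray(scores) >= threshold).astype(int)
    tp, tn, fp, fn = confusion_counts(y_true, y_pred)
    return {
        "sensitivity": tp / (tp + fn),  # share of malignant cases that are detected
        "specificity": tn / (tn + fp),  # share of benign/normal cases correctly cleared
        "accuracy": (tp + tn) / (tp + tn + fp + fn),
        "auc": roc_auc(y_true, scores),  # threshold-free ranking quality
    }


print(screening_metrics([1, 1, 1, 0, 0, 0], [0.9, 0.8, 0.4, 0.3, 0.6, 0.1]))
```

Sensitivity, specificity, and accuracy all depend on the chosen decision threshold, whereas AUC summarizes performance across all thresholds. This is why studies often report AUC alongside the other three.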

A 2025 study by Mouhamed Laid Abimouloud et al. found that TokenMixer, a hybrid CNN-ViT model, improves breast cancer detection in histopathology slides. It uses convolutional operations for streamlined patch selection and transformer layers for advanced feature analysis. The model was evaluated on the BreakHis dataset for both binary (benign/malignant) and multi-class (four subtypes) classification at magnifications from 40× to 400×. It achieved 97.02% accuracy on the binary task and 93.29% on the multi-class task, with greater efficiency than standard vision transformers40. By lowering computational demands while improving precision, it shows strong potential for rapid clinical application.

Computer Vision

Overview and Application of Computer Vision in Breast Cancer Detection

Computer vision analyzes and interprets visual data such as images or videos, using image processing, pattern recognition, and machine learning to extract meaningful information. In medicine, it helps analyze complex datasets, classify abnormalities, and support diagnoses through a series of stages: image acquisition, preprocessing, feature extraction, model training, and post-processing. Preprocessing involves normalizing pixel intensities, applying noise-reducing filters, using data augmentation to diversify datasets, and enhancing contrast to improve visibility. For scans such as mammography or MRI, feature extraction then identifies patterns such as textures, shapes, boundaries, and intensity gradients to detect abnormalities. Post-processing generates heatmaps that highlight potential tumor areas and refines segmentations using thresholding techniques.
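The preprocessing and post-processing stages above can be sketched as small NumPy functions. This is an illustrative sketch, not a clinical pipeline: the percentile limits, the choice of augmentations, and the 0.5 threshold are assumptions. The `heatmap` argument stands in for the per-pixel suspicion scores that a trained model would produce.

```python
import numpy as np


def normalize(img: np.ndarray) -> np.ndarray:
    """Preprocessing: rescale pixel intensities to [0, 1] (min-max normalization)."""
    img = img.astype(float)
    lo, hi = img.min(), img.max()
    return (img - lo) / (hi - lo) if hi > lo else np.zeros_like(img)


def stretch_contrast(img: np.ndarray, low_pct: float = 2, high_pct: float = 98) -> np.ndarray:
    """Preprocessing: percentile-based contrast stretching so faint structures stand out."""
    lo, hi = np.percentile(img, [low_pct, high_pct])
    return np.clip((img - lo) / max(hi - lo, 1e-12), 0.0, 1.0)


def augment(img: np.ndarray) -> list:
    """Data augmentation: the original plus a horizontal flip and a 90-degree rotation."""
    return [img, np.fliplr(img), np.rot90(img)]


def threshold_heatmap(heatmap: np.ndarray, thr: float = 0.5) -> np.ndarray:
    """Post-processing: binary mask of pixels whose suspicion score reaches thr."""
    return heatmap >= thr
```

Keeping each stage as a separate function mirrors the staged workflow described in the text: each stage can be tuned or swapped (for example, replacing the flips with elastic deformations) without touching the others.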

Computer vision plays a crucial role in early breast cancer detection alongside other imaging modalities. It identifies abnormalities such as microcalcifications, spiculated masses, and tissue density irregularities with high sensitivity and specificity, reducing false positives. These systems assist radiologists and can act as a second reader, flagging additional cancer indicators. Computer vision enhances clinical confidence through techniques such as saliency mapping, which highlights the key image patterns behind a prediction, and SHAP (SHapley Additive exPlanations), which explains predictions through feature importance. It also supports personalized treatment plans by combining imaging data with clinical factors such as age and genetic markers.
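SHAP builds on Shapley values from cooperative game theory. The brute-force sketch below computes exact Shapley values for a model with only a few features. Features absent from a coalition are replaced by a baseline value, which is an assumption; other choices, such as averaging over a background dataset, are also common. The toy models in the usage lines are hypothetical. Practical tools such as the `shap` package use faster approximations, because the exact sum grows exponentially with the number of features.

```python
import itertools
import math

import numpy as np


def shapley_values(predict, x, baseline):
    """Exact Shapley values: each feature's average marginal contribution over all coalitions.

    predict: function mapping a 1-D feature vector to a scalar score (e.g. malignancy risk)
    x: the case being explained; baseline: reference values used for "absent" features
    """
    x, baseline = np.asarray(x, dtype=float), np.asarray(baseline, dtype=float)
    n = len(x)
    phi = np.zeros(n)
    for i in range(n):
        others = [j for j in range(n) if j != i]
        for r in range(len(others) + 1):
            weight = math.factorial(r) * math.factorial(n - r - 1) / math.factorial(n)
            for subset in itertools.combinations(others, r):
                idx_without = np.array(subset, dtype=int)
                idx_with = np.array(subset + (i,), dtype=int)
                without_i = baseline.copy()
                without_i[idx_without] = x[idx_without]
                with_i = baseline.copy()
                with_i[idx_with] = x[idx_with]
                phi[i] += weight * (predict(with_i) - predict(without_i))
    return phi


# Hypothetical linear "risk score" over three features (e.g. radius, texture, concavity)
print(shapley_values(lambda v: 2 * v[0] + 3 * v[1] - v[2], [1, 1, 1], [0, 0, 0]))
```

The values always sum to the difference between the prediction for the case and the prediction for the baseline. This additivity is what lets SHAP attribute a single prediction to individual features.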

Figure 7: The outline of the Faster R-CNN model for CAD in mammography41.

Computer Vision Models and Key Studies

Computer vision uses several types of machine learning and deep learning models to improve scan accuracy, including Logistic Regression, Support Vector Machines, Random Forests, U-Net, and Graph Neural Networks42.

Logistic Regression (LR) is a linear model for binary classification, used here to predict whether a tumor is benign or malignant. It passes a weighted sum of features through the sigmoid (logistic) function, an S-shaped curve that maps any value to a probability between 0 and 1, and fits its weights by maximum likelihood estimation. The model is commonly trained on the public WDBC (Wisconsin Diagnostic Breast Cancer) dataset, using features such as radius, concavity, and texture, which are scaled so that training converges reliably. Recursive Feature Elimination (RFE) can be used to select the most informative features by repeatedly removing the least important ones. LR works best when the classes are approximately linearly separable.
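The logistic-regression workflow described above can be sketched in a few lines of NumPy: standardize the features, then fit the weights by gradient descent on the mean negative log-likelihood, which is equivalent to maximizing the likelihood. The learning rate, epoch count, and synthetic two-cluster data are assumptions made for illustration. They are not the WDBC setup from the cited study.

```python
import numpy as np


def sigmoid(z):
    return 1.0 / (1.0 + np.exp(-z))  # S-shaped map from any real number to (0, 1)


def standardize(X):
    """Scale each feature to zero mean and unit variance so gradient descent converges smoothly."""
    mu, sd = X.mean(axis=0), X.std(axis=0)
    return (X - mu) / np.where(sd > 0, sd, 1.0), mu, sd


def fit_logistic(X, y, lr=0.1, epochs=2000):
    """Maximum-likelihood fit via gradient descent on the mean log-loss."""
    n, d = X.shape
    w, b = np.zeros(d), 0.0
    for _ in range(epochs):
        p = sigmoid(X @ w + b)       # predicted probability of malignancy
        w -= lr * X.T @ (p - y) / n  # gradient of the mean log-loss w.r.t. w
        b -= lr * np.mean(p - y)     # ... and w.r.t. the bias
    return w, b


def predict(X, w, b, threshold=0.5):
    return (sigmoid(X @ w + b) >= threshold).astype(int)
```

In practice, scikit-learn provides the WDBC data as `sklearn.datasets.load_breast_cancer` and recursive feature elimination as `sklearn.feature_selection.RFE`, which can wrap a logistic-regression estimator.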

Support Vector Machines (SVMs) represent each image as a vector of extracted features and find the hyperplane that separates the classes with the largest margin. Kernel functions let SVMs learn nonlinear decision boundaries by implicitly mapping these features into a higher-dimensional space, enabling the identification of microcalcifications and other indicators of malignancy. Like Logistic Regression, SVMs are often trained on the WDBC dataset. They are very effective for high-dimensional data but scale poorly to large datasets.
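As a sketch of the maximum-margin idea, the code below trains a linear soft-margin SVM with the Pegasos stochastic sub-gradient method. It is swapped in here as a simple, self-contained solver and is not necessarily what the cited studies used. The regularization strength and epoch count are assumptions, and a kernel SVM would replace the dot products with a kernel function, which this linear sketch omits.

```python
import numpy as np


def fit_linear_svm(X, y, lam=0.01, epochs=50, seed=0):
    """Soft-margin linear SVM trained with Pegasos (stochastic sub-gradient descent).

    y must be in {-1, +1}. A constant feature is appended so the last weight acts as a bias.
    """
    rng = np.random.default_rng(seed)
    Xb = np.hstack([X, np.ones((len(X), 1))])
    w = np.zeros(Xb.shape[1])
    t = 0
    for _ in range(epochs):
        for i in rng.permutation(len(Xb)):
            t += 1
            eta = 1.0 / (lam * t)              # decreasing step size
            margin = y[i] * (Xb[i] @ w)
            w *= 1 - eta * lam                 # shrink: sub-gradient of the L2 regularizer
            if margin < 1:                     # hinge loss active: inside margin or misclassified
                w += eta * y[i] * Xb[i]
    return w


def svm_predict(X, w):
    Xb = np.hstack([X, np.ones((len(X), 1))])
    return np.where(Xb @ w >= 0, 1, -1)
```

The per-sample updates also hint at the scaling issue noted above. Exact kernel SVM solvers must reason about pairs of training samples, so their cost grows much faster than linearly with dataset size.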

Random Forest (RF) combines many decision trees with feature randomness to support detection. It typically uses 100 to 500 trees, each trained on a different bootstrap sample of the data, which together assess features such as concavity, area, and texture to give an in-depth analysis of the scanned tissue; the final prediction is a vote across the trees. RF handles nonlinear data well but can be slow because building and evaluating many trees is computationally intensive.
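The two sources of randomness in a random forest, bootstrap rows and random feature subsets, can be shown with a deliberately simplified forest whose trees are depth-1 "stumps". This is a teaching simplification: real random forests grow much deeper trees, and the tree count and feature-subset size here are assumptions. For real use, scikit-learn's `sklearn.ensemble.RandomForestClassifier` (with its `n_estimators` parameter) is the standard implementation.

```python
import numpy as np


def gini(y):
    """Gini impurity of a set of binary labels."""
    if len(y) == 0:
        return 0.0
    p = np.mean(y)
    return 2 * p * (1 - p)


def fit_stump(X, y, feature_ids):
    """Best single (feature, threshold) split by weighted Gini impurity."""
    best = (np.inf, None, None, None, None)
    for f in feature_ids:
        for thr in np.unique(X[:, f]):
            left = X[:, f] <= thr
            right = ~left
            score = left.mean() * gini(y[left]) + right.mean() * gini(y[right])
            if score < best[0]:
                left_label = int(round(y[left].mean())) if left.any() else 0
                right_label = int(round(y[right].mean())) if right.any() else 0
                best = (score, f, thr, left_label, right_label)
    return best[1:]  # (feature, threshold, label if <= threshold, label otherwise)


def fit_forest(X, y, n_trees=100, max_features=None, seed=0):
    rng = np.random.default_rng(seed)
    n, d = X.shape
    max_features = max_features or max(1, int(np.sqrt(d)))
    forest = []
    for _ in range(n_trees):
        rows = rng.integers(0, n, n)                             # bootstrap sample (with replacement)
        feats = rng.choice(d, size=max_features, replace=False)  # random feature subset
        forest.append(fit_stump(X[rows], y[rows], feats))
    return forest


def forest_predict(X, forest):
    """Majority vote across all stumps."""
    votes = np.zeros(len(X))
    for f, thr, left_label, right_label in forest:
        votes += np.where(X[:, f] <= thr, left_label, right_label)
    return (votes / len(forest) >= 0.5).astype(int)
```

Because each tree sees different rows and features, the individual trees make partly independent errors, and the vote averages many of them out.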

U-Net is an encoder-decoder CNN architecture for segmentation. The encoder captures image context, and the decoder, using skip connections from the encoder, recovers precise localization. Trained on DDSM (Digital Database for Screening Mammography) images, it segments areas such as tumors or microcalcifications, automatically extracting the relevant image features.

Graph Neural Networks (GNNs) model the relationships between features of an image by representing them as a graph. Nodes correspond to image regions or features (often extracted by a CNN), and edges encode the interactions between them. Key parameters include the number of graph filter layers, the aggregation function, and the learning rate. Features are selected through graph pruning. GNNs are well suited to structured data but are less effective on raw images.
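To illustrate what an "aggregator" does, here is a hedged NumPy sketch of one message-passing layer with mean aggregation, in the style of GraphSAGE, followed by a mean-pooling readout. The node features, adjacency matrix, and identity weights in the usage lines are hypothetical. In practice the nodes might be CNN descriptors of image regions, and the weights would be learned.

```python
import numpy as np


def gnn_layer(H, A, W_self, W_neigh):
    """One message-passing layer with mean aggregation.

    H: (num_nodes, in_dim) node features; A: (num_nodes, num_nodes) 0/1 adjacency matrix.
    """
    deg = A.sum(axis=1, keepdims=True)
    neigh_mean = (A @ H) / np.maximum(deg, 1)                # aggregator: average of neighbour features
    return np.maximum(0, H @ W_self + neigh_mean @ W_neigh)  # combine with own features, then ReLU


def graph_readout(H):
    """Mean-pool node embeddings into one graph-level vector for classification."""
    return H.mean(axis=0)


# Hypothetical 3-node chain: region 0 - region 1 - region 2
H = np.array([[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]])
A = np.array([[0, 1, 0], [1, 0, 1], [0, 1, 0]], dtype=float)
print(gnn_layer(H, A, np.eye(2), np.eye(2)))
```

Stacking several such layers lets information travel several hops across the graph. Pruning edges, that is, zeroing entries of `A`, directly changes which features can influence each other.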

In a 2025 study by Ahmed et al., a two-phase classification pipeline processed ultrasound datasets. Phase 1 used ResNet-101 to distinguish normal from abnormal images, and Phase 2 applied InceptionV3 to further classify the abnormal images as benign or malignant. The model achieved 99.31% accuracy in Phase 1, 86% in Phase 2, and 88% for the integrated system43.
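The routing logic of such a two-phase cascade is simple to express. In the sketch below, `is_abnormal` and `is_malignant` are hypothetical stand-ins for the trained ResNet-101 and InceptionV3 classifiers. The dictionary "images" in the usage lines are toy placeholders, not real ultrasound data.

```python
from typing import Any, Callable, List, Sequence


def cascade_predict(
    images: Sequence[Any],
    is_abnormal: Callable[[Any], bool],
    is_malignant: Callable[[Any], bool],
) -> List[str]:
    """Two-phase pipeline: Phase 1 screens normal vs abnormal; only abnormal images reach Phase 2."""
    labels = []
    for img in images:
        if not is_abnormal(img):  # Phase 1
            labels.append("normal")
        elif is_malignant(img):   # Phase 2, run only on abnormal images
            labels.append("malignant")
        else:
            labels.append("benign")
    return labels


toy = [{"abn": False, "mal": False}, {"abn": True, "mal": True}, {"abn": True, "mal": False}]
print(cascade_predict(toy, lambda d: d["abn"], lambda d: d["mal"]))
```

The structure also suggests why the integrated accuracy differs from the per-phase figures. A normal image only needs Phase 1 to be right, but an abnormal image is labelled correctly only if both phases are right, so end-to-end accuracy reflects errors from both stages.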

Computer vision models have advanced significantly in several ways. First, researchers have deployed lighter models on Raspberry Pi devices for ultrasound, reducing model size by 60% and lowering energy use while processing data at the same rate. Second, models combine federated learning (FL) extensions such as FedProx with incremental learning to adapt to new clinical data, improving model accuracy43. Other advances include 3D CNNs, which analyze data from all angles to improve sensitivity and specificity. Diffusion models help address data discrepancies, and Grad-CAM highlights the image regions that drive a model's prediction, helping radiologists identify overlapping tissue and annotate subtle findings in the image.
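A minimal sketch of how FedProx differs from plain federated averaging is shown below. Each simulated hospital trains locally with an extra proximal penalty (mu/2)·||w − w_global||² that limits drift from the shared model, and the server then averages the returned weights by client dataset size. The linear least-squares model, the synthetic client data, and the hyperparameters are assumptions made for illustration. The incremental-learning component mentioned in the text is not shown.

```python
import numpy as np


def local_update(w_global, X, y, mu=0.01, lr=0.1, steps=100):
    """Client-side training of a linear least-squares model with FedProx's proximal term.

    Setting mu = 0 recovers plain FedAvg local training.
    """
    w = w_global.copy()
    for _ in range(steps):
        grad = X.T @ (X @ w - y) / len(y)  # gradient of the local mean squared error
        grad += mu * (w - w_global)        # gradient of (mu / 2) * ||w - w_global||^2
        w -= lr * grad
    return w


def federated_round(w_global, clients, mu=0.01):
    """One round: clients train on their own data; only weights are sent to the server."""
    updates = [local_update(w_global, X, y, mu) for X, y in clients]
    sizes = np.array([len(y) for _, y in clients], dtype=float)
    return np.average(updates, axis=0, weights=sizes)  # size-weighted average of client models


# Three simulated sites with different amounts of (synthetic) data
rng = np.random.default_rng(0)
w_true = np.array([2.0, -1.0])
clients = []
for n in (50, 80, 120):
    X = rng.normal(size=(n, 2))
    clients.append((X, X @ w_true + rng.normal(0, 0.01, n)))
w = np.zeros(2)
for _ in range(20):
    w = federated_round(w, clients)
```

The design point is that raw patient data never leaves a client, which is why FL is attractive under the privacy constraints discussed later in this review.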

Generative AI

Overview and Application of Generative AI

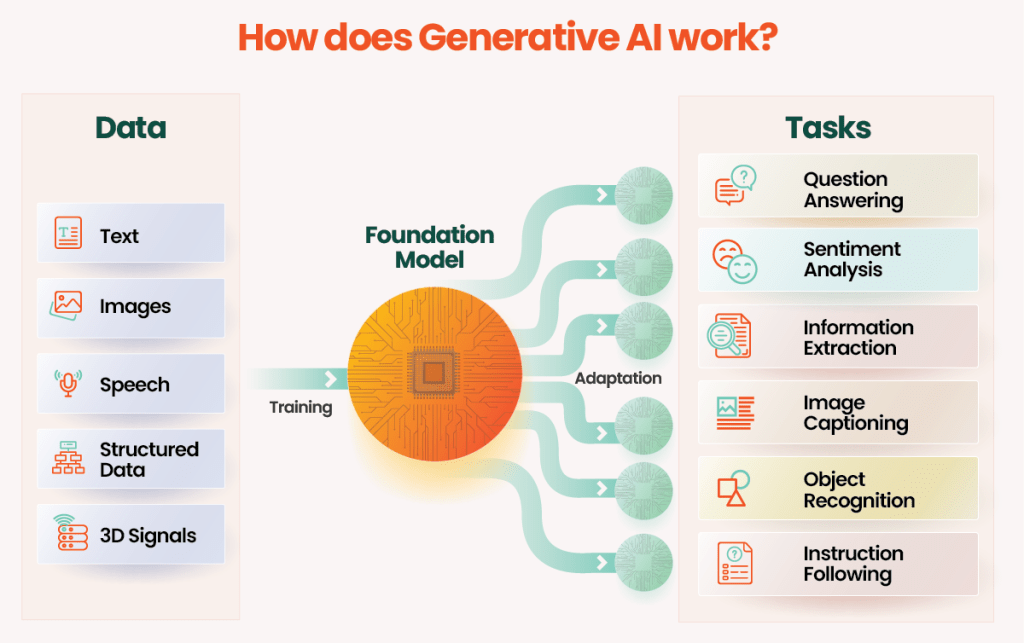

Generative AI is a class of AI focused on generating images, text, audio, and data. Traditional discriminative models classify inputs or predict outcomes. Generative AI instead uses deep learning to produce outputs that resemble its training data, ideally so closely that they are difficult to distinguish from real-world data. Generative AI works through data interpretation, training, model architecture, generation, evaluation, and refinement, as shown in Figure 8.

In breast cancer detection, generative AI is used to generate synthetic data and images. It requires large input datasets of scans from different modalities, such as MRI, mammography, ultrasound, and histopathology. Once training is complete, the model generates new samples that follow the distribution learned from the training set. Generation can be guided by inputs such as conditional variables and prompts. The outputs are then checked for issues such as data quality and diversity, and the model is adjusted based on those checks to reduce error scores and produce less biased data. Compared with other AI approaches, generative AI relies more heavily on mathematical and computational methods, since it generates most of its data itself, and it has high computational demands.

Figure 8: The diagram illustrates the functioning of Generative AI44.

Models of Generative AI and Key Studies

Several types of generative models are used in breast cancer imaging.

GANs, or Generative Adversarial Networks, are typically used to generate high-quality images resembling those from imaging modalities such as MRI, mammography, and X-ray. A generator network creates synthetic data, and a discriminator network evaluates its authenticity; the two networks are trained against each other. There are many GAN variants, such as StyleGAN2, which generates higher-resolution images. A 2023 study on StyleGAN2 published in Scientific Reports reported an area under the curve (AUC) of 0.70, an overall sensitivity of 78%, and a specificity of 52%45. A 2023 study by Müller-Franzes et al. also analyzed the ability of GANs to generate synthetic contrast-enhanced breast MRI images as an alternative to administering contrast agents46.
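The generator-versus-discriminator contest is driven by two loss functions. The sketch below computes the standard binary cross-entropy discriminator loss and the commonly used non-saturating generator loss from discriminator output probabilities. The networks themselves are omitted, and the probability values in the usage lines are toy numbers.

```python
import numpy as np

EPS = 1e-12  # avoids log(0) when the discriminator is fully confident


def discriminator_loss(d_real, d_fake):
    """Binary cross-entropy: the discriminator wants D(real) -> 1 and D(fake) -> 0."""
    d_real, d_fake = np.asarray(d_real, dtype=float), np.asarray(d_fake, dtype=float)
    return float(-np.mean(np.log(d_real + EPS)) - np.mean(np.log(1 - d_fake + EPS)))


def generator_loss(d_fake):
    """Non-saturating generator loss: the generator wants D(fake) -> 1."""
    return float(-np.mean(np.log(np.asarray(d_fake, dtype=float) + EPS)))


# At equilibrium the discriminator outputs 0.5 everywhere and cannot tell real from fake.
print(discriminator_loss([0.5], [0.5]), generator_loss([0.5]))
```

Training alternates between lowering the discriminator loss and lowering the generator loss. When this tug-of-war becomes unbalanced, training can oscillate, which is the instability listed as a GAN limitation in Table 4.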

TVAEs, or Tabular Variational Autoencoders, are variants of standard Variational Autoencoders (VAEs) designed specifically for generating tabular data such as tumor size, biomarker status, and tissue density. VAEs enhance cancer detection in mammograms through feature extraction and anomaly detection. They encode images into a latent space that captures complex patterns linked to cancer, enabling models to identify subtle indicators. VAEs also reconstruct images from learned patterns and flag new mammograms whose reconstructions deviate significantly as anomalies for further investigation.
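The VAE pieces mentioned above (latent encoding, reconstruction, and anomaly flagging) can be sketched without the networks themselves. The code below shows the reparameterization trick used to sample latent codes, the KL regularizer that keeps the latent space well organized, and a reconstruction-error anomaly flag. The encoder outputs, the reconstructions, and the threshold are hypothetical inputs. In a real system they would come from a trained encoder/decoder and from a threshold tuned on normal mammograms.

```python
import numpy as np


def reparameterize(mu, log_var, rng):
    """Sample z = mu + sigma * eps so that gradients can flow through mu and log_var."""
    return mu + np.exp(0.5 * log_var) * rng.standard_normal(np.shape(mu))


def kl_to_standard_normal(mu, log_var):
    """KL(q(z|x) || N(0, I)) for a diagonal Gaussian encoder output."""
    return float(-0.5 * np.sum(1 + log_var - mu ** 2 - np.exp(log_var)))


def anomaly_flags(images, reconstructions, threshold):
    """Per-image mean squared reconstruction error, and which images exceed the threshold."""
    errors = np.mean((images - reconstructions) ** 2, axis=tuple(range(1, images.ndim)))
    return errors, errors > threshold
```

The anomaly logic rests on an assumption. A VAE trained mostly on normal tissue reconstructs normal images well, so an unusually high reconstruction error marks an image as worth a closer look rather than as a diagnosis.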

DDPMs, or Denoising Diffusion Probabilistic Models, produce high-quality images through denoising. A forward process gradually adds Gaussian noise to training images over many steps, and a reverse process uses a neural network to remove the noise step by step, producing high-resolution data. Studies of diffusion models in MRI show that they achieve greater image quality than GANs, even though GANs are well regarded for their fidelity. Recent advances highlight the importance of diffusion models for image generation, especially for capturing early signs such as microcalcifications and density discrepancies. Diffusion models currently produce some of the highest-quality synthetic images47. However, they are computationally intensive, which makes real-time applications difficult. They are also harder to train than other AI models and have been less extensively tested in medical imaging, with limited studies available.
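The forward (noising) half of a DDPM has a convenient closed form: x_t = sqrt(ᾱ_t)·x_0 + sqrt(1 − ᾱ_t)·ε, where ᾱ_t is the cumulative product of (1 − β_t). The sketch below implements this with the linear β schedule from 1e-4 to 0.02 over 1,000 steps used in the original DDPM paper. The learned reverse (denoising) network is omitted, and the toy "image" in the usage line is an assumption.

```python
import numpy as np


def linear_beta_schedule(T=1000, beta_start=1e-4, beta_end=0.02):
    """Per-step noise variances beta_t for the forward process."""
    return np.linspace(beta_start, beta_end, T)


def q_sample(x0, t, alpha_bar, rng):
    """Jump directly to step t of the forward process.

    Returns the noisy image x_t and the noise eps that a denoising network
    would be trained to predict from (x_t, t).
    """
    eps = rng.standard_normal(x0.shape)
    xt = np.sqrt(alpha_bar[t]) * x0 + np.sqrt(1.0 - alpha_bar[t]) * eps
    return xt, eps


betas = linear_beta_schedule()
alpha_bar = np.cumprod(1.0 - betas)  # share of the original signal's variance remaining at each step

rng = np.random.default_rng(0)
x_noisy, target_noise = q_sample(np.ones((8, 8)), t=500, alpha_bar=alpha_bar, rng=rng)
```

By the final step, almost none of the original image remains. Generation must therefore run the learned reverse process back through all of these steps, which is one reason diffusion models are computationally expensive at inference time.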

GANs, VAEs, and DDPMs each have strengths and weaknesses, making them suitable for different aspects of breast cancer prediction.

Table 4: Comparison of different models of Generative AI

| Model | Best For | Advantages | Limitations |
|---|---|---|---|
| GANs | Image synthesis | High-quality image generation; data diversity | Requires significant computational power; can be unstable during training |
| VAEs | Feature extraction, anomaly detection | Effective in learning complex data distributions; interpretable latent space | Generates images with lower realism compared to GANs |
| DDPMs | Image synthesis | Best image quality; detects early microcalcifications | High computational load when generating images; can crash due to overload; hard to train and less tested in medical imaging |

A 2023 study by Müller-Franzes et al. compared DDPM with a GAN (Pix2PixHD) for reconstructing contrast-enhanced breast MRI images from reduced contrast doses (25%, 10%, and 5%), with the goal of reducing contrast agent use in screening. Two radiologists blindly evaluated reconstruction fidelity, lesion visibility, and false-positive findings. The radiologists preferred the GAN at the 5% dose and DDPM at the 25% dose. Overall, both techniques are promising for low-dose MRI that reduces contrast requirements, but they need further optimization to curb false positives48.

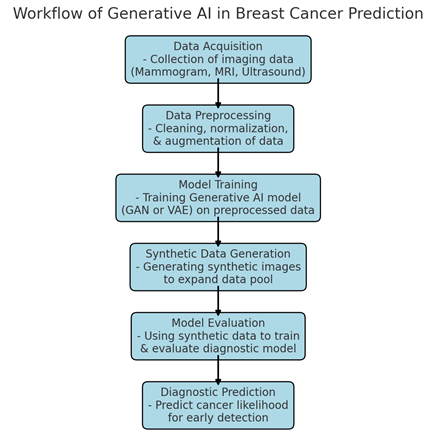

Figure 9: Workflow Diagram: Generative AI in Breast Cancer Prediction2.

Generative artificial intelligence, including GANs and diffusion models, is frequently applied to augment datasets or generate synthetic images for breast cancer detection, thereby boosting the effectiveness of conventional AI methods such as CNNs and SVMs. Direct comparisons reveal that these generative techniques generally elevate accuracy when combined with other models, although they underperform discriminative approaches like CNNs when used independently for classification.

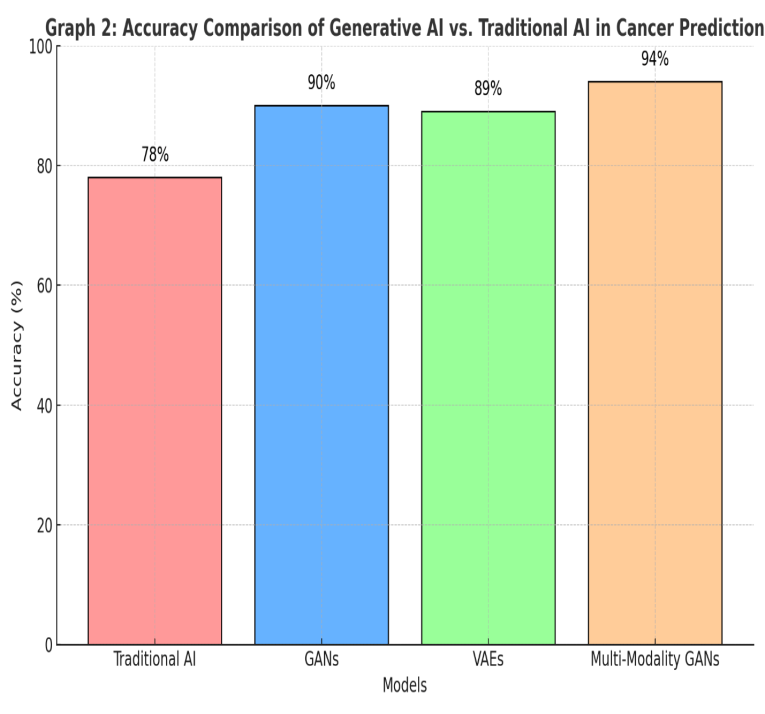

Generative AI systems have shown strong accuracy and sensitivity in predicting breast cancer, especially when using synthetic data for training. Research reveals that GAN-based approaches, trained on artificial images, attain an average sensitivity of 90% and specificity of 85%, outperforming conventional machine learning methods that generally stay under 80% on equivalent datasets2.

Figure 10: Accuracy Comparison of Generative AI vs. Traditional AI in Cancer Prediction2

Limitations of AI in Breast Cancer Imaging

Artificial intelligence (AI) is advancing rapidly in breast cancer imaging, streamlining routine tasks, patient care, and resource allocation. However, its progress is impeded by limitations in machine learning, restricted data access, inherent biases, and systemic inequities. Numerous research-driven algorithms power modern AI implementations, yet a primary concern is their potential for errors, which can endanger patients or trigger complications. For instance, false negatives in breast cancer screening might allow undetected tumors to progress. Additionally, as AI models move from training to autonomous operation, they risk operating beyond effective human oversight and may behave in ways that ignore directives and yield unpredictable consequences. A major obstacle to AI’s precision is insufficient data. Robust, large datasets from credible sources are essential for training, but inadequate data infrastructure and stringent privacy regulations often restrict access in medical contexts49. AI bias is a distortion in algorithmic outputs that stems from skewed training datasets or flawed design assumptions. Countering it requires proactive vigilance: risks must be identified during data collection, annotation, and deployment.

Discussion

This review analyzed the evolution of breast cancer imaging technologies, from MRI, mammography, and X-rays to AI models such as CNNs, generative AI, and Vision Transformers. It also examined how these new AI models can improve aspects of scanning such as sensitivity, accuracy, and radiation exposure.

Traditional breast cancer imaging modalities remain highly effective tools for early diagnosis but have significant limitations. Integrating AI into these modalities has improved their accuracy and efficiency. CNN models such as WUAVE and DeepHealth have an edge over traditional imaging techniques when analyzing large datasets or detecting small patterns such as microcalcifications. Vision Transformers (ViTs) use hybrid architectures and self-attention mechanisms to improve segmentation and analysis in noisy environments. Computer vision techniques such as Logistic Regression and Random Forest use tools like SHAP to help detect abnormalities and subtle signs of cancer, including microcalcifications. Generative AI creates synthetic data based on real studies, helping to address problems such as data scarcity through models such as TVAEs, which produce tabular data, and GANs, which generate image-based data.

Although AI technology has advanced significantly, more work is needed. Many AI models are computationally intensive, which can make some processes much slower. Legal and ethical considerations are also critical, requiring robust regulations, ethical frameworks, and transparent AI models. Addressing issues such as data bias, health equity, data control, and patient privacy is important for fostering patient trust through open communication.

Conclusion

In conclusion, AI and machine learning play a pivotal role in transforming breast cancer imaging by improving accuracy, sensitivity, efficiency, and access. These tools not only excel at processing datasets for segmentation, classification, and early detection but also support less invasive screening. They enhance modalities such as mammography, CT, and MRI through precise analysis and by aiding radiologists in image interpretation. That said, ongoing studies are essential to address challenges such as data quality, transparency, and trust, which depend on legal collaboration and on commitments to transparency, accountability, and inclusivity. It is also important to promote data sharing and the use of explainable AI (XAI). In addition, prospective clinical trials, reduction of data bias through detailed demographic data, and targeted education for healthcare providers and patients will support long-term AI integration. Tackling these problems will improve AI’s diagnostic and screening impact while mitigating risks in breast imaging.

Acknowledgements

I would like to thank my mentor, Morteza Samardi from MIT, for his invaluable guidance in helping me strategize this scientific review paper. I am very thankful to him for providing details and in-depth knowledge on the topic. His expertise and support were pivotal in this project. I would also like to thank my family for their encouragement and continued support, which helped me complete this project.

References

- World Health Organization. (2025). Breast cancer

- Mohapatra AIML, A. (2024). Generative AI to Predict Breast Cancer: Current Approaches, Advancements, and Challenges. International Journal of Medical Science and Clinical Invention, 11(11), 7441–7456

- The American Cancer Society medical and editorial content team. (2025). Breast cancer statistics: How common is breast cancer? American Cancer Society

- Giaquinto, A. N., Sung, H., Newman, L. A., Freedman, R. A., Smith, R. A., Star, J., Jemal, A., & Siegel, R. L. (2024). Breast cancer statistics, 2024. CA: A Cancer Journal for Clinicians, 74(6), 477-495

- Shockney, L. D. (2025). Breast cancer facts & stats 2025 – incidence, age, survival, & more. National Breast Cancer Foundation

- Abdul Halim, A. A., Andrew, A. M., Mohd Yasin, M. N., Abd Rahman, M. A., Jusoh, M., Veeraperumal, V., Rahim, H. A., Illahi, U., Abdul Karim, M. K., & Scavino, E. (2021). Existing and emerging breast cancer detection technologies and its challenges: A review. Applied Sciences, 11(22), 10753

- Han, J., Gao, Y., Huo, L., Wang, D., Xie, X., Zhang, R., Guo, L., Zhou, T., Shi, Y., Zhou, J., Duan, S., Shan, F., Jia, S., Liu, X., Xu, W., Chen, Q., Liang, L., Zhai, H., & Wang, Y. (2025). Whole-lesion-aware network based on freehand ultrasound video for breast cancer assessment: A prospective multicenter study. Cancer Imaging, 25(1), 11

- Media, L. (2024). Lunit AI predicts breast cancer risk up to 6 years in advance. Lunit

- Gjesvik, J., Moshina, N., Lee, C. I., Miglioretti, D. L., & Hofvind, S. (2024). Artificial Intelligence Algorithm for Subclinical Breast Cancer Detection. JAMA Network Open, 7(10), e2437402–e2437402

- Stefani, N. (2025). AI is transforming cancer detection – what’s next? DeepHealth

- Kim, J. G., Haslam, B., Diab, A. R., Sakhare, A., Griost, G., Lee, H., Kolli, S., Le, S. E., Mueller, J., Choudhery, S., Freer, P., Pahwa, A., Grimm, L., & Sorensen, A. G. (2024). Impact of a categorical AI system for digital breast tomosynthesis on breast cancer interpretation by both general radiologists and breast imaging specialists. Radiology: Artificial Intelligence, 6(4), e230137

- Fornell, D. (2022). Photo gallery: What does breast cancer look like on mammography. Radiology Business

- Friedewald, S. M., Rafferty, E. A., Rose, S. L., Durand, M. A., Plecha, D. M., Greenberg, J. S., Hayes, M. K., Copit, D. S., Carlson, K. L., Cink, T. M., Barke, L. D., Greer, L. N., Miller, D. P., & Conant, E. F. (2014). Breast cancer screening using tomosynthesis in combination with digital mammography. JAMA, 311(24), 2499-2507

- Pisano, E. D., Gatsonis, C., Hendricks, E., Yaffe, M., Baum, J. K., Acharyya, S., Conant, E. F., Fajardo, L. L., Bassett, L., D’Orsi, C., Jong, R., & Rebner, M. (2005). Diagnostic performance of digital versus film mammography for breast-cancer screening. New England Journal of Medicine, 353(17), 1773-1783

- Berg, W. A., Vargo, A., Lu, A. H., Berg, J. M., Bandos, A. I., Hartman, J. Y., Nair, H., Holt, S. E., & Sumkin, J. H. (2025). Screening for breast cancer with contrast-enhanced mammography as an alternative to MRI: SCEMAM trial results. Radiology, 242634

- The WISDOM Study. (2025). The WISDOM study – join the movement

- Elmore, J. G., Barton, M. B., Moceri, V. M., Polk, S., Arena, P. J., & Fletcher, S. W. (1998). Ten-year risk of false positive screening mammograms and clinical breast examinations. New England Journal of Medicine, 338(16), 1089-1096

- Aguilar, J. R. (2022). Higher rate of breast cancer detected with MRI. Tunisie Soir

- Mann, R. M., Cho, N., & Moy, L. (2019). Breast MRI: State of the art. Radiology, 292(3), 520-536

- Bolan, P. J., Kim, E., Herman, B. A., Newstead, G. M., Rosen, M. A., Schnall, M. D., Pisano, E. D., Weatherall, P. T., Morris, E. A., Lehman, C. D., Grp, A. T., & Hylton, N. M. (2017). Magnetic resonance spectroscopy of breast cancer for assessing early treatment response: Results from the ACRIN 6657 MRS trial. Journal of Magnetic Resonance Imaging, 46(1), 290-302

- Rahman, R. W. A., Al-Dhurani, S. Y. A., Radwan, A. H., Mohamed, A. A., & Kamal, E. F. (2022). Multi-detector CT chest: Can it omit the further need for contrast enhanced spectral mammography in breast cancer patients candidate for CT staging? Egyptian Journal of Radiology and Nuclear Medicine, 53(1), 152

- Desperito, E., Schwartz, L., Capaccione, K. M., Collins, B. T., Jamabawalikar, S., Peng, B., Salvatore, M., & Salvatore, M. M. (2022). Chest CT for breast cancer diagnosis. Life, 12(11), 1699

- Gong, W., Zhu, J., Hong, C., Liu, X., Li, S., Chen, Y., Chen, Z., Lin, X., Liu, Z., Shao, G., & Li, X. (2023). Diagnostic accuracy of cone-beam breast computed tomography and head-to-head comparison of digital mammography, magnetic resonance imaging and cone-beam breast computed tomography for breast cancer: A systematic review and meta-analysis. Gland Surgery, 12(10), 1360-1374

- Hadebe, B., Harry, L., Ebrahim, T., Pillay, V., & Vorster, M. (2023). The role of PET/CT in breast cancer. Diagnostics, 13(4), 597

- Boone, J. M., Kwan, A. L. C., Yang, K., Burkett, G. W., Lindfors, K. K., & Nelson, T. R. (2006). Computed tomography for imaging the breast. Journal of Mammary Gland Biology and Neoplasia, 11(2), 103-111

- Hussain, S., Mubeen, I., Ullah, N., Shah, S. S. U. D., Khan, B. A., Zahoor, M., Ullah, R., Khan, F. A., & Sultan, M. A. (2022). Modern diagnostic imaging technique applications and risk factors in the medical field: A review. BioMed Research International, 2022, 5164970

- Chen, H. L., Zhou, J. Q., Chen, Q., & Deng, Y. C. (2021). Comparison of the sensitivity of mammography, ultrasound, magnetic resonance imaging and combinations of these imaging modalities for the detection of small (≤2 cm) breast cancer. Medicine, 100(26), e26531

- Tang, S., Jing, C., Jiang, Y., Yang, K., Huang, Z., Wu, H., Yu, S., Shi, G., & Dong, F. (2023). The effect of image resolution on convolutional neural networks in breast ultrasound. Heliyon, 9(8), e19253

- Alzubaidi, L., Zhang, J., Humaidi, A. J., Al-Dujaili, A., Duan, Y., Al-Shamma, O., Santamaría, J., Fadhel, M. A., Al-Amidie, M., & Farhan, L. (2021). Review of deep learning: Concepts, CNN architectures, challenges, applications, future directions. Journal of Big Data, 8(1), 53

- Xiao, T., Liu, L., Li, K., Qin, W., Yu, S., & Li, Z. (2018). Comparison of transferred deep neural networks in ultrasonic breast masses discrimination. BioMed Research International, 2018, 4605191

- Tarighati Sereshkeh, E., Keivan, H., Shirbandi, K., Khaleghi, F., & Bagheri Asl, M. M. (2024). A convolution neural network for rapid and accurate staging of breast cancer based on mammography. Informatics in Medicine Unlocked, 47, 101497

- Yuan, B., Hu, Y., Liang, Y., Zhu, Y., Zhang, L., Cai, S., Peng, R., Wang, X., Yang, Z., & Hu, J. (2025). Comparative analysis of convolutional neural networks and transformer architectures for breast cancer histopathological image classification. Frontiers in Medicine, 12, 1606336

- Chilumukuru, N. S., Priyadarshini, P., & Ezunkpe, Y. (2025). Deep learning for the early detection of invasive ductal carcinoma in histopathological images: Convolutional neural network approach with transfer learning. JMIR Formative Research, 9, e62996

- Yuan, B., Hu, Y., Liang, Y., Zhu, Y., Zhang, L., Cai, S., Peng, R., Wang, X., Yang, Z., & Hu, J. (2025). Comparative analysis of convolutional neural networks and transformer architectures for breast cancer histopathological image classification. Frontiers in Medicine, 12, 1606336

- Vision Transformer (ViT) Architecture – GeeksforGeeks. (2025, July 23)

- Zhu, Z., Sun, Y., & Honarvar Shakibaei Asli, B. (2024). Early Breast Cancer Detection Using Artificial Intelligence Techniques Based on Advanced Image Processing Tools. Electronics, 13(17), 3575

- Alwateer, M., Bamaqa, A., Farsi, M., Aljohani, M., Shehata, M., & Elhosseini, M. A. (2025). Transformative Approaches in Breast Cancer Detection: Integrating Transformers into Computer-Aided Diagnosis for Histopathological Classification. Bioengineering, 12(3), 212

- Alwateer, M., Bamaqa, A., Farsi, M., Aljohani, M., Shehata, M., & Elhosseini, M. A. (2025). Transformative Approaches in Breast Cancer Detection: Integrating Transformers into Computer-Aided Diagnosis for Histopathological Classification. Bioengineering, 12(3), 212

- Zhu, Z., Sun, Y., & Honarvar Shakibaei Asli, B. (2024). Early Breast Cancer Detection Using Artificial Intelligence Techniques Based on Advanced Image Processing Tools. Electronics, 13(17), 3575

- Abimouloud, M. L., Bensid, K., Elleuch, M., Ammar, M. ben, & Kherallah, M. (2025). Advancing breast cancer diagnosis: token vision transformers for faster and accurate classification of histopathology images. Visual Computing for Industry, Biomedicine, and Art, 8(1), 1–27

- Ribli, D., Horváth, A., Unger, Z., Pollner, P., & Csabai, I. (2018). Detecting and classifying lesions in mammograms with Deep Learning. Scientific Reports, 8(1), 1–7

- Monirujjaman Khan, M., Islam, S., Sarkar, S., Ayaz, F. I., Ananda, M. K., Tazin, T., Albraikan, A. A., & Almalki, F. A. (2022). Machine Learning Based Comparative Analysis for Breast Cancer Prediction. Journal of Healthcare Engineering, 2022, 4365855

- Mahmoud, A., Afifi, D., Salem, M. A.-M. M., & Taha, E. (2025). Embedded system for ultrasound breast cancer diagnosis. ResearchGate

- Raju, H. (2024, November 18). Unleashing Creativity with Generative AI: A Step-by-Step Guide to Building Your Own AI Project

- Park, S., Lee, K. H., Ko, B., & Kim, N. (2023). Unsupervised anomaly detection with generative adversarial networks in mammography. Scientific Reports, 13(1), 1–10

- Müller-Franzes, G., Huck, L., Bode, M., Nebelung, S., Kuhl, C., Truhn, D., & Lemainque, T. (2024). Diffusion probabilistic versus generative adversarial models to reduce contrast agent dose in breast MRI. European Radiology Experimental, 8(1)

- Guerreiro, J., Tomás, P., Garcia, N., & Aidos, H. (2023). Super-resolution of magnetic resonance images using Generative Adversarial Networks. Computerized Medical Imaging and Graphics, 108

- Müller-Franzes, G., Huck, L., Arasteh, S. T., Khader, F., Han, T., Schulz, V., Dethlefsen, E., Kather, J. N., Nebelung, S., Nolte, T., Kuhl, C., & Truhn, D. (2023). Using Machine Learning to Reduce the Need for Contrast Agents in Breast MRI through Synthetic Images. Radiology, 307(3)

- Goh, S., Goh, R. S. J., Chong, B., Ng, Q. X., Koh, G. C. H., Ngiam, K. Y., & Hartman, M. (2025). Challenges in Implementing Artificial Intelligence in Breast Cancer Screening Programs: Systematic Review and Framework for Safe Adoption. Journal of Medical Internet Research, 27, e62941